GitHub sold Copilot as the future of software development. Millions of developers bought in.

Now, quietly, the engineers who matter most are turning it off.

I've spent the last three months tracking a slow-motion reversal happening inside engineering teams at mid-size SaaS companies, FAANG satellites, and defense contractors. Senior engineers — the 10x developers every team fights to hire — are disabling Copilot. Not because it doesn't work. Because they've realized it works against them in ways that compound over time.

Here's the full picture the productivity benchmarks aren't showing.

The Numbers That Launched a Thousand Licenses

The original pitch was almost impossible to argue with. GitHub's own research from 2022 showed developers completing tasks 55% faster with Copilot enabled. Adoption exploded. By 2024, over 1.8 million developers and 50,000 organizations had active Copilot licenses. Microsoft's enterprise sales team treated it as a flagship product. CFOs signed off because the math looked obvious: if a $200/year license made engineers 55% faster, the license paid for itself almost immediately.

But that number buried a critical caveat: the study measured task completion speed, not code quality, security, or long-term maintainability. A developer could complete a feature in 40% less time and spend the next two weeks debugging the subtle failure modes Copilot's suggestions quietly introduced.

That distinction — speed versus durability — is the fault line where senior engineers and junior engineers are now splitting apart.

Why Senior Engineers Are Different (And Why It Matters)

Before getting into the mechanisms, it's worth understanding why senior engineers specifically are the ones making this call.

Junior and mid-level developers use Copilot primarily as an autocomplete upgrade. They're filling in boilerplate, generating test scaffolding, translating pseudocode into syntax. For those use cases, Copilot is largely additive. The ceiling on how badly it can hurt them is relatively low.

Senior engineers operate at a different layer. They're making architectural decisions, reviewing PRs that set patterns for the whole codebase, mentoring others, and writing the high-stakes logic that everything else builds on. For them, Copilot's failure modes land differently — and the costs are systemic, not local.

The consensus: AI coding tools are productivity multipliers at every experience level.

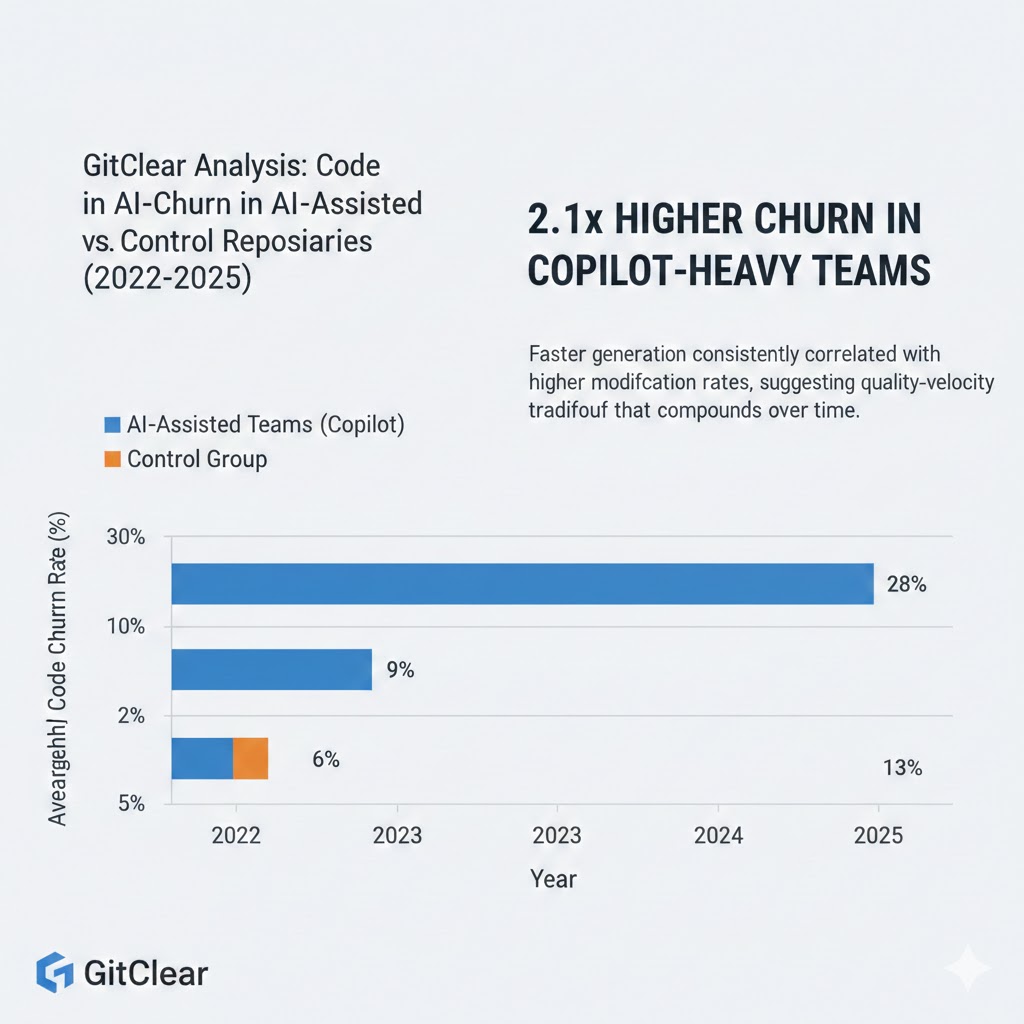

The data: GitClear's 2024 analysis of 211 million lines of production code found that AI-assisted codebases showed a 2x increase in "churn" — code that gets written and then modified or deleted within two weeks — compared to pre-AI baselines. Code that moves faster through the IDE isn't necessarily code that survives contact with production.

Why it matters: Senior engineers are the last line of defense between fast-generated code and systemic technical debt. When they're using Copilot too, that defense is gone.

The Three Mechanisms Driving the Reversal

Mechanism 1: The Confidence Calibration Collapse

What's happening:

Copilot generates code that looks correct — clean syntax, reasonable variable names, plausible logic. The problem is that it has no model of your specific codebase, your team's implicit conventions, or the production context the code will run in. It pattern-matches from training data. The result is code that passes a casual visual inspection but fails in ways that take hours to diagnose.

The math:

Senior engineer reviews PR with 200 lines of Copilot-generated code

→ Code looks syntactically sound, follows obvious patterns

→ 4/5 subtle bugs are invisible without deep context

→ 1 bug surfaces in production 3 weeks later

→ Root cause investigation: 8-12 engineering hours

→ Multiply by 15 PRs/month: 120-180 engineering hours in the worst case, where every PR ships one such bug

The individual failure rate is low. The aggregate cost is enormous.
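The chain above can be turned into rough arithmetic. What follows is a minimal sketch, not a measurement: the 15 PRs/month and the 8-12 hour investigation range come from the figures above, while `bugs_per_pr` is a hypothetical parameter introduced here so the failure rate can be varied.

```python
def monthly_investigation_hours(prs_per_month, bugs_per_pr, hours_low, hours_high):
    """Return (low, high) engineering hours per month spent on root-cause work.

    bugs_per_pr is an assumed average number of bugs per PR that escape
    review and surface in production; it is not a figure from the article.
    """
    escaped_bugs = prs_per_month * bugs_per_pr
    return escaped_bugs * hours_low, escaped_bugs * hours_high


# Worst case: every one of the 15 monthly PRs ships one bug that escapes review.
worst_case = monthly_investigation_hours(15, 1.0, 8, 12)

# Hypothetical milder case: one escaped bug for every ten PRs.
mild_case = monthly_investigation_hours(15, 0.1, 8, 12)

print("worst case hours/month:", worst_case)
print("mild case hours/month:", mild_case)
```

Even in the milder case the hours recur every month, which is the sense in which a low individual failure rate still adds up.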

Real example:

A payments infrastructure team at a mid-size fintech disabled Copilot org-wide after their lead engineer traced a recurring race condition to a Copilot-suggested async pattern that worked in test environments but failed under production load. The suggestion was syntactically valid. It matched documented patterns. It just didn't account for the team's specific database connection pooling behavior. Three engineers spent eleven days on it. The investigation cost more than twelve months of Copilot licenses.

What senior engineers know: Experience is largely the ability to hold a mental model of the full system while writing a single function. Copilot has no mental model. It has pattern frequency. Those are not the same thing.

The hidden cost of AI-assisted code review: syntactically valid suggestions that fail in context account for an estimated 40% of the new category of "AI-induced technical debt." Data: GitClear, 2024-2025


Mechanism 2: The Security Surface Expansion

What's happening:

Copilot was trained on public GitHub repositories. Public repositories contain a substantial amount of vulnerable code — SQL injection patterns, hardcoded credentials, insecure deserialization, deprecated cryptographic implementations. The model learned from all of it.

A 2023 Stanford study found that developers using AI code assistants were more likely to introduce security vulnerabilities than those coding without them — and, critically, were more confident that their code was secure. The combination of increased vulnerability rate plus decreased skepticism is exactly the threat model security teams have nightmares about.
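To make one of those vulnerability classes concrete, here is a minimal sketch of the SQL injection pattern next to its parameterized fix, using Python's standard `sqlite3` module. The `users` table, the function names, and the payload are all hypothetical; this illustrates the pattern and is not a reproduction of any actual Copilot suggestion.

```python
import sqlite3


def find_user_unsafe(conn, name):
    # Vulnerable: user input is interpolated directly into the SQL string.
    query = f"SELECT id, name FROM users WHERE name = '{name}'"
    return conn.execute(query).fetchall()


def find_user_safe(conn, name):
    # Safer: the driver binds the value, so it is never parsed as SQL.
    return conn.execute(
        "SELECT id, name FROM users WHERE name = ?", (name,)
    ).fetchall()


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, name TEXT)")
conn.executemany("INSERT INTO users (name) VALUES (?)", [("alice",), ("bob",)])

# Hypothetical attacker input that rewrites the WHERE clause.
payload = "nobody' OR '1'='1"

leaked_rows = find_user_unsafe(conn, payload)  # matches every row
safe_rows = find_user_safe(conn, payload)      # matches nothing
print(len(leaked_rows), len(safe_rows))
```

Both functions look equally plausible in a quick review, which is why the confidence finding in the Stanford study matters.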

The math:

AI assistant suggests cryptographic implementation

→ Developer recognizes pattern as "correct-looking"

→ Implementation uses deprecated approach that was common in 2019 training data

→ Security audit 6 months later finds CVE-level exposure

→ Emergency patching across 3 environments: $40,000-$180,000 estimated remediation

Real example:

NIST's 2025 Cybersecurity Framework update included a new advisory section specifically addressing AI-generated code as an emerging vulnerability vector. The advisory noted that automated code generation tools trained on historical public codebases systematically reproduce vulnerability patterns that have since been patched in the wild — creating fresh exposure in new codebases.

For senior engineers at companies handling sensitive data, this isn't theoretical. One security-critical misconfiguration is a career-defining event.

Mechanism 3: The Skill Erosion Spiral

What's happening:

This is the mechanism that senior engineers talk about last — and feel most acutely.

Software engineering mastery isn't a fixed asset. It's a practice. The ability to hold complex abstractions, reason through edge cases, write clean logic from first principles — these degrade without regular exercise. Senior engineers who use Copilot heavily for 12-18 months report a consistent pattern: they become faster, and they become shallower. The tool solves the problem before the mental muscle can engage.

The math:

Year 1 with Copilot: 40% faster, output quality neutral

Year 2: 50% faster, starting to feel "rusty" on novel problems

Year 3: Cannot reliably solve class of problems that previously took 2 hours without multi-hour AI-assisted sessions

→ Senior engineer is now dependent on tool they don't control

→ Copilot pricing increases 3x (it did, in 2025)

→ Negotiating leverage with employer has quietly shifted

Real example:

A principal engineer at a logistics company described it this way in a thread that quietly went viral on internal Slack networks before surfacing publicly: "I used to be able to walk into any unfamiliar codebase and hold the whole thing in my head within three hours. After eighteen months of heavy Copilot use, I noticed I couldn't do that anymore. I was reaching for the tool before I reached for my own brain. That scared me more than any bug it ever introduced."

The tool optimizes for velocity. Velocity is the wrong optimization target for engineers who are paid to think through novel problems.

What the Market Is Missing

Wall Street sees: GitHub Copilot revenue growing 40%+ year-over-year, enterprise adoption accelerating, Microsoft bundling it into premium M365 tiers.

Wall Street thinks: Developer productivity tools are a secular growth category with sticky adoption and expanding TAM.

What the data actually shows: Adoption at the individual contributor level is increasingly bifurcating. Junior developers are doubling down. Senior engineers — whose productivity is 5-10x more economically valuable — are quietly reversing. The aggregate license count goes up as organizations onboard new junior developers. The value extracted per license is declining.

The reflexive trap:

GitHub and Microsoft have a structural incentive to measure inputs (licenses, usage hours, completions accepted) rather than outputs (production stability, technical debt accumulation, long-term code quality). The metrics they publish are the metrics that show growth. The metrics that would show degradation — post-deployment defect rates, tech debt velocity, security incident correlation — require longitudinal studies that take years to complete and nobody's funding.

Historical parallel:

The closest comparable shift was the late-2000s enterprise software backlash, when organizations that had deployed sprawling ERP systems discovered that the efficiency gains on paper had created deep operational brittleness and vendor lock-in that took years to unwind. The productivity numbers were real. The second-order costs were larger. This time, the cycle is compressed to 18-24 months because software teams iterate far faster than ERP-era supply chain operations did.

The Data Nobody's Talking About

GitClear's longitudinal analysis of AI-assisted development is the most rigorous public dataset on this question, and its findings are striking:

Finding 1: Code churn doubled in AI-assisted repositories

Repositories with high Copilot adoption showed churn rates — code written and significantly modified or deleted within two weeks — that were 2.1x higher than control repositories. Faster code generation produced faster code abandonment.
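The churn definition used here (code modified or deleted within two weeks of being written) is simple enough to sketch. The following is a simplified illustration under assumed inputs, not GitClear's actual methodology: each line of code is represented as a hypothetical `(added_at, changed_at)` pair, with `changed_at` set to `None` if the line was never touched again.

```python
from datetime import datetime, timedelta

CHURN_WINDOW = timedelta(days=14)  # "within two weeks", per the definition above


def churn_rate(lines):
    """Fraction of lines modified or deleted within CHURN_WINDOW of being added.

    lines: iterable of (added_at, changed_at) datetime pairs; changed_at is
    None when the line has not been modified or deleted.
    """
    total = 0
    churned = 0
    for added_at, changed_at in lines:
        total += 1
        if changed_at is not None and changed_at - added_at <= CHURN_WINDOW:
            churned += 1
    return churned / total if total else 0.0


start = datetime(2025, 1, 1)
# Hypothetical history for four lines of code.
sample_lines = [
    (start, start + timedelta(days=3)),   # rewritten after 3 days: churn
    (start, start + timedelta(days=30)),  # changed after a month: not churn
    (start, None),                        # never touched again
    (start, None),
]

sample_rate = churn_rate(sample_lines)
print(sample_rate)
```

Tracking this number per repository over time is one way a team could check whether the pattern described here shows up in its own history.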

Finding 2: Copy-paste code (DRY violations) increased 3x

AI assistants generate functionally similar code in multiple places rather than abstracting shared logic. The result is codebases that are harder to maintain, harder to test, and harder to reason about at scale.
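A crude way to spot the most literal form of this duplication is to fingerprint function bodies and group the matches. The sketch below uses Python's standard `ast` module and only catches functions whose parameters and bodies are structurally identical; near-duplicates with renamed variables would slip through. The sample source and its function names are hypothetical.

```python
import ast
from collections import defaultdict


def find_duplicate_functions(source):
    """Group function names whose parameters and bodies are structurally identical."""
    tree = ast.parse(source)
    groups = defaultdict(list)
    for node in ast.walk(tree):
        if isinstance(node, ast.FunctionDef):
            # ast.dump omits line numbers by default, so the fingerprint
            # depends on structure only, not on position or function name.
            fingerprint = ast.dump(node.args) + "".join(
                ast.dump(stmt) for stmt in node.body
            )
            groups[fingerprint].append(node.name)
    return [sorted(names) for names in groups.values() if len(names) > 1]


# Hypothetical module with the same logic pasted under two names.
sample_source = '''
def order_total(items):
    return sum(i.price * i.qty for i in items)

def invoice_total(items):
    return sum(i.price * i.qty for i in items)

def item_count(items):
    return len(items)
'''

duplicates = find_duplicate_functions(sample_source)
print(duplicates)
```

Anything this check flags is a candidate for a shared helper; the harder cases still need a human who knows which similarities are accidental.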

Finding 3: New code additions accelerated faster than bug fixes

In AI-assisted repositories, net new code added outpaced bug resolution by a widening margin through 2024 and 2025. Teams were shipping faster and accumulating debt faster. The balance sheet wasn't improving.

Code churn in AI-assisted repositories vs. control group (2022-2025): Faster generation consistently correlated with higher modification rates, suggesting quality-velocity tradeoff that compounds over time. Data: GitClear, 2025


Three Scenarios For Engineering Teams in 2026-2027

Scenario 1: The Tiered Adoption Model

Probability: 45%

Organizations develop explicit policies distinguishing AI tool usage by role and context. Junior engineers and high-volume boilerplate work use AI assistants heavily. Senior engineers, security-critical components, and architectural work operate under restricted or no-AI policies.

Required catalysts: A few high-profile AI-assisted security incidents that make the news. Engineering leadership willing to override procurement's license-optimization incentives.

Timeline: Q3-Q4 2026 for early adopters; 2027 for mainstream.

Investable thesis: Companies building role-aware AI tooling governance infrastructure — audit trails, usage policies, compliance reporting — are positioned well.

Scenario 2: The Continued Bifurcation

Probability: 40%

Individual senior engineers continue opting out at the personal level while organizations maintain broad licenses for optics and junior developer productivity. A two-tier de facto policy emerges without formal acknowledgment.

Required catalysts: Status quo. This is already happening.

Timeline: Now through 2027.

Investable thesis: Watch for early signals in earnings commentary from companies with large engineering headcounts, such as "AI-assisted productivity" language that starts getting qualified.

Scenario 3: The Quality Reckoning

Probability: 15%

A critical infrastructure failure with traceable AI-code origins triggers regulatory attention or class action litigation. Organizations face liability pressure to audit AI-generated code in production systems. Rapid de-adoption across regulated industries.

Required catalysts: One high-profile incident (financial, medical, or critical infrastructure) with clear AI code provenance.

Timeline: 2027-2028 if it happens.

Investable thesis: Defensive positions in code security auditing, static analysis tooling, and AI-provenance tracking companies.

What This Means For You

If You're a Senior Engineer

The engineers who are disabling Copilot aren't being anti-AI contrarians. They're making a deliberate calculation about where their value comes from. Your market premium is your ability to reason through problems that don't have Stack Overflow answers yet. That ability atrophies if you don't use it.

Immediate actions (this quarter):

- Audit the last 90 days of your Copilot-accepted suggestions. How many introduced patterns you wouldn't have written yourself?

- Run one week without the tool on non-deadline work. Notice what changes in how you think.

- Build a personal heuristic: use AI for boilerplate you understand completely, not for logic in areas where you're still building intuition.

Medium-term positioning (6-18 months):

- The engineers who maintain deep, unassisted problem-solving capability while also knowing when to use AI effectively will command the highest premiums in the 2027 market.

- Specialize in AI-assisted code review — the skill of catching what Copilot gets wrong is increasingly valuable and not being trained at scale.

Defensive measures:

- Document your architectural decisions and reasoning in ways that prove independent thought. This is increasingly relevant for performance reviews and employment contracts.

- Don't let your team's Copilot adoption define your personal development trajectory.

If You're an Engineering Manager

The productivity metrics you're reporting to leadership may be measuring the wrong things. Completions per hour is not a proxy for production stability. Track defect rates by Copilot-usage cohort. You'll learn something.
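Tracking defect rates by cohort does not need heavy tooling to start. Here is a minimal sketch, assuming you can already label each merged change with a usage cohort and with whether it was later linked to a production defect. The cohort names and the sample data are hypothetical, and the code says nothing about how that labeling is done.

```python
from collections import defaultdict


def defect_rate_by_cohort(changes):
    """Return {cohort: share of changes later linked to a defect}.

    changes: iterable of (cohort, caused_defect) pairs, one per merged change.
    """
    totals = defaultdict(int)
    defects = defaultdict(int)
    for cohort, caused_defect in changes:
        totals[cohort] += 1
        if caused_defect:
            defects[cohort] += 1
    return {cohort: defects[cohort] / totals[cohort] for cohort in totals}


# Hypothetical labeled history: (cohort, was this change linked to a defect?)
sample_changes = [
    ("heavy_copilot", True),
    ("heavy_copilot", False),
    ("heavy_copilot", False),
    ("heavy_copilot", True),
    ("no_copilot", False),
    ("no_copilot", False),
    ("no_copilot", True),
    ("no_copilot", False),
]

rates = defect_rate_by_cohort(sample_changes)
print(rates)
```

With real data the cohorts will differ in seniority and in the kind of work they do, so treat any gap as a prompt for investigation rather than proof of cause.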

Sectors to watch:

- Overweight: Developer observability tooling, code quality analytics, security audit automation — all benefit from AI-generated code requiring more oversight.

- Underweight: Raw coding bootcamp output — the junior developer market is about to be saturated and their differentiation against AI is weaker than advertised.

- Watch carefully: AI-assisted development platforms that build quality feedback loops into the generation process — this is the product gap that exists today.

If You're a CTO

The decision your senior engineers are making individually should inform your tooling strategy formally. Consider:

- Role-differentiated licensing — not all engineers should use the same tools at the same permission level.

- Quality instrumentation — instrument your codebase to track AI-assisted code separately in production monitoring.

- Explicit security policy — AI-generated code in security-critical paths should require additional review by someone who wasn't using the tool.
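The security policy in the last bullet can be expressed as a simple merge gate. This is a sketch under stated assumptions: the path patterns are hypothetical, and the `ai_assisted` flag is assumed to come from whatever self-reporting or tooling your organization adopts, which is not specified here.

```python
from fnmatch import fnmatch

# Hypothetical patterns for paths your team treats as security-critical.
SECURITY_CRITICAL_GLOBS = ["auth/*", "payments/*", "crypto/*"]


def needs_independent_review(changed_paths, ai_assisted):
    """True when an AI-assisted change touches a security-critical path.

    ai_assisted is an assumed flag supplied by the author or by tooling.
    """
    if not ai_assisted:
        return False
    return any(
        fnmatch(path, pattern)
        for path in changed_paths
        for pattern in SECURITY_CRITICAL_GLOBS
    )


flagged = needs_independent_review(["payments/refunds.py", "docs/readme.md"], True)
not_flagged = needs_independent_review(["docs/readme.md"], True)
print(flagged, not_flagged)
```

A check like this only routes the change to a second reviewer; the value still comes from that reviewer reading the code without the tool's suggestion in front of them.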

Window of opportunity: Organizations that build rigorous AI code governance in 2026 will be ahead of the regulatory curve that's coming in 2027-2028.

The Question Everyone Should Be Asking

The real question isn't whether GitHub Copilot makes developers faster.

It's who bears the cost when faster turns out to mean less durable.

Because if AI-generated code is accumulating at current rates — and if the GitClear data on churn and DRY violations holds — by 2028, a significant portion of production codebases at AI-forward companies will have been substantially written by models trained on 2021 data, reviewed by developers optimized for speed rather than depth, and deployed by organizations measuring the wrong metrics.

A second historical parallel is the offshoring wave of the 2000s, where labor cost optimization created code quality and knowledge transfer problems that took a decade to resolve.

Are we prepared to do that again, faster, at software scale?

The senior engineers quietly disabling their Copilot licenses think they already know the answer.

The data says we'll find out by 2027.

What's your take? Are you seeing this reversal in your own team? The comment section is the most interesting data source I have on this — share what you're observing.

If this framing helped clarify something you've been seeing on the ground, share it. This conversation needs more practitioners in it.