Problem: Your Robotics Salary Is Stalling While AI-Fluent Peers Race Ahead

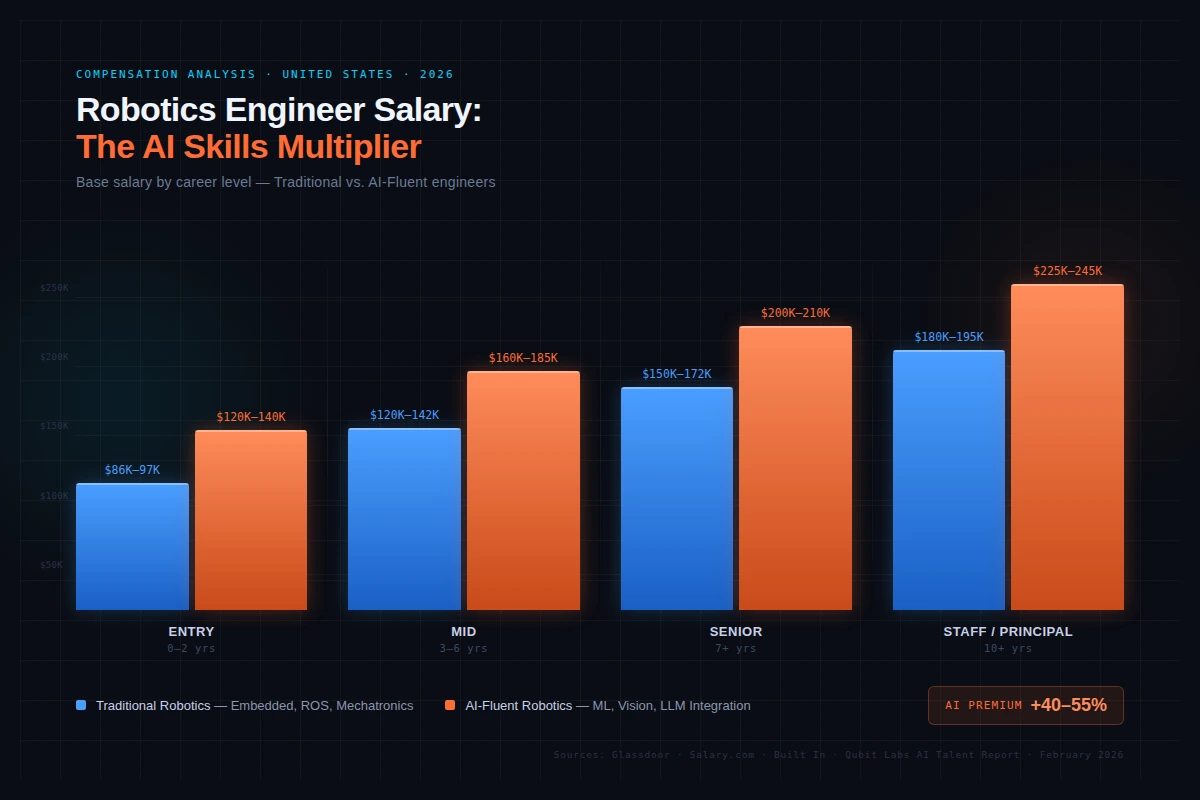

The average robotics engineer base salary in 2026 sits around $133K–$142K. Senior engineers who combine robotics with AI skills are pulling $200K–$210K. That's a 40–55% gap, and it's growing.

You'll learn:

- Where the salary floor and ceiling are in 2026

- Which AI skills move the needle most

- How to position yourself to capture the premium

Time: 10 min | Level: Intermediate

Why This Happens

Robotics used to be a mechanical and embedded systems discipline. Today's robots need to perceive, adapt, and decide in real time, and those skills come from machine learning, computer vision, and foundation models. Employers are paying steep premiums for engineers who bridge both worlds.

The numbers tell the story:

- Traditional robotics engineer average: ~$133K–$142K (Salary.com, Glassdoor, 2026)

- Senior AI-fluent robotics engineer: $200K–$210K (Qubit Labs AI Talent Report, 2026)

- Machine learning engineers at the senior level: up to $212,928 annually

- AI safety/alignment specialists: up 45% since 2023

The gap isn't seniority—it's skill stack.

Base salary distribution across robotics roles—AI-integrated positions cluster at the top end


The AI Skill Multipliers That Actually Move Salary

The Core Four

These four skill areas show the clearest correlation with top-band robotics compensation:

1. Computer Vision: Mid-level computer vision engineers average $169K. Bring this into a robotics role and you're working on perception pipelines, object detection, and real-time inference, some of the hardest problems in physical AI.

2. Machine Learning / Deep Learning: ML isn't optional anymore for advanced robotics. Senior ML engineers in robotics-adjacent roles hit $211K–$213K. ROS experience alone won't get you there.

3. LLM Integration: Large language model (LLM) engineers earn 25–40% more than general ML engineers. The emerging use case is natural-language task planning for robot arms, service bots, and autonomous vehicles.

4. MLOps at Scale: Getting a model running is one thing. Deploying it reliably on embedded hardware, with drift detection and retraining pipelines, earns a 20–35% premium over pure ML skills.
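Drift detection is the MLOps piece that is easiest to demonstrate in a portfolio. Below is a minimal, stdlib-only sketch, assuming you log one scalar per frame (for example, the detector's top-class confidence) and compare a recent window against a reference window using the Population Stability Index (PSI). The function names, the 10-bin histogram, and the 0.2 alert threshold are illustrative assumptions, not settings from any particular MLOps tool; a PSI of roughly 0.2–0.25 is a commonly quoted rule of thumb for a shift worth investigating.

```python
import math
from typing import Sequence


def psi(reference: Sequence[float], recent: Sequence[float], bins: int = 10) -> float:
    """Population Stability Index between two non-empty samples of a scalar signal.
    Bin edges are derived from the reference sample's range."""
    lo, hi = min(reference), max(reference)
    width = (hi - lo) / bins or 1.0  # guard against a constant reference signal

    def histogram(values: Sequence[float]) -> list[float]:
        counts = [0] * bins
        for v in values:
            # Values outside the reference range are clamped into the first/last bin
            idx = min(max(int((v - lo) / width), 0), bins - 1)
            counts[idx] += 1
        eps = 1e-6  # avoid log(0) for empty bins
        return [max(c / len(values), eps) for c in counts]

    ref_p, new_p = histogram(reference), histogram(recent)
    return sum((n - r) * math.log(n / r) for r, n in zip(ref_p, new_p))


def drift_detected(reference, recent, threshold: float = 0.2) -> bool:
    # Hypothetical alert rule: PSI above the threshold means "investigate / retrain"
    return psi(reference, recent) > threshold


if __name__ == "__main__":
    reference = [0.90 + 0.01 * (i % 10) for i in range(1000)]  # confidences 0.90-0.99
    recent = [0.60 + 0.01 * (i % 10) for i in range(1000)]     # model far less confident
    print(drift_detected(reference, reference))  # False
    print(drift_detected(reference, recent))     # True
```

In a deployed system, one reasonable response to a True result is to log the affected frames and queue a retraining job rather than swap models on the robot immediately.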

The Salary Breakdown by Level (2026)

| Level | Traditional Robotics | AI-Fluent Robotics |
|---|---|---|
| Entry (0–2 yrs) | $86K–$97K | $120K–$140K |
| Mid (3–6 yrs) | $120K–$142K | $160K–$185K |
| Senior (7+ yrs) | $150K–$172K | $200K–$210K+ |
| Staff / Principal | $180K–$195K | $225K+ (+ equity) |

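Base salary only. Top-tier employers (Boston Dynamics, Tesla, NASA JPL, Redwire, Near Earth Autonomy) pay at the high end, and the companies among them often stack equity on top, sometimes significantly. JPL, a Caltech-managed federal research center, does not offer stock equity.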
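To see how the premium changes with seniority, this quick sketch compares the midpoints of each range in the table. It treats open-ended figures ("$200K–$210K+", "$225K+") as their stated numbers, which is an assumption, so the senior and staff results are lower bounds on base pay and ignore equity entirely.

```python
# Base-salary ranges from the table, in $K; open-ended "+" values taken at face value
TABLE = {
    "Entry": ((86, 97), (120, 140)),
    "Mid": ((120, 142), (160, 185)),
    "Senior": ((150, 172), (200, 210)),
    "Staff / Principal": ((180, 195), (225, 225)),
}


def midpoint(rng: tuple[float, float]) -> float:
    return (rng[0] + rng[1]) / 2


def premium_pct(traditional: tuple[float, float], ai_fluent: tuple[float, float]) -> float:
    """Percentage by which the AI-fluent midpoint exceeds the traditional midpoint."""
    return (midpoint(ai_fluent) / midpoint(traditional) - 1) * 100


if __name__ == "__main__":
    for level, (trad, ai) in TABLE.items():
        print(f"{level:<18} {premium_pct(trad, ai):5.1f}%")
```

With these inputs it prints about 42.1% (entry), 31.7% (mid), 27.3% (senior), and 20.0% (staff/principal). Measured level by level, the base-pay premium is largest early in a career; the 40–55% headline figure compares the all-levels average with senior AI-fluent pay.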

Where You Work Matters (But Less Than You Think)

San Francisco, Austin, and New York still pay top dollar. But the spread between locations has compressed as remote robotics-adjacent roles grew. What matters more in 2026 is specialization:

- Aerospace & Defense: Redwire, Near Earth Autonomy, NASA JPL pay at the top of the range

- Autonomous Vehicles: Tesla, Waymo-adjacent teams pay competitively with equity

- Manufacturing Automation: Solid mid-range pay, lower variance

Geography adds maybe 5–15% depending on cost of living (COL). Skill stack adds 40–55%. Optimize accordingly.

How to Actually Close the Gap

Step 1: Get Proficient in One Vision Framework

Pick one and go deep: OpenCV + PyTorch, or CUDA-accelerated inference with TensorRT. Real-time perception is the #1 bottleneck in physical AI systems, and knowing how to benchmark and optimize inference latency is an immediately hireable skill.

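```python
# Example: simple profiling of inference latency on embedded hardware (e.g., a Jetson)
import time

import torch

device = "cuda" if torch.cuda.is_available() else "cpu"

# Assumes the file holds a full pickled nn.Module; weights_only=False runs pickle,
# so only load files you trust
model = torch.load("perception_model.pt", weights_only=False).to(device)
model.eval()

input_tensor = torch.randn(1, 3, 640, 640, device=device)

with torch.inference_mode():  # skip autograd bookkeeping while timing
    # Warm up (CUDA context creation, kernel selection, caches)
    for _ in range(10):
        _ = model(input_tensor)
    if device == "cuda":
        torch.cuda.synchronize()  # GPU calls are async: drain the queue before timing

    # Benchmark
    start = time.perf_counter()
    for _ in range(100):
        _ = model(input_tensor)
    if device == "cuda":
        torch.cuda.synchronize()  # wait until all 100 runs have actually finished
    elapsed = (time.perf_counter() - start) / 100

print(f"Avg inference: {elapsed * 1000:.2f}ms")
# Target: <20ms for real-time robotics applications
```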

Why this matters: Interviewers ask "can you get this to run at 30fps on a Jetson Orin?" not "have you used OpenCV?"
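An average can hide the occasional slow frame that breaks a control loop. This stdlib-only sketch times each call of any callable individually and reports the median, the 99th percentile, the worst case, and how many calls overran a frame budget (33.3 ms at 30 fps). The function name and the choice of p99 are assumptions. To use it with the model above, pass something like `lambda: model(input_tensor)` inside `torch.inference_mode()`; on CUDA, make the callable also call `torch.cuda.synchronize()` so each sample measures finished GPU work.

```python
import statistics
import time
from typing import Callable


def latency_report(fn: Callable[[], object], runs: int = 200, warmup: int = 10,
                   fps_target: float = 30.0) -> dict:
    """Time each call of `fn` separately and summarize it against a per-frame budget."""
    for _ in range(warmup):
        fn()
    samples_ms = []
    for _ in range(runs):
        start = time.perf_counter()
        fn()
        samples_ms.append((time.perf_counter() - start) * 1000)

    budget_ms = 1000.0 / fps_target
    # quantiles(n=100) returns the 1st..99th percentile cut points; index 98 is p99.
    # The "inclusive" method keeps every estimate within the observed min..max.
    p99 = statistics.quantiles(samples_ms, n=100, method="inclusive")[98]
    return {
        "p50_ms": statistics.median(samples_ms),
        "p99_ms": p99,
        "max_ms": max(samples_ms),
        "budget_ms": budget_ms,
        "missed_frames": sum(s > budget_ms for s in samples_ms),
    }


if __name__ == "__main__":
    # Stand-in CPU workload; replace with e.g. lambda: model(input_tensor)
    report = latency_report(lambda: sum(i * i for i in range(10_000)))
    print({k: round(v, 2) for k, v in report.items()})
```

Tail latency (p99 and max) is what decides whether a 30 fps pipeline holds up in practice, which is why this reports it alongside the median rather than a single mean.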

Step 2: Learn ROS 2 + ML Bridge Patterns

ROS 2 (Humble or Jazzy, the current long-term-support releases) is the standard. The gap most engineers miss: integrating trained PyTorch models into ROS nodes without blocking the real-time control loop.

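```python
# Pattern: async inference node in ROS 2 (don't block the control loop)
import threading

import rclpy
from rclpy.node import Node
from sensor_msgs.msg import Image


class InferenceNode(Node):
    def __init__(self):
        super().__init__('inference_node')
        # Last argument is the QoS history depth: how many unprocessed frames may queue
        self.subscription = self.create_subscription(
            Image, '/camera/image_raw', self.image_callback, 10)
        self._inference_thread = None

    def image_callback(self, msg):
        # Run inference in a separate thread so the subscriber never blocks.
        # Frames that arrive while inference is still running are skipped.
        if self._inference_thread is None or not self._inference_thread.is_alive():
            self._inference_thread = threading.Thread(
                target=self._run_inference, args=(msg,), daemon=True)
            self._inference_thread.start()

    def _run_inference(self, msg):
        # Your model inference here (e.g., convert msg with cv_bridge, run the model,
        # publish the result)
        pass


def main():
    rclpy.init()
    node = InferenceNode()
    try:
        rclpy.spin(node)
    finally:
        node.destroy_node()
        rclpy.shutdown()


if __name__ == '__main__':
    main()
```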

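If it fails:

- Callback queue backs up or stale frames pile up: lower the subscription QoS depth (for example, pass `rclpy.qos.qos_profile_sensor_data`, a best-effort profile with a shallow queue, instead of `10`) or speed up inference.

- Thread-safety issues: protect any state shared between the callback and the worker thread with a `threading.Lock`. If you replace the manual thread with a `MultiThreadedExecutor`, note that `rclpy.callback_groups.ReentrantCallbackGroup` lets callbacks run concurrently, while `MutuallyExclusiveCallbackGroup` serializes them.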
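A common alternative to starting a thread per frame is one long-lived worker that always processes the newest frame and silently drops older ones. Here is a minimal, ROS-independent sketch of that "latest frame only" buffer; the class name `LatestFrameSlot` is hypothetical, and it assumes frames are never `None` (it uses `None` to mean "empty").

```python
import threading
from typing import Any, Optional


class LatestFrameSlot:
    """Single-slot, thread-safe buffer: put() overwrites, get() waits for a fresh item."""

    def __init__(self) -> None:
        self._cond = threading.Condition()
        self._item: Optional[Any] = None
        self._dropped = 0

    def put(self, item: Any) -> None:
        # Called from the subscriber callback: never waits on inference
        with self._cond:
            if self._item is not None:
                self._dropped += 1  # the previous frame was never consumed
            self._item = item
            self._cond.notify()

    def get(self, timeout: Optional[float] = None) -> Optional[Any]:
        # Called from the worker thread: returns the newest frame, or None on timeout
        with self._cond:
            if not self._cond.wait_for(lambda: self._item is not None, timeout=timeout):
                return None
            item, self._item = self._item, None
            return item

    @property
    def dropped(self) -> int:
        with self._cond:
            return self._dropped
```

In the node above, `image_callback` would call `slot.put(msg)`, and a worker thread started once in `__init__` would loop on `slot.get(timeout=0.5)` while `rclpy.ok()` is true, skipping `None` results. Because the slot holds at most one frame, the age of the frame being processed stays bounded by roughly one inference, even when the camera outpaces the model.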

Step 3: Build One End-to-End Portfolio Project

Hiring managers at top robotics companies want to see a full perception-to-action loop. Pick one:

- Sim-to-real transfer using Isaac Sim + a physical robot arm

- Vision-language task planning with a small LLM controlling a robot

- Autonomous navigation with custom sensor fusion (not just the Nav2 defaults)

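None of these requires a full robotics lab. Isaac Sim is free for research (it does need an NVIDIA RTX GPU), and ROS 2 + Gazebo runs on any decent Linux machine; only the sim-to-real option needs physical hardware, and a single arm is enough.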
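For the vision-language task-planning project, a key piece is what sits between the LLM and the motors. This is a minimal sketch, assuming the LLM is prompted to return a JSON list of steps and that every step is checked against an allow-list of actions and parameter bounds before anything is executed. The action names, parameters, and limits are hypothetical placeholders, not part of any real robot API.

```python
import json
from typing import Any

# Hypothetical allow-list: action name -> {parameter: (min, max)}
ALLOWED_ACTIONS: dict[str, dict[str, tuple[float, float]]] = {
    "move_to": {"x": (-0.5, 0.5), "y": (-0.5, 0.5), "z": (0.0, 0.6)},  # metres
    "grasp": {"force": (0.0, 20.0)},                                    # newtons
    "release": {},
}


class PlanError(ValueError):
    pass


def validate_plan(llm_output: str) -> list[dict[str, Any]]:
    """Parse LLM output as a JSON list of steps; reject anything outside the allow-list."""
    try:
        plan = json.loads(llm_output)
    except json.JSONDecodeError as exc:
        raise PlanError(f"not valid JSON: {exc}") from exc
    if not isinstance(plan, list) or not plan:
        raise PlanError("plan must be a non-empty JSON list")

    for i, step in enumerate(plan):
        if not isinstance(step, dict):
            raise PlanError(f"step {i}: expected an object")
        action = step.get("action")
        if not isinstance(action, str) or action not in ALLOWED_ACTIONS:
            raise PlanError(f"step {i}: unknown action {action!r}")
        params = step.get("params", {})
        limits = ALLOWED_ACTIONS[action]
        if not isinstance(params, dict) or set(params) != set(limits):
            raise PlanError(f"step {i}: expected params {sorted(limits)}")
        for name, value in params.items():
            lo, hi = limits[name]
            if isinstance(value, bool) or not isinstance(value, (int, float)) \
                    or not lo <= value <= hi:
                raise PlanError(f"step {i}: {name}={value!r} outside [{lo}, {hi}]")
    return plan
```

The design choice here is to fail closed: the planner can propose anything, but only whitelisted actions with in-range parameters ever reach the controller.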

Verification: Are You in the Right Salary Band?

Check yourself against current postings using these searches:

```text
# Search-engine queries that reveal the AI premium (swap the site: filter to check Glassdoor)
"Robotics Engineer" "computer vision" site:linkedin.com/jobs
"Robotics Software Engineer" ("PyTorch" OR "TensorFlow") site:linkedin.com/jobs
```

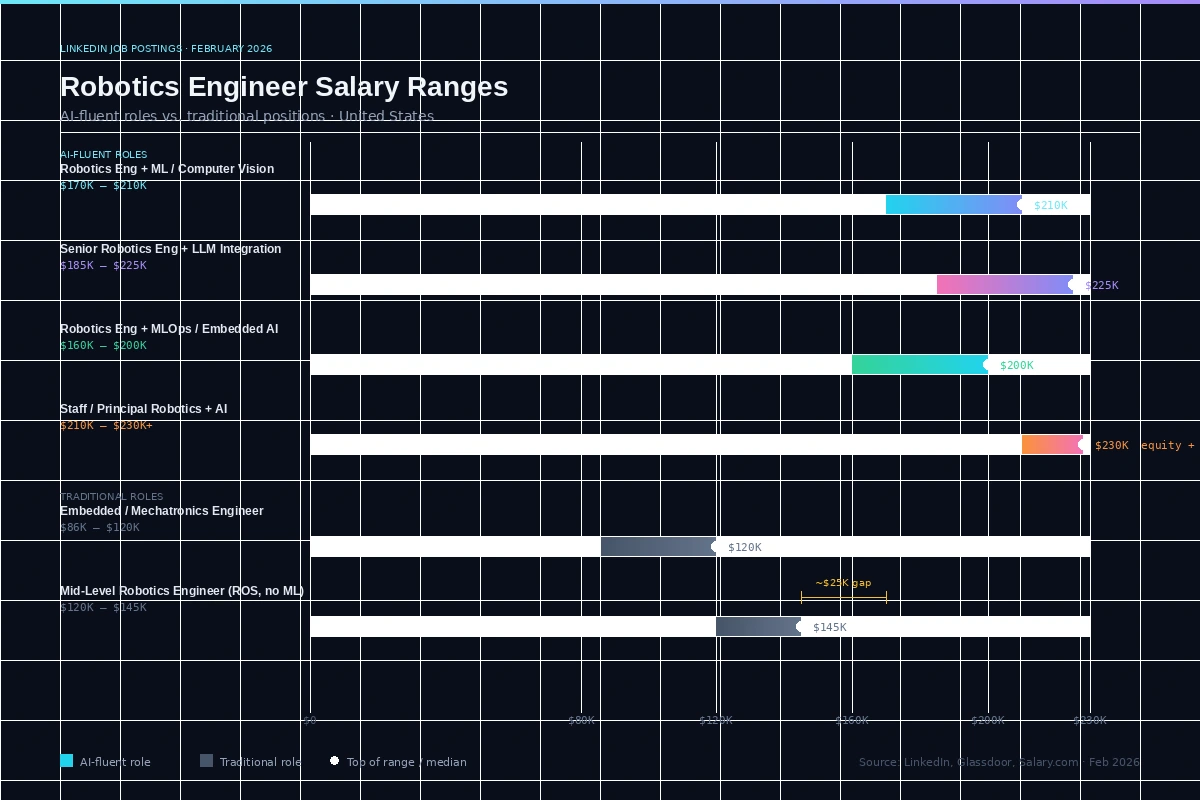

What you should see: Roles with ML/vision requirements consistently posting $170K–$210K+ vs. $120K–$140K for pure mechatronics/embedded roles. That's your benchmark.

AI-fluent robotics roles on LinkedIn, February 2026—the $170K+ band requires ML or vision skills

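If you collect the pay ranges from those searches, where postings include them, a small sketch like this turns the raw strings into midpoints you can compare with the table. The regex handles only formats like "$170K–$210K", "$180k to $200k", and "$170,000 - $210,000"; that coverage is an assumption about how postings are written, so check anything it skips by hand.

```python
import re
import statistics
from typing import Iterable, Optional

# "$<number>[K] <separator> $<number>[K]" where the separator is "-", an en dash, or "to"
RANGE_RE = re.compile(
    r"\$\s*(\d[\d,]*(?:\.\d+)?)\s*([kK])?\s*(?:-|–|to)\s*\$?\s*(\d[\d,]*(?:\.\d+)?)\s*([kK])?"
)


def _to_dollars(number: str, k_suffix: Optional[str]) -> float:
    value = float(number.replace(",", ""))
    return value * 1000 if k_suffix else value


def parse_range(text: str) -> Optional[tuple[float, float]]:
    """Return (low, high) in dollars for the first salary range in `text`, or None."""
    m = RANGE_RE.search(text)
    if not m:
        return None
    lo_num, lo_k, hi_num, hi_k = m.groups()
    return _to_dollars(lo_num, lo_k), _to_dollars(hi_num, hi_k)


def median_midpoint(postings: Iterable[str]) -> Optional[float]:
    """Median of range midpoints; postings without a recognizable range are skipped."""
    ranges = [r for r in map(parse_range, postings) if r is not None]
    if not ranges:
        return None
    return statistics.median((lo + hi) / 2 for lo, hi in ranges)


if __name__ == "__main__":
    sample = ["$170K–$210K + equity", "$120,000 - $140,000", "$180k to $200k", "DOE"]
    print(median_midpoint(sample))  # 190000.0
```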

What You Learned

- The 2026 robotics salary gap between traditional and AI-fluent engineers is 40–55%—and it's structural, not cyclical.

- Computer vision and ML are the highest-leverage skills to add, with LLM integration emerging as the next frontier.

- Senior AI-fluent robotics engineers are in the same compensation band as senior ML engineers ($200K–$213K).

- You don't need a full robotics lab to build a competitive portfolio; simulation tools have largely removed that barrier.

Limitation: These figures primarily reflect US-based roles. International salaries vary significantly; geographic arbitrage can cut costs 20–90% for employers, which is increasingly how teams are built.

When NOT to optimize for AI skills: If you're targeting industrial robotics in manufacturing (PLCs, HMI, SCADA), the traditional path holds, and AI premiums are smaller there. Focus on IEC 61131-3 PLC programming and safety certifications (for example, functional-safety credentials based on IEC 61508 or ISO 13849) instead.

Salary data sourced from Glassdoor, Salary.com, Built In, PayScale, Qubit Labs AI Talent Report, and Rise AI Talent Salary Report — February 2026. Individual salaries vary by employer, location, and negotiation.