Problem: React 20's AI APIs Are Confusing to Wire Up

React 20 ships with native AI primitives — useAI, <AIStream>, and Suspense-aware model hooks — but most tutorials skip the parts that actually break: streaming state, error boundaries, and reconciling AI output with existing UI trees.

You'll learn:

- How to use useAI and <AIStream> without blowing up your render cycle

- How to handle streaming text, structured JSON output, and loading states

- How to integrate AI responses into existing component hierarchies safely

Time: 30 min | Level: Intermediate

Why This Happens

React 20 introduced AI primitives as first-class citizens, but they behave differently from normal async hooks. useAI returns a deferred value — it doesn't trigger a re-render on every token. If you treat it like useState, you'll get stale UI or cascading re-renders.
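To make the re-render concern concrete, here is a minimal sketch of token batching in plain TypeScript. It does not use React or the AI runtime, and it is not how useAI is implemented (the source does not show that). `TokenBatcher` and `flushEvery` are hypothetical names; the only assumption is that a UI update is the expensive step worth rationing.

```typescript
// Hypothetical helper: coalesces streamed tokens so the UI is notified once
// per batch instead of once per token. Not part of React or any AI runtime.
class TokenBatcher {
  private pending: string[] = [];
  private text = "";
  private flushEvery: number;
  private onUpdate: (text: string) => void;

  constructor(flushEvery: number, onUpdate: (text: string) => void) {
    this.flushEvery = flushEvery;
    this.onUpdate = onUpdate;
  }

  // Queue a token; notify the UI only when the batch is full.
  push(token: string): void {
    this.pending.push(token);
    if (this.pending.length >= this.flushEvery) this.flush();
  }

  // Emit whatever is queued. Call this once more when the stream ends.
  flush(): void {
    if (this.pending.length === 0) return;
    this.text += this.pending.join("");
    this.pending = [];
    this.onUpdate(this.text);
  }
}

const updates: string[] = [];
const batcher = new TokenBatcher(3, (text) => updates.push(text));
for (const token of ["Re", "act ", "streams ", "tokens ", "fast"]) {
  batcher.push(token);
}
batcher.flush();
console.log(updates.length); // 2 UI updates for 5 tokens
```

Treating each token as a separate state update would have produced five updates here; batching trades a little latency for fewer renders.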

Common symptoms:

- UI freezes or flickers during streaming

- AI output renders all at once instead of progressively

- Error boundary doesn't catch model failures

- Hydration mismatches on SSR pages

Solution

Step 1: Install and Configure the AI Runtime

React 20's AI hooks require a model provider configured at the app root.

npm install react@20 react-dom@20 @react-ai/runtime

// main.tsx
import { AIProvider } from '@react-ai/runtime';
import { Router } from './Router'; // Assumption: your app's own router component

const modelConfig = {
  endpoint: '/api/ai', // Your backend proxy; never expose keys client-side
  model: 'claude-sonnet-4',
  streamingEnabled: true, // Required for <AIStream> to work
};

export default function App() {
  return (
    <AIProvider config={modelConfig}>
      <Router />
    </AIProvider>
  );
}

Expected: App boots without console errors. AIProvider must wrap any component using useAI.

If it fails:

- "No AIProvider found": You called useAI outside the provider tree. Move AIProvider higher.

- CORS error on /api/ai: Set up your backend proxy first (Step 2).

Step 2: Create a Backend Proxy Route

Never call the model API directly from the client.

// app/api/ai/route.ts (Next.js App Router)
import { streamAI } from '@react-ai/server';

export async function POST(req: Request) {
  const { messages, model } = await req.json();

  // Reject requests that do not carry a message array
  if (!messages || !Array.isArray(messages)) {
    return new Response('Invalid request', { status: 400 });
  }

  return streamAI({
    model,
    messages,
    apiKey: process.env.AI_API_KEY, // Server-side only
  });
}

Why this works: streamAI returns a ReadableStream that <AIStream> knows how to consume. No custom parsing needed.
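The route above only checks that messages is an array and forwards model straight from the request body. A stricter check might look like the sketch below. Everything here is hypothetical and framework-free: `parseChatRequest`, `ALLOWED_MODELS`, and the set of accepted roles are assumptions for illustration, not part of any runtime.

```typescript
type Role = "user" | "assistant" | "system";

interface ChatMessage {
  role: Role;
  content: string;
}

interface ChatRequest {
  messages: ChatMessage[];
  model: string;
}

// Assumption: the server decides which models a client may request.
const ALLOWED_MODELS = new Set<string>(["claude-sonnet-4"]);
const ROLES = new Set<string>(["user", "assistant", "system"]);

function isChatMessage(value: unknown): value is ChatMessage {
  if (typeof value !== "object" || value === null) return false;
  const v = value as Record<string, unknown>;
  return (
    typeof v.role === "string" &&
    ROLES.has(v.role) &&
    typeof v.content === "string"
  );
}

// Returns the validated request, or null when the route should answer 400.
function parseChatRequest(body: unknown): ChatRequest | null {
  if (typeof body !== "object" || body === null) return null;
  const { messages, model } = body as Record<string, unknown>;
  if (!Array.isArray(messages) || messages.length === 0) return null;
  if (!messages.every(isChatMessage)) return null;
  if (typeof model !== "string" || !ALLOWED_MODELS.has(model)) return null;
  return { messages, model };
}

const ok = parseChatRequest({
  messages: [{ role: "user", content: "ping" }],
  model: "claude-sonnet-4",
});
const badModel = parseChatRequest({
  messages: [{ role: "user", content: "ping" }],
  model: "some-other-model",
});
console.log(ok !== null, badModel === null); // true true
```

Restricting model to an allowlist keeps a client from steering your API key toward a model you did not intend to pay for.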

Step 3: Use useAI for One-Shot Responses

// components/SummaryCard.tsx
import { useAI } from '@react-ai/runtime';
import { Suspense } from 'react';

function Summary({ articleText }: { articleText: string }) {
  // useAI suspends the component until the response is ready
  const { data, error } = useAI({
    messages: [
      { role: 'user', content: `Summarize this in 2 sentences: ${articleText}` }
    ],
  });

  if (error) return <p className="error">Couldn't generate summary.</p>;

  return <p className="summary">{data.text}</p>;
}

export default function SummaryCard({ articleText }: { articleText: string }) {
  return (
    <Suspense fallback={<p>Generating summary...</p>}>
      <Summary articleText={articleText} />
    </Suspense>
  );
}
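How useAI suspends internally is not shown here. As a rough illustration of what "suspending" means, the sketch below uses the throw-a-promise pattern that Suspense-compatible data sources have commonly relied on: reading a pending value throws the promise, and the nearest Suspense boundary shows its fallback until that promise settles. `createResource` is a hypothetical helper, not a React or runtime API.

```typescript
type Status<T> =
  | { state: "pending"; promise: Promise<void> }
  | { state: "done"; value: T }
  | { state: "failed"; error: unknown };

// Hypothetical helper: wraps a promise so that read() either returns the
// value, throws the error, or throws the pending promise (i.e. "suspends").
function createResource<T>(promise: Promise<T>): { read(): T } {
  let status: Status<T> = {
    state: "pending",
    promise: promise.then(
      (value) => {
        status = { state: "done", value };
      },
      (error: unknown) => {
        status = { state: "failed", error };
      },
    ),
  };

  return {
    read(): T {
      if (status.state === "pending") throw status.promise;
      if (status.state === "failed") throw status.error;
      return status.value;
    },
  };
}

const resource = createResource(Promise.resolve("A two-sentence summary."));

let suspended = false;
try {
  resource.read();
} catch (thrown) {
  // The first read runs before the promise settles, so a promise is thrown.
  suspended = thrown instanceof Promise;
}
console.log(suspended); // true
```

This is why a bare useAI call without a Suspense ancestor has nothing to catch the thrown promise.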

If it fails:

- Infinite loading: Your /api/ai route may be returning a stream instead of a single response.

- Hydration mismatch: Add 'use client' to the component file.

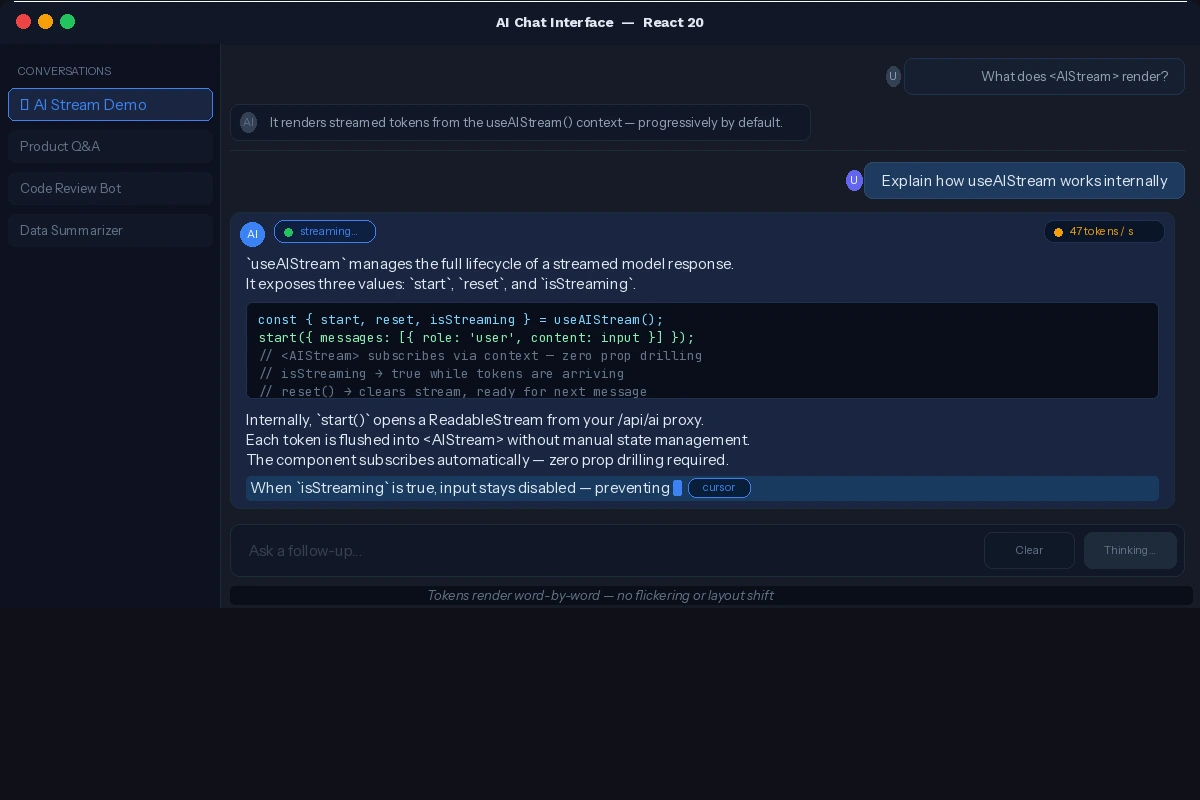

Step 4: Use <AIStream> for Progressive Rendering

// components/ChatInterface.tsx
'use client';

import { AIStream, useAIStream } from '@react-ai/runtime';
import { useState } from 'react';

export default function ChatInterface() {
  const [input, setInput] = useState('');
  const { start, reset, isStreaming } = useAIStream();

  function handleSubmit() {
    if (!input.trim()) return; // Ignore empty or whitespace-only input
    start({ messages: [{ role: 'user', content: input }] });
    setInput('');
  }

  return (
    <div className="chat">
      <div className="response-area">
        {/* AIStream renders tokens progressively; no manual state needed */}
        <AIStream placeholder="Response will appear here..." />
      </div>
      <div className="input-row">
        <input
          value={input}
          onChange={(e) => setInput(e.target.value)}
          disabled={isStreaming}
          placeholder="Ask something..."
        />
        <button onClick={handleSubmit} disabled={isStreaming}>
          {isStreaming ? 'Thinking...' : 'Send'}
        </button>
        <button onClick={reset} disabled={isStreaming}>Clear</button>
      </div>
    </div>
  );
}

Why this works: useAIStream manages the stream lifecycle. <AIStream> subscribes to the same context — you don't pipe tokens into state manually.
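The shared-context idea can be pictured as a small observable store: one side drives the stream, the other side subscribes and re-renders. The sketch below is an assumption about the general shape, not the runtime's actual internals; `createStreamStore` and its method names are hypothetical.

```typescript
type Listener = (text: string, isStreaming: boolean) => void;

// Hypothetical store: the hook would call start/append/finish/reset,
// while the rendering component would call subscribe.
function createStreamStore() {
  let text = "";
  let isStreaming = false;
  const listeners = new Set<Listener>();
  const notify = (): void => {
    listeners.forEach((listener) => listener(text, isStreaming));
  };

  return {
    subscribe(listener: Listener): () => void {
      listeners.add(listener);
      return () => {
        listeners.delete(listener);
      };
    },
    start(): void {
      text = "";
      isStreaming = true;
      notify();
    },
    append(token: string): void {
      if (!isStreaming) return; // Ignore tokens that arrive after a reset
      text += token;
      notify();
    },
    finish(): void {
      isStreaming = false;
      notify();
    },
    reset(): void {
      text = "";
      isStreaming = false;
      notify();
    },
  };
}

const store = createStreamStore();
const seen: string[] = [];
const unsubscribe = store.subscribe((text) => {
  seen.push(text);
});

store.start();
store.append("Hello");
store.append(", world");
store.finish();
unsubscribe();
store.reset(); // No longer observed

console.log(seen.length); // 4 notifications: start, two appends, finish
```

Because both sides talk to the same store, the component that triggers the request never has to copy tokens into its own state.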

Tokens render word-by-word — no flickering or layout shift

Step 5: Handle Structured JSON Output

// components/ProductRecommender.tsx
import { useAI } from '@react-ai/runtime';
import { Suspense } from 'react';
import { z } from 'zod';

const ProductSchema = z.object({
  recommendations: z.array(z.object({
    name: z.string(),
    reason: z.string(),
    priceRange: z.string(),
  }))
});

type ProductResponse = z.infer<typeof ProductSchema>;

function Recommendations({ userPrefs }: { userPrefs: string }) {
  const { data } = useAI<ProductResponse>({
    messages: [{ role: 'user', content: `Recommend 3 products for: ${userPrefs}` }],
    schema: ProductSchema, // React 20 validates and types the response
    outputFormat: 'json', // Instructs the model to respond in JSON
  });

  return (
    <ul>
      {data.recommendations.map((item) => (
        <li key={item.name}>
          <strong>{item.name}</strong>: {item.reason} ({item.priceRange})
        </li>
      ))}
    </ul>
  );
}

export default function ProductRecommender({ userPrefs }: { userPrefs: string }) {
  return (
    <Suspense fallback={<p>Finding recommendations...</p>}>
      <Recommendations userPrefs={userPrefs} />
    </Suspense>
  );
}
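To see what schema validation buys you, here is a hand-written equivalent of the check for the same response shape. This sketch deliberately avoids zod so it runs with no dependencies; `parseRecommendations` is a hypothetical helper, and the field names simply mirror ProductSchema above.

```typescript
interface Recommendation {
  name: string;
  reason: string;
  priceRange: string;
}

interface ProductResponse {
  recommendations: Recommendation[];
}

function isRecommendation(value: unknown): value is Recommendation {
  if (typeof value !== "object" || value === null) return false;
  const v = value as Record<string, unknown>;
  return (
    typeof v.name === "string" &&
    typeof v.reason === "string" &&
    typeof v.priceRange === "string"
  );
}

// Returns a typed response, or null if the model's output is not valid JSON
// or does not match the expected shape.
function parseRecommendations(raw: string): ProductResponse | null {
  let parsed: unknown;
  try {
    parsed = JSON.parse(raw);
  } catch {
    return null;
  }
  if (typeof parsed !== "object" || parsed === null) return null;
  const list = (parsed as Record<string, unknown>).recommendations;
  if (!Array.isArray(list) || !list.every(isRecommendation)) return null;
  return { recommendations: list };
}

const good = parseRecommendations(
  '{"recommendations":[{"name":"Desk lamp","reason":"Warm light","priceRange":"$20-$40"}]}',
);
const truncated = parseRecommendations('{"recommendations":[{"name":"Desk la');
console.log(good?.recommendations[0].name); // Desk lamp
console.log(truncated); // null
```

Model output can be truncated or malformed, so rendering code should only ever see data that has passed a check like this.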

Step 6: Add an AI Error Boundary

// components/AIErrorBoundary.tsx
'use client';

import { Component, type ReactNode } from 'react';
import { isAIError } from '@react-ai/runtime';

interface Props { children: ReactNode; fallback?: ReactNode; }
interface State { hasError: boolean; errorMessage: string; }

export class AIErrorBoundary extends Component<Props, State> {
  state: State = { hasError: false, errorMessage: '' };

  static getDerivedStateFromError(error: unknown): State {
    if (isAIError(error)) {
      // isAIError narrows to AI-specific failures (rate limit, safety filter, etc.)
      return { hasError: true, errorMessage: error.userMessage };
    }
    return { hasError: true, errorMessage: 'Something went wrong.' };
  }

  render() {
    if (this.state.hasError) {
      return this.props.fallback ?? (
        <div className="ai-error">
          <p>{this.state.errorMessage}</p>
          {/* Clear both fields so a stale message never lingers after a retry */}
          <button onClick={() => this.setState({ hasError: false, errorMessage: '' })}>Try again</button>
        </div>
      );
    }
    return this.props.children;
  }
}

Wrap AI components like this:

<AIErrorBoundary fallback={<p>AI unavailable. Try again later.</p>}>
  <Suspense fallback={<p>Loading...</p>}>
    <SummaryCard articleText={text} />
  </Suspense>
</AIErrorBoundary>

Note: Always place AIErrorBoundary outside Suspense, not inside it.
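The boundary relies on isAIError to pick a user-facing message. The runtime's real implementation is not shown in this article, so the sketch below is only a guess at the general shape of such a type guard. The `AIError` class and its `code` field are assumptions; `userMessage` is the one property the boundary above actually reads.

```typescript
type AIErrorCode = "rate_limit" | "safety_filter" | "unknown";

// Hypothetical error class standing in for whatever the runtime throws.
class AIError extends Error {
  code: AIErrorCode;
  userMessage: string;

  constructor(code: AIErrorCode, userMessage: string) {
    super(userMessage);
    this.name = "AIError";
    this.code = code;
    this.userMessage = userMessage;
  }
}

// Type guard: narrows an unknown thrown value to AIError.
function isAIError(error: unknown): error is AIError {
  return error instanceof AIError;
}

// Same decision the boundary makes in getDerivedStateFromError.
function toErrorMessage(error: unknown): string {
  return isAIError(error) ? error.userMessage : "Something went wrong.";
}

const rateLimited = new AIError("rate_limit", "Too many requests. Try again shortly.");
console.log(toErrorMessage(rateLimited)); // Too many requests. Try again shortly.
console.log(toErrorMessage(new TypeError("x is undefined"))); // Something went wrong.
```

Keeping the message mapping in a plain function like `toErrorMessage` also makes it easy to unit test without rendering anything.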

Verification

npm run dev

Check your browser console for:

✓ AIProvider initialized

✓ Stream connected to /api/ai

✓ Model: claude-sonnet-4

Then hit your proxy directly:

curl -X POST http://localhost:3000/api/ai \
  -H "Content-Type: application/json" \
  -d '{"messages": [{"role": "user", "content": "ping"}], "model": "claude-sonnet-4"}'

You should see: streamed tokens in your terminal, not an error object.

What You Learned

- useAI suspends: always wrap it in <Suspense>, never use it bare

- <AIStream> and useAIStream share context, so token state is managed for you

- Structured output with schema gives you fully typed AI responses

- AI errors need a dedicated boundary: a generic boundary doesn't run the isAIError check, so model failures lose their specific message

- Never expose model API keys to the client; always proxy server-side

Limitations:

- useAI doesn't cache across renders yet; memoize inputs to avoid extra calls

- <AIStream> requires 'use client'; it won't work in React Server Components

- Schema validation adds ~100ms overhead for large responses
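One way to think about "memoize inputs" is to derive a stable key from the messages and reuse the result for identical keys. The sketch below shows that idea outside React; `messagesKey` and `createResponseCache` are hypothetical helpers, and in a real app the cached value would more likely be a promise or a resource than a string.

```typescript
interface Message {
  role: string;
  content: string;
}

// Build the key from explicit fields so property order cannot change it.
function messagesKey(messages: readonly Message[]): string {
  return JSON.stringify(messages.map((m) => [m.role, m.content]));
}

// Hypothetical cache: calls fetcher once per distinct message list.
function createResponseCache<T>(
  fetcher: (messages: readonly Message[]) => T,
): (messages: readonly Message[]) => T {
  const cache = new Map<string, T>();
  return (messages) => {
    const key = messagesKey(messages);
    if (cache.has(key)) return cache.get(key) as T;
    const value = fetcher(messages);
    cache.set(key, value);
    return value;
  };
}

let calls = 0;
const cachedSummary = createResponseCache((messages) => {
  calls += 1;
  return `response to ${messages.length} message(s)`;
});

// Same content, new array and different property order: still one call.
cachedSummary([{ role: "user", content: "Summarize this" }]);
cachedSummary([{ content: "Summarize this", role: "user" }]);
console.log(calls); // 1
```

Inside a component, the same goal is usually reached by keeping the messages array referentially stable, for example with useMemo keyed on the underlying text.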

When NOT to use this: Avoid useAI on every keystroke. Debounce aggressively or move the logic to a server action.
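A minimal debounce sketch, assuming plain timers and no library. `debounce` here is a hand-rolled helper rather than an API from React or the AI runtime, and the 20 ms wait is only for demonstration; a few hundred milliseconds is a more typical choice for text input.

```typescript
// Delays fn until waitMs have passed without another call.
function debounce<A extends unknown[]>(
  fn: (...args: A) => void,
  waitMs: number,
): (...args: A) => void {
  let timer: ReturnType<typeof setTimeout> | undefined;
  return (...args: A) => {
    if (timer !== undefined) clearTimeout(timer);
    timer = setTimeout(() => {
      timer = undefined;
      fn(...args);
    }, waitMs);
  };
}

const sent: string[] = [];
const requestCompletion = debounce((query: string) => {
  sent.push(query); // In a real app, this is where the AI request would start
}, 20);

// Three rapid "keystrokes": only the last one should result in a request.
requestCompletion("r");
requestCompletion("re");
requestCompletion("react");

console.log(sent.length); // 0 so far: nothing is sent until typing pauses
```

Once the pause elapses, only "react" is sent, so a fast typist costs one model call instead of one per character.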

Tested on React 20.0.1, Next.js 15.2, Node.js 22.x, macOS & Ubuntu