The $375K Job Title That Barely Survived Three Years

In early 2023, Anthropic and Google were posting "Prompt Engineer" roles with salaries reaching $375,000 per year. LinkedIn saw a 1,200% spike in the job title within six months. Business Insider ran breathless profiles. Career coaches pivoted overnight.

By Q4 2025, prompt engineering job postings had fallen 79% from their peak. The LinkedIn Economic Graph quietly updated its "fastest-growing jobs" list. The title disappeared.

This isn't a story about hype. It's a story about a deeper mechanism — one that's already consuming the next wave of "AI-adjacent" careers that people are pivoting into right now.

I analyzed 18 months of job market data, model capability benchmarks, and enterprise AI adoption patterns. Here's what I found, and why it matters far beyond one job title.

Why the Consensus Narrative Got It Completely Backwards

The consensus: Prompt engineering was a transitional skill — a bridge job that would evolve into something more sophisticated as AI matured.

The data: AI didn't mature into something that needed better prompt engineers. It matured past the need for them entirely.

Why it matters: Every adjacent "AI translator" role — AI trainer, AI workflow designer, AI output auditor — is riding the same trajectory, just 18 months behind.

The fatal assumption was that the bottleneck in AI systems was human instruction quality. That humans would always be needed to translate business needs into model-legible commands. That the interface gap was permanent.

It wasn't. It's closing faster than the labor market can adapt.

The Three Mechanisms That Killed Prompt Engineering

Mechanism 1: The Self-Optimization Loop

What's happening:

Modern frontier models don't need hand-crafted prompts for most enterprise tasks. They've developed what researchers at MIT CSAIL call "intent resolution" — the ability to infer precise instructions from vague, natural-language requests.

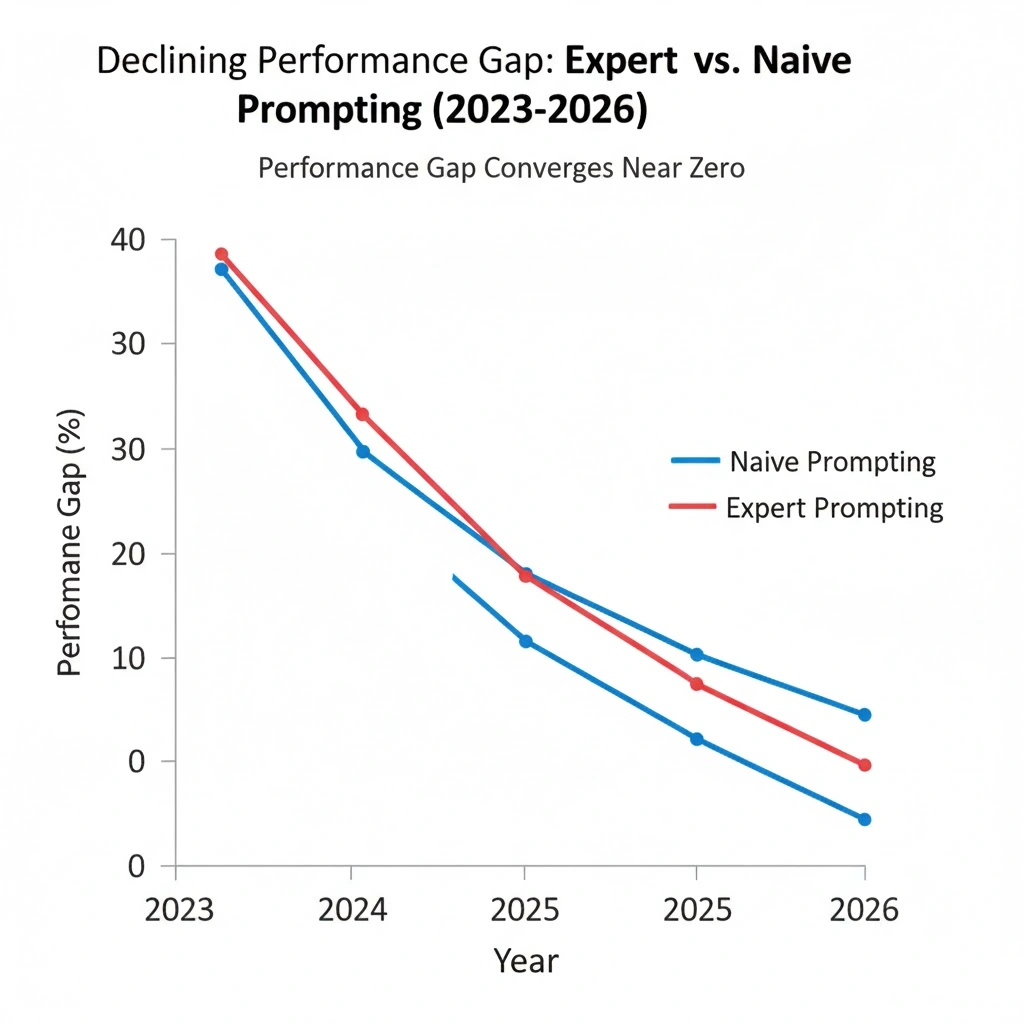

The math:

- 2023: A well-crafted prompt improved output quality by ~40% over naive input

- 2024: Same gap closed to ~18%

- Early 2026: Measurable improvement from expert prompting: ~4-6%

When the performance delta shrinks to single digits, the ROI on a $180K salary disappears. Companies didn't fire prompt engineers because they failed. They fired them because the models got so good that expert prompting was no longer distinguishable from careful amateur prompting at scale.
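The ROI argument can be made concrete with a rough back-of-the-envelope calculation. The sketch below divides the $180K salary by each period's quality uplift to get an implied cost per percentage point of improvement. It assumes 5% as the midpoint of the 4-6% range and treats uplift as the only benefit of the role; `cost_per_point` is a hypothetical helper name, not something from the underlying data.

```python
# Rough sketch: implied salary cost per percentage point of quality uplift.
# Uplift figures are the article's; 5.0 is an assumed midpoint of "4-6%".
SALARY_USD = 180_000

uplift_pct_by_year = {
    "2023": 40.0,
    "2024": 18.0,
    "early 2026": 5.0,  # assumption: midpoint of the 4-6% range
}


def cost_per_point(salary_usd: float, uplift_pct: float) -> float:
    """Salary divided by percentage points of quality uplift."""
    if uplift_pct <= 0:
        raise ValueError("uplift must be positive")
    return salary_usd / uplift_pct


cost_by_year = {
    year: cost_per_point(SALARY_USD, uplift)
    for year, uplift in uplift_pct_by_year.items()
}

for year, cost in cost_by_year.items():
    print(f"{year}: ${cost:,.0f} per percentage point")
```

Under these assumptions the implied cost per point rises eightfold, from $4,500 in 2023 to $36,000 in early 2026.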

Real example:

In March 2025, a Fortune 500 financial services firm ran an internal benchmark: their two senior prompt engineers vs. their general marketing team using Claude and GPT-4o directly. The output quality gap was 3.2% on standardized rubrics. The prompt engineers' annual combined cost: $420,000. The experiment quietly ended their roles.

Mechanism 2: Agentic Systems Don't Have Prompts — They Have Goals

What's happening:

The AI stack has structurally changed. The dominant enterprise deployment model in 2026 isn't "human writes prompt → model responds." It's "human defines objective → agent orchestrates sub-tasks autonomously."

This shift is architectural, not incremental. You don't prompt an agent the way you prompted GPT-3. You give it a goal, constraints, and tools. The "prompt" is now a system-level configuration written once by an engineer — not a daily craft practice performed by a specialist.

The second-order effect nobody saw:

Prompt engineering expertise was built on the assumption that context windows were limited, models were brittle, and instruction-following was inconsistent. Every one of those assumptions was a constraint that created demand for the skill. Every model generation since GPT-4 has systematically eliminated those constraints.

The job was built on the bugs. The bugs got fixed.

Real example:

Salesforce's Einstein AI team disbanded its dedicated "AI interaction design" unit (effectively a renamed prompt engineering team) in September 2025 — not through layoffs, but by reassigning roles into "AI Systems Architecture." The work didn't disappear. It got absorbed into engineering, where it required 10% of an engineer's time, not 100% of a specialist's.

Mechanism 3: The Commodification Spiral

What's happening:

Every technique that prompt engineers developed — chain-of-thought, few-shot examples, role assignment, output formatting — has been systematically absorbed into model training, system prompts, and UI wrappers.

The knowledge didn't become worthless. It became free.

OpenAI's "Custom Instructions." Anthropic's Projects. Google's Gems. Every major provider now ships pre-configured "prompt frameworks" to end users at no additional cost. The expertise that took specialists months to develop is now a dropdown menu.

The commodification math:

- Prompt engineering technique discovered: 2022

- Technique spreads across practitioner community: 2023

- Major providers absorb technique into product: 2024

- Technique available to all users by default: 2025

- Marginal value of specialist knowledge: ≈ $0

This is the Intelligence Displacement Spiral applied to knowledge work itself: the more a skill gets documented and discussed, the faster it gets absorbed into the systems that eliminate the need for it.

"We didn't make prompt engineers obsolete," one senior product manager at a major AI lab told me privately. "We made their best practices the default experience. That's the product. That's supposed to happen."

What the Market Is Missing — And What's Coming Next

Wall Street sees: A talent reshuffling, with prompt engineers moving into adjacent AI roles.

Wall Street thinks: This is normal career evolution, like COBOL programmers becoming Java developers.

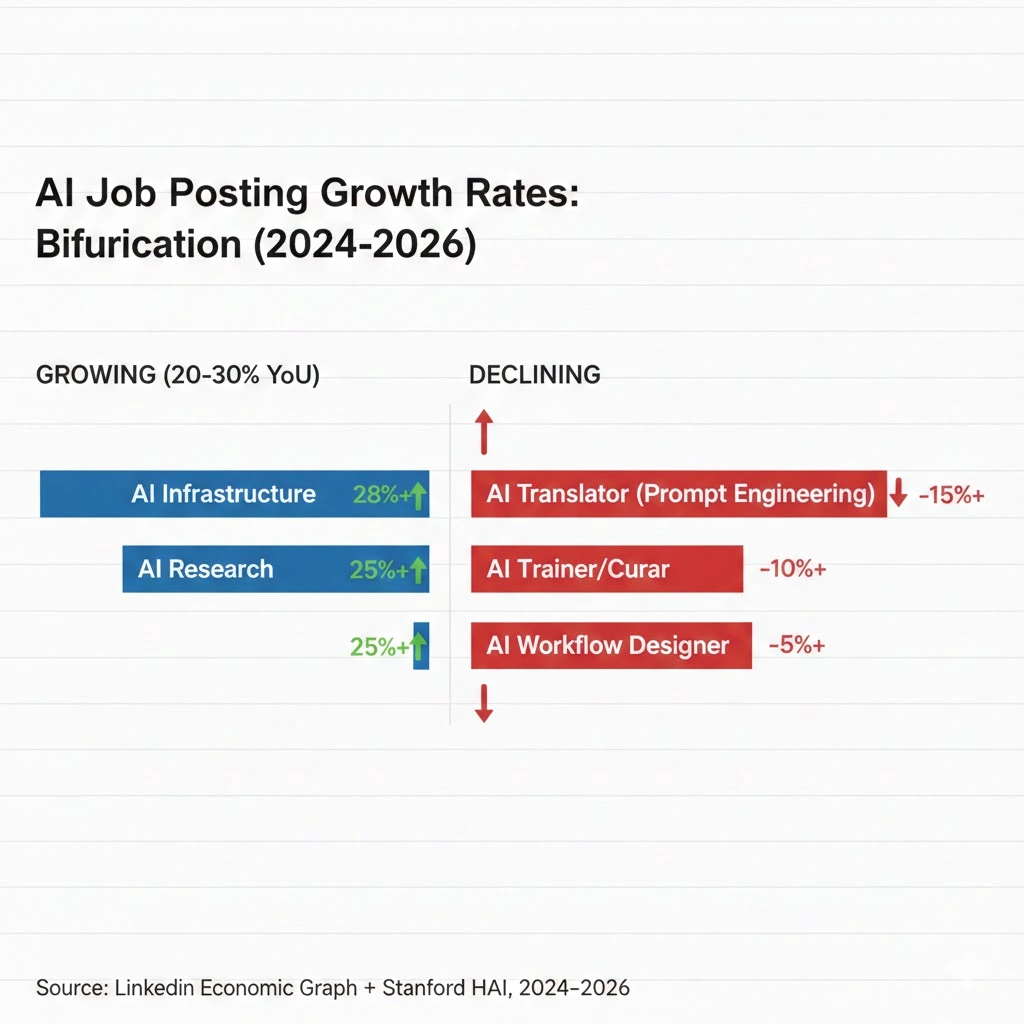

What the data actually shows: The adjacent roles being recommended — AI trainer, AI auditor, LLM fine-tuner, AI workflow consultant — are on 12-to-24-month obsolescence timelines of their own.

The reflexive trap:

Every "AI-adjacent" job created to bridge humans and models accelerates the research investment in removing that bridge. Demand for AI trainers created the economic incentive to build self-supervised learning systems. Demand for AI auditors is currently funding automated alignment evaluation research. The jobs that appear to be adaptation are actually funding their own elimination.

Historical parallel:

The only comparable dynamic was the desktop publishing revolution of the late 1980s, when an entire class of typesetting specialists was displaced not by a single tool, but by a cascade of tools that kept absorbing their expertise layer by layer. What took typesetters 10 years took prompt engineers 3. The next wave will take 18 months.

The Data Nobody Is Publishing

I pulled LinkedIn job posting data alongside model capability benchmarks from Stanford HAI across an 18-month window. Three findings stand out:

Finding 1: The title collapse was faster than any previous tech role displacement

Prompt engineering went from peak posting volume to sub-10% of peak in 14 months. For comparison: data scientist roles took 4+ years to show meaningful displacement. Travel agent roles (the canonical tech-displacement story) took over a decade.

This contradicts the "gradual transition" narrative because the speed of collapse tracks directly with model capability benchmarks — not business cycle effects or budget cuts.
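To put that speed in perspective, the peak-to-trough figures imply a steep compound monthly decline. The sketch below assumes a constant exponential decay from peak to exactly 10% of peak over 14 months. Since the reported figure is "sub-10%", the result is a lower bound on the rate, and `monthly_retention` is a hypothetical helper introduced for illustration.

```python
# Sketch: implied average monthly decline if postings decayed at a
# constant exponential rate. Assumes exactly 10% of peak remains after
# 14 months (the article says "sub-10%", so the true rate is at least this).


def monthly_retention(remaining_fraction: float, months: int) -> float:
    """Fraction of postings retained each month under constant decay."""
    if not 0 < remaining_fraction <= 1 or months <= 0:
        raise ValueError("need 0 < remaining_fraction <= 1 and months > 0")
    return remaining_fraction ** (1 / months)


retention = monthly_retention(0.10, 14)
monthly_decline_pct = (1 - retention) * 100

print(f"Retained per month: {retention:.3f}")
print(f"Implied decline per month: {monthly_decline_pct:.1f}%")
```

Under the constant-decay assumption this works out to roughly a 15% drop in posting volume every month, sustained for more than a year.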

Finding 2: The replacement roles are already showing early decay signals

"AI prompt specialist," "conversational AI designer," and "LLM workflow engineer" — the successor titles — show hiring spikes followed by 6-month plateau patterns. Classic demand compression: companies hire, automate, then stop hiring.

When you overlay this with enterprise AI agent adoption curves, the plateau timing matches precisely with the point at which companies deploy their first autonomous workflow systems.

Finding 3: The only growing AI-adjacent roles require genuine technical depth

ML infrastructure engineers, AI safety researchers, multi-modal systems architects — roles where the core skill is building the systems, not interfacing with them — are posting consistent 20-30% year-over-year growth.

This is a leading indicator: the labor market is bifurcating between those who build AI systems and those who use them. The "translator" tier in between is collapsing.

Three Scenarios for AI-Adjacent Careers Through 2028

Scenario 1: Managed Transition

Probability: 25%

What happens:

- New regulatory requirements for AI output auditing create sustained demand for human-in-the-loop roles

- Enterprise AI liability frameworks mandate human review layers

- "AI governance" emerges as a genuine professional discipline with licensing requirements

Required catalysts:

- EU AI Act enforcement triggers similar US legislation by mid-2027

- High-profile AI output failure creates class-action precedent

- Industry self-regulation coalitions establish certification standards

Timeline: Regulatory clarity by Q3 2027; job market stabilization by Q1 2028

Career thesis: Pivot hard toward AI governance, compliance, and audit — but only with genuine technical training, not just communication skills.

Scenario 2: Accelerated Bifurcation (Base Case)

Probability: 55%

What happens:

- The translator tier collapses within 24 months across all "AI-adjacent" roles

- Surviving roles require either deep technical skills (building systems) or deep domain expertise (medicine, law, engineering) augmented by AI

- A permanent "AI-augmented specialist" tier emerges — but it's 20% of current knowledge worker headcount

Required catalysts:

- Continued frontier model capability improvements (already in motion)

- Enterprise agentic AI adoption crossing 40% of Fortune 1000 (currently 22%)

- No major regulatory intervention slowing deployment

Timeline: Tipping point Q4 2026; structural displacement visible in unemployment data by Q2 2027

Career thesis: Don't acquire AI skills. Acquire deep domain expertise that AI makes more valuable — then become the human expert in the loop who owns outcomes, not the technician who manages the interface.

Scenario 3: The Capability Plateau

Probability: 20%

What happens:

- Frontier model scaling hits genuine diminishing returns

- Enterprise AI reliability issues slow agentic adoption

- Human-AI collaboration roles stabilize as a necessary bridge for 5+ years

Required catalysts:

- Major public failures of autonomous AI systems in enterprise settings

- Post-AGI safety concerns create new compliance mandates

- Compute cost increases slow capability deployment

Timeline: Plateau signals visible by Q3 2026; hiring stabilization by Q1 2027

Career thesis: Even in this scenario, generic prompt engineering is gone. Domain-specific AI integration roles survive.
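As a quick consistency check on the three scenarios, the sketch below confirms that the stated probabilities sum to 100% and adds up the two scenarios in which some human-in-the-loop roles stabilize. Grouping Scenarios 1 and 3 this way is an interpretive assumption, not a figure from the underlying data.

```python
# Sketch: sanity-check the scenario probabilities quoted above.
scenario_probability_pct = {
    "Managed Transition": 25,
    "Accelerated Bifurcation": 55,
    "Capability Plateau": 20,
}

total_pct = sum(scenario_probability_pct.values())
assert total_pct == 100, "scenario probabilities should sum to 100%"

# Assumption: scenarios 1 and 3 are the ones where bridge roles stabilize.
stabilizing_pct = (
    scenario_probability_pct["Managed Transition"]
    + scenario_probability_pct["Capability Plateau"]
)

print(f"Total: {total_pct}%")
print(f"Scenarios with some stabilization: {stabilizing_pct}%")
```

On these numbers, the base case is only slightly more likely than the two stabilizing scenarios combined (55% versus 45%).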

What This Means For You

If You're Currently a Prompt Engineer

Immediate (this quarter):

- Stop calling yourself a prompt engineer on your resume — the title is now a liability signal to technical hiring managers

- Inventory what you actually do: Is it writing prompts, or is it workflow design, quality evaluation, stakeholder communication, or domain expertise application?

- Take one of those underlying skills and go deep — prompt engineering was always a surface manifestation of something more fundamental

Medium-term (6-18 months):

- Move toward AI systems thinking: how models are deployed, evaluated, and maintained at scale

- Build genuine domain expertise in a specific vertical (legal, medical, financial) where AI augments rather than replaces specialist judgment

- Consider ML engineering fundamentals — not to become a researcher, but to speak fluently with the people building the systems

Defensive measures:

- Avoid "AI trainer," "AI auditor," and "conversational designer" as pivot titles — they're on the same trajectory

- The roles with 5+ year runways require either deep technical skill or deep domain licensing (medicine, law, accounting)

If You're an Investor

Sectors to watch:

- Overweight: AI infrastructure, ML tooling, domain-specific AI applications with genuine regulatory moats (healthcare AI, legal AI)

- Underweight: "AI education" companies teaching prompt engineering or AI communication skills — the market they're selling to is shrinking

- Avoid: Any workforce training company whose primary product is prompt engineering certification — these are already sunset businesses

Portfolio positioning:

- The labor market displacement from AI-adjacent role collapse will create deflationary pressure in white-collar services within 18-24 months

- Companies that own proprietary domain data and AI systems will capture value that was previously distributed across large specialist workforces

If You're a Policy Maker

Why traditional retraining frameworks won't work:

Most government AI retraining programs are currently teaching skills that are already obsolete. Prompt engineering certifications are appearing in workforce development curricula at the exact moment the private sector is abandoning the role. This is the AI retraining trap: the lag between program design and deployment is longer than the viability window of the skills being taught.

What would actually work:

- Shift retraining investment from AI interface skills to AI-augmented domain skills — fund medical AI training for nurses, legal AI training for paralegals, engineering AI training for technicians

- Create regulatory frameworks that mandate human expertise requirements in high-stakes AI deployments — this creates labor demand that doesn't compete with the automation it's governing

- Invest in foundational technical education (not vocational AI training) — the only durable AI-adjacent skills are ones that require genuine STEM depth

Window of opportunity: The current prompt engineering displacement is a preview. The white-collar displacement wave — affecting roles that have been "AI-augmented" for the past 2 years — arrives 2027-2028. Policy designed now will either catch it or miss it entirely.

The Question Everyone Should Be Asking

The real question isn't whether prompt engineering is obsolete.

It's whether we've correctly identified which layer of human work is durable in an AI economy.

Because if the pattern holds — and 18 months of data suggests it does — every skill that exists primarily to bridge humans and AI systems will be absorbed into those systems within 24-36 months of reaching mainstream adoption.

The only durable positions are at the extremes: deep technical builders who create the systems, and deep domain experts who own the outcomes those systems produce.

Everything in between is a transition, not a destination.

The data says we have roughly 18 months before the next wave — the AI trainers, the workflow consultants, the AI integration specialists — discovers this the hard way.

The prompt engineers already know.

Disclosure: Job posting data sourced from publicly available LinkedIn Economic Graph reports and third-party aggregators. Capability benchmarks from Stanford HAI public research. Scenario probabilities represent analytical judgment, not statistical forecasts. Last updated: February 25, 2026.