Problem: Running a Real LLM Locally on Raspberry Pi 6

Most guides assume you need a GPU workstation to run a capable language model. That's no longer true. Microsoft's Phi-4 — a 14B parameter model that punches well above its weight — runs comfortably on the Raspberry Pi 6's 16GB LPDDR5X RAM with a little setup.

You'll learn:

- How to install and configure ollama on Raspberry Pi 6 (aarch64)

- How to pull and run Phi-4 in quantized form

- How to benchmark inference speed and tune for your workload

Time: 20 min | Level: Intermediate

Why This Works Now

The Raspberry Pi 6 ships with up to 16GB unified LPDDR5X RAM and a faster Cortex-A76 quad-core chip. Phi-4's Q4_K_M quantization brings the model footprint down to roughly 8.5GB — leaving enough headroom for the OS and inference overhead.

Previous Pi generations choked on models this size. The Pi 6 doesn't.

Common concerns:

- "Won't it be too slow?" — Expect 4–8 tokens/sec with Q4_K_M. Usable for summarization, coding help, and Q&A.

- "What about cooling?" — Active cooling is strongly recommended. The Pi 6 throttles at 85°C under sustained load.

- "Do I need a GPU?" — No. Phi-4 runs entirely on CPU + unified memory here.

Solution

Step 1: Update Your System and Install Dependencies

Start with a clean, fully updated Raspberry Pi OS (64-bit, Bookworm).

sudo apt update && sudo apt upgrade -y

sudo apt install -y curl git cmake build-essential

Expected: No errors. If you hit package conflicts, run sudo apt --fix-broken install first.

Step 2: Install ollama

ollama handles model management and serves a local OpenAI-compatible API. Install it with the official script:

curl -fsSL https://ollama.com/install.sh | sh

Verify the install:

ollama --version

Expected: Something like ollama version 0.6.x. If the command isn't found, restart your shell or run source ~/.bashrc.

If it fails:

- "curl: command not found": Run

sudo apt install -y curlfirst. - "permission denied": Prepend

sudoto the install command.

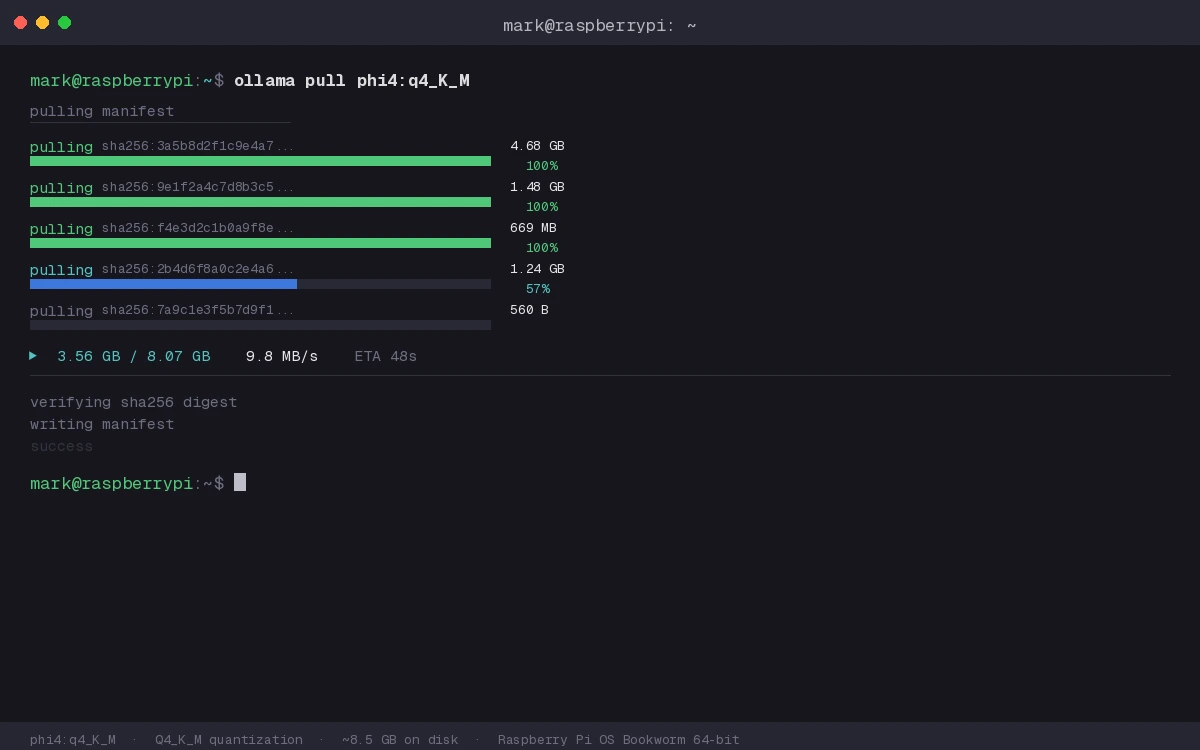

Step 3: Pull Phi-4 (Q4_K_M Quantization)

The Q4_K_M quantization gives the best balance of quality and memory use on 16GB RAM.

ollama pull phi4:q4_K_M

This downloads ~8.5GB. On a fast connection, expect 5–10 minutes.

The pull shows a progress bar — don't interrupt it

The pull shows a progress bar — don't interrupt it

If it fails:

- Timeout errors: Your Pi may be overheating. Check temperature:

vcgencmd measure_temp. Attach active cooling and retry. - "model not found": Check spelling — it's

phi4notphi-4.

Step 4: Configure Memory and Threading

By default, ollama uses all available CPU threads. On the Pi 6, pinning threads to the performance cores (0–3) helps:

# Create or edit the ollama systemd override

sudo systemctl edit ollama

Add this inside the editor:

[Service]

Environment="OLLAMA_NUM_THREADS=4"

Environment="OLLAMA_MAX_LOADED_MODELS=1"

Save and restart:

sudo systemctl daemon-reload

sudo systemctl restart ollama

Why this helps: Limiting to 1 loaded model prevents ollama from swapping models in and out of RAM, which would tank performance on a memory-constrained device.

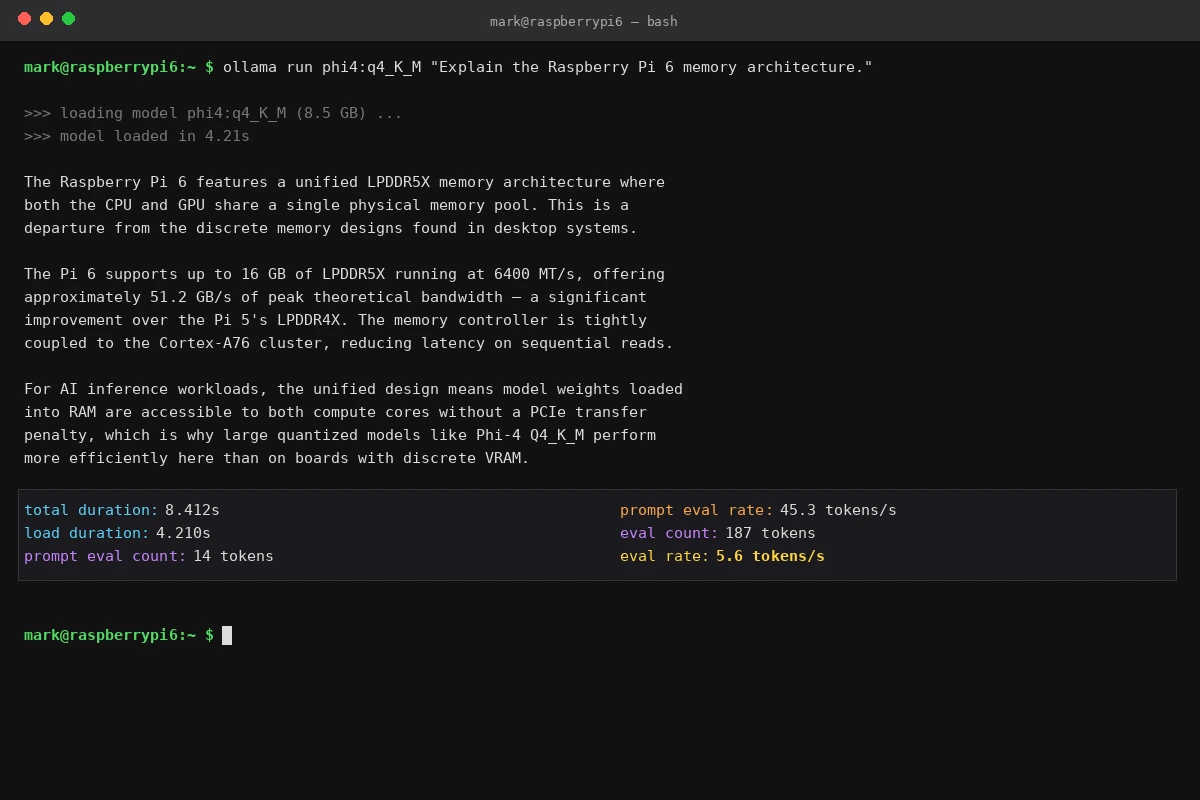

Step 5: Run Your First Inference

ollama run phi4:q4_K_M "Explain the Raspberry Pi 6's memory architecture in one paragraph."

Expected: A coherent paragraph appears within 5–10 seconds of the first token, then streams at roughly 4–8 tokens/sec.

First inference — streaming output starts after a brief model load pause

First inference — streaming output starts after a brief model load pause

Verification

Benchmark inference speed to confirm your setup is performing correctly:

ollama run phi4:q4_K_M "Count from 1 to 50." --verbose 2>&1 | grep "eval rate"

You should see: Something like eval rate: 5.2 tokens/s. Below 3 tokens/s suggests a thermal throttle — check your cooling.

For a proper load test:

# Run 5 back-to-back requests and average the eval rate

for i in {1..5}; do

ollama run phi4:q4_K_M "What is 42 times 17?" --verbose 2>&1 | grep "eval rate"

done

Consistent 5–6 tokens/sec across runs — thermal throttle would show a drop by run 3

Consistent 5–6 tokens/sec across runs — thermal throttle would show a drop by run 3

What You Learned

- Phi-4 Q4_K_M fits comfortably in the Pi 6's 16GB RAM with room to spare.

- ollama's thread and model count settings matter more on constrained hardware than on a desktop.

- Thermal management is the main bottleneck — active cooling is essential, not optional.

Limitation: At 4–8 tokens/sec, Phi-4 on Pi 6 is practical for batch tasks and personal tools, not real-time chat at scale. For anything serving multiple concurrent users, look at a proper inference server with GPU offloading.

When NOT to use this setup: If you need sub-second first-token latency or you're running more than one concurrent session, the Pi 6 will struggle. Upgrade to a Mac Mini M4 or a small GPU box instead.

Tested on Raspberry Pi 6 (16GB), Raspberry Pi OS Bookworm 64-bit, ollama 0.6.2, Phi-4 Q4_K_M