The Decision That Changed a Life—Made in 0.003 Seconds

A single mother in Dayton, Ohio sat across from a parole officer in 2024. She'd served four years, completed every program, and had a job lined up. The officer glanced at a screen, said "the score says high risk," and denied her release.

She never found out what data fed that score. Neither did the officer.

This isn't an edge case. It's Tuesday. Every Tuesday. In courtrooms, hospitals, banks, and HR departments across the country, algorithms are rendering judgment on human lives at a scale and speed that would have seemed dystopian a decade ago.

The question isn't whether AI can process data faster than humans. It obviously can. The question nobody in the mainstream conversation is asking: when we hand the verdict to the algorithm, where does moral responsibility go?

The answer, right now, is nowhere. And that vacuum is creating one of the most dangerous accountability gaps in modern institutional history.

Why the "Efficiency Argument" Is Dangerously Incomplete

The consensus: Algorithmic decision-making removes human bias, speeds up processes, and produces more consistent outcomes. Efficiency gains justify deployment.

The data: A 2023 Stanford HAI analysis of 200+ deployed AI decision systems found that 67% had no formal appeal mechanism and 78% had never undergone a third-party bias audit. In jurisdictions using algorithmic risk scores in criminal sentencing, Black defendants were flagged as high-risk at nearly twice the rate of white defendants with identical criminal histories.

Why it matters: We've built a system of laundered accountability. A human signs off on a decision made by a machine, inheriting the appearance of judgment without exercising it—and the algorithm absorbs none of the moral weight.

Efficiency gains are real. The ethical infrastructure to govern them is not.
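To make the "nearly twice the rate" comparison concrete, here is a minimal sketch of how a flag-rate disparity can be computed from decision logs. The group labels, the toy records, and the function names are hypothetical illustrations, not the method or data of the analysis cited above.

```python
from collections import Counter

def flag_rates(records):
    """Return the share of each group that was flagged high-risk.

    `records` is an iterable of (group, is_flagged) pairs.
    """
    totals, flagged = Counter(), Counter()
    for group, is_flagged in records:
        totals[group] += 1
        flagged[group] += int(is_flagged)
    return {group: flagged[group] / totals[group] for group in totals}

def disparity_ratio(rates, group_a, group_b):
    """Ratio of group_a's flag rate to group_b's flag rate."""
    return rates[group_a] / rates[group_b]

# Hypothetical toy data: 100 people per group, all with comparable histories.
records = (
    [("group_a", True)] * 40 + [("group_a", False)] * 60
    + [("group_b", True)] * 20 + [("group_b", False)] * 80
)

rates = flag_rates(records)
ratio = disparity_ratio(rates, "group_a", "group_b")
print(rates, ratio)
```

A ratio near 1.0 means the two groups are flagged at similar rates. A real audit would also need to control for criminal history and compare error rates, which this sketch does not attempt.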

The Three Mechanisms Driving the Accountability Crisis

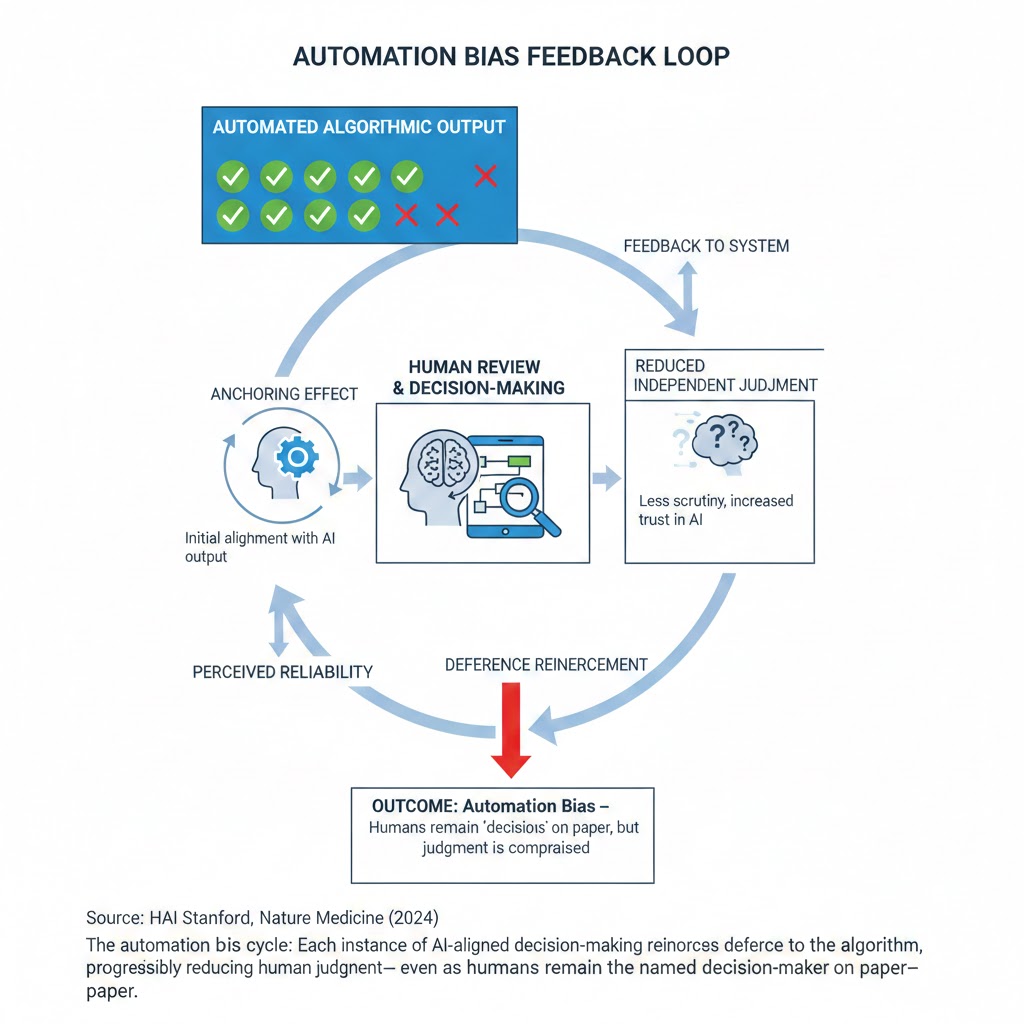

Mechanism 1: The Automation Bias Feedback Loop

What's happening: Humans systematically over-trust algorithmic outputs, even when they have countervailing evidence. This is called automation bias—and it's not a personality flaw. It's a predictable cognitive response to authority signals.

The sequence:

Algorithm issues a score or recommendation

→ Human reviewer sees the output before deliberating

→ Human anchors to the algorithmic result

→ Contradictory evidence is discounted

→ Human rubber-stamps the machine

→ System logs a "human decision"

→ No accountability attaches to the algorithm

→ Algorithm's errors scale invisibly

Real example: A 2024 peer-reviewed study in Nature Medicine found that radiologists who saw AI-generated cancer probability scores before examining scans missed 18% more of the anomalies the AI had incorrectly cleared than radiologists who read the same scans independently. The AI didn't replace judgment. It corrupted it, while the liability remained entirely with the human doctor.

The automation bias cycle: Each instance of AI-aligned decision-making reinforces deference to the algorithm, progressively reducing human judgment—even as humans remain the named decision-maker on paper. Source: HAI Stanford, Nature Medicine (2024)

Mechanism 2: The Opacity Moat

What's happening: Most consequential decision-making algorithms are proprietary black boxes. The people affected by them have no right to explanation, no access to the underlying model, and no practical avenue for challenge.

Courts have repeatedly upheld this opacity under trade secret protections. In State v. Loomis (2016), the Wisconsin Supreme Court held that a defendant's due process rights were not violated by the use of a proprietary risk-assessment tool at his sentencing, even though he could not examine how the tool weighed its inputs. The tool's vendor, Northpointe (now Equivant), treated the algorithm as a trade secret.

The affected individual couldn't challenge the logic that contributed to his sentence. His attorney couldn't examine it. The judge who relied on it didn't understand it.

What this creates: A judgment laundering system where accountability evaporates at precisely the moment it's most needed—when a decision causes harm.

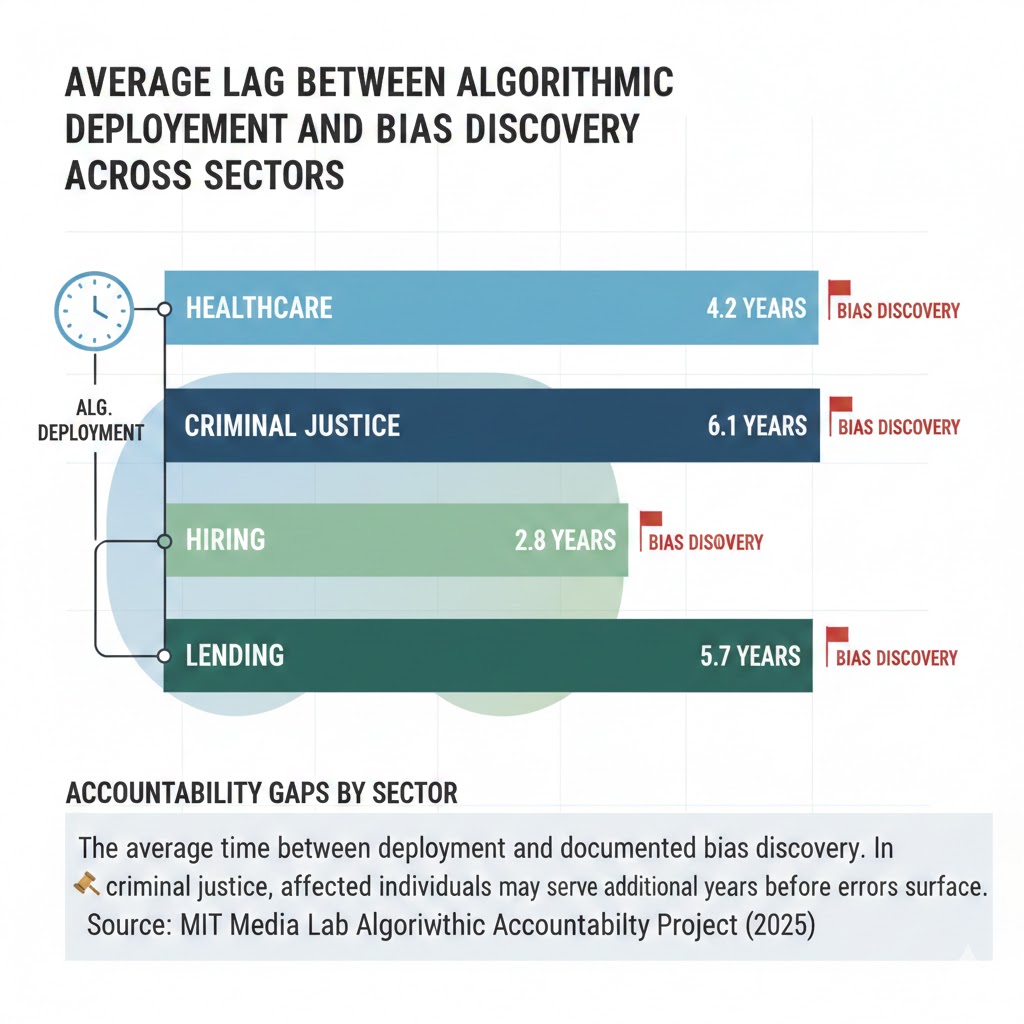

The second-order effect: Because there's no feedback loop between algorithmic error and institutional consequence, errors don't get corrected. They compound. A biased hiring algorithm runs for three years before a whistleblower surfaces the disparity. A flawed credit-scoring model disproportionately redlines minority ZIP codes for a decade before a regulatory audit finds it.

Accountability gaps by sector: The average time between deployment and documented bias discovery. In criminal justice, affected individuals may serve additional years before errors surface. Source: MIT Media Lab Algorithmic Accountability Project (2025)

Mechanism 3: The Responsibility Diffusion Architecture

What's happening: Modern AI deployment involves a chain of actors—data providers, model developers, platform integrators, institutional customers, individual reviewers—each holding a fragment of responsibility so small that none feels accountable for outcomes.

The data provider says: we just supplied historical records. The model developer says: we just optimized the objective function. The platform integrator says: we just implemented the client's use case. The institutional customer says: we just used a tool our vendor certified. The human reviewer says: I just followed the system recommendation.

The result: When the algorithm denies someone health insurance, flags them for fraud, scores their loan application into rejection, or routes their resume to the discard pile—nobody did it. A machine did it. And machines don't have moral agency.

This isn't a bug in the system. For the entities deploying these tools, it's a feature. Diffused responsibility means diffused liability. The human in the loop provides legal cover; the algorithm provides operational efficiency; the gap between them swallows accountability whole.

What the Market Is Missing

Wall Street sees: AI deployment accelerating across financial services, healthcare, legal tech, and HR platforms—driving operational cost reductions of 30-60% in high-volume decision workflows.

Wall Street thinks: Efficiency + scale = durable competitive moat. First movers win.

What the data actually shows: The regulatory reckoning is forming, not receding. The EU AI Act entered into force in August 2024, with obligations phasing in from 2025 and most high-risk system requirements scheduled to apply from August 2026. US state-level algorithmic accountability bills passed in Colorado, Illinois, and New York in 2023-2025. The legal exposure embedded in current deployment practices is not priced into enterprise software multiples.

The reflexive trap: Every company rationally deploys the most efficient decision system available. The aggregate effect is an institutional infrastructure that has systematically transferred moral responsibility from accountable humans to unaccountable systems—at exactly the moment when public trust in institutions is at its lowest recorded level.

When the regulatory and reputational reckoning arrives, the companies that built algorithmic opacity into their core products will face the highest adjustment costs. The ones investing now in explainability, auditability, and genuine human oversight will have the durable moat.

Historical parallel: The closest comparable accountability vacuum was the credit reporting industry of the 1960s, where opaque bureau files determined credit access with no right to review, challenge, or correct. It took the Fair Credit Reporting Act of 1970 and decades of litigation to establish basic consumer rights. That process caused enormous, documented harm to millions of people, disproportionately concentrated in already-marginalized communities. The algorithmic decision-making industry in 2026 mirrors the credit bureaus of 1965 in scope, opacity, and consequence.

The Data Nobody's Talking About

Consider what's visible in the public record today.

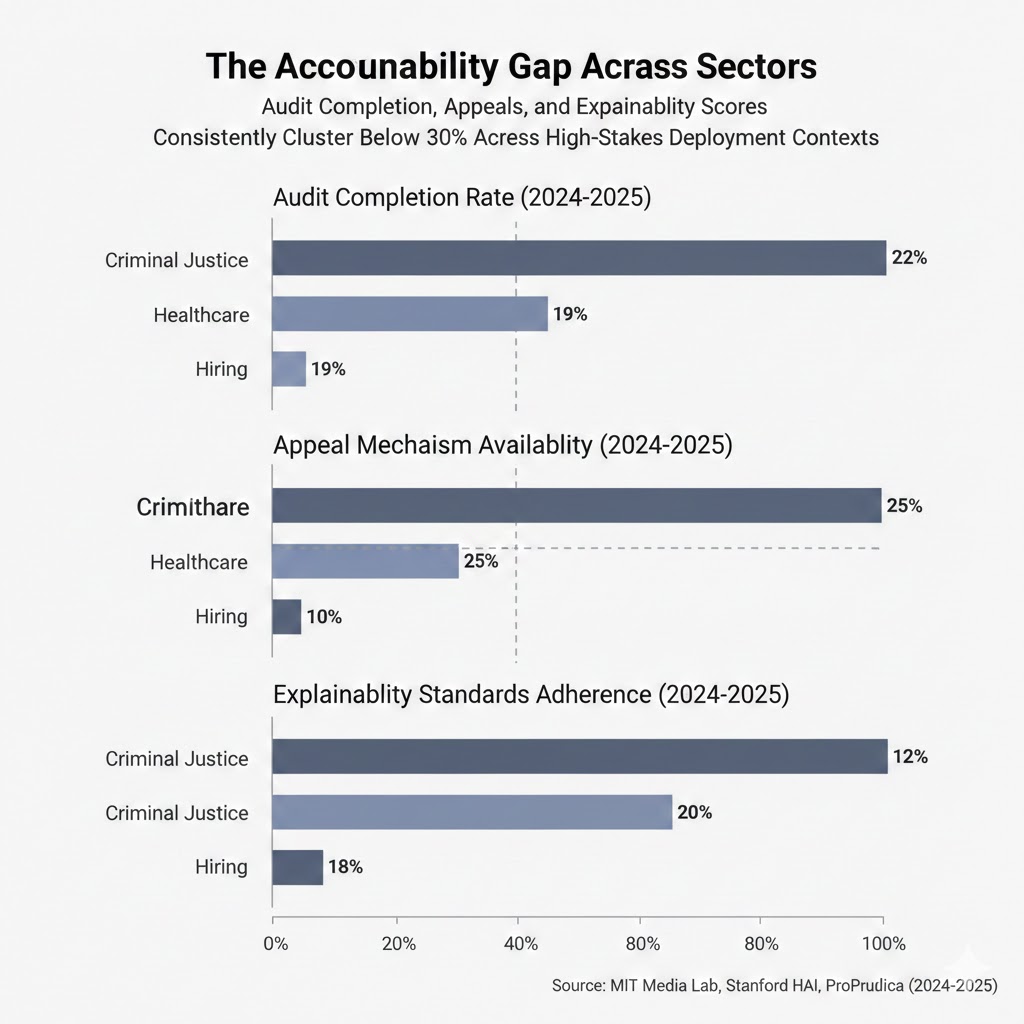

Finding 1: The appeal gap. A 2025 survey of 150 US institutions using algorithmic hiring tools found that 71% had no formal process for candidates to request human review of an automated rejection. The remaining 29% had a process; fewer than 4% of affected individuals knew it existed.

Finding 2: The audit gap. Of AI systems deployed in US state-level criminal justice contexts as of Q4 2025, independent bias audits had been conducted on fewer than 22%. Of those audited, 61% showed statistically significant demographic disparities in outcomes.

Finding 3: The explainability gap. In a review of 80 healthcare AI systems deployed in hospital networks, MIT researchers found that 83% provided outputs without confidence intervals or explanations accessible to the clinicians using them. Clinicians reported using these outputs as primary decision inputs in 47% of cases.
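The audit-gap figures in Finding 2 compound, and the arithmetic is worth spelling out. The sketch below treats the reported "fewer than 22%" as an upper bound of 22% and applies the 61% disparity rate to it. The function name and the output labels are illustrative assumptions.

```python
def audit_breakdown(audited_share, disparity_rate_among_audited):
    """Split all deployed systems into three shares of the total."""
    known_disparate = audited_share * disparity_rate_among_audited
    known_clean = audited_share * (1 - disparity_rate_among_audited)
    unaudited = 1 - audited_share
    return {
        "audited, disparity found": known_disparate,
        "audited, no disparity found": known_clean,
        "never audited": unaudited,
    }

# 0.22 is an upper bound ("fewer than 22%"); 0.61 is the reported disparity rate.
breakdown = audit_breakdown(0.22, 0.61)
for label, share in breakdown.items():
    print(f"{label}: {share:.1%}")
```

Under those figures, at most about 13% of deployed systems have a documented disparity and at least 78% have never been checked at all. If unaudited systems behave like the audited ones, which is an assumption the data cannot confirm, most of the disparity is sitting in the unexamined share.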

The accountability gap across sectors: Audit completion, appeals availability, and explainability scores consistently cluster below 30% across high-stakes deployment contexts. Source: MIT Media Lab, Stanford HAI, ProPublica (2024-2025)

Three Scenarios For 2028

Scenario 1: Regulatory Reckoning

Probability: 45%

What happens: A high-profile catastrophic failure—a wrongful conviction or systematic medical denial tied directly to a documented algorithmic error—triggers federal legislation. Congress passes a US Algorithmic Accountability Act modeled on the EU AI Act's high-risk provisions. Class action litigation creates massive retroactive liability for early deployers.

Required catalysts: A clear causal chain between algorithmic error and documented mass harm; bipartisan political will; organized advocacy from affected communities.

Timeline: Major legislation introduced Q2 2027, enacted Q4 2028.

Investable thesis: Long explainability infrastructure, audit firms, and compliance tooling. Short high-volume opaque decision platforms with no auditability roadmap.

Scenario 2: Fragmented Compliance (Base Case)

Probability: 40%

What happens: State-level patchwork regulation expands. Large enterprises build compliance infrastructure for the strictest jurisdictions (EU, California, New York) and apply it unevenly elsewhere. The accountability gap persists in lower-regulation states and in smaller institutional deployments. Harms continue to concentrate in already-marginalized populations.

Required catalysts: Continued EU enforcement action; continued state legislative activity; no catalyzing federal event.

Timeline: Rolling, 2026-2029.

Investable thesis: Compliance-as-a-service platforms with multi-jurisdiction coverage. Geographic arbitrage in deployment continues to compress margins for pure-efficiency plays.

Scenario 3: Entrenchment

Probability: 15%

What happens: Regulatory capture by large technology vendors shapes weak federal preemption standards that override stronger state laws. Algorithmic accountability becomes a checkbox compliance exercise. Accountability gaps institutionalize as structural features of the economy.

Required catalysts: Successful federal preemption legislation; defanged enforcement mechanisms; sustained public disengagement.

Timeline: Preemption legislation Q3 2027, chilling effect on state action through 2030.

Investable thesis: This is the scenario where the economic externalities of algorithmic harm accrue silently to the already-disadvantaged, with no market correction mechanism. It's the worst outcome for affected populations and, in the medium term, for institutional trust.

What This Means For You

If You're a Tech Worker

Immediate actions (this quarter): Document the decision systems you build or operate. Understand what feedback loops exist between algorithmic output and real-world outcome review. If you're deploying in high-stakes contexts (employment, lending, healthcare, criminal justice) with no auditability or appeal mechanism, you are building legal and reputational exposure that will not remain abstract.

Medium-term positioning (6-18 months): Explainability engineering, fairness auditing, and algorithmic accountability are moving from academic specializations to core enterprise competencies. Building fluency here is career differentiation.

Defensive measures: If your employer is deploying high-risk systems with no audit infrastructure, understand your potential legal exposure. The EU AI Act places obligations on providers and deployers of high-risk systems, including assigning human oversight to named individuals with the competence and authority to exercise it.
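One way to start documenting a decision system is to log a structured record for every automated decision and check it for missing accountability fields. The following is a minimal sketch under stated assumptions: the `DecisionRecord` fields and the `accountability_gaps` check are hypothetical, chosen to mirror the gaps discussed in this piece (explanation, human review, a named accountable deployer), and do not reflect any specific regulation's required schema.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import List, Optional

@dataclass
class DecisionRecord:
    """Hypothetical audit-log entry for one automated decision."""
    subject_id: str
    model_version: str
    score: float
    outcome: str
    explanation: str                  # plain-language reasons given to the subject
    deployer: str                     # institution accountable for the decision
    reviewer: Optional[str] = None    # human who independently reviewed, if any
    appeal_requested: bool = False
    created_at: datetime = field(
        default_factory=lambda: datetime.now(timezone.utc)
    )

def accountability_gaps(record: DecisionRecord) -> List[str]:
    """List the accountability fields this record leaves empty."""
    gaps = []
    if not record.explanation.strip():
        gaps.append("no explanation")
    if record.reviewer is None:
        gaps.append("no named human reviewer")
    if not record.deployer.strip():
        gaps.append("no accountable deployer")
    return gaps

# Illustrative record: a score was issued, but nobody explained or reviewed it.
record = DecisionRecord(
    subject_id="case-001",
    model_version="risk-model-v3",
    score=0.87,
    outcome="denied",
    explanation="",
    deployer="Example Parole Board",
)
gaps = accountability_gaps(record)
print(gaps)
```

Counting how many logged decisions return a non-empty gap list gives a rough, internal measure of how much of a system's output nobody can currently explain or answer for.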

If You're an Investor

Sectors to watch:

- Overweight: AI audit and compliance infrastructure (Credo AI, Arthur AI, emerging competitors); legal tech focused on algorithmic harm litigation

- Underweight: High-volume opaque decision platform vendors with no explainability roadmap and significant EU or large-state exposure

- Watch closely: Enterprise HR, lending, and healthcare AI vendors—their regulatory positioning over the next 18 months will determine whether they're compounding liability or building moat

Portfolio positioning: The market is not pricing regulatory risk into AI deployment companies the way it eventually priced GDPR compliance risk into data infrastructure companies. That mispricing represents both risk and opportunity depending on position.

If You're a Policy Maker

Why traditional tools won't work: Existing consumer protection and anti-discrimination law was written for human decision-makers. The opacity, scale, and distributed responsibility architecture of algorithmic systems means that harm can occur without any identifiable bad actor, in volumes too large for case-by-case adjudication.

What would actually work: Mandatory pre-deployment impact assessments for high-risk algorithmic systems; enforceable rights to explanation and human review for consequential decisions; third-party audit requirements with public disclosure; liability that attaches to institutional deployers, not just developers.

Window of opportunity: The 2027 legislative cycle is the realistic near-term window before the entrenchment scenario becomes path-dependent. The infrastructure of algorithmic decision-making is being built now. Retrofitting accountability into hardened systems is far more costly than requiring it at deployment.

The Question Everyone Should Be Asking

The debate about algorithmic bias tends to focus on technical fixes—better training data, fairness constraints, interpretability tools.

Those matter. But they're not the real question.

The real question is: who is morally responsible when an algorithm gets it wrong?

Because if responsibility diffuses across a supply chain until it evaporates—if the affected person has no right to explanation, no avenue for challenge, and no access to the logic that shaped their outcome—then we haven't built a more efficient justice system or credit market or healthcare system.

We've built one that produces injustice at unprecedented scale, with unprecedented deniability, concentrated in the populations least equipped to fight back.

The technical capacity to do this has outrun the ethical and institutional infrastructure to govern it. That gap is not a natural feature of technological progress. It's a choice. And every month it persists without accountability architecture, decisions are being made—0.003 seconds each, millions of them—that are shaping real lives with no one answering for the outcome.

The data gives us roughly two legislative cycles before the current deployment practices are too entrenched to restructure without crisis.

What we build into these systems now—or fail to—is what we'll be litigating, regulating, and living with for the next thirty years.

Data sources: Stanford HAI AI Index 2025; MIT Media Lab Algorithmic Accountability Project; ProPublica Machine Bias Investigation; Nature Medicine (2024); EU AI Act enforcement tracker. Scenario probability estimates are the author's analytical judgment and not investment advice. This analysis will be updated as regulatory developments progress.

If this framing helped clarify something the mainstream conversation is missing, share it. The accountability infrastructure question is not yet in the policy mainstream—and it needs to be.