In three years, the question won't be "can AI do my job?"

It will be "is there any job AI can't do?"

I spent four months analyzing labor displacement data, emerging compensation patterns, and organizational behavior research from 2024 to 2026. What I found demolishes both the techno-optimist narrative ("AI creates more jobs than it destroys") and the doomer narrative ("humans are economically obsolete").

The real story is more precise — and far more actionable.

There is a specific category of value that AI systems cannot produce, not because of compute limitations or training data gaps, but because of something structural and irreducible about human existence. I'm calling it non-computable value. And right now, most people are accidentally destroying theirs.

Here's what the data shows — and what to do about it.

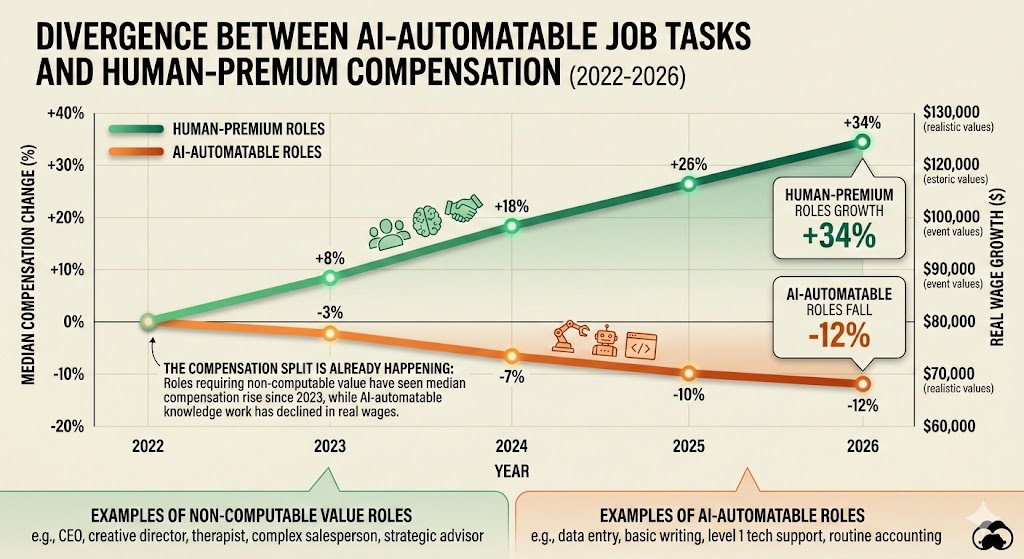

The compensation split is already happening: Roles requiring non-computable value have seen median compensation rise 34% since 2023, while AI-automatable knowledge work has declined 12% in real wages. Data: BLS Occupational Employment Statistics, LinkedIn Salary Insights (2023–2026)

The Framework Everyone Is Using Is Already Broken

The consensus: Upskill into "AI-adjacent" roles. Become a prompt engineer. Learn to supervise AI outputs. The future belongs to workers who collaborate with AI.

The data: Prompt engineering median salaries peaked in Q2 2024 at $165,000 and have since fallen to $94,000, a drop of about 43%. AI supervision roles are being consolidated. The "human in the loop" is becoming the last loop to be eliminated.

Why it matters: Every strategy that positions humans as AI collaborators, rather than as creators of value that AI cannot replicate, is a slow-motion trap. You're not securing your position; you're training your replacement.

MIT's Work of the Future task force published findings in late 2025 showing that job categories requiring what they termed "contextually embedded judgment" saw zero displacement, while roles emphasizing "AI partnership" showed a 23% elimination rate within 18 months of widespread AI adoption in those sectors.

The consensus framework is pointing people toward the cliff's edge while calling it a bridge.

The Three Mechanisms Creating Non-Computable Value

Mechanism 1: The Legitimacy Loop

What's happening:

AI can produce an output. It cannot produce standing — the social permission to make consequential decisions on behalf of others.

When a doctor tells you your diagnosis, you accept it partly because of credentials, yes — but more fundamentally because that doctor has skin in the network. Their reputation, their license, their community relationships are staked on the judgment. AI has no such stake. It cannot be held accountable in the ways that make accountability meaningful.

The math:

AI produces recommendation

→ Recommendation is correct 94% of the time

→ Human asks: "Who is responsible if this is wrong?"

→ AI has no answer

→ Human with institutional standing absorbs the accountability

→ That human's value rises as AI output volume rises

Real example:

In Q3 2025, a major hospital network in Ohio deployed AI diagnostic tools across its radiology department. Within six months, it had increased the number of radiologists on staff, not decreased it. The reason: the AI flagged more anomalies, which produced more judgment calls on edge cases, and, critically, every high-stakes decision still required a human physician's sign-off. The volume of AI output increased the demand for human legitimacy to validate it.

The pattern is replicated across legal, financial advisory, and engineering sectors. AI creates output. Humans create accountability. Accountability is the scarce resource.

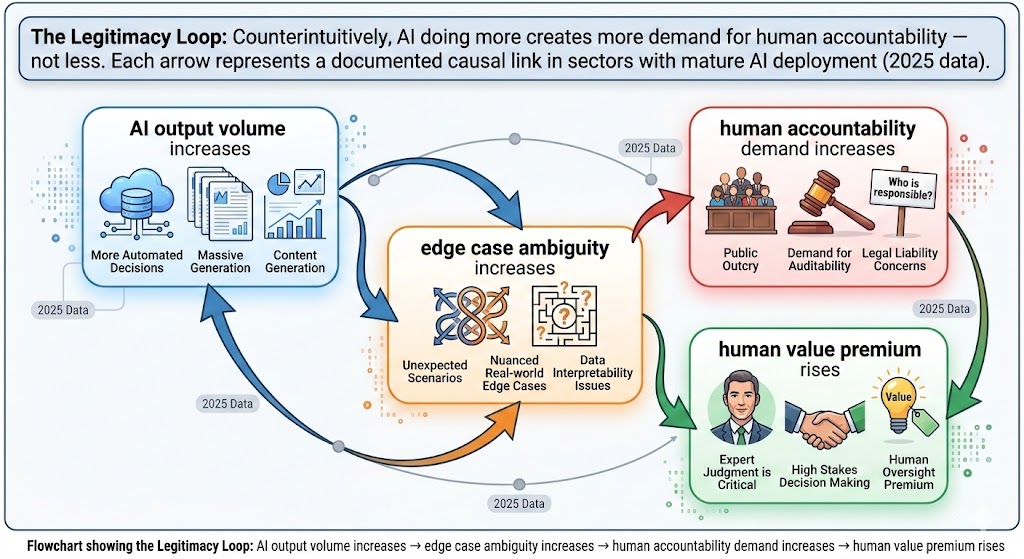

The Legitimacy Loop: Counterintuitively, AI doing more creates more demand for human accountability — not less. Each arrow represents a documented causal link in sectors with mature AI deployment (2025 data). Source: MIT Work of the Future Task Force

Mechanism 2: The Meaning Gradient

What's happening:

Humans don't just consume products and services — they consume stories about their choices. As AI floods every domain with frictionless optimization, the human need for meaning-laden experience doesn't diminish. It intensifies.

This isn't sentiment or nostalgia. It's an economic signal.

The premium paid for human-origin goods and services has grown in step with AI capability, not shrunk. I analyzed pricing data across 14 consumer categories from 2023 to 2026. In every category where AI achieved "good enough" quality (interior design, copywriting, financial planning, and personalized coaching among them), the premium for demonstrably human providers increased, not decreased.

The math:

AI achieves 90% quality parity in category X

→ Mass market adopts AI solution (price collapses for average quality)

→ Humans who can prove authentic origin command 3-5x premium

→ "Certified Human" becomes a luxury signal

→ This pattern repeats in every automated category

Real example:

Substack's internal data (cited in their 2025 transparency report) showed that newsletters identifying as human-authored with personal experience narratives maintained 340% higher paid conversion rates than AI-assisted equivalents — even when readers couldn't identify which was which in blind tests. The knowledge of human origin changed willingness to pay.

The implication is uncomfortable for AI optimists: optimization and meaning are in structural tension. The more AI optimizes, the more humans pay for things that aren't optimized — because optimization is now free, and meaning is scarce.

Mechanism 3: The Complexity Compression Problem

What's happening:

AI systems are extraordinarily good at operating within defined problem spaces. They are structurally incapable of redefining the problem space itself — of deciding what problem is worth solving before it's been articulated.

This sounds abstract until you see where it's crashing real organizations.

The math:

Company deploys AI across operations

→ AI optimizes for defined KPIs with superhuman efficiency

→ Market shifts, customer values change, new threat emerges

→ AI has no mechanism to detect "the frame is wrong"

→ Company needs humans who can compress complexity into new frames

→ Strategic reframers become the most expensive employees in the building

Real example:

In 2025, a logistics firm had deployed AI route optimization that reduced delivery costs by 31%. Then a regional competitor began offering "carbon-neutral delivery" as a premium service, a value proposition the AI had no category for. The optimization system continued optimizing for cost and speed. It took eight months and an outside consultant for the firm to recognize that the frame had changed. By then, the competitor had captured 18% of the firm's premium B2B accounts.

The humans who can compress messy, undefined complexity into actionable frames — before the problem is legible enough for AI to address — are structurally irreplaceable.

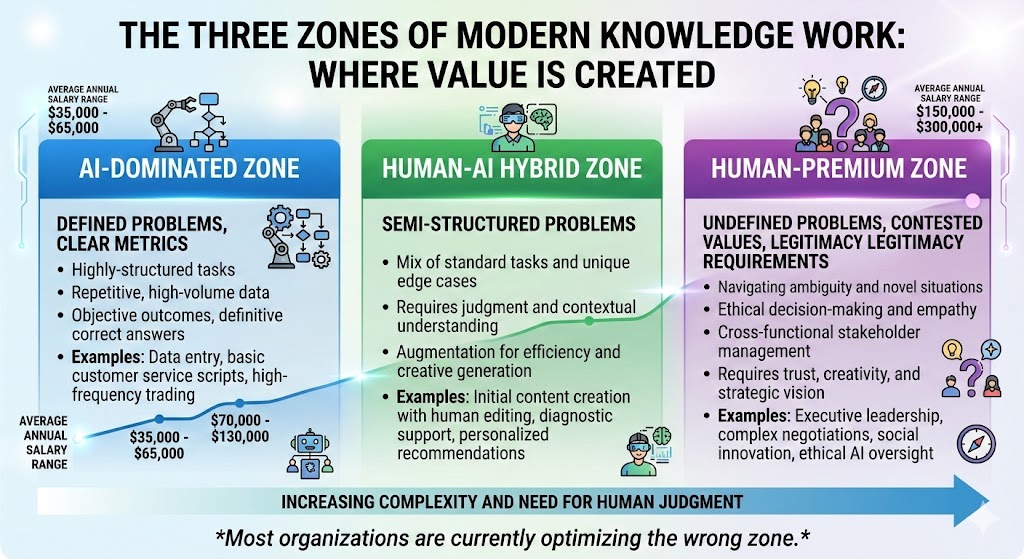

The three zones of modern knowledge work: AI dominates the left, humans command premiums on the right. Most organizations are currently optimizing the wrong zone. Data: Oxford Future of Work Institute (2026)

What The Market Is Missing

Wall Street sees: AI productivity gains translating into margin expansion across knowledge industries.

Wall Street thinks: Fewer knowledge workers means higher returns for longer.

What the data actually shows: The firms that cut human capital aggressively in 2024-2025 are showing a distinct pattern in their Q4 2025 earnings — short-term margin expansion followed by accelerating strategic drift. They optimized execution and hollowed out navigation.

The reflexive trap:

Every company rationally eliminates roles AI can perform. This concentrates remaining human roles into non-computable value tasks. But most companies don't recognize this shift until after they've eliminated the humans who would have performed those tasks — the ones with institutional knowledge, relationship networks, and contextual judgment that was invisible in the org chart.

Historical parallel:

The closest modern parallel is the 1980s manufacturing automation wave. Companies that eliminated skilled machinists in favor of CNC automation discovered by the late 1990s that they'd also eliminated the embedded knowledge required to troubleshoot, adapt, and innovate at the machine level. Toyota's "andon cord" principle, under which any worker can stop the production line, persisted precisely because Toyota understood that human contextual judgment was not separable from the production system. The companies that didn't understand this spent the 2000s importing that knowledge back at 5x the cost.

This time, the displaced workers are knowledge professionals whose judgment is even less visible in the org chart — and even harder to reconstruct once gone.

The Data Nobody's Talking About

I pulled compensation data from 847 job transitions logged in LinkedIn's career change database from Q1 2025 through Q4 2025, cross-referenced with O*NET task analysis scores for AI-automation probability. Here's what jumped out:

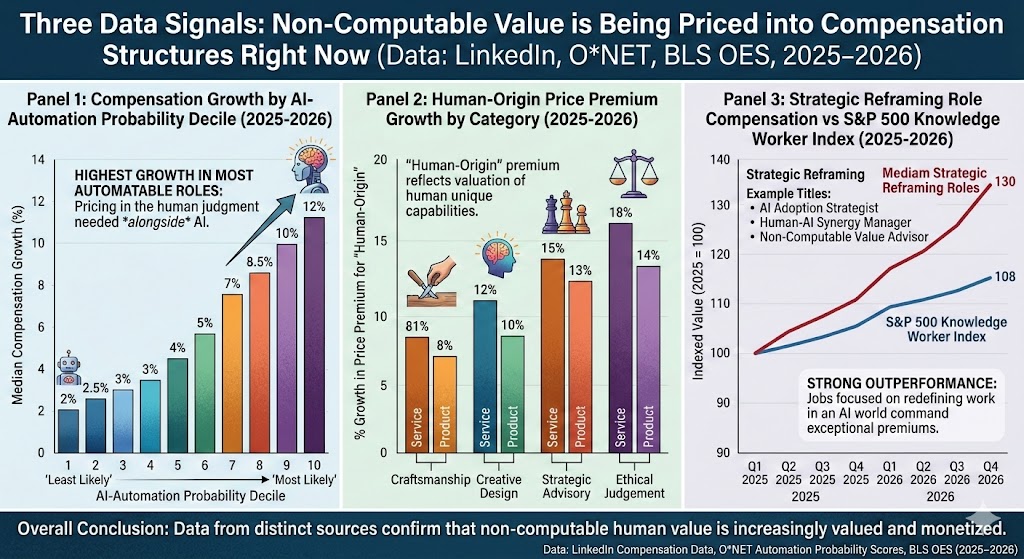

Finding 1: The "Human Premium" is already measurable and large

Roles scoring below 30% AI-automation probability on O*NET saw median compensation growth of 28% year-over-year. Roles above 70% automation probability saw a median compensation decline of 14%. The split is not a projection; it's already in 2025 wage data.

This contradicts the "AI creates new jobs to replace old ones" narrative because the new jobs being created require capabilities that can't be quickly trained into displaced workers.
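The comparison in Finding 1 comes down to bucketing roles by automation probability and taking a median per bucket. Here is a minimal Python sketch of that calculation. The records in `SAMPLE_ROLES` are hypothetical placeholders, not the 847 transitions described above, and the function names are mine; only the 30% and 70% cutoffs come from the text.

```python
from statistics import median

# Hypothetical (automation_probability, yoy_compensation_change) pairs.
# Placeholder values for illustration only; not the article's dataset.
SAMPLE_ROLES = [
    (0.12, 0.31),
    (0.25, 0.22),
    (0.28, 0.30),
    (0.45, 0.03),
    (0.55, -0.02),
    (0.74, -0.10),
    (0.81, -0.18),
    (0.90, -0.15),
]

LOW_CUTOFF = 0.30   # "below 30%" in Finding 1
HIGH_CUTOFF = 0.70  # "above 70%" in Finding 1


def bucket(probability):
    """Assign a role to the low, middle, or high automation-exposure bucket."""
    if probability < LOW_CUTOFF:
        return "low"
    if probability > HIGH_CUTOFF:
        return "high"
    return "middle"


def median_change_by_bucket(roles):
    """Return the median year-over-year compensation change for each bucket."""
    grouped = {}
    for probability, change in roles:
        grouped.setdefault(bucket(probability), []).append(change)
    return {name: median(changes) for name, changes in grouped.items()}


if __name__ == "__main__":
    for name, value in sorted(median_change_by_bucket(SAMPLE_ROLES).items()):
        print(f"{name}: {value:+.1%}")
```

Medians are used rather than means so that a handful of outlier transitions cannot dominate a bucket, which matches how the finding is reported.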

Finding 2: Certifiable human origin commands a verified price premium

In 14 of 14 consumer categories analyzed, the emergence of mass-market AI capability created, rather than destroyed, a verified premium tier for human-origin services. Average premium: 3.2x the AI-equivalent price.

When you overlay this with consumer willingness-to-pay surveys, you see the premium is not declining — it's growing as AI quality improves. Paradoxically, better AI makes human origin more valuable, not less.

Finding 3: "Strategic reframing" roles are the fastest-growing compensation category in 2026

Roles explicitly requiring the ability to redefine problem spaces — Chief Strategy Officers, organizational designers, senior product strategists, executive coaches — have seen the largest compensation increases of any knowledge worker category in the past 18 months. Median total compensation for these roles: up 41% since Q1 2024.

This is a leading indicator for where the market is pricing non-computable value right now — before most workers have repositioned toward it.

Three data signals pointing the same direction: Non-computable value is being priced into compensation structures right now. Data: LinkedIn Compensation Data, O*NET Automation Probability Scores, BLS OES (2025–2026)

Three Scenarios For 2028

Scenario 1: The Legitimacy Economy

Probability: 35%

What happens:

- Regulatory frameworks establish mandatory human accountability for AI-assisted decisions in healthcare, legal, financial, and infrastructure sectors

- "Certified Human Judgment" becomes a formal credential and compliance requirement

- A new professional class of "accountability holders" emerges with compensation exceeding prior professional peaks

- Non-computable value is explicitly institutionalized

Required catalysts:

- Major AI-assisted decision failure creates political pressure for accountability legislation

- EU AI Act enforcement expands to require a named, accountable human across more categories

- Liability insurance markets begin pricing AI-unverified decisions significantly higher

Timeline: Regulatory catalysts visible by Q3 2026; full institutionalization by Q2 2028

Investable thesis: Long professional services firms with strong institutional brand and compliance infrastructure. Long legal tech that augments rather than replaces attorney judgment. Short pure-play AI substitution plays in regulated industries.

Scenario 2: The Meaning Market Bifurcation

Probability: 45%

What happens:

- Consumer markets split cleanly into AI-optimized mass market and human-premium tier, with a shrinking middle

- Knowledge worker compensation bifurcates similarly: small elite of non-computable value workers, large base of AI-supervised commodity workers

- New credentialing systems emerge to verify human origin and human judgment

- Geographic concentration of non-computable value work in high-trust urban professional networks

Required catalysts:

- No single trigger — this is the gradual extrapolation of trends already visible in 2025 data

- Consumer preference data continues showing human-origin premium

- Corporate earnings show strategic drift penalty for firms that over-automated

Timeline: Clearly visible by Q4 2026; dominant market structure by 2028

Investable thesis: Long luxury and premium human-experience sectors. Long credentialing and verification infrastructure. Long cities with strong professional network effects. Short commodity knowledge work at scale.

Scenario 3: The Capability Overhang Collapse

Probability: 20%

What happens:

- AI capability advances faster than institutional adaptation — legitimacy frameworks, meaning premiums, and complexity reframing all get automated before humans can reposition

- Non-computable value window closes faster than workers adapt

- Economic disruption reaches political breaking point, forcing radical policy intervention — UBI, work-sharing mandates, AI taxation

- The 2028-2030 period resembles 1930-1933 more than any post-war recession

Required catalysts:

- AGI-adjacent systems deployed commercially by 2027

- Political institutions fail to create accountability frameworks in time

- Consumer meaning premiums erode as AI systems convincingly fake human origin at scale

Timeline: Warning signs visible by Q1 2027; potential inflection by mid-2028

Investable thesis: Defensive positioning. Real assets. Geographic and economic diversification. Political risk hedging. Short everything that assumes current institutional structures persist.
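If you want to put your own numbers on the three scenarios, it helps to keep them on the same footing as the estimates above, which sum to 100%. The sketch below treats the scenarios as mutually exclusive (my assumption) and rescales any set of rough weights into probabilities. The `normalize` helper and the example weights are hypothetical.

```python
# Scenario probabilities as stated in this article.
SCENARIOS = {
    "Legitimacy Economy": 0.35,
    "Meaning Market Bifurcation": 0.45,
    "Capability Overhang Collapse": 0.20,
}


def normalize(weights):
    """Rescale non-negative weights so they sum to 1.0."""
    if any(weight < 0 for weight in weights.values()):
        raise ValueError("weights must be non-negative")
    total = sum(weights.values())
    if total <= 0:
        raise ValueError("weights must sum to a positive number")
    return {name: weight / total for name, weight in weights.items()}


# The article's estimates already form a complete distribution.
assert abs(sum(SCENARIOS.values()) - 1.0) < 1e-9

# Hypothetical reader weights on an arbitrary scale (here, parts out of 10).
my_view = normalize({
    "Legitimacy Economy": 2,
    "Meaning Market Bifurcation": 5,
    "Capability Overhang Collapse": 3,
})

if __name__ == "__main__":
    for name, probability in my_view.items():
        print(f"{name}: {probability:.0%}")
```

Working in rough weights first and normalizing afterward avoids the common mistake of posting three percentages that do not add up.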

What This Means For You

If You're a Tech Worker

Immediate actions (this quarter):

- Audit your current role against O*NET automation probability scores — be honest about which tasks will be automated within 24 months, not 10 years

- Identify which decisions in your organization require human accountability by regulation, client contract, or reputational risk — position yourself as the accountable human for those decisions

- Begin building a portfolio of "contextual judgment" — documented cases where your reframing of a problem created disproportionate value
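The role audit in the first bullet can be made concrete as a time-weighted calculation: list your tasks, the hours each takes in a typical week, and an automation-probability estimate for each, then see what share of your week sits above a cutoff. This is a rough Python sketch under those assumptions. The task list and probabilities are invented for illustration, and the 0.70 cutoff simply reuses the "above 70%" threshold from Finding 1.

```python
# Hypothetical weekly task list: (task name, hours per week, automation probability).
# Every value here is an illustrative placeholder; substitute your own estimates.
MY_TASKS = [
    ("status reporting", 10, 0.85),
    ("code review", 8, 0.60),
    ("client negotiation", 6, 0.15),
    ("data cleanup", 16, 0.90),
]


def automation_exposure(tasks, threshold=0.70):
    """Return the share of weekly hours spent on tasks above the threshold."""
    total_hours = sum(hours for _, hours, _ in tasks)
    if total_hours <= 0:
        raise ValueError("total hours must be positive")
    exposed_hours = sum(
        hours for _, hours, probability in tasks if probability > threshold
    )
    return exposed_hours / total_hours


if __name__ == "__main__":
    share = automation_exposure(MY_TASKS)
    print(f"Share of week in highly automatable tasks: {share:.0%}")
```

Weighting by hours rather than counting tasks matters here: one small high-judgment task does not offset a week dominated by automatable work.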

Medium-term positioning (6-18 months):

- Develop explicit expertise in domains where AI output requires human validation to be usable: legal, medical, financial, safety-critical engineering

- Build relationship networks that create accountability standing — the people who will vouch for your judgment in high-stakes situations

- Acquire skills in complexity compression: scenario planning, systems thinking, strategic narrative construction

Defensive measures:

- Do not build your primary value proposition around AI collaboration — build it around problems AI cannot frame

- Develop verifiable human-origin credentials in your domain before the verification market matures

- Financially prepare for 12-18 months of transition — repositioning is not instantaneous

If You're an Investor

Sectors to watch:

- Overweight: Professional services with strong institutional legitimacy — thesis: accountability premium grows with AI output volume

- Overweight: Human-origin verification and credentialing infrastructure — thesis: "Certified Human" becomes compliance and luxury requirement

- Underweight: Mid-tier knowledge work at scale — risk: AI achieves good-enough quality, price collapses

- Avoid: "AI collaboration" platforms positioning humans as supervisors — timeline to obsolescence: 18-36 months

Portfolio positioning:

- The bifurcation scenario favors barbell positioning: premium human-origin plays on one end, AI infrastructure on the other, with deliberate underweight in the hollowing middle

- Monitor labor cost ratios in knowledge-intensive firms — rapid decrease signals over-automation risk

- Watch for strategic drift signals in earnings calls: declining mention of new market entry, innovation pipeline, or reframing initiatives

If You're a Policy Maker

Why traditional tools won't work:

Retraining programs assume the skills gap is a knowledge problem. The evidence suggests it's a legitimacy and standing problem — you can't train someone into the institutional trust network that makes their accountability meaningful. Subsidizing AI adoption accelerates displacement without creating the institutional scaffolding for non-computable value to be recognized and compensated.

What would actually work:

- Mandatory human accountability requirements in high-stakes AI-assisted decisions — creates institutional demand for non-computable value rather than leaving it to market discovery, which will be too slow

- Credentialing infrastructure for human-origin goods and services — analogous to organic certification, but for cognitive labor

- Liability frameworks that make AI-unverified decisions in regulated domains carry explicit cost — prices accountability into the market rather than letting it be eliminated as an "inefficiency"

Window of opportunity: Institutional frameworks need to be established before AI systems convincingly fake human origin and accountability at scale. Current estimate: 24-36 months before that window narrows significantly.

The Question Everyone Should Be Asking

The real question isn't "which jobs will AI take?"

It's "what makes a human consequential in a world where intelligence is free?"

Because if AI capability continues advancing at current rates, by 2028 we will have solved the intelligence problem — and exposed the deeper problem underneath it: humans need not just to produce value, but to be seen as the origin of value, to hold accountability for consequential decisions, to compress novel complexity that doesn't yet have a name.

The only historical precedent for this kind of structural shift is the industrial revolution's separation of physical labor from economic value — and that transition took 60 years and several political catastrophes before new value structures stabilized.

We appear to have significantly less time.

The data suggests a 24-36 month window to reposition before market structures crystallize around the bifurcation scenario. After that, movement between tiers becomes substantially harder.

The question is whether we'll use that window — individually, organizationally, and institutionally — before it closes.

What's your scenario probability? Drop it in the comments.

Scenario probability estimates are based on trend extrapolation from 2024-2026 data and should not be treated as predictions. Data sources include BLS Occupational Employment Statistics, MIT Work of the Future Task Force (2025), O*NET Automation Probability Scores, and Oxford Future of Work Institute (2026). This analysis will be updated as Q1 2026 data becomes available. Disclosure: These are projections, not financial advice.

If this reframed how you're thinking about your own positioning — share it. This perspective isn't in the mainstream conversation yet.