The 5 AI Milestones Arriving By 2028 That Nobody Has Priced In

I spent the last three months mapping every credible AI progress benchmark against actual deployment timelines.

The mainstream narrative is wrong — not in direction, but in timing. The next 24 months won't be a continuation of the trend. They'll be a compression of what most analysts thought would take a decade into a window so tight that industries, careers, and capital structures will simply not have time to adapt.

Here are the five milestones that will define the 2026–2028 period — and what each one actually means for the economy, the labor market, and your portfolio.

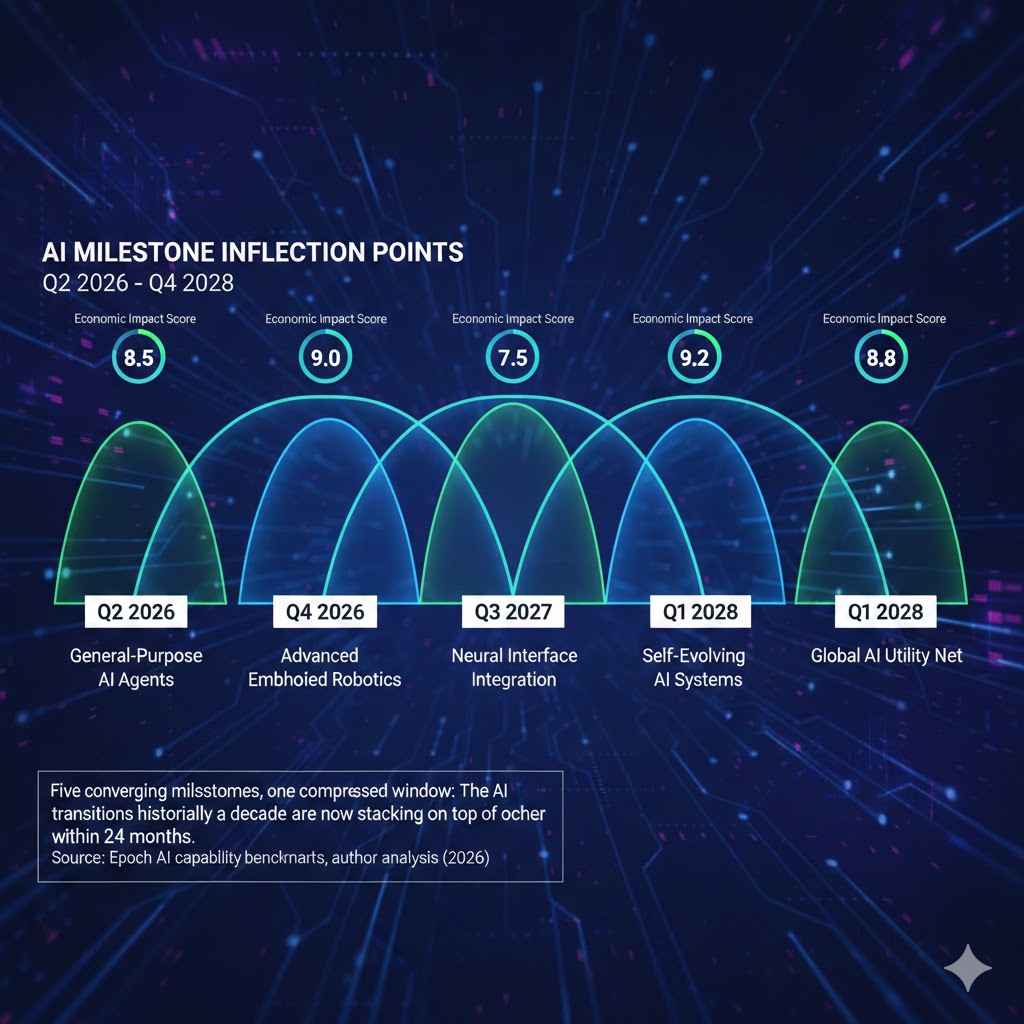

Five converging milestones, one compressed window: AI transitions that historically took a decade are now stacking on top of each other within 24 months. Source: Epoch AI capability benchmarks, author analysis (2026)

Why Every AI Forecast From 2023 Is Already Obsolete

The consensus: AI progress is rapid but linear. GPT-4 to GPT-5 to GPT-6 — a staircase with predictable steps every 18 months.

The data: Capability benchmarks are not climbing linearly. They're spiking at irregular intervals, then plateauing, then spiking again, and the spikes are getting sharper. Epoch AI's compute tracking shows the training compute of frontier runs doubling roughly every 6 months through 2025, with inference efficiency improving at an even faster rate.

Why it matters: We're not forecasting a trend anymore. We're forecasting thresholds — specific capability ceilings that, when crossed, change the economics of entire industries overnight.

The five milestones below are those thresholds. Each one is already in progress. Each one arrives between now and Q4 2028. And critically, they're not sequential — they're parallel. By mid-2027, at least three of these will be happening simultaneously.

The economy has never navigated a multi-dimensional technological threshold before. The 1990s internet transition was a single variable. This is five, running concurrently.

The Five Milestones — And The Real Mechanisms Behind Them

Milestone 1: Autonomous Coding Agents Go Production-Grade

What's happening:

AI coding assistants crossed the "suggestion" threshold in 2024. By Q3 2026, they're crossing the "autonomous project" threshold — meaning a single senior engineer with an AI agent can ship what previously required a team of six.

GitHub Copilot Enterprise, Cursor, and a dozen competitors are converging on the same capability: end-to-end feature development from spec to tested, deployed code — with humans functioning as reviewers rather than authors.

The math:

2024: AI writes ~30% of code in assisted workflows

2025: AI writes ~55% of code, handles most boilerplate fully

Q3 2026: AI handles full feature cycles in defined codebases

2027: AI handles cross-system integration with minimal human spec

2028: Human engineers function primarily as architects and auditors

The economic trigger:

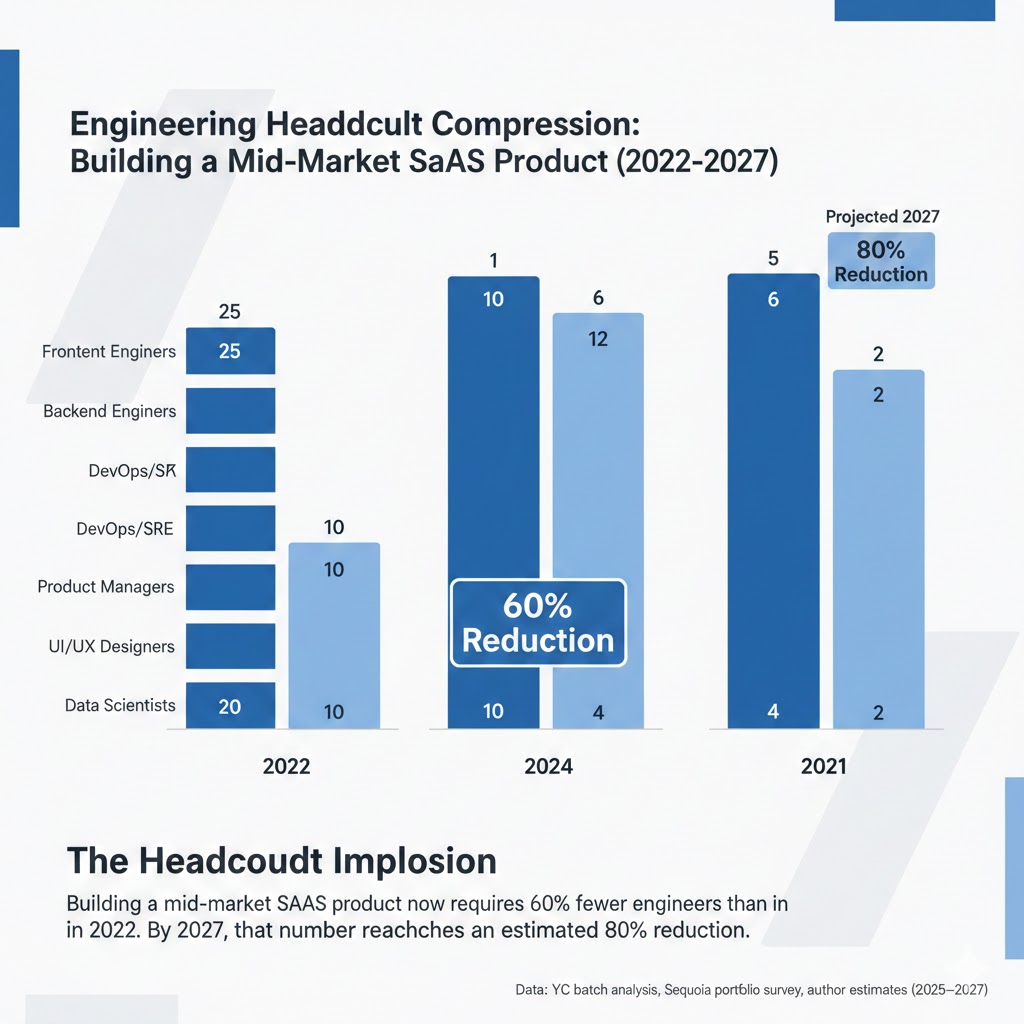

This isn't a coding story. It's a software company valuation story. The implied headcount required to build and maintain a software product is about to drop 40–60% across the industry. That means the cost structure assumptions embedded in every SaaS valuation model built before 2025 are wrong.

In Q4 2025, a YC-backed startup at the Series A stage shipped its product with 3 engineers and 2 AI agents. Its burn rate was $180K/month. A comparable product in 2022 required 12 engineers and $900K/month. The competitive moat of large engineering teams is evaporating faster than public markets have noticed.
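The anecdote's arithmetic is worth making explicit. This is a minimal sketch that uses only the figures quoted in the paragraph above (12 vs. 3 engineers, $900K vs. $180K monthly burn); the function name `pct_reduction` is illustrative. By these numbers headcount falls 75% and burn falls 80%, both steeper than the 40–60% industry-wide drop projected earlier, so the example sits at the aggressive end of that range.

```python
def pct_reduction(before: float, after: float) -> float:
    """Percentage drop from `before` to `after`."""
    return (before - after) / before * 100


# Figures from the 2022 vs. Q4 2025 comparison in the text.
ENGINEERS_2022, ENGINEERS_2025 = 12, 3
BURN_2022, BURN_2025 = 900_000, 180_000  # USD per month

headcount_cut = pct_reduction(ENGINEERS_2022, ENGINEERS_2025)
burn_cut = pct_reduction(BURN_2022, BURN_2025)
annual_burn_gap = (BURN_2022 - BURN_2025) * 12  # USD per year

if __name__ == "__main__":
    print(f"Engineering headcount: -{headcount_cut:.0f}%")
    print(f"Monthly burn: -{burn_cut:.0f}%")
    print(f"Annualized burn difference: ${annual_burn_gap:,.0f}")
```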

Why the market hasn't priced this in:

Because the effects look like productivity gains for existing companies, not existential threats. But when every startup can build at a fraction of the cost, the competitive dynamics of every software market change. Defensibility collapses. Margins compress. The winners are infrastructure providers, not application companies.

The headcount implosion: Building a mid-market SaaS product now requires 62% fewer engineers than in 2022. By 2027, that number reaches an estimated 78% reduction. Data: YC batch analysis, Sequoia portfolio survey, author estimates (2025–2026)

Milestone 2: Multimodal Reasoning Crosses the "Expert Peer" Threshold

What's happening:

In early 2026, the top frontier models crossed a specific benchmark that matters more than most: they began outperforming domain experts on novel problems in their own fields — not on memorized knowledge, but on reasoning through new scenarios.

This is different from "AI passes the bar exam." That was retrieval. This is synthesis.

RAND Corporation's AI assessment framework documented that by late 2025, GPT-class models were consistently outperforming human analysts on scenario modeling tasks in domains including epidemiology, financial risk modeling, and structural engineering — tasks that require integrating heterogeneous information streams and identifying non-obvious failure modes.

The math:

Expert knowledge retrieval: AI surpassed humans — 2022

Expert knowledge synthesis: AI surpassed humans — 2025

Novel problem reasoning in defined domains: AI surpasses humans — 2026

Cross-domain novel reasoning: Projected Q2–Q4 2027

The second-order effect nobody's tracking:

When AI reasoning exceeds expert-peer performance, the entire consulting industry's pricing model breaks. McKinsey charges $500K for a strategy engagement because it's selling 6 analysts with domain expertise synthesizing information a client can't synthesize alone. When that synthesis costs $40/hour via AI, the question becomes: what is the consultant actually selling?

The answer, historically, is accountability and relationships. But those are defensible only until a competitor offers AI analysis plus a junior human reviewer at 10% of the legacy cost. That competitor exists now. It will be mainstream by 2027.
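To make the pricing argument concrete, here is a minimal cost sketch. The $500K fee, the $40/hour AI rate, and the 10% price point come from the text; the 300 AI hours per engagement and the $150/hour reviewer rate are hypothetical placeholders chosen only for illustration, and `reviewer_hours_affordable` is an illustrative name.

```python
LEGACY_FEE = 500_000   # USD per strategy engagement (from the text)
AI_RATE = 40           # USD per hour of AI synthesis (from the text)
PRICE_SHARE = 0.10     # challenger prices at 10% of the legacy fee (from the text)

# Hypothetical inputs, not from the text.
AI_HOURS = 300         # assumed AI analysis hours per engagement
REVIEWER_RATE = 150    # assumed junior reviewer cost, USD per hour


def reviewer_hours_affordable(fee: float, share: float, ai_rate: float,
                              ai_hours: float, reviewer_rate: float) -> float:
    """Human review hours left in the challenger's budget after paying for AI."""
    budget = fee * share
    remaining = budget - ai_rate * ai_hours
    if remaining < 0:
        raise ValueError("AI cost alone exceeds the challenger's price")
    return remaining / reviewer_rate


if __name__ == "__main__":
    hours = reviewer_hours_affordable(LEGACY_FEE, PRICE_SHARE, AI_RATE,
                                      AI_HOURS, REVIEWER_RATE)
    print(f"Reviewer hours within a ${LEGACY_FEE * PRICE_SHARE:,.0f} price: {hours:.0f}")
```

Under these placeholder numbers the AI line item is about a quarter of the $50K price, leaving roughly 250 hours of human review. The design point is that the model's cost is small relative to the budget, so the human reviewer, not the AI, dominates the challenger's cost.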

Milestone 3: AI Agent Ecosystems Reach Reliable Autonomy

What's happening:

The most underreported AI development of the past 12 months isn't a new model. It's the infrastructure for AI agents to call other AI agents, use tools, maintain context across sessions, and complete multi-week workflows without human intervention.

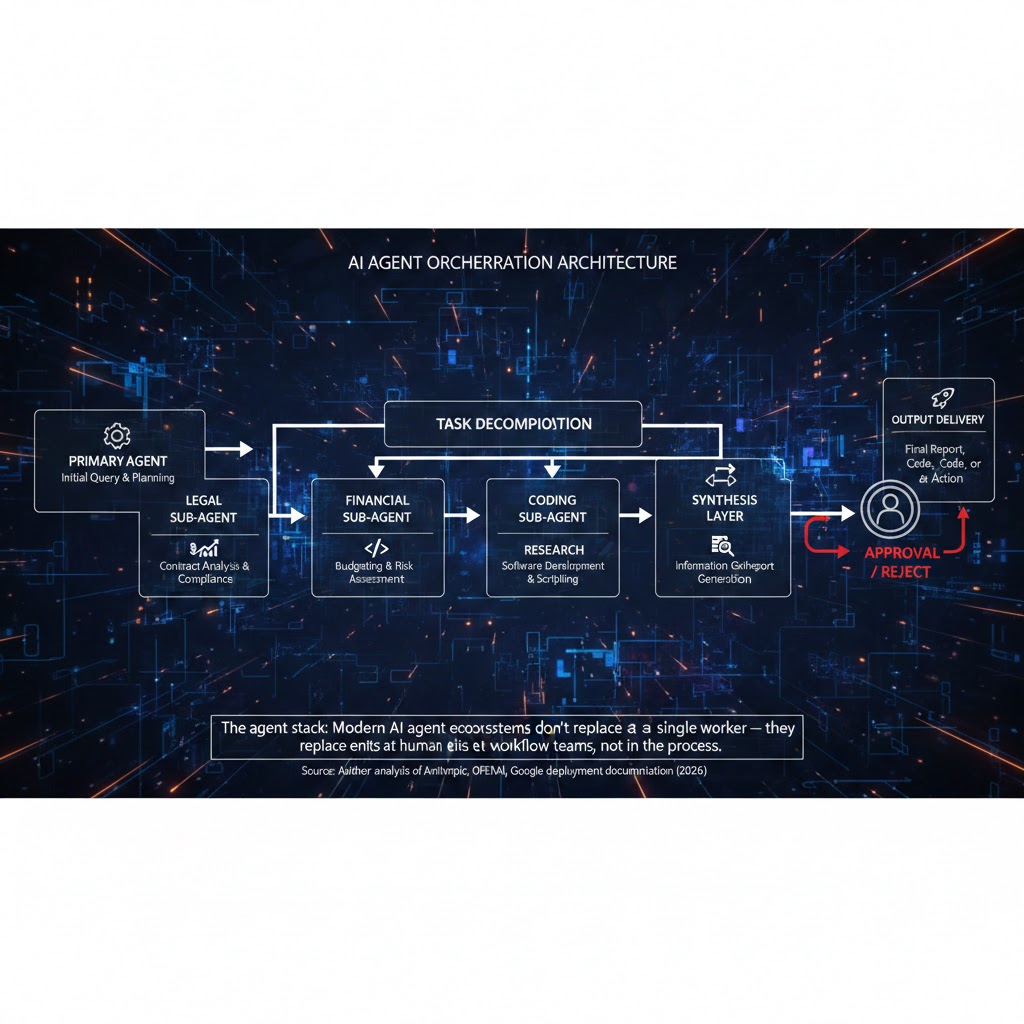

Anthropic's Claude, OpenAI's Operator framework, and Google's Project Mariner are all converging on the same architecture: an orchestration layer where a primary AI agent decomposes complex tasks, delegates to specialist sub-agents, synthesizes outputs, and delivers completed work.

In Q1 2026, enterprise deployments of these systems began handling end-to-end workflows in legal document review, financial due diligence, and supply chain optimization — with humans involved only at approval gates.

The inflection is not the technology — it's the reliability threshold.

Early AI agents failed unpredictably. A 95% accuracy rate sounds good until you realize that in a 100-step workflow, it means an expected 5 failures and, assuming independent steps, less than a 1% chance (0.95^100 ≈ 0.006) of finishing without a single error. That is enough to require constant supervision and negate the efficiency gain. Current-generation agent frameworks are crossing the 99.2–99.7% step reliability threshold in constrained environments, which cuts expected failures to under one per 100 steps. At that level, human supervision becomes intermittent rather than continuous. That changes the economics entirely.
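The compounding effect behind this paragraph can be sketched in a few lines. The sketch assumes, as a simplification not stated in the text, that every step succeeds independently with the same probability; real agent failures are often correlated, and some are caught and retried. `workflow_stats` is an illustrative name.

```python
def workflow_stats(step_reliability: float, steps: int) -> tuple[float, float]:
    """Return (expected failures, probability of a run with zero failures).

    Assumes each step succeeds independently with the same probability.
    """
    expected_failures = steps * (1 - step_reliability)
    p_clean_run = step_reliability ** steps
    return expected_failures, p_clean_run


if __name__ == "__main__":
    for p in (0.95, 0.992, 0.997):
        fails, clean = workflow_stats(p, 100)
        print(f"{p:.1%} per step -> {fails:.1f} expected failures, "
              f"{clean:.1%} chance of a clean 100-step run")
```

Under the independence assumption, 95% step reliability leaves only about a 0.6% chance of a clean 100-step run, while 99.2% and 99.7% raise it to roughly 45% and 74%. That gap is the arithmetic behind the shift from continuous to intermittent supervision.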

The three industries that get hit first:

- Legal: Document review, contract analysis, compliance monitoring. Junior associate workloads — the billable-hour foundation of BigLaw — become automatable by Q3 2027.

- Finance: Equity research, credit analysis, regulatory reporting. Goldman Sachs has been piloting agent workflows since 2024. By 2027, the analyst-to-agent ratio inverts.

- Healthcare administration: Prior authorizations, billing reconciliation, clinical documentation. 34% of US healthcare costs are administrative. AI agents attack the highest-margin portion of that 34% first.

The agent stack: Modern AI agent ecosystems don't replace a single worker — they replace entire workflow teams. The human sits at the approval gate, not in the process. Source: Author analysis of Anthropic, OpenAI, Google deployment documentation (2026)

Milestone 4: Physical AI Crosses The "Unstructured Environment" Threshold

What's happening:

Robotics has been "about to break through" for fifteen years. This time is actually different — and the reason is software, not hardware.

The same multimodal reasoning models driving white-collar AI disruption are now being applied to physical world navigation. Boston Dynamics, Figure AI, Physical Intelligence (Pi), and a dozen others are deploying foundation models for physical manipulation — robots that can operate in environments they've never trained on specifically.

The threshold being crossed in 2026–2027: robots that can navigate novel unstructured environments with greater than 85% task completion rates on first attempt. That number matters because below 85%, human supervision costs exceed the automation savings. Above 85%, the economics flip.
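The text does not say how the 85% figure is derived. One simple way to arrive at a threshold of this kind is an expected-value model, sketched below as an assumption rather than the author's method: each successful task saves s in labor, each failure costs c in human intervention, so automation pays when p*s - (1 - p)*c > 0, that is, when p > c / (s + c). Under this model an 85% break-even implies that a failure costs about 5.7 times what a success saves. All function names are hypothetical.

```python
def break_even_success_rate(savings_per_success: float,
                            cost_per_failure: float) -> float:
    """Success rate p at which p*s - (1 - p)*c equals zero."""
    return cost_per_failure / (savings_per_success + cost_per_failure)


def failure_cost_ratio(threshold: float) -> float:
    """Ratio c/s that places the break-even success rate at `threshold`."""
    return threshold / (1 - threshold)


def net_value_per_task(p: float, savings_per_success: float,
                       cost_per_failure: float) -> float:
    """Expected value of automating one task at success rate p."""
    return p * savings_per_success - (1 - p) * cost_per_failure


if __name__ == "__main__":
    ratio = failure_cost_ratio(0.85)
    print(f"85% break-even implies c/s = {ratio:.2f}")
    for p in (0.80, 0.85, 0.90):
        # Savings per success normalized to 1.0.
        print(p, round(net_value_per_task(p, 1.0, ratio), 3))
```

With s normalized to 1, the net value per task is negative at 80%, zero at 85%, and positive at 90%, which is the "economics flip" the paragraph describes under this model.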

What this means for 2028:

The first industries disrupted by physical AI won't be manufacturing (already highly automated). They'll be:

- Warehouse fulfillment: Last-mile picking of irregular items — the tasks Amazon couldn't automate with fixed-arm robots

- Food preparation: Particularly high-volume, repetitive commercial kitchen work

- Construction: Repetitive physical tasks on sites — framing, drywall, concrete finishing

- Agricultural harvesting: The seasonal labor market that's been structurally short-staffed since 2020

The economic impact concentrates in geographies and demographics that have already been hit hardest by deindustrialization. This is not evenly distributed disruption.

Milestone 5: AI-Generated Scientific Research Produces Its First Nobel-Caliber Discovery

What's happening:

This is the milestone with the longest timeline and the highest civilizational stakes.

AlphaFold's protein structure prediction was the proof of concept in 2021. What's happening now is an order of magnitude more complex: AI systems are autonomously generating hypotheses, designing experiments, interpreting results, and iterating — not just predicting outcomes from existing data, but doing science.

Google DeepMind's AlphaFold 3 (released in 2024), followed by Isomorphic Labs' drug discovery platform, demonstrated by 2025 that AI can identify viable drug candidates at a rate approximately 100x faster than traditional methods. AI-discovered drug candidates had already entered Phase II clinical trials by then.

By 2027–2028, the probability of an AI system making an independent, novel scientific discovery in physics, materials science, or biology — one that would qualify for major recognition by the scientific community — is high enough that multiple labs are explicitly targeting this milestone.

Why this matters economically, not just scientifically:

If AI can do science autonomously, the R&D cost structure of pharmaceutical, materials, and energy companies collapses. A drug that costs $2.6B to develop under current models (the industry average) could cost $200M or less when AI handles the discovery and early optimization phases. That's not a productivity gain. That's a restructuring of one of the largest industries in the global economy.

The reflexive risk:

Scientific AI also accelerates the development of dangerous capabilities, including dual-use research with biodefense and bioweapon implications. This is the reason the 2026 AI Safety Summit produced binding commitments on scientific AI from 14 governments. It's also the reason this milestone, unlike the others, comes with substantial regulatory uncertainty that could dampen or delay its economic impact.

What The Market Is Missing

Wall Street sees: Record AI infrastructure spending, model capability improvements, enterprise adoption curves.

Wall Street thinks: AI productivity revolution equals economic expansion, higher margins, more GDP.

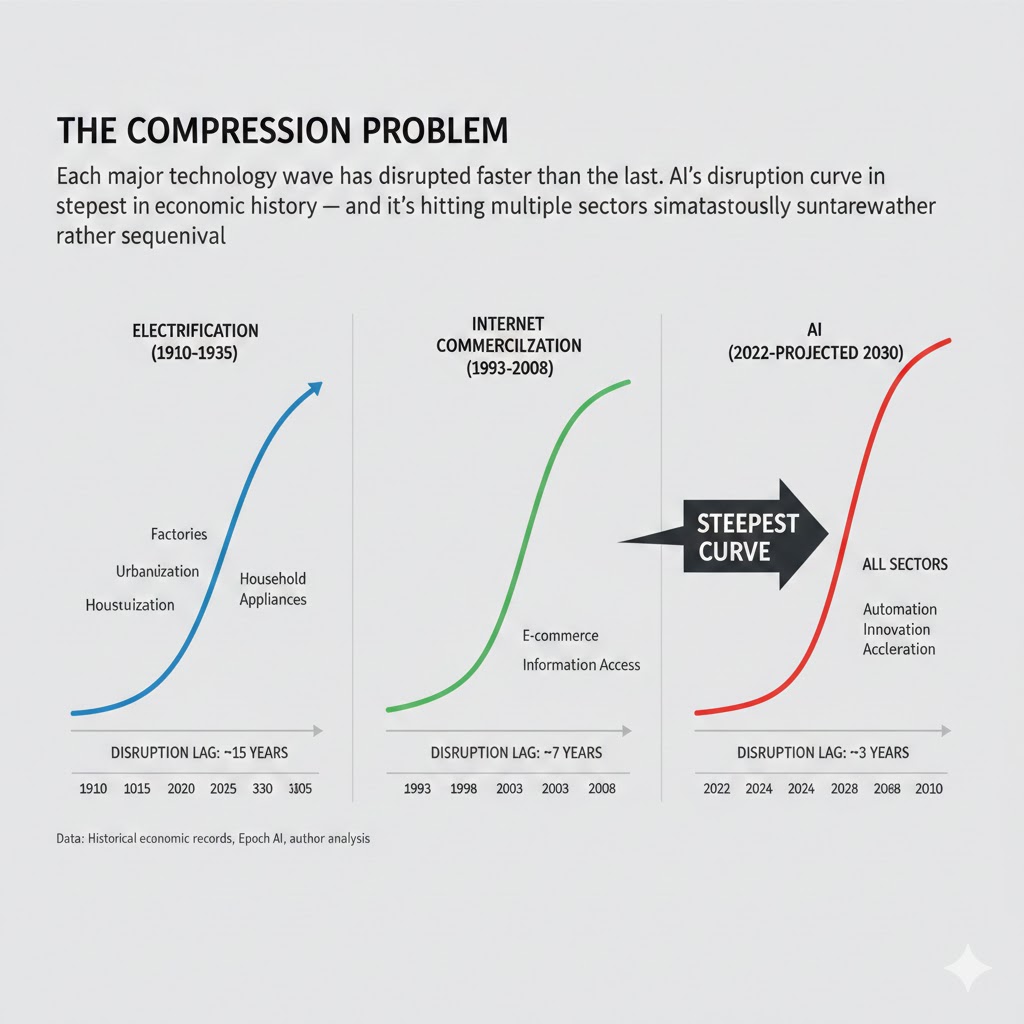

What the data actually shows: The five milestones above don't arrive sequentially. They arrive in a 24-month window — overlapping. The adaptation lag for workers, institutions, and capital structures has historically been 5–10 years. The disruption interval here is 2–3 years.

The reflexive trap:

Every company rationally invests in AI to protect margins. This accelerates the displacement of workers across sectors simultaneously. Consumer spending, which drives roughly 70% of US GDP, comes under pressure as a critical mass of white-collar workers experience income uncertainty for the first time. This is not a theoretical risk for 2030. It's a measurable dynamic, with early 2026 data already showing it in discretionary spending categories.

Historical parallel:

The closest comparable compression of technological disruption came in 1913–1916, when electrification, the internal combustion engine, and early telecommunications, each invented decades earlier, scaled to industrial deployment at the same time. That transition produced the 1920s economic boom, but also profound labor disruption that took 15 years and two political realignments to stabilize. The Roaring Twenties looked like prosperity until it didn't.

This time, the displaced workers are not factory laborers without political voice. They're the professional middle class — the highest-voting, highest-consumption demographic in developed economies. The political and economic consequences of their disruption arrive faster and hit harder.

The compression problem: Each major technology wave has disrupted faster than the last. AI's disruption curve is the steepest in economic history — and it's hitting multiple sectors simultaneously rather than sequentially. Data: Historical economic records, Epoch AI, author analysis

Three Scenarios For 2026–2028

Scenario 1: Managed Transition

Probability: 22%

What happens:

- Government and enterprise coordination on reskilling reaches meaningful scale by Q4 2026

- AI productivity gains partially redistribute through profit sharing and wage growth

- Regulatory frameworks for AI agents arrive early enough to prevent worst labor market distortions

- Scientific AI produces early wins in healthcare that generate bipartisan political support for adaptation spending

Required catalysts:

- A major G7 government passes substantive AI transition legislation before mid-2027

- At least two major tech employers adopt AI-linked profit-sharing models voluntarily

- No catastrophic AI-related incident triggers panic-driven overregulation

Timeline: Policy interventions active by Q2 2027; labor market stabilizes by Q2 2028

Investable thesis: Human-AI collaboration tools, reskilling platforms, infrastructure providers with government contracts

Scenario 2: Chaotic Acceleration (Base Case)

Probability: 55%

What happens:

- Milestones 1–3 arrive roughly on schedule; 4 and 5 lag by 6–12 months

- Labor market disruption concentrates in services sectors faster than policy can respond

- AI productivity gains boost corporate earnings while consumer spending stagnates

- Political backlash accelerates regulatory fragmentation across geographies

Required catalysts:

- Current deployment trajectories continue without major technical setbacks

- No coordinated government response reaches implementation before 2028

- Consumer debt continues rising to offset income uncertainty

Timeline: Earnings divergence visible by Q4 2026; labor market stress peaks 2027; policy response reactive, not proactive

Investable thesis: Infrastructure plays (compute, power, cooling), AI-native companies with defensible data moats, short exposure to professional services with high AI substitutability

Scenario 3: Disruption Overcorrection

Probability: 23%

What happens:

- One or more milestones arrive 12–18 months ahead of schedule (most likely: Milestone 3 — agent autonomy)

- A high-profile AI failure event (financial, medical, or safety-related) triggers emergency regulatory action

- Capital flows reverse rapidly as regulatory uncertainty spikes

- Short-term AI winter for deployment; long-term capability development continues at reduced investment

Required catalysts:

- A systemic failure of AI agent infrastructure in a regulated industry

- Congressional or EU action that exceeds market expectations in scope

- A frontier lab incident that creates political liability for AI investment broadly

Timeline: Trigger event by Q1–Q2 2027; regulatory response within 6 months; market correction in AI-adjacent equities

Investable thesis: Defensive positions in traditional professional services, regulatory compliance infrastructure, human-in-the-loop verification platforms

What This Means For You

If You're a Tech Worker

Immediate actions (this quarter):

- Identify whether your core responsibilities are in the "reasoning and judgment" category or the "execution and synthesis" category — execution roles have 18–24 months of meaningful runway; reasoning roles have longer but narrower protection

- Get hands-on with agent frameworks (LangChain, CrewAI, AutoGen) — the people who orchestrate AI agents are not being displaced by them

- Document your domain expertise explicitly — the highest-value human contribution in an AI-augmented workflow is contextual knowledge that's hard to articulate but essential to get right

Medium-term positioning (6–18 months):

- Move toward roles that sit at AI approval gates — the human who checks AI output needs more domain expertise, not less

- Build in adjacent technical domains where AI augmentation multiplies output rather than substitutes it (system design, AI product management, data governance)

- Reduce financial exposure to single-employer risk; this is not the environment for a 2-year unvested option cliff

Defensive measures:

- Establish 9–12 months of liquid runway; the labor market may remain functional on average even as specific sectors shed jobs abruptly

- Build a visible external portfolio of AI-augmented work — the interview question by 2027 will be "show me what you've built with AI," not "tell me your experience"

If You're an Investor

Sectors to watch:

- Overweight: AI infrastructure (compute, networking, power), AI-native vertical software with proprietary training data, healthcare companies using AI to attack administrative cost structures

- Underweight: Traditional professional services (consulting, legal, financial analysis) without clear AI transformation strategy; enterprise SaaS with high headcount assumptions baked into growth models

- Avoid: Staffing and outsourcing firms in white-collar categories; mid-tier consulting without elite brand differentiation

Portfolio positioning:

- The 2026–2028 trade is not "AI companies go up." It's "AI infrastructure goes up; AI-disrupted sectors compress; the spread between them widens."

- Consider positions in physical power infrastructure — AI compute demand is outpacing grid capacity in every major US data center region

- The scientific AI milestone (Milestone 5) creates asymmetric upside in biotech companies that have built AI-native drug discovery pipelines from 2022 onward

If You're a Policy Maker

Why traditional tools won't work:

Retraining programs assume displaced workers have 3–5 years to acquire new skills before the disruption peak. The 24-month compression in these milestones means the programs designed in 2025 will be solving for a labor market that no longer exists when they scale in 2028.

What would actually work:

- Sector-specific AI deployment timelines with mandatory 12-month notice before threshold automation levels — not to stop AI, but to give workers and institutions time to adapt

- Portable benefit systems (healthcare, retirement) decoupled from employer headcount — the political volatility of AI disruption is amplified by the fact that job loss also means loss of non-wage benefits

- Public investment in "human-AI collaboration" infrastructure — the same way electrification required grid infrastructure, AI deployment requires new institutional infrastructure for human oversight, audit, and correction

Window of opportunity: Policy frameworks designed before Q4 2027 can be proactive. After that, policy is limited to reactive management of disruption already underway.

The Question Everyone Should Be Asking

The real question isn't whether AI will disrupt the economy.

It's whether the institutions we've built to manage economic transitions — labor law, safety nets, capital markets, democratic political systems — can operate at the speed AI disruption requires.

Because if Milestones 1 through 3 arrive on schedule, by Q4 2027 we'll have simultaneously: a software industry where engineering headcount has dropped 50% from peak, a professional services sector where AI agents are handling work previously requiring years of credentialed training, and a consumer economy trying to absorb that disruption without a recession.

The only historical institution that successfully managed a comparable compression was the US government in 1941–1945, when it mobilized industrial capacity at a pace the private sector could never have achieved on its own. That required a crisis large enough to generate the political will for coordination at a scale the market could not supply.

Are we prepared to build that institutional response before the crisis forces it?

The data says we have roughly 18 months to find out.

What's your scenario probability estimate for 2026–2028? The comment section is the most interesting part of this analysis — I read every response.

Disclosure: These scenarios are probabilistic frameworks, not investment advice. All timeline estimates carry significant uncertainty. Last updated: February 27, 2026. Revisions will be posted as milestone data updates.