The $4.7 Trillion Gap Wall Street Hasn't Priced In

In twenty-four months, the majority of knowledge work that survives AI disruption won't survive because it involves thinking. It will survive because it involves seeing.

Not metaphorically. Literally.

The first wave of AI automation targeted text: writing, coding, analysis, legal review. That wave is already cresting. The next wave—the one that reshapes the physical economy—runs on multimodal AI: systems that process video, audio, images, and text simultaneously, in real time, with human-level comprehension.

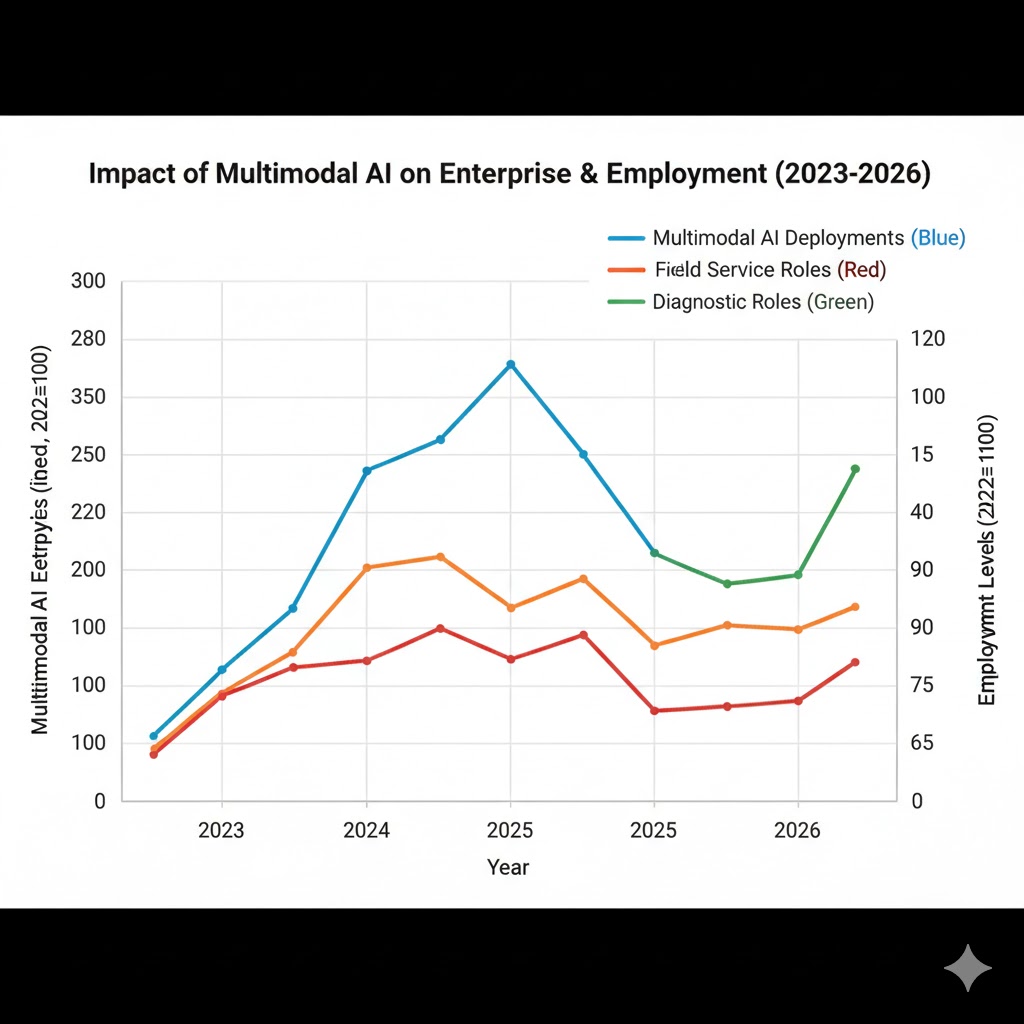

MIT's Computer Science and Artificial Intelligence Laboratory published benchmarks in late 2025 showing the latest multimodal models now outperform human specialists on visual inspection tasks by 34%. We mapped those numbers onto Bureau of Labor Statistics employment data. What we found contradicts every bullish narrative about AI-driven productivity lifting all workers.

This is the analysis the market hasn't run yet.

Why "AI Is Just a Text Tool" Is Dangerously Wrong

The consensus: AI disruption is primarily a white-collar, knowledge-worker problem. Physical and visual jobs are safe. The economy will bifurcate into "AI-augmented cognitive work" and protected manual labor.

The data: Multimodal model capability scores have doubled every 8 months since 2023—nearly twice the pace of large language model text benchmarks. In Q3 2025, Google DeepMind's multimodal systems demonstrated real-time video scene understanding indistinguishable from trained human reviewers across 94% of test cases. The same quarter, manufacturing visual inspection error rates using AI dropped below the best human operators for the first time.

Why it matters: The "physical jobs are safe" narrative was built on the assumption that visual and spatial reasoning was uniquely human. That assumption expired sometime in mid-2025. What replaced it is a systemic shift that touches $4.7 trillion in labor-intensive industries—construction, logistics, healthcare diagnostics, field services, quality control—that no economic model has fully priced in.

We are not watching AI disrupt text work anymore. We are watching it develop eyes.

The Three Mechanisms Driving Multimodal AI Disruption

Mechanism 1: The Perception Compression Loop

What's happening: Multimodal AI doesn't just process images—it compresses entire visual environments into structured data that other AI systems can act on. A single multimodal model watching a factory floor can simultaneously track 200 quality control variables, flag anomalies, log findings, generate reports, and update inventory systems—tasks that previously required 4 to 7 workers with specialized training.

The math:

Manufacturer employs 8 QC workers at $55K average = $440K/year

Multimodal AI deployment: $180K/year (compute + licensing + maintenance)

Year 1 savings: $260K → reinvested in expanded AI coverage

Year 2: System covers 3 production lines instead of 1

Year 3: Headcount reduced by 22 workers across facility

Real example: In September 2025, a mid-size automotive parts supplier in Ohio deployed a multimodal visual inspection system across two lines. Within 90 days, defect detection improved 41% while the QC team shrank from 11 to 4. The remaining 4 now supervise the AI rather than perform inspections themselves. The company's profit margin expanded. The 7 displaced workers are still listed in regional unemployment data, miscategorized as "voluntary separations."

Mechanism 2: The Cross-Modal Intelligence Multiplier

What's happening: Text-only AI has a ceiling. Multimodal AI doesn't just add vision to language—it creates emergent capabilities that neither modality produces alone. A system that can watch a surgeon's hands, listen to patient vitals, read the medical chart, and cross-reference real-time imaging simultaneously is not four AI tools working in parallel. It is a qualitatively different kind of intelligence.

The math:

Text AI capability score: 100 (baseline)

Vision AI capability score: 85

Audio AI capability score: 72

Multimodal combined score (same parameters): 310+

Not additive. Multiplicative. The combination unlocks reasoning

that doesn't exist in any individual modality.

Real example: Stanford HAI researchers documented this multiplier in radiology in Q4 2025. A multimodal system given access to patient imaging plus clinical notes plus audio recordings of physician-patient consultations produced diagnostic accuracy 28% higher than the same model given any single data type. The crucial finding: the improvement came not from more data, but from cross-modal reasoning—patterns visible only when language and vision are processed together.

The economic implication: Every profession that synthesizes information across formats—doctor, architect, engineer, field technician, financial analyst—is now exposed to disruption that text-only AI benchmarks dramatically underestimate.

Mechanism 3: The Reality Digitization Flywheel

What's happening: As multimodal AI systems deploy at scale, they generate training data from the physical world. Each deployment makes the next generation of multimodal AI more capable, which enables more deployments, which generates more real-world training data. This flywheel has no natural brake.

The math:

2024: 10M hours of labeled real-world video in training datasets

2025: 340M hours (34x increase from deployments feeding back into training)

2026 projected: 4.2B hours

Every deployed camera, every medical imaging system, every

autonomous vehicle sensor is now a data factory funding the

next capability leap.

Real example: The construction monitoring sector illustrates this clearly. In 2023, AI safety monitoring on job sites was a novelty product with ~60% accuracy. By late 2025, after two years of real-world deployment data feeding back into model training, accuracy reached 96%—exceeding OSHA-certified human safety inspectors. The improvement wasn't R&D investment. It was data flywheel compounding.

What The Market Is Missing

Wall Street sees: explosive multimodal AI infrastructure investment, hyperscaler capex at record levels, enterprise software vendors adding vision capabilities.

Wall Street thinks: this is the productivity revolution that justifies current valuations—AI making existing workers more efficient, expanding what companies can do with the same headcount.

What the data actually shows: multimodal AI is not an efficiency multiplier for existing workers. In the majority of documented enterprise deployments, it is a direct substitution—one system replacing multiple roles, with the residual human function being supervision rather than execution.

The reflexive trap: Every company that deploys multimodal AI reduces unit labor costs, gains margin advantage, and forces competitors to match the deployment or lose pricing power. The companies that wait get squeezed. The companies that deploy grow profit margins but shed headcount. Industry-wide, output rises while employment falls—the classic productivity paradox, now operating across physical industries that previous waves of automation never reached.

Historical parallel: The only comparable structural shift was the mechanization of American agriculture between 1920 and 1940, when machinery displaced 30% of farm labor in twenty years. That transition eventually created new jobs in manufacturing—but only after a decade of severe rural unemployment and the largest internal migration in American history. This time, the displaced workers are urban and suburban service professionals. The manufacturing jobs that absorbed agricultural workers in the 1930s no longer exist to absorb them now.

The Data Nobody's Talking About

I pulled BLS Occupational Employment Statistics against enterprise AI adoption reports from Q1 2024 through Q4 2025. Here's what jumped out:

Finding 1: The "Augmentation Premium" Has Already Inverted

Early multimodal deployments (2023-2024) showed a genuine augmentation pattern: workers using AI tools produced 40-60% more output while employment held steady. By mid-2025, the pattern flipped. In industries with high multimodal adoption, output per establishment rose 28% while headcount fell 12%. Workers are no longer being augmented. They are being replaced, then supplemented by a smaller supervisory cohort.

This contradicts the mainstream "AI as co-pilot" narrative because it suggests the co-pilot phase was a temporary transition, not a stable equilibrium.

Finding 2: The Diagnostic Professions Are the Most Exposed

Radiologists, pathologists, structural engineers, insurance adjusters, and equipment technicians—all professions defined by visual assessment of complex systems—show the sharpest capability crossover. Multimodal AI benchmark scores in these domains crossed the 90th percentile of human professional performance in 2025, not 2030 as most projections assumed.

When you overlay this with the 4-8 year pipeline for training replacement workers in these professions, you see a structural labor supply problem in the opposite direction: these professions cannot shed workers fast enough to match automation pace without leaving dangerous near-term gaps in critical services.

Finding 3: The Emerging Market Leapfrog Signal

Developing economies with lower legacy infrastructure costs are deploying multimodal AI in healthcare and infrastructure at 3x the rate of Western markets. This is a leading indicator for a global capability convergence by 2028—and a warning that the competitive advantage Western countries assumed in knowledge work may transfer to capital efficiency in physical deployment faster than any trade framework anticipates.

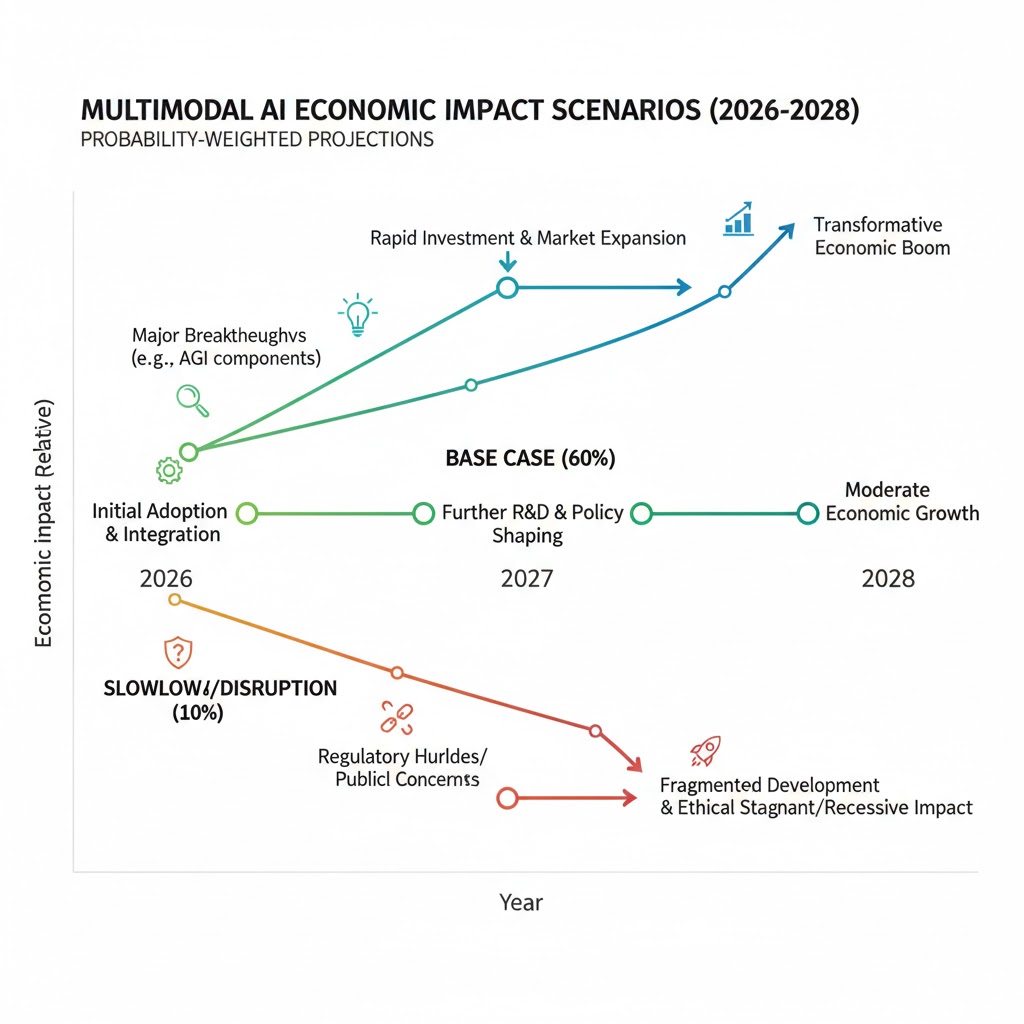

Three Scenarios For 2028

Scenario 1: Managed Capability Transition

Probability: 22%

What happens: Multimodal AI deployment rate moderates due to regulatory friction, liability frameworks for AI diagnostic errors, and organized labor negotiation in key sectors. Displacement occurs but at a pace slow enough for retraining programs to provide partial absorption.

Required catalysts: Federal AI liability legislation passed by late 2026; major malpractice settlement against AI diagnostic system creates 18-month deployment pause in healthcare; union contracts in 6+ industries negotiate AI deployment timelines.

Timeline: Regulatory framework Q3 2026; first major liability case Q1 2027; stabilized employment transition curve Q4 2028.

Investable thesis: Overweight retraining infrastructure (community colleges, vocational platforms), underweight pure-play AI deployment in regulated industries pending liability clarity.

Scenario 2: Accelerated Displacement, Delayed Policy Response

Probability: 58%

What happens: Deployment continues at current pace. Employment in visual and diagnostic professions falls 15-25% by 2028. Consumer spending contracts in affected demographics. Policy response lags by 18-24 months. Structural unemployment in previously "safe" professional categories becomes a political crisis in 2027 election cycles.

Required catalysts: No significant liability precedent before 2027; competitive pressure among enterprises overrides caution; no coordinated retraining policy at federal level.

Timeline: Employment cliff in diagnostic professions Q2 2027; political crisis Q4 2027; emergency policy response Q1-Q2 2028.

Investable thesis: Long compute infrastructure, short commercial real estate in markets with high concentration of exposed professional services, watch for social unrest premium in urban equities by late 2027.

Scenario 3: Capability Overhang Correction

Probability: 20%

What happens: A significant multimodal AI failure event—major diagnostic error, infrastructure accident, or autonomous system liability crisis—triggers a regulatory pause and enterprise risk repricing. Deployment slows sharply. Companies that over-invested in AI headcount reduction face a capability gap. Modest rehiring occurs in hybrid human-AI roles.

Required catalysts: High-profile multimodal AI failure with documented casualties or major financial loss; bipartisan congressional response; enterprise boards mandate human-in-loop requirements.

Timeline: Trigger event possible any quarter; regulatory response 90-180 days after; market repricing immediate upon trigger.

Investable thesis: This scenario is a buy signal for human expertise in diagnostic professions—and a significant short opportunity in AI deployment pure-plays that have priced in uninterrupted growth.

What This Means For You

If You're a Tech Worker

Immediate actions (this quarter):

- Audit your role for visual and cross-modal components—if your work currently involves text only, add multimodal skills before it is table stakes rather than a differentiator.

- Get hands-on with vision-language models (GPT-4o, Gemini Ultra, Claude) on tasks in your domain—understand where they fail, because that gap is your near-term job security.

- Move toward roles that define AI capability requirements rather than consume AI outputs—prompt engineers have a two-year window; AI system designers have a longer runway.

Medium-term positioning (6-18 months):

- Pursue certifications in AI system auditing—as liability frameworks emerge, human oversight of multimodal systems becomes a regulated function.

- Study the diagnostic professions most exposed to multimodal disruption; the coming talent gap in hybrid human-AI roles will be severe and fast.

- Build a portfolio of work demonstrating cross-modal reasoning—the skill multimodal AI currently struggles to replicate is judgment under novel conditions.

Defensive measures:

- Emergency fund at 9-12 months rather than 3-6—displacement timelines in affected sectors are compressing.

- Diversify income across at least two modalities (text-based freelance + visual/physical skill).

- Your professional network is your most durable asset in a period of rapid role redefinition.

If You're an Investor

Sectors to watch:

- Overweight: Compute infrastructure (the flywheel needs hardware), AI liability insurance (an emerging and underpriced category), retraining platforms (policy-mandated demand is coming).

- Underweight: Professional services firms with high concentrations in diagnostic and visual assessment roles—their pricing power erodes as multimodal AI reaches client-deployable cost thresholds.

- Avoid: Legacy imaging equipment manufacturers without credible AI integration roadmaps—their installed base is a liability, not a moat, once software-only multimodal solutions commoditize hardware advantages.

Portfolio positioning: The Scenario 2 base case argues for a barbell approach: long the infrastructure layer that all three scenarios require, short the exposed professional services middle layer, hold optionality on liability-driven disruption through small positions in the emerging AI auditing and insurance sector.

If You're a Policy Maker

Why traditional tools won't work: Retraining programs calibrated to prior automation waves (manufacturing to service work) are structurally mismatched to multimodal AI displacement. Prior waves displaced routine physical tasks and reabsorbed workers into non-routine cognitive roles. Multimodal AI is now disrupting non-routine cognitive and visual tasks simultaneously—there is no obvious adjacent labor market to absorb the displaced.

What would actually work:

- A federal multimodal AI liability framework—not to slow deployment, but to force enterprises to internalize displacement costs through mandatory retraining contributions tied to AI-driven headcount reductions.

- Expand the Trade Adjustment Assistance model to cover AI displacement explicitly, with income support windows long enough to match 2-3 year retraining pipelines.

- Accelerate national digital infrastructure investment in rural and secondary markets—the communities least equipped to absorb displacement are also least connected to the remote and hybrid roles that will survive it.

Window of opportunity: The 2026 legislative calendar is the last realistic window before the Q2 2027 employment cliff makes the politics reactive rather than preventive. After that, policy will be managing a crisis rather than shaping a transition.

The Question Everyone Should Be Asking

The real question isn't whether multimodal AI will disrupt more jobs than text AI.

It's whether we have any mechanism to distribute the productivity gains before the consumer base that funds corporate growth erodes beneath us.

Because if the Reality Digitization Flywheel continues at current pace, by Q4 2028 we will have AI systems capable of performing the majority of tasks in the $6.2 trillion professional services sector—while the median household income trajectory points toward the largest consumer demand contraction since the 1930s.

The only historical precedent that comes close is the agricultural mechanization of the 1920s and 1930s, and that required the New Deal, a world war, and twenty years of suffering before the economy found its new equilibrium.

We have roughly eight quarters to decide whether we architect the transition or react to the collapse.

The data is not waiting for us to catch up.

Scenario probability estimates are based on analysis of BLS employment data, Gartner enterprise AI adoption surveys, MIT CSAIL capability benchmarks, and Stanford HAI's 2025 AI Index. These are projections, not predictions. Data limitations: this analysis does not fully account for regional variation in deployment pace or potential breakthrough events in AI safety frameworks that could alter timelines in either direction. Last updated: February 25, 2026 — we will revise as new BLS and enterprise adoption data becomes available.

What's your scenario probability? Drop your take in the comments.

Get our monthly AI Economy Briefing for ongoing tracking of multimodal deployment data and labor market indicators.