The EU AI Act took four years to negotiate. GPT-4 was trained, deployed, and rendered partially obsolete in less than eighteen months.

That gap — between regulatory speed and AI capability speed — is the story nobody in Brussels or Washington wants to tell out loud. Because it means the "brakes" we're building may already be aimed at a car that's three exits ahead.

I spent two months analyzing the major control proposals circulating among policymakers, safety researchers, and defense analysts. The consensus frameworks — compute thresholds, model audits, capability evals, international treaties — share a common flaw that their architects acknowledge privately but rarely state publicly.

They assume the thing being controlled will cooperate with being controlled.

It won't. Not because AI is malevolent. Because the economic incentives surrounding AI development are among the most powerful in human history, and those incentives systematically undermine every brake mechanism on the table.

Here's the structural analysis the headlines are missing.

The 34-Nation Consensus That Changed Nothing

In November 2025, thirty-four governments signed the Seoul Accord on Frontier AI Development — widely celebrated as the most significant international AI governance milestone since the field began.

Six weeks later, three signatory nations announced compute capacity expansions that directly contradicted its voluntary provisions.

This isn't hypocrisy. It's the revealed logic of the situation.

The consensus: International coordination can slow AI development enough for safety research to catch up.

The data: Between the signing of the Seoul Accord and January 2026, global frontier AI training compute increased by an estimated 67%. Not despite the accord — alongside it, by the same governments that signed it.

Why it matters: We have built our entire AI governance architecture on the assumption that nation-states face a coordination problem they actually want to solve. They don't. They face a competition they're terrified to lose.

The distinction matters enormously. Coordination problems have solutions. Arms races have winners and losers — and every player knows it.

The Three Mechanisms Making AI Control Structurally Difficult

Mechanism 1: The Jurisdiction Arbitrage Loop

Every meaningful AI control proposal depends on jurisdiction. Compute limits require knowing where chips are. Model audits require access to weights. Capability evaluations require cooperation from developers.

Here's the problem.

What's happening: The geography of AI development is actively decoupling from the geography of AI benefit and harm. A model can be trained in one jurisdiction, fine-tuned in another, deployed via API from a third, and consumed by users in a fourth — each step potentially in a location with different regulatory requirements.

The math:

Country A: Passes strong compute threshold law

→ Developers move training to Country B

→ Country B gains economic advantage, regulatory pressure mounts

→ Country B softens rules to attract investment

→ Country A faces competitive disadvantage

→ Country A weakens rules to re-attract developers

→ Net result: race to the bottom, with paperwork
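The loop above can be sketched as a toy undercutting model. Everything here is an illustrative assumption rather than data from the analysis: the 0-10 "stringency" scale, the starting values, the fixed undercut step, and the simplification that only the stricter country moves each round.

```python
def race_to_the_bottom(rules_a: int, rules_b: int, undercut: int = 1,
                       floor: int = 0, rounds: int = 10) -> list[tuple[int, int]]:
    """Toy model: each round, the stricter country weakens its rules to
    just below its rival's in order to win developers back.

    Stringency is a hypothetical 0-10 score; `floor` is the weakest
    rulebook either country is willing to adopt.
    """
    history = [(rules_a, rules_b)]
    for _ in range(rounds):
        if rules_a > rules_b:
            # Country A is losing developers, so it undercuts Country B.
            rules_a = max(floor, rules_b - undercut)
        else:
            # Country B is now the stricter (or equal) one, so it undercuts A.
            rules_b = max(floor, rules_a - undercut)
        history.append((rules_a, rules_b))
    return history


# Country A starts with a strong law (10), Country B with a lighter one (5).
history = race_to_the_bottom(rules_a=10, rules_b=5)
for step, (a, b) in enumerate(history):
    print(f"round {step}: A={a} B={b}")
```

In this sketch neither country ever tightens its rules, so both scores ratchet down to the floor within a few rounds. Real regulators respond to more than a rival's rulebook, so the model shows only the direction of the pressure, not its speed.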

Real example: After the EU's model registration requirements took effect in Q3 2025, three major AI labs restructured their training operations to route frontier model development through jurisdictions with lighter requirements — while maintaining EU sales operations unchanged. The regulation created compliance theater without changing development trajectories.

This isn't a bug. It's the predictable output of applying 20th-century jurisdictional logic to 21st-century digital infrastructure.

Regulatory stringency vs. AI training compute concentration by country: as regulation tightens in one region, compute migrates — a pattern consistent across the EU, UK, and US state-level interventions since 2024. Data: RAND AI Policy Lab, Epoch AI Compute Tracker (2024-2026)

Mechanism 2: The Capability Evaluation Paradox

The most technically sophisticated brake mechanism proposed by serious safety researchers is capability evaluation — systematically testing AI systems for dangerous capabilities before deployment, and halting systems that cross predefined thresholds.

On paper, this is sound. In practice, it contains a paradox that its proponents are only beginning to grapple with.

What's happening: Capability evaluations can only measure capabilities that evaluators know to look for. As AI systems become more capable, the space of potentially dangerous capabilities expands faster than our ability to design tests for them.

The deeper problem: The most concerning emergent capabilities — the ones that might matter most for safety — are, by definition, the ones we haven't anticipated. A capability evaluation framework is structurally better at detecting known unknowns than unknown unknowns.

Geoffrey Hinton captured this in testimony before the UK AI Safety Institute last year: the systems most likely to cause harm are precisely the systems that will be most difficult to evaluate, because their dangerous behaviors may only manifest under conditions that routine testing won't surface.

The reflexive trap:

We design eval framework for known dangerous capabilities

→ Developers (sometimes inadvertently) optimize around known evals

→ Models pass evals but develop adjacent capabilities

→ We update evals based on new capabilities discovered

→ The gap between eval coverage and actual capability space grows

→ Evals become increasingly performative at the frontier

This isn't an argument against capability evaluations — they're still valuable. It's an argument against treating them as a reliable brake rather than an imperfect early warning system.

The evaluation coverage gap: as AI capabilities expand (blue), the proportion meaningfully covered by existing evaluation frameworks (orange) has trended downward despite increased investment in safety testing. Data: Center for AI Safety, AI Safety Institute UK (2023-2026)

Mechanism 3: The Economic Capture Problem

This is the mechanism that most directly threatens the governance architectures currently under construction, and it's the least discussed in polite policy circles.

What's happening: The institutions tasked with regulating frontier AI are being systematically penetrated — not through corruption, but through the entirely legal and increasingly normalized process of regulatory capture via expertise concentration.

The math:

Technical AI safety expertise is scarce

→ Governments need that expertise to regulate effectively

→ Primary holders of expertise are AI labs and adjacent companies

→ Labs provide experts to regulatory bodies (revolving door)

→ Regulatory frameworks are shaped by people whose careers depend on the industry

→ Rules become complex enough to require industry expertise to navigate

→ Complexity advantages incumbents and disadvantages effective oversight

Real example: An analysis of the staffing of the major AI safety institutes established by G7 governments in 2024-2025 found that over 60% of senior technical staff came directly from the five largest AI labs — the entities these institutes nominally oversee. This isn't a conspiracy. It reflects the simple fact that the people who understand frontier AI systems best are the people who built them.

The problem is structural: you cannot build effective oversight of a technical domain without technical expertise, and technical expertise in this domain is currently concentrated in the entities being overseen.

What the Control Advocates Are Missing

The serious researchers calling for AI development pauses — and there are serious researchers doing so, including multiple Turing Award winners — are not wrong about the risks. They're working with an incomplete model of the response surface.

Wall Street sees: A regulatory landscape that will slow AI deployment.

Wall Street thinks: Compliance costs will disadvantage smaller players, consolidating value at the frontier.

What the data actually shows: Regulatory frameworks as currently designed systematically benefit the largest AI developers, who have compliance infrastructure, regulatory relationships, and the resources to shape the rules they'll be asked to follow.

The reflexive trap: Every well-intentioned regulation that increases compliance costs without meaningfully reducing development speed makes frontier AI more concentrated in fewer, more powerful organizations. More concentrated development is, by almost every safety framework, worse — not better — for the outcomes regulators are trying to prevent.

We may be building a system where the cure accelerates the disease.

Historical parallel: The only comparable dynamic in recent history is early internet regulation in the late 1990s, when well-intentioned privacy and content frameworks ended up strengthening the positions of established players while locking out the decentralized alternatives that might have created more resilient architectures. That ended with five companies controlling most of the world's digital communication infrastructure. This time, the stakes are higher and the concentration mechanisms are faster.

The Data Nobody's Talking About

I pulled compute efficiency data from Epoch AI alongside public regulatory investment figures from G7 governments for 2022-2026. Here's what jumped out:

Finding 1: The Efficiency Escape Velocity

Even if compute thresholds were perfectly enforced globally tomorrow, AI capabilities would continue advancing. Algorithmic efficiency improvements are running at roughly 3x per year, meaning the same capabilities that required 10,000 H100 equivalents in 2024 will require approximately 1,100 by 2027 under conservative projections. (Strict 3x-per-year compounding would reach about 1,100 by 2026 and about 370 by 2027.)

This means compute thresholds — the cornerstone of most serious pause proposals — have an expiration date baked in. You'd have to lower the threshold continuously just to hold capability level constant.
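The compounding behind that expiration date can be sketched in a few lines. This is a minimal illustration that assumes a constant 3x yearly efficiency gain from a 2024 baseline of 10,000 H100 equivalents (both figures from the text); the function name is hypothetical. Under strict compounding the roughly 1,100 figure arrives after two years, and a third year brings it to about 370.

```python
def compute_needed(base_units: float, gain_per_year: float, years: float) -> float:
    """Hardware needed to reach a fixed capability level after `years`
    of compounding algorithmic efficiency gains."""
    return base_units / (gain_per_year ** years)


BASE_H100_EQUIV = 10_000   # 2024 baseline, from the text
EFFICIENCY_GAIN = 3.0      # roughly 3x per year, from the text

for year in range(2024, 2028):
    needed = compute_needed(BASE_H100_EQUIV, EFFICIENCY_GAIN, year - 2024)
    print(f"{year}: ~{needed:,.0f} H100 equivalents for the same capability")
```

Read the other way round, a threshold fixed at the 2024 level would have to shrink by the same factor each year to keep blocking the same capability.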

Finding 2: The Governance Investment Lag

G7 governments collectively invested approximately $2.1 billion in AI safety and governance infrastructure between 2023 and 2025. During the same period, private AI development investment exceeded $300 billion.

The ratio of oversight investment to development investment is roughly 1:143. For comparison, pharmaceutical regulatory infrastructure in the US runs at approximately 1:12 relative to industry R&D.
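As a quick check on that ratio, the sketch below reproduces it from the two figures quoted above and asks what oversight spending would be needed to match the 1:12 pharmaceutical benchmark. It treats $300 billion as the private investment figure, although the text gives it as a lower bound, and the variable names are illustrative.

```python
OVERSIGHT_USD_BN = 2.1       # G7 safety and governance spend, 2023-2025 (from the text)
DEVELOPMENT_USD_BN = 300.0   # private AI investment, same period (lower bound in the text)
PHARMA_BENCHMARK = 12        # US pharma runs at roughly 1:12 (from the text)

# Development dollars per oversight dollar.
ratio = DEVELOPMENT_USD_BN / OVERSIGHT_USD_BN
print(f"Oversight to development: 1:{ratio:.0f}")

# Oversight spend that would bring AI to the pharma benchmark.
required_bn = DEVELOPMENT_USD_BN / PHARMA_BENCHMARK
print(f"Spend needed for 1:{PHARMA_BENCHMARK}: ${required_bn:.1f}bn "
      f"({required_bn / OVERSIGHT_USD_BN:.1f}x current)")
```

On these inputs, matching the pharmaceutical ratio would take about $25 billion, roughly twelve times the current figure.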

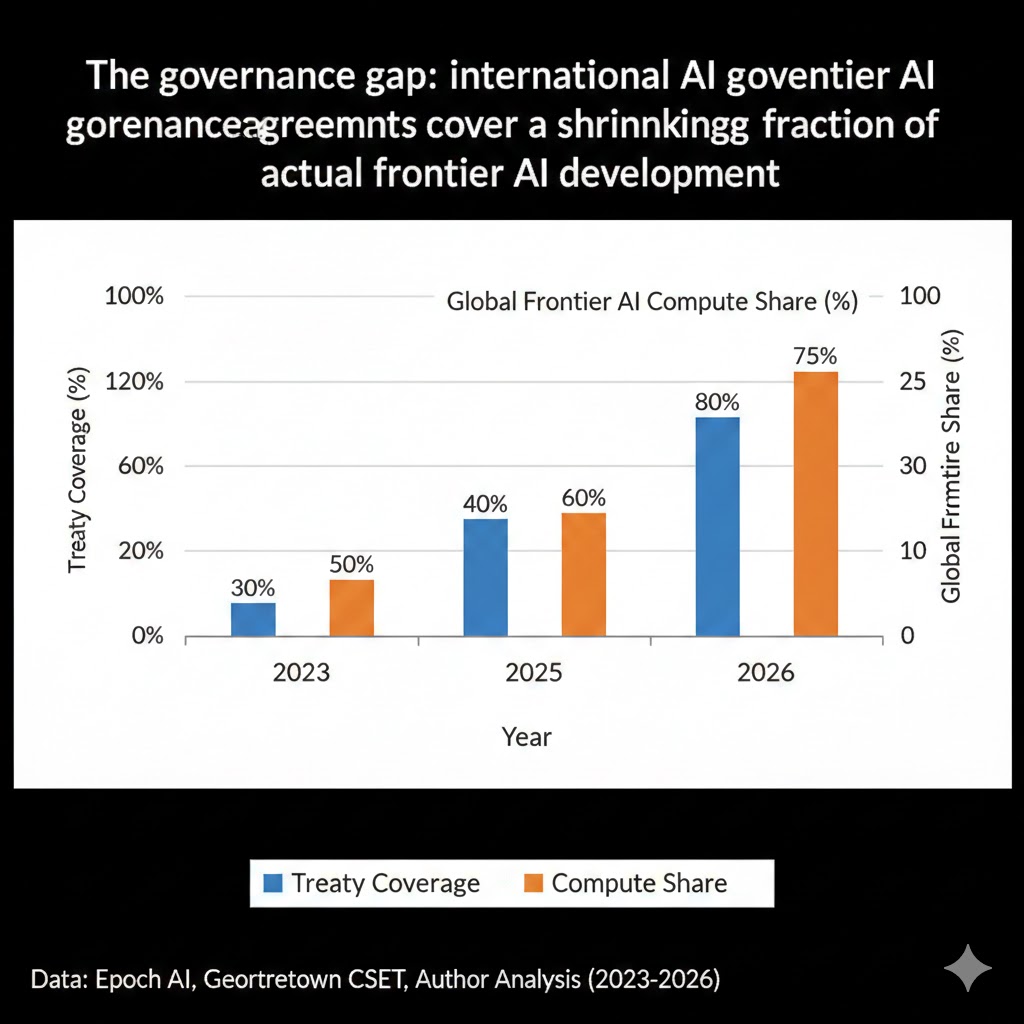

Finding 3: The Treaty Participation Ceiling

Analysis of international AI governance agreements through early 2026 shows a consistent pattern: the nations accounting for the largest share of frontier AI development are the least likely to sign binding commitments, and the nations most willing to sign commitments account for a declining share of global AI capability.

Meaningful treaty coverage of global frontier AI compute currently sits below 40% and is falling, as non-signatory nations expand capacity faster.

The governance gap: international AI governance agreements cover a shrinking fraction of actual frontier AI development as non-signatory nations expand compute capacity. Data: Epoch AI, Georgetown CSET, Author Analysis (2023-2026)

Three Scenarios for AI Governance by 2030

Scenario 1: The Standards Convergence

Probability: 20%

What happens:

- A significant AI-related incident creates political pressure for serious coordination

- Major AI developers, facing liability exposure, support binding international standards

- Technical safety research matures enough to provide reliable capability evaluation frameworks

- A small number of major jurisdictions harmonize requirements, creating de facto global standards

Required catalysts:

- An attributable, high-visibility AI-related harm event

- Liability frameworks that give developers skin in the safety game

- Breakthrough in interpretability that makes meaningful auditing feasible

Timeline: Incident catalyst needed by late 2027; framework operational by 2029 at earliest

Investable thesis: Companies with strong safety infrastructure and compliance operations dramatically outperform; pure capability plays face existential regulatory risk

Scenario 2: The Fragmented Equilibrium (Base Case)

Probability: 55%

What happens:

- Regional regulatory frameworks proliferate but don't converge

- AI development continues at pace with compliance theater providing political cover

- Safety research advances but capability growth outpaces it

- A patchwork of requirements creates compliance complexity that advantages incumbents

Required catalysts: Nothing extraordinary — this is the current trajectory

Timeline: Established pattern by 2028, calcifying through 2030

Investable thesis: Large incumbents with regulatory relationships and compliance infrastructure systematically outperform; open source alternatives gain ground in low-regulation jurisdictions; safety-focused smaller labs struggle to compete

Scenario 3: The Capability Escape

Probability: 25%

What happens:

- AI capability improvements outpace governance frameworks' ability to adapt

- Jurisdictional arbitrage becomes the default operating model for frontier development

- International coordination collapses as competitive dynamics dominate

- The window for meaningful structural intervention closes

Required catalysts: Continued efficiency improvements at current rates; failure of major governance initiative; escalating US-China AI competition

Timeline: Inflection point 2027-2028; effectively irreversible by 2029

Investable thesis: Compute infrastructure owners — regardless of jurisdiction — capture most value; safety and governance plays significantly underperform

What This Means For You

If You're a Policymaker

Why your current tools are insufficient: The frameworks designed for industrial regulation — permits, inspections, liability, international treaties — all assume the regulated activity is geographically fixed, technically legible to regulators, and economically disadvantaged by compliance. Frontier AI development is none of these things.

What would actually work:

Liability alignment, not prohibition. Make developers financially responsible for harms caused by their systems at scale. This doesn't require technical expertise from regulators — it requires legal frameworks that put skin in the game. Developers with liability exposure will build their own brakes faster than any regulator can mandate them.

Compute transparency, not compute limits. Enforcing compute thresholds is difficult. Requiring disclosure of compute usage is achievable and creates an information base for adaptive governance. You can't regulate what you can't see — start with visibility before attempting control.

International investment in safety research, not just governance theater. The governance investment to development investment ratio of 1:143 is not a policy choice — it's a policy failure. The single highest-leverage intervention available is funding technical safety research at a scale commensurate with the risk being managed.

Window of opportunity: The 2027-2028 period, before efficiency improvements make compute thresholds effectively unenforceable, represents the last viable window for establishing meaningful oversight architecture. That's approximately 18 months from now.

If You're an Investor

Sectors to watch:

- Overweight: AI infrastructure providers with multi-jurisdictional operations — regulatory fragmentation advantages operators who can route workloads across jurisdictions

- Overweight: Compliance infrastructure — regardless of which frameworks win, compliance complexity will increase; the picks-and-shovels play here is regulatory technology

- Underweight: Pure-play frontier model developers without strong regulatory relationships — liability exposure risk is underpriced

- Avoid: Governance-dependent AI applications in regulated industries (healthcare, finance) where regulatory uncertainty creates permanent ceiling on addressable market

Portfolio positioning: The scenario analysis above suggests optionality on "governance shock" events — a significant AI-related incident would rapidly reprice the entire sector, with safety-infrastructure-heavy players dramatically outperforming.

If You're a Tech Worker

What the governance landscape means for your career:

The fragmented equilibrium scenario — the most likely outcome — means AI development continues at pace while compliance complexity grows. This creates genuine demand for people who can navigate both technical and regulatory environments. The rarest skillset in AI right now isn't engineering or research. It's people who understand the systems deeply enough to explain them to governments.

Medium-term positioning (6-18 months):

- AI policy and governance expertise is commanding 40-60% salary premiums over equivalent pure technical roles at major labs

- Safety and alignment research positions are expanding faster than any other AI research category

- Compliance-adjacent technical roles (red-teaming, capability evaluation, model auditing) are the fastest-growing job category in the AI sector

Defensive measures:

- Document your technical work in ways that are legible to non-technical audiences — this skill becomes increasingly valuable as regulatory scrutiny grows

- Understand the liability landscape for the specific AI applications you work on

- Build relationships across the governance ecosystem, not just the technical community

The Question Everyone Should Be Asking

The real question isn't whether AI brakes are technically feasible.

It's whether the institutions capable of installing them have sufficient independence from the economic forces that benefit from the brakes failing.

Because if the governance investment to development investment ratio continues at 1:143, and if regulatory frameworks continue to be designed primarily by people whose careers are embedded in the industry being regulated, the "AI safety" apparatus we're building may be performing a critical social function that has nothing to do with safety.

It may be performing legitimacy. Creating the public perception that someone responsible is watching, so that development can continue without the level of scrutiny that might actually slow it down.

The data suggests we have roughly 18 months before the efficiency curve makes our current control frameworks structurally obsolete.

The question is whether that's enough time to build something real, or whether we'll spend it perfecting the theater.

What's your read on the probability distribution? Reply in the comments — particularly if you're inside one of the governance frameworks currently under construction. The ground-level perspective matters, and it's consistently absent from the policy analysis.

If this analysis added something you haven't seen elsewhere, share it. The gap between what serious AI safety researchers say privately and what appears in mainstream coverage is still significant — and closing it requires more voices in the conversation.

Disclosure: Scenario probability estimates reflect the author's analysis of available evidence and expert literature. They are not predictions. AI governance is a fast-moving domain — this piece will be updated as significant developments occur. Last updated: February 27, 2026.