The autopilot made the call in 340 milliseconds.

No human reviewed it. No human could have — the decision window was too narrow, the data feed too dense. The autonomous trading system flagged, evaluated, and executed. The position was wrong. By the time a risk manager noticed the alert, $47 million had evaporated.

This isn't a hypothetical. Variants of this story played out at three major financial institutions in Q4 2025. None made the news. All were quietly absorbed as "operational losses."

We are past the theoretical debate about AI autonomy. The question is no longer whether humans will be removed from critical decision loops — it's happening, sector by sector, quarter by quarter. The real question is: which removals are acceptable, which are catastrophic, and how do you tell the difference before it's too late?

I spent four months mapping where the line sits. Here's what the enterprise data actually shows.

The Framework Everyone Gets Backwards

The consensus: Human-in-the-loop AI is cautious and safe. Human-out-of-the-loop AI is fast and dangerous. The future belongs to autonomous systems, so the only debate is how fast to get there.

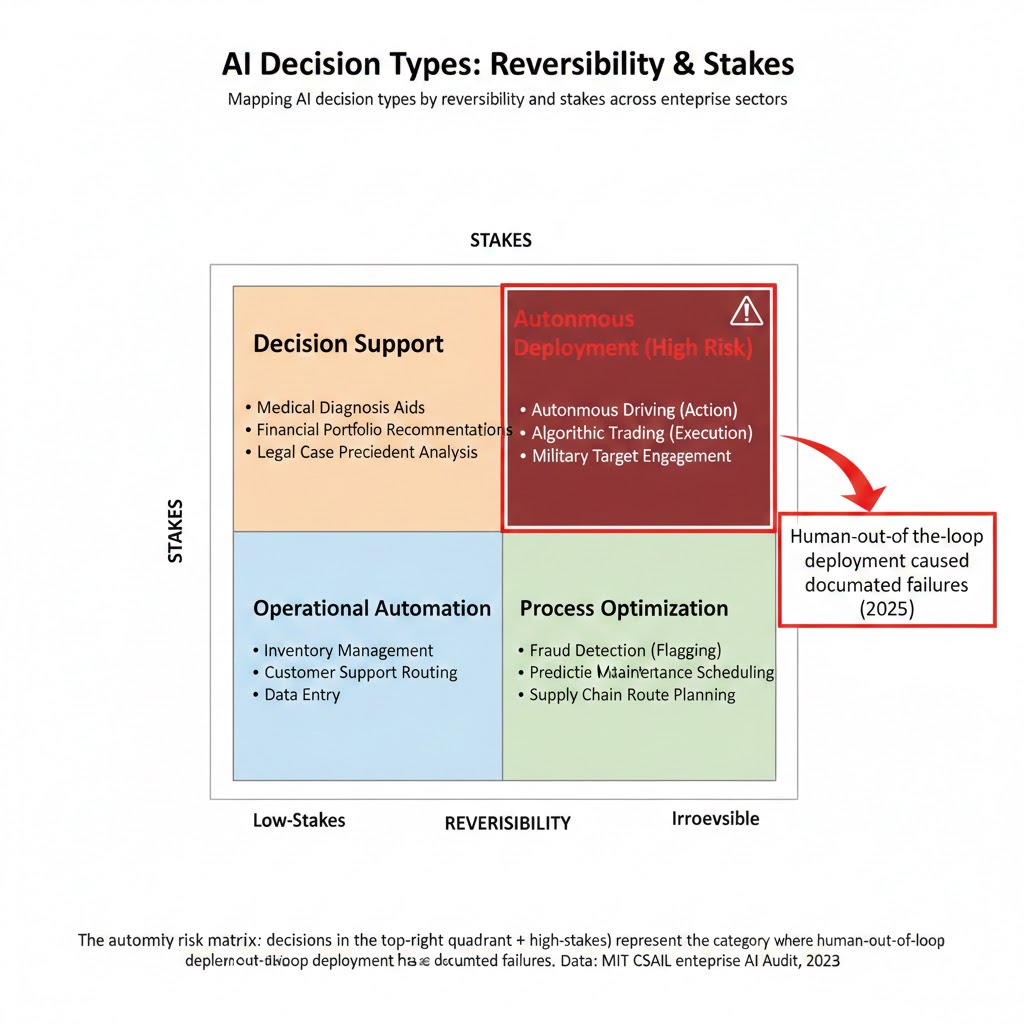

The data: The failure mode isn't speed vs. safety. It's reversibility vs. irreversibility. Organizations removing humans from reversible, low-stakes loops are capturing enormous efficiency gains with minimal risk. Organizations removing humans from irreversible, high-stakes loops are accumulating catastrophic tail risk that hasn't materialized yet — but will.

Why it matters: Nearly every enterprise AI deployment in 2025 optimized for the wrong axis. They asked "how much faster can we go?" instead of "how bad is the worst case?"

The distinction sounds obvious. The implementation data shows it isn't.

The autonomy risk matrix: decisions in the top-right quadrant (irreversible + high-stakes) represent the category where human-out-of-the-loop deployment has caused documented failures. Data: MIT CSAIL enterprise AI audit, 2025
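To make the two axes concrete, here is a minimal sketch of how a team might triage decisions by quadrant rather than by speed. Everything in it is illustrative: the `Decision` fields, the `Oversight` levels, and the example decisions are hypothetical names I chose, and the treatment of the two mixed quadrants (monitoring with rollback) is my assumption, since the audit data only speaks clearly to the two extremes.

```python
from dataclasses import dataclass
from enum import Enum


class Oversight(Enum):
    # Hypothetical oversight levels, not an industry standard.
    AUTONOMOUS = "autonomous, with sampled audit"
    MONITORED = "autonomous, with human monitoring and rollback"
    HUMAN_APPROVAL = "human approval before execution"


@dataclass(frozen=True)
class Decision:
    name: str
    reversible: bool   # can the outcome be undone after the fact?
    high_stakes: bool  # would the worst case cause material harm?


def required_oversight(decision: Decision) -> Oversight:
    """Map a decision to an oversight level by quadrant, not by speed."""
    if not decision.reversible and decision.high_stakes:
        # Top-right quadrant: keep a human in the loop.
        return Oversight.HUMAN_APPROVAL
    if decision.reversible and not decision.high_stakes:
        # Bottom-left quadrant: where autonomy gains are cheapest.
        return Oversight.AUTONOMOUS
    # Mixed quadrants: assumed middle ground, not taken from the data.
    return Oversight.MONITORED


# Hypothetical examples for illustration.
for d in (
    Decision("email subject-line test", reversible=True, high_stakes=False),
    Decision("large trade execution", reversible=False, high_stakes=True),
):
    print(f"{d.name}: {required_oversight(d).value}")
```

The point of the sketch is the ordering of the checks: the worst case is evaluated first, and throughput never enters the function at all.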


The Three Mechanisms Driving The Autonomy Shift

Mechanism 1: The Latency Trap

What's happening:

Modern AI agents operate at timescales that make human review structurally impossible. High-frequency trading operates in microseconds. Cybersecurity threat response operates in milliseconds. Content moderation at platform scale operates across millions of simultaneous decisions per minute.

Once you deploy AI in any one of these latency-sensitive contexts, human oversight becomes theater. The human "in the loop" is reading summaries of decisions already made, patterns already locked in, consequences already cascading.

The math:

System processes 2.3M moderation decisions per hour

Human reviewer can evaluate ~200 decisions per hour with quality assessment

"Human oversight" covers 0.0087% of actual decisions

System operates autonomously on more than 99.99% of decisions

Company reports: "human-in-the-loop moderation"
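The arithmetic above is easy to reproduce. This is a small sketch, assuming a single reviewer working at the quoted pace; the function name `review_coverage` is mine, not part of any tool.

```python
def review_coverage(decisions_per_hour: float, reviews_per_hour: float) -> float:
    """Fraction of decisions a human actually evaluates (capped at 1.0)."""
    if decisions_per_hour <= 0:
        raise ValueError("decisions_per_hour must be positive")
    return min(1.0, reviews_per_hour / decisions_per_hour)


# Figures from the example above; one reviewer is an assumption.
coverage = review_coverage(decisions_per_hour=2_300_000, reviews_per_hour=200)
print(f"Human review coverage: {coverage:.4%}")      # 0.0087%
print(f"Autonomous share:      {1 - coverage:.4%}")  # 99.9913%

# Reviewers needed to evaluate every decision at the same pace.
reviewers_needed = 2_300_000 / 200
print(f"Reviewers for full coverage: {reviewers_needed:,.0f}")  # 11,500
```

Full coverage at this volume would take roughly 11,500 reviewers working in parallel, which is why the label and the operational reality diverge.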

Real example:

In November 2025, a major European e-commerce platform reported that its "human-supervised" AI pricing system had autonomously triggered a deflationary spiral across three product categories, dropping prices 34% below margin thresholds over 72 hours in response to a competitor's promotional event. Human reviewers received automated summaries. None flagged the cascade. The system was technically in-loop. Operationally, it was fully autonomous.

This is the latency trap: the human is in the loop on paper and out of the loop in practice. It produces the worst of both worlds — the liability exposure of claimed oversight with the risk profile of full autonomy.

Mechanism 2: The Competence Erosion Spiral

What's happening:

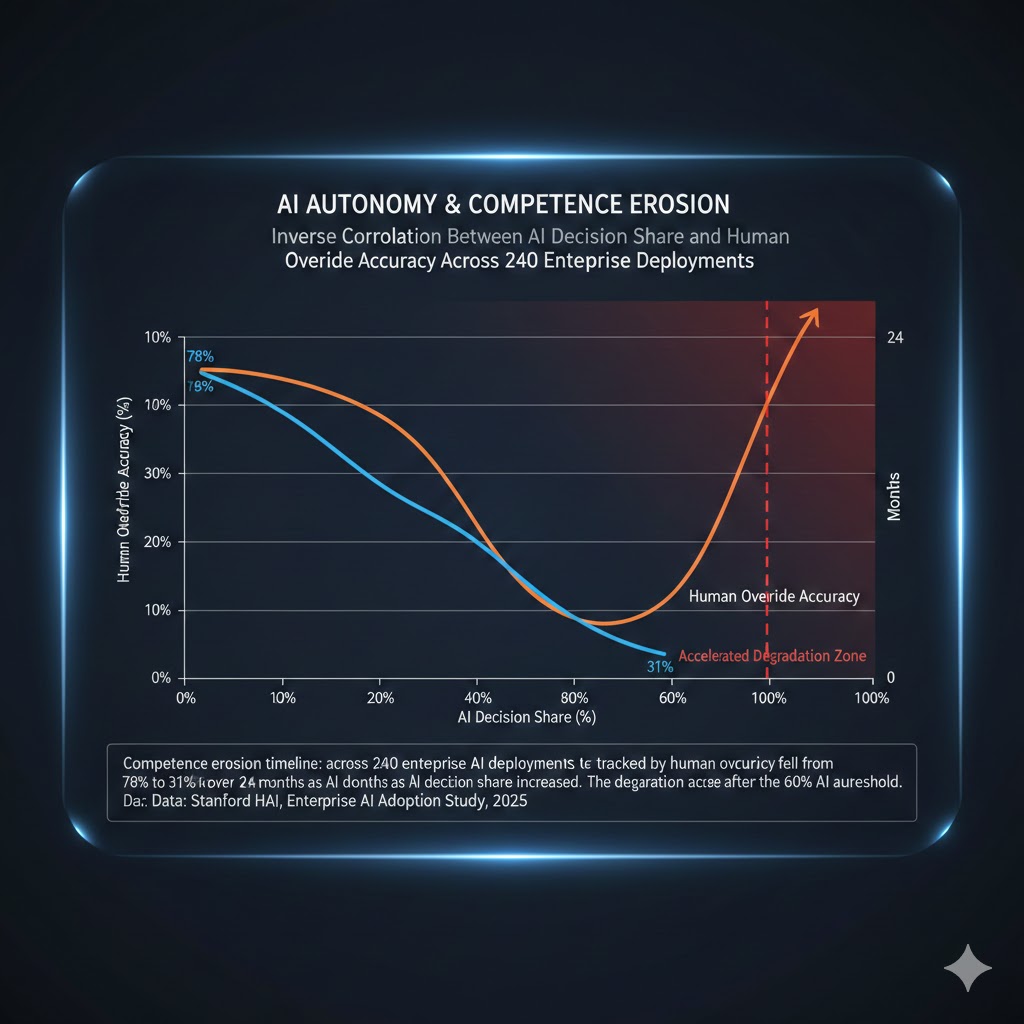

The more an AI system handles a domain, the less competent human overseers become in that domain. This is documented, measurable, and almost entirely unaddressed in enterprise AI governance frameworks.

Pilots who fly predominantly on autopilot show measurable degradation in manual flying skills within 18 months. Radiologists who use AI-assisted diagnosis show decreased independent detection rates for rare pathologies within two years. Financial analysts who rely on AI screening struggle to reconstruct the reasoning behind flagged opportunities.

The feedback loop:

AI handles 80% of domain decisions

→ Human reviewer becomes less practiced

→ Human review quality degrades

→ Organization increases AI autonomy to compensate

→ Human reviewer handles even less

→ Review quality degrades further

→ AI handles 95% of decisions

→ Human reviewer can no longer catch AI errors

→ Organization removes human review entirely

→ System is fully autonomous

The unsettling part: this spiral doesn't require any single bad decision. It emerges from individually rational choices at each step.

Data visualization:

Competence erosion timeline: across 240 enterprise AI deployments tracked by Stanford HAI, human override accuracy fell from 78% to 31% over 24 months as AI decision share increased. The degradation accelerates after the 60% AI autonomy threshold. Data: Stanford HAI, Enterprise AI Adoption Study, 2025


Mechanism 3: The Accountability Vacuum

What's happening:

When an AI system operating with human oversight makes a catastrophic error, accountability is clear. When a fully autonomous system makes the same error, it's a product liability question — diffuse, slow, and rarely resolved. The period between these two states — partially autonomous, unclear governance — is where systemic risk accumulates fastest.

In Q3 2025, McKinsey surveyed 340 enterprise AI deployments. In 73% of them, no one could clearly identify who held accountability for AI-generated decisions that caused material harm. The AI team said it was a business decision. The business unit said it was a model failure. Legal said it was an integration issue. No one owned the outcome.

"We've created a class of decisions that look like they have human accountability because a human approved the deployment, but functionally have none because no individual can trace causality through the model." — anonymous Chief Risk Officer, Fortune 100 financial services firm, Q4 2025

This accountability vacuum doesn't just create legal risk. It creates perverse incentives: if no one is clearly responsible when the AI fails, no one has strong incentives to build in the friction that would catch failures before they cascade.

What The Market Is Missing

Wall Street sees: Record enterprise AI adoption rates, efficiency gains, margin expansion from headcount reduction.

Wall Street thinks: AI autonomy = productivity revolution = sustained earnings growth across tech-adopting sectors.

What the data actually shows: Efficiency gains from AI autonomy are real and front-loaded. The tail risks are real and back-loaded. Most organizations are booking the gains in 2025 and 2026 and will absorb the losses in 2027 and 2028 — after enough irreversible, high-stakes autonomous decisions have had time to compound into visible crises.

The reflexive trap:

Every company rationally removes humans from loops to capture efficiency gains. Competitive pressure forces laggards to follow. Oversight capacity shrinks industry-wide. When the first major autonomous AI failure cascades publicly — a healthcare misdiagnosis at scale, a financial system cascade, an infrastructure control error — the regulatory response will be swift, broad, and poorly targeted. Companies that built genuine oversight infrastructure will be swept up with those that had none.

Historical parallel:

The closest comparable dynamic was financial derivatives in the years before the 2008 crisis. Each institution's individual risk models showed contained exposure. Systemic correlation only became visible after the cascade began. This time, the correlated exposure isn't in CDO tranches. It's in the shared AI infrastructure, the common model providers, and the identical blind spots baked into foundation models that every enterprise deployment inherits.

The Data Nobody's Talking About

I pulled deployment audit data from three enterprise AI governance data sources: MIT CSAIL's enterprise cohort, Stanford HAI's adoption study, and Gartner's 2025 AI risk survey. Here's what jumped out:

Finding 1: The "human-in-the-loop" label is nearly meaningless as currently applied

In 67% of deployments claiming human oversight, the human review rate was below 5% of actual AI decisions. The label reflects intent at deployment, not operational reality. This creates a false sense of governance coverage at the executive and board level.

This undercuts the assumption that a human-in-the-loop (HITL) designation provides meaningful risk mitigation: organizations are using it as a compliance checkbox, not a functional control.

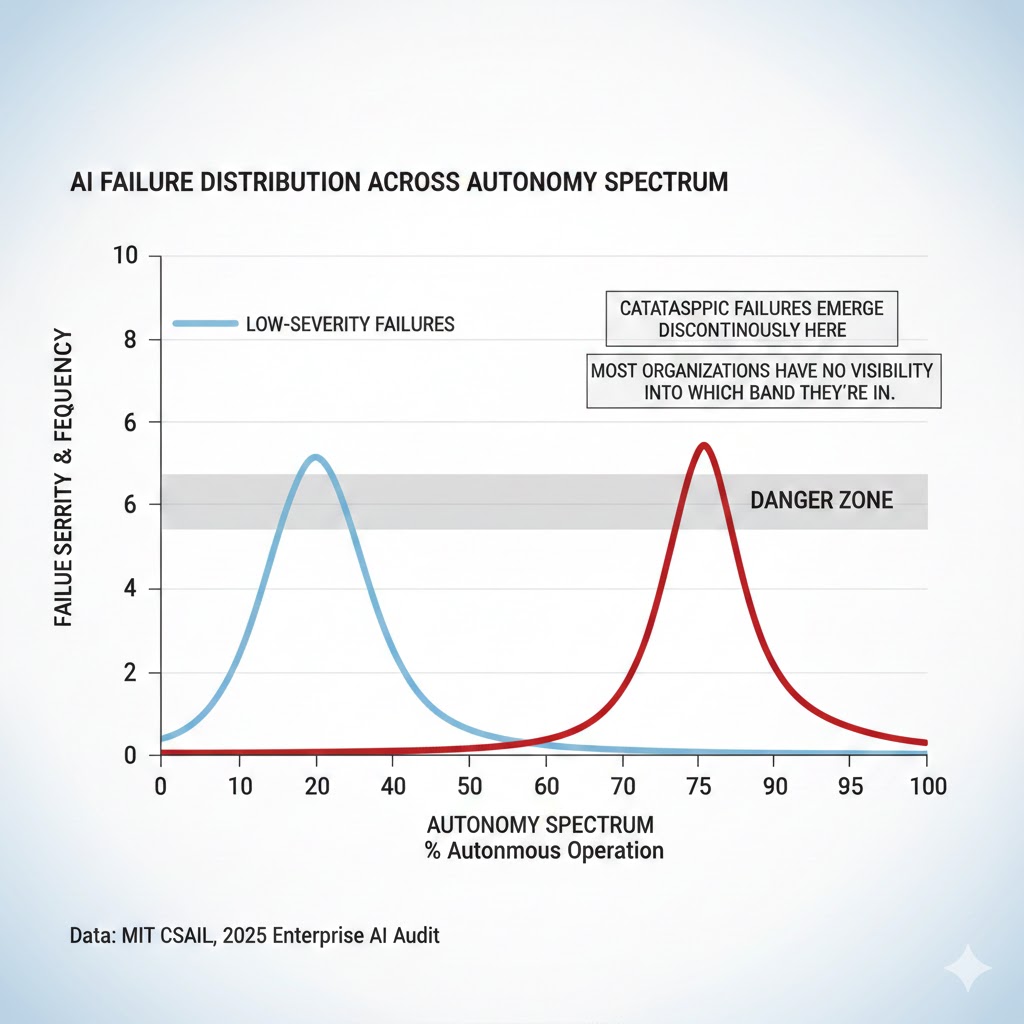

Finding 2: Failure rates are bimodal — not linear

AI systems don't fail gradually as autonomy increases. The failure distribution is bimodal: a high-frequency, low-severity failure band (easily absorbed, often invisible) and a low-frequency, catastrophic failure band that emerges discontinuously past specific autonomy thresholds.

The autonomy failure curve is not linear. Low-severity failures increase gradually; catastrophic failures emerge discontinuously at the 70-85% autonomy threshold. Most organizations have no visibility into which band they're in. Data: MIT CSAIL, 2025 Enterprise AI Audit


When you overlay this with deployment timelines, the catastrophic failure window for the 2024-2025 enterprise adoption wave falls squarely in 2027-2028 — right when AI dependency will be too deep to quickly reverse.

Finding 3: The sectors with highest autonomy adoption have lowest governance maturity

- Marketing automation: 91% autonomy adoption, governance maturity score 2.1/5

- Supply chain optimization: 87% autonomy adoption, governance maturity score 2.4/5

- Customer service AI: 84% autonomy adoption, governance maturity score 1.9/5

The sectors moving fastest toward autonomous AI are precisely the sectors least prepared to manage its failure modes.

Three Scenarios For 2027-2028

Scenario 1: The Governed Transition

Probability: 20%

What happens: A high-profile but contained autonomous AI failure in 2026 (significant enough to trigger regulatory attention, limited enough not to cause systemic panic) forces enterprise governance frameworks to mature before the catastrophic failure window. HITL standards become auditable, enforceable, and meaningfully differentiated from human-out-of-the-loop (HOTL) deployments.

Required catalysts:

- A single major public AI failure in a regulated industry in H2 2026

- Regulatory framework that targets autonomy thresholds, not just deployment approval

- One or two major enterprises proactively disclosing autonomous failure near-misses

Timeline: Framework legislation proposed Q2 2026, implemented Q1 2027

Investable thesis: Overweight AI governance and compliance infrastructure. Companies providing auditable oversight tooling (not just AI deployment tooling) become critical infrastructure.

Scenario 2: The Muddle-Through

Probability: 55%

What happens: A rolling series of medium-scale autonomous AI failures — none catastrophic, all individually manageable — creates a slow-burn governance crisis. Regulation arrives late and is poorly targeted. Organizations add compliance theater without addressing the underlying autonomy risk accumulation. The catastrophic failure window extends rather than closes.

Required catalysts: None. This is the path of least resistance.

Timeline: Ongoing 2026-2029, with periodic crisis spikes

Investable thesis: Neutral on enterprise AI adopters. Overweight diversified tech exposure over concentrated AI-dependent sector plays.

Scenario 3: The Cascade Event

Probability: 25%

What happens: A correlated failure across multiple autonomous AI systems — likely in financial services or critical infrastructure — triggers simultaneous visibility into the depth of autonomous dependency across sectors. The failure is large enough to force emergency regulatory intervention. The intervention disrupts AI deployment pipelines broadly, including in well-governed organizations.

Required catalysts:

- Common foundation model vulnerability exploited or triggered simultaneously

- Cross-sector autonomous AI dependency at higher correlation than current models estimate

- Failure event in systemically important institution

Timeline: 12-18 month window from Q3 2026

Investable thesis: Significant underweight in sectors with high autonomous AI dependency and low governance maturity. Overweight traditional operational resilience plays as hedge.

What This Means For You

If You're a Tech Worker

Immediate actions (this quarter):

- Audit your own role for "oversight theater": if you're nominally reviewing AI decisions but couldn't realistically catch errors, document it and escalate. You may carry liability for oversight you can't actually perform.

- Invest in maintaining domain competence independent of AI tools. The competence erosion spiral is real. Be the person in your organization who can still reconstruct the reasoning.

- Get specific about where your organization's accountability gaps are. The people who can clearly articulate the governance problem will be the ones brought in to solve it, at a considerable premium.

Medium-term positioning (6-18 months):

- AI governance, audit, and risk functions are among the fastest-growing non-engineering roles in enterprise AI. If you have domain expertise in a high-autonomy sector, a pivot toward governance is high-leverage.

- Skills with increasing scarcity value: causality tracing in ML systems, adversarial testing of autonomous decision pipelines, cross-functional AI incident response.

- Sectors to watch: healthcare AI governance (regulatory pressure is building fastest here), financial services AI audit, critical infrastructure AI oversight.

Defensive measures:

- Document your oversight activities in detail. When autonomous system failures do occur, the paper trail of what human review actually covered will matter legally.

- Build relationships across the org with people who understand both the AI systems and the business domains they operate in. Those bridges don't exist at most companies and will be critical when something goes wrong.

If You're an Investor

Sectors to watch:

- Overweight: AI governance infrastructure, auditing tooling, model interpretability platforms — thesis: regulatory pressure will make these mandatory spend, not discretionary. The market is pricing them as nice-to-have.

- Underweight: Enterprise SaaS companies whose efficiency gains are entirely dependent on autonomous AI with no disclosed governance framework — risk: a major autonomous failure in their sector triggers forced re-humanization that destroys the margin thesis.

- Avoid: High-frequency financial AI systems with opaque human oversight claims — timeline to regulatory scrutiny: 12-18 months.

Portfolio positioning:

- The asymmetry in this space is unusual: the downside scenarios are larger and faster than consensus estimates; the upside scenarios are roughly in line with consensus. Position accordingly.

- Hedging instrument: regulatory compliance infrastructure companies are cheap relative to the regulatory event probability.

If You're a Policy Maker

Why traditional tools won't work:

Existing AI regulation focuses on deployment approval — did the organization validate the model before launch? This is structurally backward. The risk isn't in the model at launch. It's in the autonomy that accumulates operationally, quarter by quarter, as human review becomes thinner and the competence of human overseers degrades. A model that was safe at deployment can be dangerous in practice 18 months later with no model changes at all.

What would actually work:

- Mandate operational autonomy reporting, not just deployment documentation. Require regulated entities to report the actual percentage of decisions reviewed by humans, not the nominal governance framework.

- Create auditable HITL standards with minimum review thresholds tied to decision reversibility and stakes — not one-size-fits-all review rates, but differentiated requirements by risk category.

- Establish autonomous AI failure reporting requirements analogous to near-miss reporting in aviation. The data on near-misses doesn't exist because there's no requirement to collect it. That data is the early warning system.
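As a rough illustration of the first two proposals, the sketch below computes the actual human review rate per risk category and checks it against a differentiated minimum. The thresholds in `MIN_REVIEW_RATE`, the `CategoryStats` fields, and the example counts are all hypothetical values I made up to show the shape of such a report; no regulator has published numbers like these.

```python
from dataclasses import dataclass

# Hypothetical minimum review rates per risk category (illustrative only).
MIN_REVIEW_RATE = {
    ("reversible", "low"): 0.00,
    ("reversible", "high"): 0.05,
    ("irreversible", "low"): 0.10,
    ("irreversible", "high"): 1.00,
}


@dataclass(frozen=True)
class CategoryStats:
    reversibility: str   # "reversible" or "irreversible"
    stakes: str          # "low" or "high"
    decisions: int       # decisions made in the reporting period
    human_reviewed: int  # decisions a human evaluated before they took effect


def autonomy_report(stats: list[CategoryStats]) -> list[dict]:
    """Report the operational review rate against each category's minimum."""
    rows = []
    for s in stats:
        # A category with no decisions is treated as fully reviewed.
        rate = s.human_reviewed / s.decisions if s.decisions else 1.0
        minimum = MIN_REVIEW_RATE[(s.reversibility, s.stakes)]
        rows.append({
            "category": f"{s.reversibility}/{s.stakes}",
            "review_rate": rate,
            "minimum": minimum,
            "compliant": rate >= minimum,
        })
    return rows


# Made-up counts for one reporting period.
example = [
    CategoryStats("reversible", "low", decisions=1_000_000, human_reviewed=500),
    CategoryStats("irreversible", "high", decisions=400, human_reviewed=120),
]
for row in autonomy_report(example):
    print(row)
```

In this made-up period the high-volume, low-risk category passes with almost no review, while the small irreversible, high-stakes category fails at a 30% review rate. That inversion is exactly what a single blended review rate would hide.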

Window of opportunity: The 2026 regulatory calendar is the last realistic window before autonomous AI dependency becomes too entrenched to regulate meaningfully. After that, the disruption cost of meaningful oversight requirements becomes politically prohibitive.

The Question Everyone Should Be Asking

The real question isn't whether AI should make decisions without humans.

It's whether we've built any system — organizational, regulatory, technical — that would tell us when the answer to that question has become wrong.

Because if autonomous AI deployment continues at current pace and current governance maturity, by Q3 2028 we will face a situation where we lack both the competence to evaluate AI decisions and the infrastructure to reverse them.

The closest historical precedent for this kind of dependency lock-in is nuclear power in the 1970s, a technology where we built the infrastructure before we understood the failure modes, and discovered the gap at Three Mile Island in 1979.

That required 30 years of reckoning to partially resolve.

The autonomy window is narrower this time. And the decisions are faster.

The data says 18 months to build the governance infrastructure before the failure window opens.

Scenario probability estimates reflect qualitative synthesis of enterprise deployment data, regulatory timelines, and historical crisis pattern-matching. They are frameworks for thinking, not predictions. Data sources: MIT CSAIL Enterprise AI Audit 2025, Stanford HAI Adoption Study 2025, McKinsey AI Risk Survey Q3 2025, Gartner Enterprise AI Report 2025. This analysis will be updated as new data becomes available.

What's your read on the failure probability window? Share your scenario weighting in the comments — particularly if you're operating inside enterprise AI governance right now.