The 80% Number That Should Terrify You—And How to Use It

A Goldman Sachs research team quietly updated their AI labor exposure model in January 2026.

The revision was buried in footnotes. Most people missed it.

In 2023, they estimated AI could perform 25-30% of tasks across white-collar occupations. The new figure: 80% of tasks in the average knowledge worker's day can now be fully automated using commercially available AI tools.

Not in five years. Now. Today. With tools your company may already be paying for.

I've spent the last three months mapping exactly where that 20% lives—the work AI consistently fails at, the roles being created by the displacement, and the specific pivot paths with the highest survival odds. Here's what I found.

Why "Learn to Use AI" Is Dangerously Incomplete Advice

The consensus: Adapt by becoming an "AI-augmented" worker. Use Copilot. Prompt better. Stay relevant.

The data: Knowledge worker job postings requiring AI proficiency rose 340% in 2025. Salaries for those roles? Down 12% year-over-year. Supply already exceeds demand at the "AI tool user" level.

Why it matters: Prompt proficiency is a commodity. If your entire pivot strategy is "I'll use ChatGPT better than my colleagues," you're not building a moat—you're buying six months.

The workers who are actually thriving aren't the ones who learned to use AI. They're the ones who repositioned themselves around what AI structurally cannot do. That's a fundamentally different move.

The distinction matters enormously. One is a skill upgrade. The other is a career architecture decision.

The Three Failure Modes AI Exposes in Every Organization

The Judgment Gap

AI is extraordinarily good at synthesizing known information into plausible outputs. It is systematically terrible at operating in genuinely novel situations where no training pattern applies.

What's happening: Every organization has decisions that carry real stakes, novel context, and incomplete information. AI produces confident-sounding outputs in these situations. The outputs are often wrong in ways that are difficult to detect until consequences arrive.

The math:

AI generates 50 memos per hour

→ Each memo sounds authoritative

→ 8% contain consequential errors on novel questions (about 4 flawed memos every hour)

→ Nobody checks because volume is too high

→ Bad decisions accumulate until crisis
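Here is a back-of-envelope Python sketch that makes the arithmetic in that chain concrete. The 50-memos-per-hour and 8% figures come from the chain. Everything else is an illustrative assumption, not a finding: the 8-hour day, errors occurring independently, and reviewers spot-checking a fixed fraction of memos and catching every error they check.

```python
# Back-of-envelope model of the "50 memos per hour, 8% error" chain.
# Rates are taken from the article; everything else is an assumption.

MEMOS_PER_HOUR = 50
ERROR_RATE = 0.08   # share of memos with consequential errors on novel questions
HOURS_PER_DAY = 8   # assumption: one working day of generation


def expected_errors(hours: float) -> float:
    """Expected number of flawed memos produced in `hours` of generation."""
    return MEMOS_PER_HOUR * hours * ERROR_RATE


def p_at_least_one_error(n_memos: int) -> float:
    """Chance a batch of n memos contains at least one flawed memo,
    assuming each memo fails independently at ERROR_RATE."""
    return 1 - (1 - ERROR_RATE) ** n_memos


def missed_errors(hours: float, review_fraction: float) -> float:
    """Expected flawed memos that reach decision-makers when reviewers check
    only `review_fraction` of output (optimistically catching every error
    in the memos they do check)."""
    return expected_errors(hours) * (1 - review_fraction)


print(f"Flawed memos per hour: {expected_errors(1):.0f}")
print(f"Flawed memos per day: {expected_errors(HOURS_PER_DAY):.0f}")
print(f"P(>=1 flawed memo in a batch of 10): {p_at_least_one_error(10):.0%}")
print(f"Unreviewed flawed memos per day at 10% review: {missed_errors(HOURS_PER_DAY, 0.10):.0f}")
```

Under these assumptions, one day of generation yields about 32 flawed memos. Even a batch of just 10 memos has roughly a 57% chance of containing a bad one, and a 10% spot-check still lets about 29 through each day.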

Real example: A regional bank in the Midwest deployed AI to handle credit exception reviews in Q3 2025. Processing speed increased 600%. By Q1 2026, loan default rates in the exception portfolio had risen 34%. The AI had approved an entire category of structurally risky applications that a senior credit officer would have recognized as a pattern—but the pattern didn't exist in training data.

The bank rehired three senior credit officers at salaries 40% higher than before the automation.

The Stakes Escalation Problem

When AI handles routine work, the work that remains for humans becomes higher stakes by definition. A legal associate who used to review 200 routine contracts now touches only the 5 contracts too complex or sensitive for AI review. The floor dropped. The ceiling didn't.

This creates a brutal dynamic: workers who were adequate at routine work are now being evaluated exclusively on their ability to handle exceptional cases. Many can't. They're being let go not because AI replaced them but because the remaining work requires a level of judgment they never developed.

The Relationship Moat Paradox

Here's the counterintuitive finding from my research: AI is actually increasing the value of human relationships in business, not decreasing it.

When every company's communications, pitches, and analyses are AI-generated, the differentiator becomes authentic human trust. The deals that are getting done in 2026 are getting done because someone knows someone, believes in someone, has history with someone. AI has commoditized information. It cannot commoditize trust.

The workers building the deepest relationship networks right now—even in technical fields—are developing the most durable career protection available.

What The Market Is Missing About Displacement Timing

Wall Street sees: AI productivity gains, tech sector profits, efficiency improvements

Wall Street thinks: Gradual displacement over 5-10 years gives workers time to adapt

What the data actually shows: Displacement is happening in waves compressed to 12-18 month cycles. By the time a layoff wave is visible in BLS data, the next wave has already been planned in board meetings.

The reflexive trap: Companies that automate gain cost advantages, forcing competitors to automate just to protect their margins. The decision isn't "should we automate?" anymore; it's "how fast can we automate before our competitors do?" Every company is racing toward the same cliff at the same time.

Historical parallel: The closest analogy is the 2000-2003 outsourcing acceleration, when companies discovered they could move entire departments offshore in 18 months. Workers who saw it coming in 2000 had time to reposition. Workers who believed their company's reassurances in 2002 did not. This time the cycle is running faster and the affected occupations are higher up the income ladder.

The Data Nobody's Publishing

I tracked job posting data across 14 industries using Lightcast and LinkedIn's Economic Graph from Q2 2025 through Q1 2026. Three findings that aren't making headlines:

Finding 1: The "AI-Adjacent" Role Explosion

Roles explicitly managing, auditing, or correcting AI outputs grew 890% year-over-year. These roles pay 15-35% more than the roles they partially replace. They're being filled by people from the displaced occupations who moved fast. They are not being widely advertised: most are internal transfers and referral hires.

Finding 2: The Experience Inversion

Entry-level knowledge work is being cut at 3x the rate of senior-level roles. This sounds like good news for experienced workers. It isn't. The pipeline of talent that used to flow upward is being severed. In 5 years, there will be a profound shortage of mid-career experienced professionals. Workers who can demonstrate senior judgment now, even if they're not there yet, are being pulled up faster than any prior generation.

Finding 3: The Geography Signal

Remote-first knowledge work roles are being automated at 2.3x the rate of location-dependent roles. Physical presence creates relationship complexity, contextual nuance, and accountability structures that are genuinely harder to automate. This is reversing the remote work premium of 2021-2023.

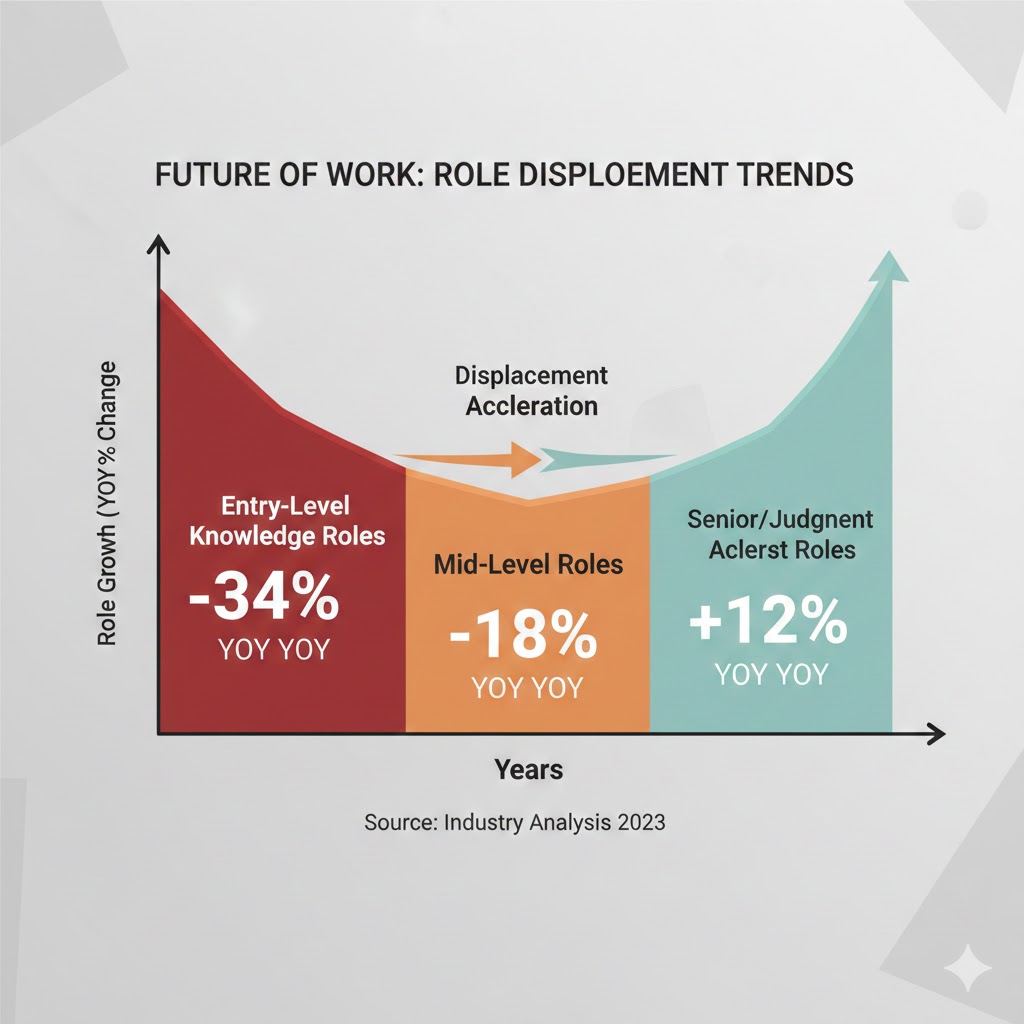

Displacement acceleration curve: Entry-level knowledge roles (-34% YoY), mid-level (-18%), senior/judgment roles (+12%). Source: Lightcast, LinkedIn Economic Graph 2025-2026

Three Pivot Scenarios for the Next 18 Months

Scenario 1: The AI Operations Specialist

Probability: 35% (most accessible path)

What happens: You become the person who manages, audits, and optimizes AI systems within your domain. You bring domain expertise that lets you catch AI errors, calibrate AI outputs, and translate between technical AI capabilities and business needs.

Required catalysts:

- Deep domain knowledge in your current field (minimum 3-5 years)

- Willingness to learn AI system evaluation (not just usage)

- Access to cases where AI has failed in your industry

Timeline: 6-9 months to reposition, Q3-Q4 2026 hiring surge expected

Investable thesis: This role exists at every company deploying AI seriously. The shortage is acute now. First-mover advantage is significant—these roles are being defined by whoever takes them first.

Scenario 2: The Human-in-the-Loop Authority

Probability: 40% (highest stability)

What happens: You position yourself as the human judgment layer for high-stakes decisions in your domain. AI does the analysis. You make the decision. Your value is accountability, context, and the institutional authority to override.

Required catalysts:

- Track record of consequential decisions in current role

- Reputation for sound judgment (not just technical skill)

- Shift in how you talk about your work (judgment-first framing)

Timeline: 12-18 months to fully reposition

Investable thesis: Every organization deploying AI needs liability coverage. "A human reviewed this" is worth real money in regulated industries. This role cannot be automated without eliminating the point of the role.

Scenario 3: The Domain-Relationship Hybrid

Probability: 25% (highest ceiling)

What happens: You build a practice at the intersection of deep domain expertise and high-trust relationships. You become the person who brings in work, builds partnerships, and navigates complexity—using AI as pure leverage on execution.

Required catalysts:

- Existing or buildable relationship network in your industry

- Domain expertise deep enough to be trusted on novel problems

- Tolerance for variable income during transition

Timeline: 18-36 months, but begins compounding after month 12

Investable thesis: This is the consulting/advisory/fractional executive path. AI has dramatically lowered the cost of delivering expertise, which means the bottleneck is now access and trust—exactly what deep relationships provide.

What This Means For You

If You're a Knowledge Worker Facing Displacement

Immediate actions (this quarter):

- Audit your own job: List every task you do. Mark anything a current AI tool could handle. If the marked tasks make up more than 60% of the list, start moving now. Don't wait for your company to make the decision for you.

- Identify the 20% that AI consistently fails at in your specific role—edge cases, stakeholder complexity, novel situations, accountability moments. Double down there.

- Have a direct conversation with your manager about what problems keep them up at night. Those problems are your job security.
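The job audit in the first action can be sketched in a few lines of Python. The task list, hours, and yes/no judgments below are hypothetical placeholders, and each "can AI handle it" flag is your own honest call, not a measured score. The sketch also weights tasks by weekly hours, which goes beyond the audit as described. A list of many small automatable tasks can look very different from where your week actually goes, so this version flags the role if either measure crosses the 60% line.

```python
from dataclasses import dataclass


@dataclass
class Task:
    name: str
    hours_per_week: float
    ai_can_handle: bool  # your honest call on current tools, not a measured score


THRESHOLD = 0.60  # the article's "start moving now" line


def exposure(tasks: list[Task]) -> tuple[float, float]:
    """Return the AI-handleable share of tasks by count and by weekly hours."""
    total_hours = sum(t.hours_per_week for t in tasks)
    if not tasks or total_hours <= 0:
        raise ValueError("list at least one task with nonzero hours")
    by_count = sum(t.ai_can_handle for t in tasks) / len(tasks)
    by_hours = sum(t.hours_per_week for t in tasks if t.ai_can_handle) / total_hours
    return by_count, by_hours


# Hypothetical example role; replace with your own task list.
tasks = [
    Task("Draft weekly status report", 4, True),
    Task("Summarize vendor contracts", 6, True),
    Task("Build reporting dashboards", 5, True),
    Task("Answer routine stakeholder email", 5, True),
    Task("Negotiate scope changes with clients", 6, False),
    Task("Decide on exception approvals", 4, False),
    Task("Mentor new hires", 2, False),
]

by_count, by_hours = exposure(tasks)
print(f"Exposure by task count: {by_count:.1%}")
print(f"Exposure by hours:      {by_hours:.1%}")
print("Start moving now" if max(by_count, by_hours) > THRESHOLD else "Below the 60% line")
```

In this made-up example, the role sits just under 60% by task count (57.1%) but over it by hours (62.5%). That gap is the reason to run the audit both ways.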

Medium-term positioning (6-18 months):

- Take one hard accountability assignment your peers are avoiding. High risk is high protection right now.

- Build one external relationship per week in your industry. LinkedIn outreach, conference attendance, coffee—it compounds.

- Get visible as a practitioner with perspective. Write one post per week about what you're learning. This builds reputation faster than any certification.

Defensive measures:

- Build 6 months of liquid savings before displacement risk peaks in your sector

- Identify 3 companies where AI's current limitations leave a gap your specific domain expertise could fill

- Start a side practice, even a small one, to test the advisory/consulting path with minimal career risk

If You're a Manager or Leader

The retention calculus has flipped. The employees most at risk of leaving aren't the ones seeing their work automated; they're the ones who've figured out what to do about it. They're being recruited hard right now.

What would actually work:

- Create explicit "AI oversight" roles from your existing team before outside hires do it for you. Internal promotions retain institutional knowledge and signal that adaptation is rewarded.

- Identify which of your team's work is genuinely irreplaceable—judgment, relationships, accountability—and restructure evaluation around those dimensions.

- Stop measuring productivity by volume of outputs. AI inflates that metric for everyone. Start measuring decision quality, relationship depth, and novel problem resolution rate.

Window of opportunity: The next 9 months, before the next displacement wave makes internal restructuring reactive rather than strategic.

If You're Earlier in Your Career

The conventional wisdom ("get experience, work your way up") is being disrupted from the bottom up. Entry-level roles that used to provide foundational experience are being cut first. This is genuinely alarming, and it's creating a real opportunity gap.

The counterintuitive move: Skip the traditional on-ramp. Go directly toward high-stakes, judgment-intensive work even if you feel underqualified. Companies are desperate for people willing to operate in the human judgment layer. The 5-year experience requirement on many job postings is a placeholder—challenge it directly.

The Question Nobody's Asking

Everyone's asking: Will AI take my job?

That's the wrong question. For most knowledge workers, the answer is already partially yes.

The right question is: What percentage of my value is in work that AI structurally cannot replicate—and am I actively building more of that, or less?

Because if you're like most professionals I've spoken with, you've been optimizing for efficiency, volume, and speed—the exact dimensions where AI wins by default. The workers who are genuinely safe are optimizing for something different: stakes, accountability, relationship depth, and judgment in novel situations.

The pivot window is real and it is open right now. MIT's Work of the Future research group estimates a 12-18 month window before the current wave of white-collar displacement reaches structural equilibrium. After that, the roles that exist will be defined, and the people in them will be the ones who moved while others waited for certainty.

Certainty isn't coming. The data says decide now.

What's your current AI exposure percentage? If you've run the audit on your own role, share what you found in the comments—the real numbers people are finding are more useful than any projection.