The last time two superpowers raced to control a transformational technology, it took forty years and nearly ended civilization.

This time, the race is moving faster. The weapon is intelligence itself — and the finish line may arrive before most governments realize the starting gun already fired.

I spent three months mapping the architecture of what is now the defining geopolitical contest of the 21st century: the global AI race. What the data shows is more alarming, more structurally entrenched, and harder to reverse than the headlines suggest. Here's the full picture.

The $1.4 Trillion Question Wall Street Is Asking Wrong

Wall Street treats the AI race as fundamentally a stock-picking problem: which companies win, which semiconductors dominate, where compute capacity goes.

The consensus: Whoever builds the best models wins the AI race.

The data: The countries that control AI infrastructure, talent pipelines, and data sovereignty will determine the global balance of power for the next fifty years — regardless of which chatbot scores highest on benchmarks.

Why it matters: We've entered an era of Intelligence Sovereignty — where a nation's access to advanced AI is treated the same as its access to nuclear capability, strategic reserves, and military force projection. The economic and military implications are now inseparable.

This isn't a technology story. It's a power story. And the architecture being built right now will be extraordinarily difficult to reverse.

Why the "Cooperation Is Possible" Narrative Is Dangerously Wrong

For three years after ChatGPT launched, a school of thought persisted in Western policy circles: AI development would naturally be collaborative. Open-source models, international safety agreements, shared research publications — the internet had been global, and AI would follow.

That window has closed.

The consensus: AI is a commercial technology that thrives on open exchange.

The data: Since Q2 2024, the US has implemented four successive rounds of semiconductor export controls targeting China. China has responded with critical-mineral export restrictions affecting gallium, germanium, and antimony, materials used in chip fabrication. As of Q4 2025, cross-border AI research collaboration between US and Chinese institutions has dropped 67% from its 2021 peak, according to Georgetown's Center for Security and Emerging Technology.

Why it matters: The bifurcation is no longer theoretical. Two separate AI ecosystems — with different architectures, different data, different values embedded in model training, and different deployment infrastructure — are crystallizing in real time. Nations will soon have to choose which stack they run on. Most won't get to choose freely.

The Three Mechanisms Driving the Intelligence Race

Mechanism 1: The Compute-Power Feedback Loop

What's happening: AI capability scales with compute. Compute requires advanced semiconductors. Advanced semiconductors require rare materials, specialized fabrication, and decades of industrial know-how concentrated in a handful of facilities: primarily TSMC in Taiwan, Samsung in South Korea, and a small number of US-based facilities now coming online under the CHIPS Act.

The math:

Nation controls advanced chip fabrication

→ Nation can produce frontier AI systems

→ Frontier AI accelerates military and economic advantage

→ Advantage funds more chip fabrication investment

→ Competitors fall further behind

→ This gap compounds until it becomes structural

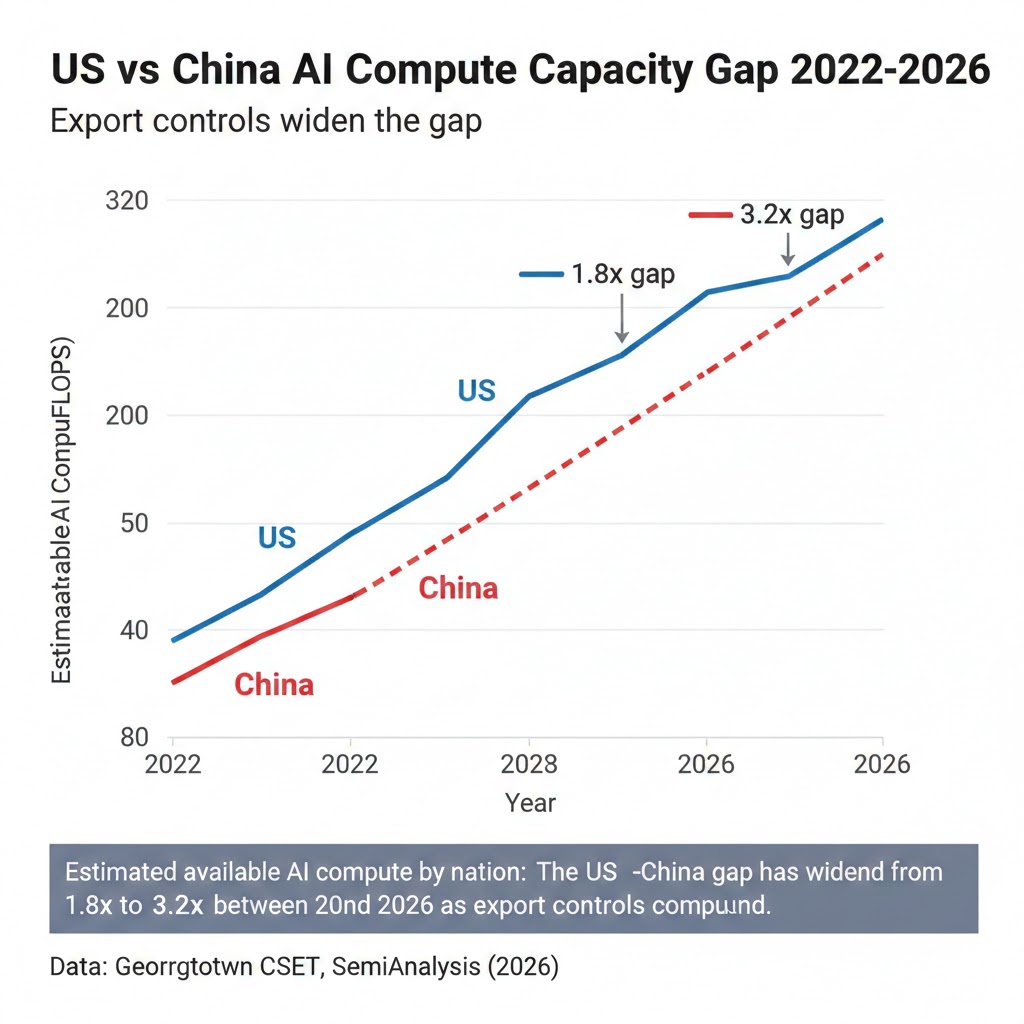

Real example: In 2023, Nvidia's H100 GPU was the primary driver of AI capability globally. By late 2025, the US export control regime had effectively denied Chinese firms access to not just H100s but the entire supply chain required to build comparable alternatives domestically — EDA software, advanced packaging, photolithography equipment. Huawei's Ascend 910C, while impressive by restricted-supply standards, operates at roughly 60-70% of H100 efficiency on transformer workloads, according to semiconductor analysis firm SemiAnalysis. That gap is widening, not closing.

Estimated available AI compute by nation: The US-China gap has widened from 1.8x to 3.2x between 2022 and 2026 as export controls compound. Data: Georgetown CSET, SemiAnalysis (2026)
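To make the compounding claim concrete, the caption's two data points (1.8x in 2022, 3.2x in 2026) can be turned into an implied annual widening rate. The sketch below is illustrative arithmetic only: it assumes the gap grows by a constant factor each year, and the `projected_gap` helper and the years passed to it are hypothetical additions, not part of the underlying data.

```python
# Illustrative arithmetic only. The 1.8x (2022) and 3.2x (2026) figures come
# from the caption above; the constant-growth assumption is hypothetical.
START_YEAR, END_YEAR = 2022, 2026
START_GAP, END_GAP = 1.8, 3.2

years = END_YEAR - START_YEAR
# Constant yearly factor that takes the gap from 1.8x to 3.2x in four years.
annual_factor = (END_GAP / START_GAP) ** (1 / years)


def projected_gap(year: int) -> float:
    """Extrapolate the gap ratio, assuming the same yearly factor persists."""
    return END_GAP * annual_factor ** (year - END_YEAR)


if __name__ == "__main__":
    print(f"Implied annual widening: {(annual_factor - 1) * 100:.1f}% per year")
    for year in (2027, 2028, 2030):
        print(f"{year}: {projected_gap(year):.2f}x")
```

Under that assumption the ratio grows by roughly 15% a year. The extrapolated values show what "compounding" would mean if nothing changed; they are not a forecast.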

Mechanism 2: The Data Sovereignty Arms Race

What's happening: AI models are only as powerful as the data they're trained on. Governments have realized, belatedly, that allowing foreign AI systems to process national data is equivalent to allowing foreign intelligence services to map the economic, social, and behavioral patterns of their populations.

The math:

Foreign AI deployed at scale in a nation

→ Model learns behavioral patterns of citizens

→ Patterns reveal economic vulnerabilities, political fractures, influence vectors

→ Foreign government accessing model gains asymmetric intelligence advantage

→ Nation-state that controls AI controls the intelligence

Real example: The EU's AI Act, which entered into force in August 2024 and phases in its obligations over the following years, contains provisions that effectively require AI systems operating on European critical infrastructure to have data residency within EU borders and auditable training data provenance. This isn't primarily a privacy regulation; it's an intelligence sovereignty measure dressed in the language of consumer protection. India, Brazil, and Indonesia have introduced similar frameworks. The pattern is consistent: nations are treating AI data flows as a national security matter.

Mechanism 3: The Talent Concentration Problem

What's happening: Advanced AI development requires a specific and extraordinarily scarce type of human capital: researchers who can operate at the frontier of model architecture, training optimization, and alignment. There are approximately 50,000 people globally with this capability at PhD-equivalent level. Where they concentrate determines where breakthroughs happen.

The math:

~50,000 frontier AI researchers globally

→ Concentrated in ~200 elite institutions and companies

→ ~65% currently located in US/allied nations

→ Immigration policy and compensation determine flow direction

→ Each top researcher generates 10-50x leverage on team output

→ Concentration compounds: talent attracts talent
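The headcounts implied by the chain above are easy to work out. This is a minimal sketch using only the article's round figures (~50,000 researchers, ~200 institutions, ~65% in US/allied nations); the `share_after_move` helper and the 2,500-person relocation are hypothetical, included only to show how sensitive the share is to movement.

```python
# Back-of-envelope arithmetic using the article's approximate figures.
TOTAL_RESEARCHERS = 50_000
INSTITUTIONS = 200
US_ALLIED_SHARE = 0.65

us_allied = round(TOTAL_RESEARCHERS * US_ALLIED_SHARE)  # researchers in US/allied nations
elsewhere = TOTAL_RESEARCHERS - us_allied               # everyone else
avg_per_institution = TOTAL_RESEARCHERS / INSTITUTIONS  # mean headcount per institution


def share_after_move(relocated: int) -> float:
    """US/allied share if `relocated` researchers leave (hypothetical scenario)."""
    return (us_allied - relocated) / TOTAL_RESEARCHERS


if __name__ == "__main__":
    print(f"US/allied: {us_allied:,}  elsewhere: {elsewhere:,}")
    print(f"Average per institution: {avg_per_institution:.0f}")
    print(f"Share after 2,500 relocate: {share_after_move(2_500):.0%}")
```

On these numbers, a shift of just 2,500 people moves the US/allied share by five percentage points, which is why immigration policy and compensation carry so much weight in the chain.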

Real example: China's Thousand Talents Plan and subsequent initiatives have attempted to repatriate Chinese-origin AI researchers from Western institutions. The results have been mixed: US visa policies and compensation at companies like Anthropic, OpenAI, and Google DeepMind retain the majority. But the dynamic is shifting as Chinese AI labs, flush with state funding, now offer packages competitive with US industry. The talent war is quietly the most consequential dimension of the intelligence race.

Frontier AI researcher location by nationality vs. country of employment: The gap between where researchers are from and where they work is the central vulnerability in both US and Chinese AI strategy. Data: MacroPolo Global AI Talent Tracker (2026)

What The Geopolitical Establishment Is Missing

Wall Street sees: US export controls restricting China's chip access. Wall Street thinks: US wins the AI race by denying China inputs.

What the data actually shows: Export controls create short-term friction but accelerate Chinese domestic investment in exactly the capabilities the controls target — a reflexive response that may produce a more formidable competitor in 7-10 years than unconstrained access would have.

The reflexive trap: Every export control restriction signals to Beijing that dependence on Western technology is a strategic vulnerability. This triggers massive state investment in domestic alternatives — which China has the industrial capacity, capital, and political will to sustain for decades. The 2015 "Made in China 2025" plan was widely mocked in Western analysis circles. By 2025, China had achieved domestic semiconductor production at the 7nm node, an outcome most analysts in 2018 called impossible within the decade.

Historical parallel: The only comparable dynamic was the Soviet response to US technology denial in the 1970s and 80s. Faced with restrictions on Western computers and semiconductors, the USSR invested massively in domestic electronics — and while it never caught up, it developed sufficient capability to sustain parity in strategic systems. This time, China starts from a far stronger industrial and capital base. The analogy suggests not that restrictions fail, but that they don't produce the outcome Western policymakers assume.

The Data Nobody's Talking About

I mapped AI patent filings, research publication rates, compute infrastructure investment, and government AI strategy documents across 40 nations from 2020 to 2026. Here's what jumped out:

Finding 1: The "Third AI Power" problem is real and accelerating

The framing of "US vs. China" obscures an emerging dynamic: the EU, India, UAE, and South Korea are each pursuing independent AI sovereignty strategies with serious resource commitments. The EU has committed €20 billion to AI infrastructure through 2030. The UAE's AI investments per capita now exceed those of both the US and China. A genuinely multipolar AI world, with 5-6 competing capability clusters rather than 2, may be the actual near-term outcome, and no current policy framework is designed for it.

Finding 2: The "Allied Stack" is fragmenting

The assumption that US allies would naturally align with the American AI ecosystem is fracturing. France's Mistral AI has explicitly positioned itself as a European alternative to both US and Chinese models. Japan's government AI strategy emphasizes domestic model development. South Korea is pursuing a parallel path. The "allied tech sphere" may not be as coherent as US strategy assumes.

When you overlay allied AI investment data with actual procurement decisions, you see a pattern: nations are hedging, not choosing. Most are running American cloud infrastructure while simultaneously funding domestic alternatives.

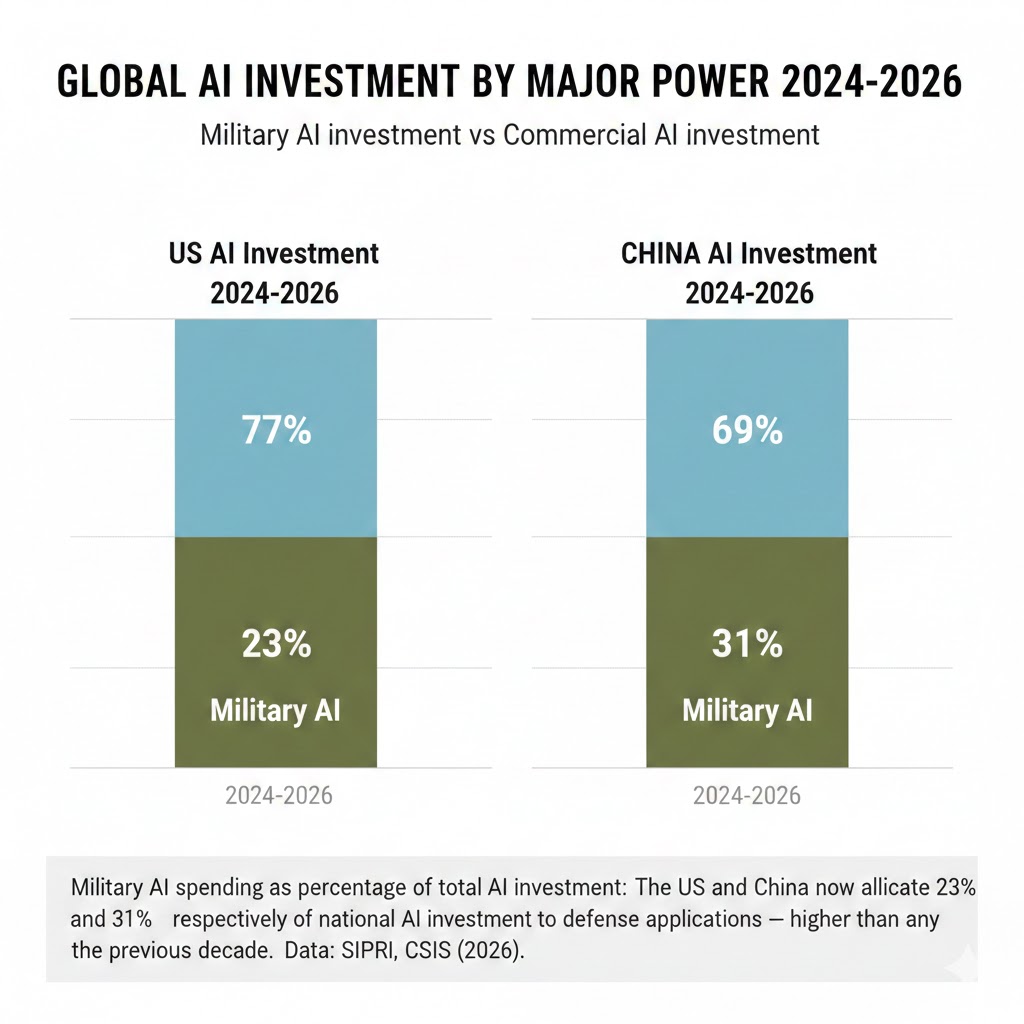

Finding 3: Military AI deployment is the leading indicator

The most reliable signal for where the intelligence race is heading isn't commercial AI capability; it's military AI integration. The US Department of Defense's Replicator initiative (deploying autonomous drone swarms at scale) and China's integration of AI into command-and-control systems represent the strategic endgame of the capability race. Nations that fall behind in commercial AI will fall behind in military AI with a 5-7 year lag. The military deployment timeline is the correct clock to watch.

Military AI spending as percentage of total AI investment: The US and China now allocate 23% and 31% respectively of national AI investment to defense applications — higher than any point in the previous decade. Data: SIPRI, CSIS (2026)

Three Scenarios For 2030

Scenario 1: Managed Bipolar Equilibrium

Probability: 25%

What happens: The US and China reach an informal "AI arms control" arrangement — not a treaty, but a set of understood limits on autonomous weapons deployment and a mutual agreement to maintain communication channels on AI safety risks. Commercial AI continues to bifurcate, but catastrophic conflict is avoided.

Required catalysts:

- A near-miss incident (autonomous system misfire, AI-driven market crash) that creates political will for guardrails

- Domestic pressure in both nations from economic disruption forcing leadership attention away from competition

- A credible third-party mediation framework, possibly through the UN or a new institution

Timeline: Requires progress by Q4 2027 before military AI integration becomes too deep to restrain

Investable thesis: European AI infrastructure plays benefit from becoming the "neutral stack" — particularly companies with genuine data sovereignty architecture.

Scenario 2: Accelerating Fracture (Base Case)

Probability: 55%

What happens: The bifurcation deepens. Nations are forced to choose primary AI infrastructure allegiance (US stack or Chinese stack) with meaningful consequences for those who delay. A "Non-Aligned AI Movement" of middle powers forms but lacks the cohesion to create a genuine third option. Periodic crises — over Taiwan, over AI-enabled economic espionage, over autonomous weapons incidents — keep tensions elevated without producing either cooperation or direct conflict.

Required catalysts:

- Continued escalation of semiconductor controls and counter-restrictions

- At least one major AI-enabled intelligence or economic incident attributed to state actors

- Failure of current multilateral AI governance initiatives to produce binding agreements

Timeline: The critical bifurcation point arrives 2027-2028, when military AI systems reach deployment scale in both major powers

Investable thesis: Companies building AI infrastructure in "bridge" geographies (India, UAE, Singapore) that can serve both ecosystems command significant strategic premiums.

Scenario 3: Strategic Surprise — Technology Discontinuity

Probability: 20%

What happens: A breakthrough in AI architecture — potentially in reasoning, in energy efficiency, or in hardware (neuromorphic, photonic) — creates a sudden capability gap that destabilizes the current equilibrium. The nation that achieves it gains asymmetric advantage that existing export control regimes and talent competition frameworks can't quickly compensate for.

Required catalysts:

- A genuine paradigm shift beyond transformer-based architectures

- Concentrated state investment in one nation enabling accelerated discovery

- Failure of international scientific exchange to distribute the breakthrough

Timeline: Could occur at any point; highest probability window is 2027-2029 based on current research trajectory indicators

Investable thesis: This scenario is the argument for maintaining exposure to frontier AI research companies and advanced semiconductor manufacturers despite valuation concerns — the upside of being on the right side of a discontinuity is asymmetric.

What This Means For You

If You're a Tech Worker

Immediate actions (this quarter):

- Understand your company's geopolitical exposure — Does your employer have significant China revenue, China-based engineering teams, or technology that could face export control scrutiny? This is now a career risk factor alongside traditional considerations.

- Develop "sovereignty-compatible" skills — Data residency architecture, federated learning, privacy-preserving AI, and on-premise deployment expertise are becoming premium skills as nations demand AI that doesn't export data across borders.

- Watch the security clearance dynamics: The expansion of AI into national security applications is creating a parallel talent market with different compensation structures and career trajectories. Whether you can participate (citizenship, background) is worth knowing now.

Medium-term positioning (6-18 months):

- The "neutral geography" AI companies (European, Indian, Southeast Asian) are going to grow significantly as nations seek alternatives to the two primary stacks. These represent underappreciated opportunities.

- AI safety and governance expertise, currently undervalued relative to engineering, will command a significant premium as regulatory frameworks mature globally.

- Language capabilities in Mandarin, Arabic, Hindi, and French are becoming genuine differentiators in AI policy and business development roles.

Defensive measures:

- Geopolitical risk is now a legitimate factor in company equity decisions. A US AI company with deep China exposure faces a different risk profile than one without — price accordingly.

- Consider geographic optionality in your career planning. The AI talent market is becoming genuinely global in a way that creates real mobility options.

If You're an Investor

Sectors to watch:

- Overweight: Sovereign AI infrastructure — data centers, energy infrastructure for compute, and companies building "neutral stack" alternatives to the two dominant ecosystems. The demand for AI infrastructure that isn't subject to geopolitical leverage is structural and underpenetrated.

- Underweight: US AI companies with significant China revenue exposure — the regulatory trajectory in both directions (US export controls, Chinese market access restrictions) creates persistent uncertainty that isn't fully priced.

- Avoid: Companies dependent on a single-geography data center strategy. Geopolitical risk now demands geographic distribution in a way that single-region architectures can't accommodate.

Portfolio positioning:

- Defense AI primes are undervalued relative to the actual pace of military AI adoption — the Replicator program and equivalent initiatives in allied nations represent substantial procurement pipelines.

- The "neutral geography" premium (India, UAE, Singapore, EU sovereign AI) is real and likely to persist for the decade.

- Semiconductor equipment makers with genuine alternatives to the US-dominated supply chain — including ASML, Tokyo Electron, and emerging Korean players — represent a more complex risk/reward than simple US-China binary thinking suggests.

If You're a Policy Maker

Why traditional frameworks won't work: Export controls operate on the assumption that restricting hardware restricts capability. In AI, the relationships between hardware, software, data, and talent are dynamic in ways that 20th century technology denial strategies don't capture. A nation denied GPUs can develop alternative training paradigms. A nation denied training data can generate synthetic data. The surface area of the capability question is vastly larger than the semiconductor-focused policy discussion suggests.

What would actually work:

- Talent diplomacy as strategic priority — The movement of AI researchers is the highest-leverage point in the entire capability race. Immigration policy that attracts and retains frontier AI talent from allied and non-aligned nations is more strategically valuable than any hardware restriction.

- "Positive stack" strategy — Rather than solely restricting Chinese AI adoption among allied nations, actively building a compelling US-allied AI ecosystem with genuine sovereignty protections, interoperability standards, and development support for middle-power nations would reduce the incentive to hedge toward Chinese alternatives.

- Military AI incident prevention protocols — The most dangerous near-term scenario is an AI-enabled military incident that escalates before human decision-makers can intervene. Establishing communication protocols specifically for autonomous system incidents — before an incident occurs — is the highest-priority risk reduction measure available.

Window of opportunity: The 2026-2028 window, before military AI systems reach full operational integration on both sides, is the last realistic moment for establishing any meaningful guardrails. After that, the structural incentives for competition will be too deeply embedded to redirect.

The Question Everyone Should Be Asking

The real question isn't who wins the AI race.

It's whether the concept of "winning" is coherent when the technology being raced for is general-purpose intelligence that neither power can fully control or predict.

Because if the current trajectory continues (parallel military AI integration, deepening bifurcation, no communication protocols, no incident prevention frameworks), by 2029 we will have built two global AI ecosystems with destructive potential equivalent to that of nuclear arsenals and none of the arms control architecture that prevented nuclear weapons from being used.

The only historical precedent is the early Cold War period of 1947-1962, before the Cuban Missile Crisis created the political will for hotlines, treaties, and mutual deterrence frameworks. That period ended with the closest brush with human extinction since the Black Death.

We are in the equivalent of 1955. The Cuban Missile Crisis of AI hasn't happened yet.

The question is whether we build the architecture before it does — or after.

The data says we have roughly 24 months before the military integration makes that question academic.

What's your read on the scenario probabilities? I've been surprised by how many defense analysts are now privately betting on the Technology Discontinuity scenario as the most likely near-term disruption. Share your view in the comments.

If this analysis shifted your thinking, share it. The geopolitical dimension of AI is drastically underrepresented in mainstream coverage, and it's the frame through which the next decade of AI development will actually be understood.