Problem: Your AI App Is Live but Not Legally Compliant

You've shipped an AI feature or product serving EU users. GDPR has been around since 2018, and the EU AI Act started applying in 2024. But most AI apps weren't built with either in mind.

Fines for GDPR violations reach €20M or 4% of global annual turnover. EU AI Act penalties for high-risk violations go even higher. Ignorance is not a defense.

You'll learn:

- How to classify your AI app's risk level under the EU AI Act

- What GDPR obligations are specific to AI systems

- How to implement audit logging, consent flows, and data minimization in code

Time: 20 min | Level: Intermediate

Why This Happens

Most developers treat compliance as a legal team problem. But both GDPR and the EU AI Act impose technical obligations — things only engineers can actually implement: explainability hooks, data retention policies, opt-out mechanisms, and audit trails baked into the system.

The EU AI Act became fully applicable in August 2026, with high-risk system obligations phased in. If you're building anything that influences hiring, credit, healthcare, education, or law enforcement in the EU, you're likely in scope.

Common gaps:

- Personal data passed directly to third-party LLM APIs without a data processing agreement

- No mechanism for users to request deletion of their data used in AI outputs

- No logging of model decisions for audit or challenge purposes

- Automated decisions made without human oversight or opt-out paths

Solution

Step 1: Classify Your System Under the EU AI Act

The EU AI Act uses a tiered risk model. Your obligations depend on where you land.

Unacceptable risk → Prohibited (e.g., social scoring, real-time biometric surveillance)

High risk → Full compliance obligations (hiring, credit, education, critical infra)

Limited risk → Transparency obligations (chatbots, deepfakes)

Minimal risk → No specific obligations (spam filters, AI in games)

Run this classification check first:

# compliance/risk_classifier.py

HIGH_RISK_DOMAINS = [

"hiring", "credit_scoring", "education_evaluation",

"healthcare_diagnosis", "law_enforcement", "border_control",

"critical_infrastructure"

]

LIMITED_RISK_INDICATORS = [

"chatbot", "content_generation", "recommendation",

"image_synthesis", "voice_synthesis"

]

def classify_risk(domain: str, interacts_with_humans: bool) -> str:

"""

Returns risk tier based on EU AI Act Annex III.

Used to determine which compliance obligations apply.

"""

if domain in HIGH_RISK_DOMAINS:

return "HIGH_RISK"

if interacts_with_humans or domain in LIMITED_RISK_INDICATORS:

return "LIMITED_RISK"

return "MINIMAL_RISK"

Expected: A clear tier that drives the rest of your compliance work.

If your domain is ambiguous: Err toward HIGH_RISK. Regulators apply the broader interpretation.

Step 2: Audit Your Data Flows for GDPR

Every personal data point that touches your AI pipeline needs a lawful basis, a retention limit, and a deletion path.

# compliance/data_flow_audit.py

from dataclasses import dataclass

from enum import Enum

from datetime import timedelta

class LawfulBasis(Enum):

CONSENT = "consent"

CONTRACT = "contract"

LEGITIMATE_INTEREST = "legitimate_interest"

LEGAL_OBLIGATION = "legal_obligation"

@dataclass

class DataFlowRecord:

data_type: str # e.g., "user_message", "profile_embedding"

lawful_basis: LawfulBasis

retention_period: timedelta

third_parties: list[str] # LLM providers, vector DBs, logging services

has_deletion_path: bool

# Example: Audit record for a chatbot storing conversation history

conversation_flow = DataFlowRecord(

data_type="user_message",

lawful_basis=LawfulBasis.CONSENT,

retention_period=timedelta(days=90),

third_parties=["openai", "pinecone"], # These need DPAs in place

has_deletion_path=True # Must be True for personal data

)

Critical check: If third_parties contains any LLM API provider, you need a signed Data Processing Agreement (DPA) with them. OpenAI, Anthropic, Google, and Mistral all offer DPAs — but you have to opt in and sign them explicitly.

If has_deletion_path is False: Stop here. GDPR Article 17 (right to erasure) is non-negotiable for consent-based processing.

Step 3: Implement Consent and Opt-Out

For AI features that process personal data under the CONSENT basis, the consent mechanism must be granular, withdrawable, and logged.

// lib/consent-manager.ts

interface ConsentRecord {

userId: string;

feature: string; // e.g., "ai_personalization", "ai_chat_history"

granted: boolean;

timestamp: string; // ISO 8601

ipAddress: string; // For audit trail

version: string; // Consent text version — bump when wording changes

}

async function recordConsent(

userId: string,

feature: string,

granted: boolean

): Promise<void> {

const record: ConsentRecord = {

userId,

feature,

granted,

timestamp: new Date().toISOString(),

ipAddress: getRequestIp(), // Log for compliance audit

version: CONSENT_TEXT_VERSION // "v2.1" — track this in your codebase

};

// Store in append-only log — do NOT update in place

await db.consentLog.insert(record);

// Propagate withdrawal immediately

if (!granted) {

await purgeUserAiData(userId, feature);

}

}

Why append-only: GDPR requires proof of consent at the time it was given. Overwriting records destroys your audit trail.

If withdrawing consent: The purgeUserAiData call must cascade to all third-party stores — your vector DB, any fine-tuning datasets, and cached embeddings. This is the part teams miss most.

Step 4: Add Decision Logging for High-Risk Systems

If your system is HIGH_RISK, the EU AI Act requires you to log enough information to explain and audit every decision.

# compliance/decision_logger.py

import json

import hashlib

from datetime import datetime

def log_ai_decision(

user_id: str,

decision_type: str, # e.g., "loan_approval", "candidate_ranking"

model_id: str,

inputs: dict,

output: dict,

confidence: float

) -> str:

"""

Creates a tamper-evident audit record for each AI decision.

Required for EU AI Act Article 12 (record-keeping).

"""

record = {

"timestamp": datetime.utcnow().isoformat(),

"user_id": hashlib.sha256(user_id.encode()).hexdigest(), # Pseudonymize

"decision_type": decision_type,

"model_id": model_id,

# Store only what's needed to reconstruct the decision — not raw PII

"input_summary": summarize_inputs(inputs),

"output": output,

"confidence": confidence,

"human_review_required": confidence < 0.85 # Flag for oversight

}

record["checksum"] = hashlib.sha256(

json.dumps(record, sort_keys=True).encode()

).hexdigest() # Detect tampering

store_to_immutable_log(record)

return record["checksum"]

Why pseudonymize inputs: You still need to investigate complaints, but storing raw PII in logs creates a secondary GDPR obligation. Use a reversible pseudonym keyed off a separate secrets store.

If human_review_required is True: Your system must route this to a human before acting. EU AI Act Article 14 mandates human oversight for consequential high-risk decisions.

Step 5: Add the Required Transparency Disclosures

For LIMITED_RISK systems (chatbots, generative AI), GDPR Article 13 and EU AI Act Article 50 both require disclosure.

// components/AiDisclosureBanner.tsx

// Show this before the user interacts with any AI feature

export function AiDisclosureBanner() {

return (

<div role="note" aria-label="AI disclosure">

<p>

This feature uses AI to generate responses. It may make mistakes.

Outputs do not constitute professional advice.

</p>

<p>

Your messages are processed by [Provider] under our{" "}

<a href="/privacy">Privacy Policy</a>. You can{" "}

<a href="/settings/ai">manage or delete your AI data</a> at any time.

</p>

</div>

);

}

For fully automated decisions with legal or similarly significant effects, GDPR Article 22 also requires you to offer a human review option — not just a disclosure.

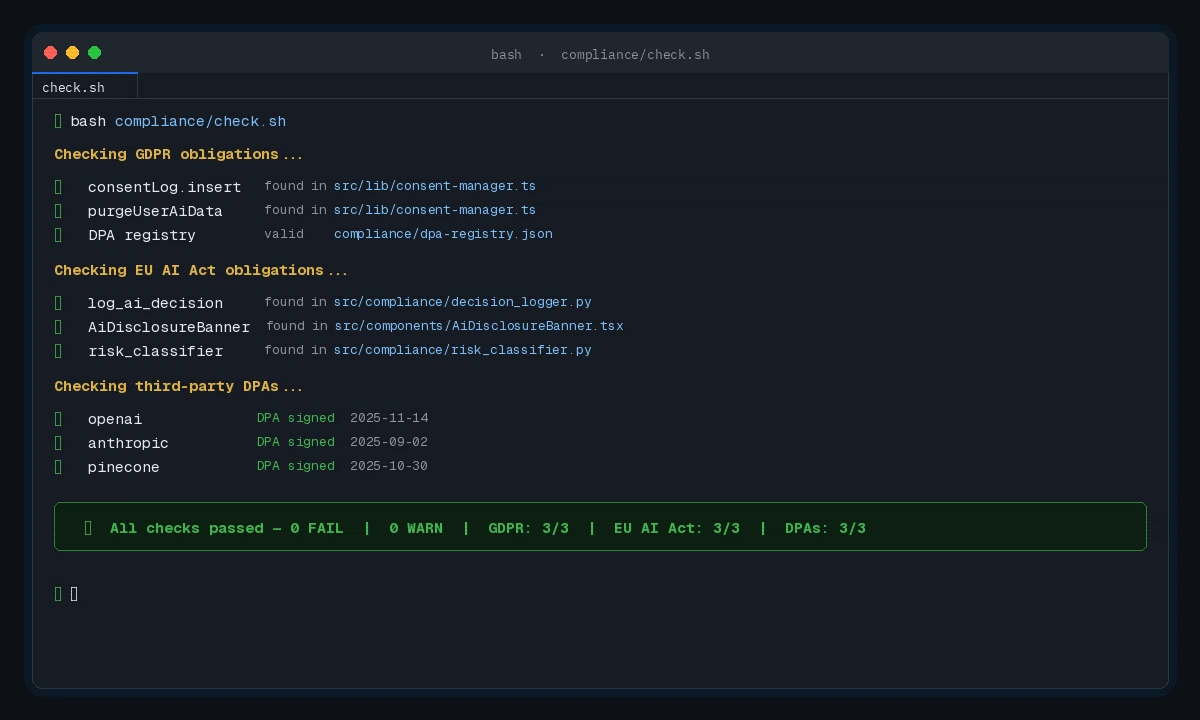

Verification

Run a compliance self-assessment against the key obligations:

# compliance/check.sh — run before each release

echo "Checking GDPR obligations..."

grep -r "consentLog.insert" src/ || echo "FAIL: No consent logging found"

grep -r "purgeUserAiData" src/ || echo "FAIL: No deletion path found"

echo "Checking EU AI Act obligations..."

grep -r "log_ai_decision" src/ || echo "WARN: No decision logging (required for HIGH_RISK)"

grep -r "AiDisclosureBanner" src/ || echo "FAIL: No AI disclosure in UI"

echo "Checking third-party DPAs..."

cat compliance/dpa-registry.json | python3 -m json.tool || echo "FAIL: DPA registry missing or malformed"

You should see: All checks pass with no FAIL lines. WARN lines are acceptable only for MINIMAL_RISK systems.

All checks green before a release — failures block deployment in CI

All checks green before a release — failures block deployment in CI

What You Learned

- The EU AI Act risk tier determines your obligations — classify early, before you build

- GDPR consent must be version-tracked, append-only, and immediately propagated to third-party data stores on withdrawal

- Decision logs need pseudonymized inputs, tamper-detection checksums, and human-review flags for low-confidence outputs

- Chatbots and generative AI features require disclosure even at LIMITED_RISK — this is the easiest check teams fail

Limitation: This covers the core technical obligations. You'll still need legal review for your specific use case, jurisdiction, and whether your DPAs cover your actual data flows.

When NOT to use this alone: If your system touches healthcare, employment, or credit decisions, engage a GDPR/AI Act specialist before launch. The fines are not theoretical — enforcement started in 2025.

Verified against GDPR (2016/679), EU AI Act (2024/1689), and guidance from the European Data Protection Board as of early 2026. Not legal advice.