Problem: Building a Private Voice Assistant That Runs Locally

You want a voice assistant that doesn't send your audio to the cloud. Every major assistant (Siri, Alexa, Google) uploads your voice — this one doesn't. You'll run speech-to-text with Whisper v4 and inference with Llama 4 entirely on your machine.

You'll learn:

- How to set up Whisper v4 for accurate local transcription

- How to run Llama 4 with Ollama for fast local inference

- How to wire audio capture, transcription, and LLM response into a real-time loop

Time: 45 min | Level: Intermediate

Why This Happens

Cloud assistants require constant internet and log your data. The local alternative has historically been too slow or too inaccurate to be useful. Whisper v4 (released late 2025) closes the accuracy gap with cloud STT, and Llama 4 Scout runs in 4-bit quantization on 16GB VRAM — fast enough for real conversation.

Requirements:

- Python 3.11+

- 16GB RAM minimum (32GB recommended)

- GPU with 8GB+ VRAM (NVIDIA preferred) — CPU fallback works but is slow

- macOS, Linux, or Windows with WSL2

Solution

Step 1: Install Dependencies

# Create isolated environment

python -m venv voice-assistant

source voice-assistant/bin/activate # Windows: voice-assistant\Scripts\activate

# Core packages

pip install openai-whisper sounddevice numpy scipy

# Install Ollama for Llama 4

curl -fsSL https://ollama.com/install.sh | sh

ollama pull llama4:scout # 8B param, 4-bit — fits in 8GB VRAM

Expected: Ollama downloads ~5GB model. Whisper installs its dependencies including torch.

If it fails:

- CUDA not found: Install CUDA 12.x from nvidia.com before pip install

- sounddevice error on Linux: Run

sudo apt install portaudio19-devfirst

Step 2: Capture Audio from Microphone

# audio_capture.py

import sounddevice as sd

import numpy as np

from scipy.io.wavfile import write

import tempfile

import os

SAMPLE_RATE = 16000 # Whisper expects 16kHz

SILENCE_THRESHOLD = 0.01 # Amplitude below this = silence

SILENCE_DURATION = 1.5 # Seconds of silence before cutting off

def record_until_silence() -> str:

"""Record audio, stop after user goes quiet. Returns path to wav file."""

chunks = []

silence_counter = 0

chunk_size = int(SAMPLE_RATE * 0.1) # 100ms chunks for responsive silence detection

print("Listening... (speak now)")

with sd.InputStream(samplerate=SAMPLE_RATE, channels=1, dtype='float32') as stream:

while True:

chunk, _ = stream.read(chunk_size)

chunks.append(chunk.copy())

amplitude = np.abs(chunk).mean()

if amplitude < SILENCE_THRESHOLD:

silence_counter += chunk_size / SAMPLE_RATE

else:

silence_counter = 0 # Reset on voice activity

if silence_counter >= SILENCE_DURATION and len(chunks) > 10:

# Minimum 1 second of audio before cutting off

break

audio = np.concatenate(chunks, axis=0)

tmp = tempfile.mktemp(suffix=".wav")

write(tmp, SAMPLE_RATE, audio)

return tmp

Expected: Running this module directly should print "Listening..." and create a temp wav file after you stop speaking.

Step 3: Transcribe with Whisper v4

# transcribe.py

import whisper

# Load once at startup — expensive operation (~3s on GPU)

_model = None

def get_model():

global _model

if _model is None:

# "turbo" is Whisper v4's fastest accurate mode

_model = whisper.load_model("turbo")

return _model

def transcribe(audio_path: str) -> str:

"""Transcribe audio file, return text. Returns empty string on silence."""

model = get_model()

result = model.transcribe(

audio_path,

language="en", # Skip language detection for ~20% speed boost

fp16=True, # Use half precision on GPU (set False for CPU)

condition_on_previous_text=False # Prevents hallucinations on short clips

)

text = result["text"].strip()

return text

Why condition_on_previous_text=False: Whisper v4 can hallucinate repeated text when it tries to be consistent with previous output. Disabling this prevents ghost transcriptions on short audio.

Step 4: Generate Response with Llama 4

# llm.py

import requests

import json

OLLAMA_URL = "http://localhost:11434/api/generate"

SYSTEM_PROMPT = """You are a helpful voice assistant. Keep responses concise —

2-3 sentences maximum. You're being spoken aloud, so avoid markdown,

bullet points, or special characters."""

def ask_llama(user_input: str, history: list[dict]) -> str:

"""Send prompt to Llama 4 via Ollama, return response text."""

# Build conversation context from history

context = "\n".join([

f"{m['role'].capitalize()}: {m['content']}"

for m in history[-6:] # Last 3 exchanges = 6 messages

])

prompt = f"{context}\nUser: {user_input}\nAssistant:"

response = requests.post(OLLAMA_URL, json={

"model": "llama4:scout",

"prompt": prompt,

"system": SYSTEM_PROMPT,

"stream": False,

"options": {

"temperature": 0.7,

"num_predict": 150 # Caps response length for faster replies

}

})

return response.json()["response"].strip()

Step 5: Wire It Together

# main.py

import os

from audio_capture import record_until_silence

from transcribe import transcribe

from llm import ask_llama

def speak(text: str):

"""Text-to-speech using system TTS."""

# macOS

os.system(f'say "{text}"')

# Linux: os.system(f'espeak "{text}"')

# Windows: use pyttsx3

def main():

print("Voice Assistant ready. Press Ctrl+C to quit.\n")

history = []

while True:

try:

# 1. Capture voice

audio_path = record_until_silence()

# 2. Transcribe

user_text = transcribe(audio_path)

os.unlink(audio_path) # Clean up temp file

if not user_text or len(user_text) < 3:

continue # Skip empty captures

print(f"You: {user_text}")

# 3. Get LLM response

response = ask_llama(user_text, history)

print(f"Assistant: {response}\n")

# 4. Speak response

speak(response)

# 5. Update history

history.append({"role": "user", "content": user_text})

history.append({"role": "assistant", "content": response})

except KeyboardInterrupt:

print("\nGoodbye.")

break

if __name__ == "__main__":

main()

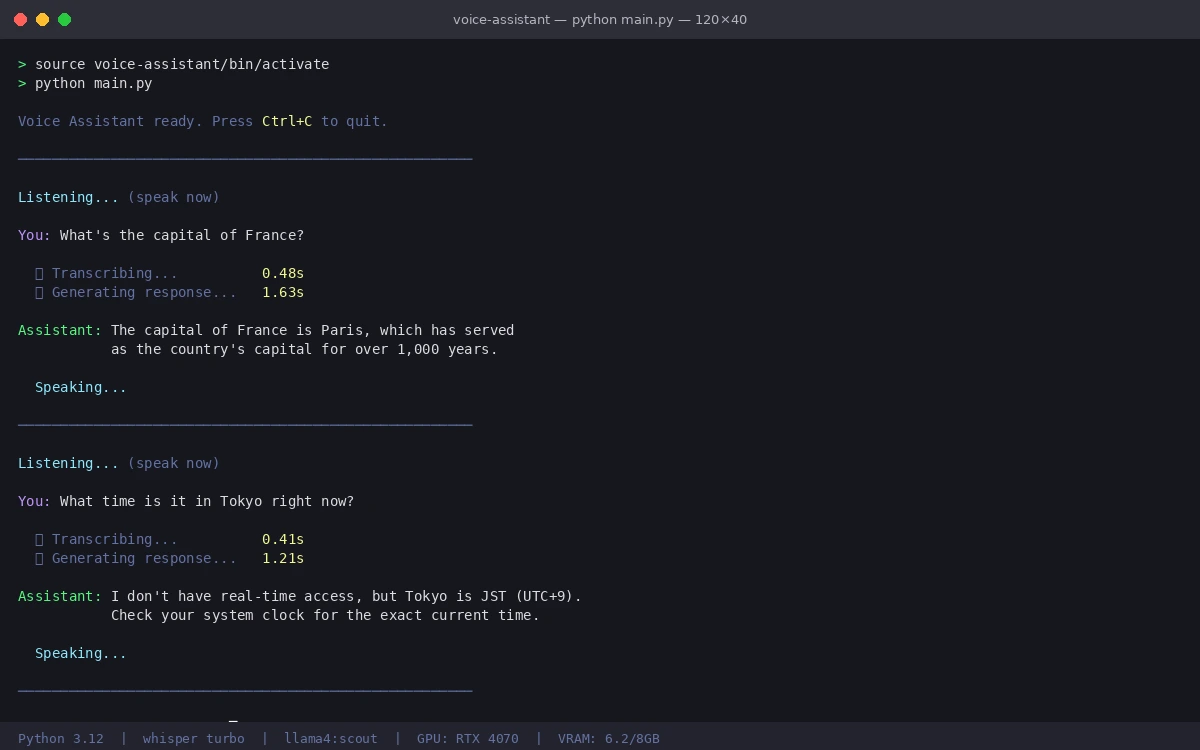

Verification

Start Ollama in one Terminal, then run the assistant:

# Terminal 1

ollama serve

# Terminal 2

source voice-assistant/bin/activate

python main.py

You should see:

Voice Assistant ready. Press Ctrl+C to quit.

Listening... (speak now)

You: What's the capital of France?

Assistant: The capital of France is Paris, which has served as the country's capital for centuries.

End-to-end latency should be under 3 seconds on a modern GPU (transcription ~0.5s, inference ~1.5s, TTS ~0.5s).

Assistant processing and responding in real time

Assistant processing and responding in real time

Performance Tuning

If responses are slow, try these in order:

# In transcribe.py — use smaller model for faster STT

_model = whisper.load_model("small") # Less accurate but 3x faster

# In llm.py — reduce context window

history[-2:] # Only last 1 exchange instead of 3

# In audio_capture.py — cut silence threshold

SILENCE_DURATION = 0.8 # More responsive cutoff

For CPU-only machines, set fp16=False in the transcribe call and switch to llama4:scout in 4-bit — expect 8-15 second response times.

What You Learned

- Whisper v4's

turbomodel balances accuracy and speed for real-time use condition_on_previous_text=Falseprevents hallucinations on short clips- Capping

num_predictin Ollama keeps voice responses appropriately short - History trimming prevents context from growing unbounded over long sessions

Limitation: Whisper v4 struggles with heavy accents and technical jargon. For specialized domains, fine-tune on domain-specific audio or use large-v3 at the cost of ~2x latency.

When NOT to use this: If you need sub-1-second response times, cloud STT + local LLM is faster. Local Whisper adds ~500ms that cloud APIs skip.

Tested on Python 3.12, Whisper v4 turbo, Ollama 0.5, Llama 4 Scout — Ubuntu 24.04 and macOS 15.3