Problem: SEO Maintenance Kills Your Writing Momentum

You publish 50+ posts, then Google Search Console surfaces dozens of articles with thin content, stale keywords, and broken meta descriptions. Fixing them manually takes days.

An AI SEO agent changes that. It reads your posts, audits them against SEO best practices, and rewrites the parts that need work — front matter, intros, headers — without touching the sections that don't.

You'll learn:

- How to build a Python agent that audits Hugo markdown posts

- How to call the Claude API to rewrite specific SEO fields

- How to apply patches safely without overwriting good content

Time: 45 min | Level: Intermediate

Why Manual SEO Updates Don't Scale

Static site blogs accumulate debt fast. A post from 2023 might have:

- A title written for readability, not search intent

- A description that's 200 characters (well past the roughly 160 characters Google typically shows before truncating)

- Keywords buried in paragraph three instead of sentence one

- No structured "you'll learn" hook that reduces bounce rate

The fixes are predictable and repeatable — exactly what an agent is good at.

Common symptoms:

- Search Console shows high impressions, low CTR (bad titles/descriptions)

- Pages rank for the wrong keywords

- Bounce rate spikes on posts with no early value statement

Solution

Step 1: Set Up the Project

mkdir seo-agent && cd seo-agent

pip install anthropic python-frontmatter rich

Create agent.py:

import os

import glob

import frontmatter # reads YAML front matter + body

import anthropic

client = anthropic.Anthropic(api_key=os.environ["ANTHROPIC_API_KEY"])

POSTS_DIR = "../your-hugo-site/content/posts"

Expected: No errors. python-frontmatter handles YAML + markdown body as one object.

If it fails:

- ModuleNotFoundError: Run pip install python-frontmatter, not pip install frontmatter (a different package)

Step 2: Build the SEO Auditor

This function scores a post and returns a structured list of issues.

def audit_post(post_path: str) -> dict:
    """
    Returns a dict with the post metadata and a list of SEO issues.
    """
    post = frontmatter.load(post_path)
    issues = []

    title = post.get("title", "")
    description = post.get("description", "")
    keywords = post.get("keywords") or []  # an empty `keywords:` field loads as None
    content = post.content[:2000]  # First 2000 chars is enough for Claude to assess

    # Rule-based checks (fast, no API call needed)
    if len(title) > 60:
        issues.append(f"Title is {len(title)} chars — trim to 50-60")
    if len(description) > 160:
        issues.append(f"Description is {len(description)} chars — trim to 140-160")
    if len(description) < 100:
        issues.append("Description too short — expand to 140-160 chars")
    # Flag the post when none of its keywords appear in the preview
    # (this also fires when the post defines no keywords at all)
    if not any(kw.lower() in content.lower() for kw in keywords):
        issues.append("Primary keyword not found in first 2000 chars of content")

    return {
        "path": post_path,
        "title": title,
        "description": description,
        "keywords": keywords,
        "content_preview": content,
        "issues": issues,
        "post": post,
    }

This separates cheap rule-based checks (no API cost) from the AI rewrite step. Only posts with issues get sent to Claude.
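Because the rule-based checks need neither the file system nor the API, they can be pulled into a pure function and tried on in-memory values. The following is a minimal sketch, not part of agent.py as written: the name rule_issues and its parameters are hypothetical, and the thresholds simply mirror the ones in audit_post.

```python
def rule_issues(title: str, description: str, keywords: list[str], body: str) -> list[str]:
    """Hypothetical helper: the same length and keyword rules, with no file or API access."""
    issues = []
    if len(title) > 60:
        issues.append(f"Title is {len(title)} chars, trim to 50-60")
    if len(description) > 160:
        issues.append(f"Description is {len(description)} chars, trim to 140-160")
    if len(description) < 100:
        issues.append("Description too short, expand to 140-160 chars")
    preview = body[:2000].lower()  # same 2000-char window as audit_post
    if not any(kw.lower() in preview for kw in keywords):
        issues.append("No keyword found in first 2000 chars of content")
    return issues


sample = rule_issues(
    title="Fix Docker Container Crashes",
    description="Quick tips.",
    keywords=["docker"],
    body="Docker containers crash for many reasons.",
)
print(sample)
```

Here only the description rule fires, so a post like this would be the kind that gets forwarded to Claude.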

Step 3: Call Claude to Fix the Issues

def fix_post_seo(audit: dict) -> dict:
    """
    Sends the audit to Claude, gets back corrected front matter fields.
    Returns only the fields that need updating.
    """
    import json  # local import keeps this function self-contained; it can also live at the top of agent.py

    if not audit["issues"]:
        return {}  # Nothing to fix

    # Build the bullet list outside the f-string (no backslashes needed inside it)
    issue_lines = "\n".join(f"- {i}" for i in audit["issues"])

    prompt = f"""You are an SEO specialist. Fix the following Hugo blog post metadata.

CURRENT TITLE: {audit["title"]}
CURRENT DESCRIPTION: {audit["description"]}
KEYWORDS: {", ".join(audit["keywords"] or [])}

ISSUES FOUND:
{issue_lines}

CONTENT PREVIEW:
{audit["content_preview"]}

Return ONLY a JSON object with the corrected fields. Example:
{{
  "title": "Fixed title here",
  "description": "Fixed description here"
}}

Rules:
- Title must be 50-60 characters
- Description must be 140-160 characters
- Include the primary keyword naturally in both
- Do not change fields that have no issues
- Return valid JSON only, no markdown, no explanation"""

    response = client.messages.create(
        model="claude-opus-4-6",
        max_tokens=500,
        messages=[{"role": "user", "content": prompt}],
    )

    # The reply is expected to be a bare JSON object
    text = response.content[0].text.strip()
    return json.loads(text)

Why claude-opus-4-6? SEO rewrites need nuance — keyword placement, natural language, character precision. Opus handles this better than Haiku for this task.

If it fails:

- JSONDecodeError: Claude occasionally wraps the JSON in markdown fences. Strip them before calling json.loads:

text = text.replace("```json", "").replace("```", "").strip()
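If the reply sometimes carries extra prose as well as fences, a slightly more tolerant parser may help. This is a sketch under that assumption: parse_json_reply is a hypothetical helper, not part of the anthropic SDK, and it simply takes the outermost {...} span of the reply and parses that.

```python
import json


def parse_json_reply(text: str) -> dict:
    """Hypothetical helper: extract and parse the outermost JSON object in a model reply."""
    start = text.find("{")
    end = text.rfind("}")
    if start == -1 or end < start:
        raise ValueError("no JSON object found in reply")
    # Everything outside the braces (fences, language tags, stray prose) is ignored.
    return json.loads(text[start : end + 1])


fence = "`" * 3  # built at runtime so this snippet contains no literal fence
reply = f'{fence}json\n{{"title": "Fixed title here"}}\n{fence}'
print(parse_json_reply(reply))
```

In fix_post_seo, the final json.loads(text) call could be swapped for parse_json_reply(text). Malformed JSON inside the braces still raises json.JSONDecodeError, so a failure stays visible rather than being silently skipped.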

Step 4: Apply Patches and Write Back

def apply_fixes(audit: dict, fixes: dict, dry_run: bool = True) -> None:
    """
    Writes corrected front matter back to the markdown file.
    dry_run=True prints changes without saving.
    """
    if not fixes:
        print(f"✓ No changes needed: {audit['path']}")
        return

    post = audit["post"]
    print(f"\n📝 {audit['path']}")

    # Show a before/after pair for every field Claude returned
    for key, new_val in fixes.items():
        old_val = post.get(key, "")
        print(f" {key}:")
        print(f" Before: {old_val}")
        print(f" After: {new_val}")
        if not dry_run:
            post[key] = new_val

    if not dry_run:
        # frontmatter.dump encodes to bytes, so the file is opened in binary mode
        with open(audit["path"], "wb") as f:
            frontmatter.dump(post, f)
        print(" ✅ Saved")
    else:
        print(" [DRY RUN — not saved]")

Always run with dry_run=True first. Review the diff, then re-run with dry_run=False.
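For a larger batch, the before/after printout can be easier to scan as a unified diff. The sketch below uses only the standard library's difflib. The helper name preview_diff and the "key: value" rendering are assumptions for illustration, not the exact YAML that python-frontmatter writes.

```python
import difflib


def preview_diff(old_fields: dict, new_fields: dict, path: str = "post.md") -> str:
    """Hypothetical helper: render proposed field changes as a unified diff string."""
    merged = {**old_fields, **new_fields}  # proposed values override the current ones
    before = [f"{key}: {value}" for key, value in old_fields.items()]
    after = [f"{key}: {value}" for key, value in merged.items()]
    diff = difflib.unified_diff(
        before, after, fromfile=path, tofile=f"{path} (proposed)", lineterm=""
    )
    return "\n".join(diff)


print(preview_diff({"title": "Old title", "draft": False}, {"title": "New title"}))
```

An empty string means the fixes would change nothing, which is a cheap signal for skipping the write altogether.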

Step 5: Wire It All Together

def run_agent(posts_dir: str, dry_run: bool = True, limit: int = 10):
    """
    Audits and fixes up to `limit` posts in posts_dir.
    """
    # Sort so the same `limit` posts are picked on every run (glob order is not guaranteed)
    paths = sorted(glob.glob(f"{posts_dir}/**/*.md", recursive=True))[:limit]
    print(f"Found {len(paths)} posts to process\n")

    for path in paths:
        audit = audit_post(path)
        if audit["issues"]:
            print(f"⚠️ Issues in: {path}")
            for issue in audit["issues"]:
                print(f" - {issue}")
            # Only posts with issues reach the API
            fixes = fix_post_seo(audit)
            apply_fixes(audit, fixes, dry_run=dry_run)
        else:
            print(f"✓ Clean: {path}")


if __name__ == "__main__":
    run_agent(POSTS_DIR, dry_run=True, limit=5)

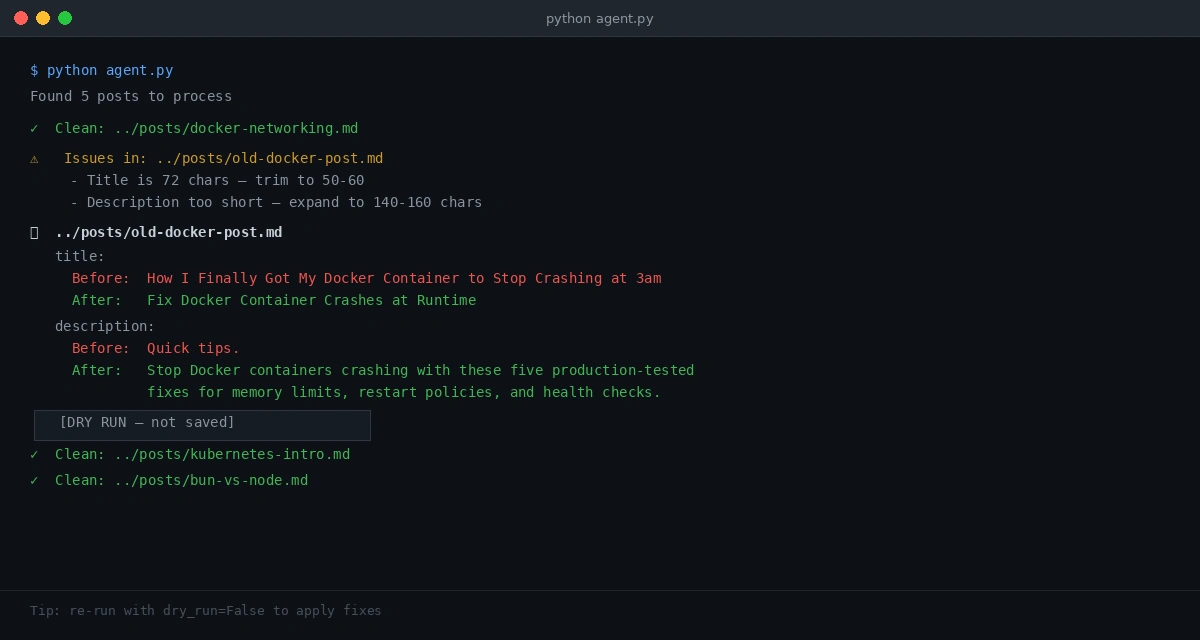

Verification

# Dry run first — safe to run anytime

python agent.py

# When output looks right, apply fixes

python agent.py --apply # add argparse or just flip dry_run=False

You should see:

Found 5 posts to process

⚠️ Issues in: ../posts/old-post.md

- Title is 61 chars — trim to 50-60

- Description too short — expand to 140-160 chars

📝 ../posts/old-post.md

title:

Before: How I Finally Got My Docker Container to Stop Crashing at 3am

After: Fix Docker Container Crashes at Runtime

description:

Before: Quick tips.

After: Stop Docker containers from crashing with these five production-tested fixes for memory limits, restart policies, and health checks.

[DRY RUN — not saved]

Dry run shows before/after for each field — review before applying
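The --apply flag in the commands above is not parsed by agent.py as written. One way to add it is a small argparse wrapper. This is a sketch: the --limit option and the parse_args name are assumptions, not something the original script defines.

```python
import argparse


def parse_args(argv=None) -> argparse.Namespace:
    """Hypothetical CLI for agent.py: dry run by default, --apply to write files."""
    parser = argparse.ArgumentParser(description="Audit and fix SEO front matter")
    parser.add_argument(
        "--apply", action="store_true", help="write fixes to disk (default: dry run)"
    )
    parser.add_argument(
        "--limit", type=int, default=5, help="maximum number of posts to process"
    )
    return parser.parse_args(argv)


args = parse_args([])  # in agent.py, call parse_args() so it reads sys.argv
dry_run = not args.apply
print(f"dry_run={dry_run}, limit={args.limit}")
```

In the `if __name__ == "__main__":` block, the call would then become run_agent(POSTS_DIR, dry_run=not args.apply, limit=args.limit), which keeps the safe default when no flag is given.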


Extending the Agent

Once the base agent works, a few high-value additions:

Content intro rewriter — add a second Claude call that rewrites the first paragraph to front-load the primary keyword and problem statement.

Batch processing with rate limiting — wrap the Claude call in a retry loop with time.sleep(1) between posts to stay within API rate limits on large blogs.
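A retry loop of that kind might look like the sketch below. call_with_retries is a hypothetical helper. It retries on any exception for simplicity, whereas a production version would likely catch only the SDK's rate-limit and transient network errors. The sleep parameter exists only so the pause can be stubbed out in tests.

```python
import time


def call_with_retries(fn, attempts: int = 3, delay: float = 1.0, sleep=time.sleep):
    """Hypothetical helper: call fn(), pausing longer after each failed attempt."""
    for attempt in range(1, attempts + 1):
        try:
            return fn()
        except Exception:
            if attempt == attempts:
                raise  # out of retries: surface the last error to the caller
            sleep(delay * attempt)  # linear backoff: delay, then 2 * delay, ...


# Demonstration with a stand-in function that fails once and then succeeds.
outcomes = iter([RuntimeError("busy"), "ok"])


def flaky_call():
    result = next(outcomes)
    if isinstance(result, Exception):
        raise result
    return result


print(call_with_retries(flaky_call, delay=0.01))
```

Inside run_agent, the Claude call could be wrapped as call_with_retries(lambda: fix_post_seo(audit)), with a fixed time.sleep(1) between posts if the blog is large.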

GitHub Actions integration — run the agent on a schedule against your repo. Commit fixes as PRs so you review before merge.

# .github/workflows/seo-audit.yml
- name: Run SEO Agent
  run: python agent.py --output report.json
- name: Open PR with fixes
  uses: peter-evans/create-pull-request@v6

What You Learned

- Rule-based pre-filtering keeps API costs low — only broken posts hit Claude

- Separating audit, fix, and apply steps makes the agent safe to run on production content

- dry_run=True by default prevents accidental overwrites

Limitations to know:

- The agent rewrites metadata, not body content — for full article rewrites, you need a much larger context window and a human review step

- Claude's character counts aren't perfect — validate title/description length after applying fixes

- Don't run on draft: true posts; their SEO fields are intentionally incomplete
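The length caveat above can be checked mechanically before anything is written. A minimal sketch follows, assuming the same 50-60 and 140-160 character targets used in the prompt. validate_fixes is a hypothetical helper, not part of the agent as published.

```python
TITLE_RANGE = (50, 60)          # target range from the prompt rules
DESCRIPTION_RANGE = (140, 160)  # target range from the prompt rules


def validate_fixes(fixes: dict) -> list[str]:
    """Hypothetical helper: list returned fields whose length misses the target range."""
    limits = {"title": TITLE_RANGE, "description": DESCRIPTION_RANGE}
    problems = []
    for field, (low, high) in limits.items():
        if field not in fixes:
            continue  # Claude only returns the fields it changed
        length = len(str(fixes[field]))
        if not low <= length <= high:
            problems.append(f"{field} is {length} chars, expected {low}-{high}")
    return problems


print(validate_fixes({"title": "Fix Docker Container Crashes at Runtime"}))
```

A non-empty result could be used to skip the write for that post, or to send the field back to Claude with the measured length included in the prompt.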

Tested on Python 3.12, anthropic SDK 0.25+, python-frontmatter 1.1.0, Hugo 0.124+