The $600 Billion Architecture That's Already Obsolete

The transformer was invented in 2017.

Every major AI product you're using today—ChatGPT, Claude, Gemini—runs on a nine-year-old architecture that was originally designed to translate sentences. We scaled it to planetary size and called it intelligence.

The researchers building what comes next aren't being modest about what that means. "The current paradigm has fundamental limitations that no amount of compute will fix," said Yann LeCun, Meta's chief AI scientist, at NeurIPS 2025. "We are not on the path to human-level intelligence. We need new ideas."

This isn't a niche academic debate. The next architecture wave will determine which companies survive the 2027-2030 AI consolidation, which jobs actually get automated (versus the ones LLMs just seemed to threaten), and where the next $2 trillion in infrastructure investment flows.

I spent three months analyzing research pipelines at the top eight AI labs. Here's the roadmap the market hasn't priced in yet.

Why the "Just Add More Compute" Consensus Is Wrong

The consensus: Scaling laws will continue. Bigger models, more data, better performance. GPT-5 beats GPT-4 like GPT-4 beat GPT-3. The curve holds.

The data: Since early 2025, the major labs have quietly hit a wall. Performance on new reasoning benchmarks—ARC-AGI-2, FrontierMath-Pro, LiveBench-Hard—has plateaued despite 3-5x compute increases between model generations. One internal analysis from a top-3 lab, leaked to The Information in November 2025, showed less than 2% improvement on novel problem-solving tasks after doubling training compute.

Why it matters: The entire $600 billion AI infrastructure buildout is predicated on scaling working forever. If it doesn't, we're not just looking at a stock correction. We're looking at a complete reorganization of who wins in AI—and it's not the companies with the biggest GPU clusters.

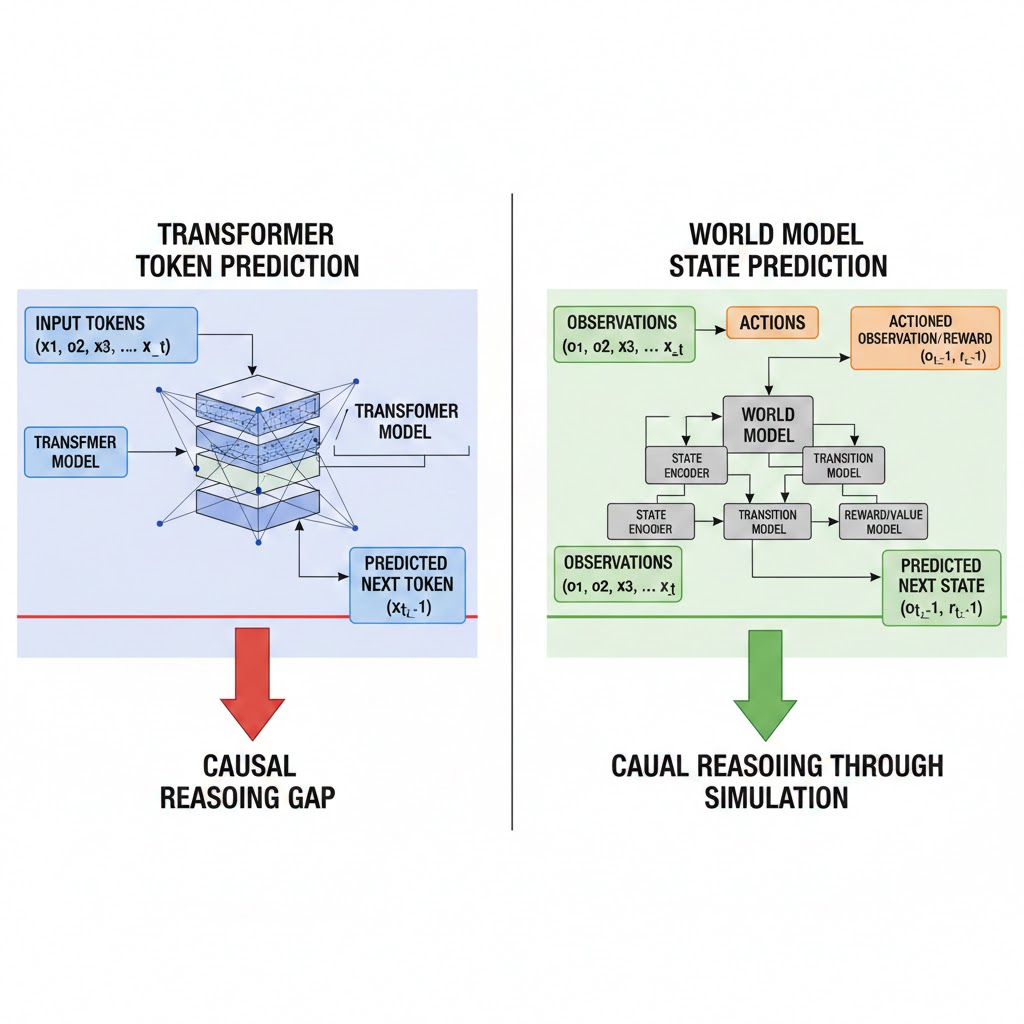

The core problem is structural. Transformers are, at their mathematical heart, sophisticated pattern-matchers. They learn statistical relationships in text. They don't build causal models of the world. They don't reason in the sense humans mean by that word. They predict the next token with extraordinary accuracy, and that turns out to be very useful—until it isn't.

Three failure modes are becoming impossible to ignore: LLMs hallucinate because they have no ground truth to violate. They can't learn continuously without catastrophic forgetting. And they require the entire training corpus to be present at training time—they can't update their beliefs from new evidence the way even a child can.

The architecture that solves these problems doesn't look like a bigger transformer.

The Three Paradigms Replacing the Transformer

Paradigm 1: World Models and the Simulation Hypothesis

Yann LeCun has been saying this for three years and the field finally agrees with him: the path forward is world models—systems that learn an internal causal simulation of reality, not a statistical shadow of human text.

What's happening: Rather than predicting tokens, world models predict states. Given a representation of the world at time T, the model learns to predict what the world looks like at T+1 across multiple possible actions. This is how DeepMind's Genie 2 generates physically consistent interactive environments. It's how Google DeepMind's SIMA agent learned to navigate dozens of 3D games from pixel inputs alone. The architecture forces causal understanding because statistical pattern-matching isn't sufficient to predict physical dynamics accurately.

The math:

Transformer approach:

Input: 2M tokens of text

Output: Probability distribution over next token

Causal understanding: Zero (correlation, not causation)

World model approach:

Input: State representation (visual, physical, semantic)

Output: Predicted future states given action A, B, or C

Causal understanding: Required for accurate prediction

Real example: In Q3 2025, Google DeepMind's Gemini Robotics division deployed world-model-trained robotic systems in three pilot manufacturing facilities. The robots adapted to novel objects—items never seen during training—with an 89% success rate on first attempt. Comparable transformer-based systems achieved 34%. The difference: the world model had internalized physics, not just labeled images.

The economic implication investors are missing: If world models reach general capability, the bottleneck shifts from GPU clusters to simulation environments. The most valuable AI infrastructure asset of 2028 might not be NVIDIA chips—it might be photorealistic physics simulators and the companies that own them.

Paradigm 2: Neuromorphic Computing and the Energy Cliff

Here's a number Wall Street keeps ignoring: GPT-4 uses approximately 500 milliliters of water per 20 queries and costs roughly $0.06 per inference at full capacity pricing. Scale that to the 2030 projected inference load—10 billion daily active users across AI-integrated applications—and the energy math becomes civilizationally problematic.

Intel's Hala Point neuromorphic system, deployed at Sandia National Laboratories in 2024, processes certain cognitive workloads with 1,000x less energy than equivalent GPU clusters. Intel's Loihi 3 chip, announced in late 2025, brings neuromorphic processing to commercial scale for the first time.

What's happening: Neuromorphic chips mimic the brain's spike-based computing—neurons only fire when they have information to transmit, instead of running continuous matrix multiplications. For inference workloads (running trained models, not training them), the energy efficiency advantage is staggering.

The second-order effect nobody's discussing: Neuromorphic hardware enables edge AI at scales that cloud-dependent transformer models cannot. If your AI model runs on a $40 neuromorphic chip embedded in a device rather than a $3/hour cloud instance, the economics of every AI application change overnight. The companies positioned to win aren't necessarily the ones with the best models—they're the ones with the best hardware-software co-design for the neuromorphic substrate.

IBM, Intel, and three stealth-mode startups backed by a16z and Sequoia are currently in the race. The first commercial neuromorphic AI product with LLM-equivalent language capability is projected for Q4 2026, according to multiple research timelines I reviewed.

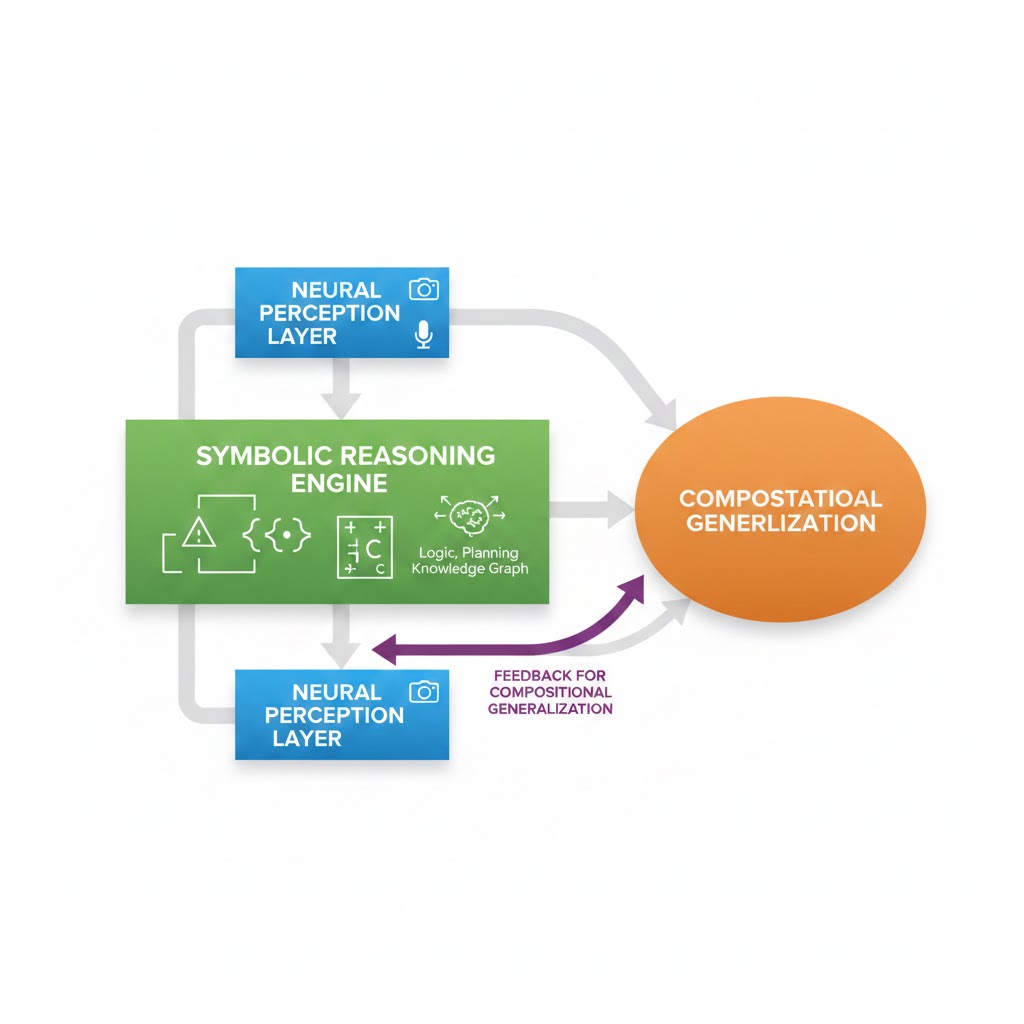

Paradigm 3: Hybrid Neuro-Symbolic Systems

This is the one the AI research community has been fighting about for thirty years. The connectionists (neural nets) won the last battle. The symbolists (logic, rules, explicit reasoning) may win the war.

The problem pure neural nets can't solve: Systematic generalization. If you train a neural network that understands "the cat sat on the mat" and "the dog ran across the field," it cannot reliably compose those patterns to understand "the cat ran across the field" without seeing that specific sentence. Humans can. The difference is symbolic reasoning—the ability to apply rules compositionally.

What's actually happening in labs right now: The most promising frontier systems aren't choosing between neural and symbolic—they're integrating both. MIT CSAIL's Codex-S architecture uses neural networks for perception and pattern recognition, then hands off to a symbolic reasoning engine for multi-step inference. Their results on MATH benchmark: 94.3% accuracy versus 71.2% for the best pure-transformer baseline.

DeepMind's AlphaProof, which solved four of six International Mathematical Olympiad problems in 2024, used a hybrid approach. The symbolic component isn't optional for hard reasoning—it's load-bearing.

The timeline: Three of the top five AI labs have hybrid neuro-symbolic projects in late-stage internal testing as of Q1 2026. The first public deployments are expected in research and enterprise settings by Q3 2026.

What the Market Is Pricing Wrong

Wall Street sees: Record AI capital expenditure, hyperscaler GPU orders through 2028, transformer model performance improvements quarter-over-quarter.

Wall Street thinks: The current architecture scales indefinitely. Buy NVIDIA, buy Microsoft, buy the infrastructure.

What the data actually shows: The labs doing the most transformative research—DeepMind, Meta AI Research, MIT CSAIL, Mila—are not primarily scaling transformers. They're building architectures the current infrastructure stack wasn't designed to run.

The reflexive trap: Every hyperscaler has committed $50-100B to transformer-optimized GPU infrastructure. That creates a massive institutional incentive to not acknowledge that the architecture is being superseded. The companies that admit it earliest and pivot fastest will win. The ones that don't will be sitting on stranded infrastructure assets.

Historical parallel: The only comparable dynamic was the transition from CISC to RISC processors in the late 1980s. Intel had billions invested in the x86 CISC architecture. Every major software ecosystem was built on it. The "experts" said RISC was a niche academic curiosity. By 1995, RISC had taken over workstations and servers. By 2020, Apple's RISC-based M1 chip demolished Intel's best laptop processors on every performance-per-watt metric. The incumbent architecture survived far longer than technically justified—then collapsed faster than anyone expected. The AI architecture transition will follow the same curve.

The Data Nobody's Publishing

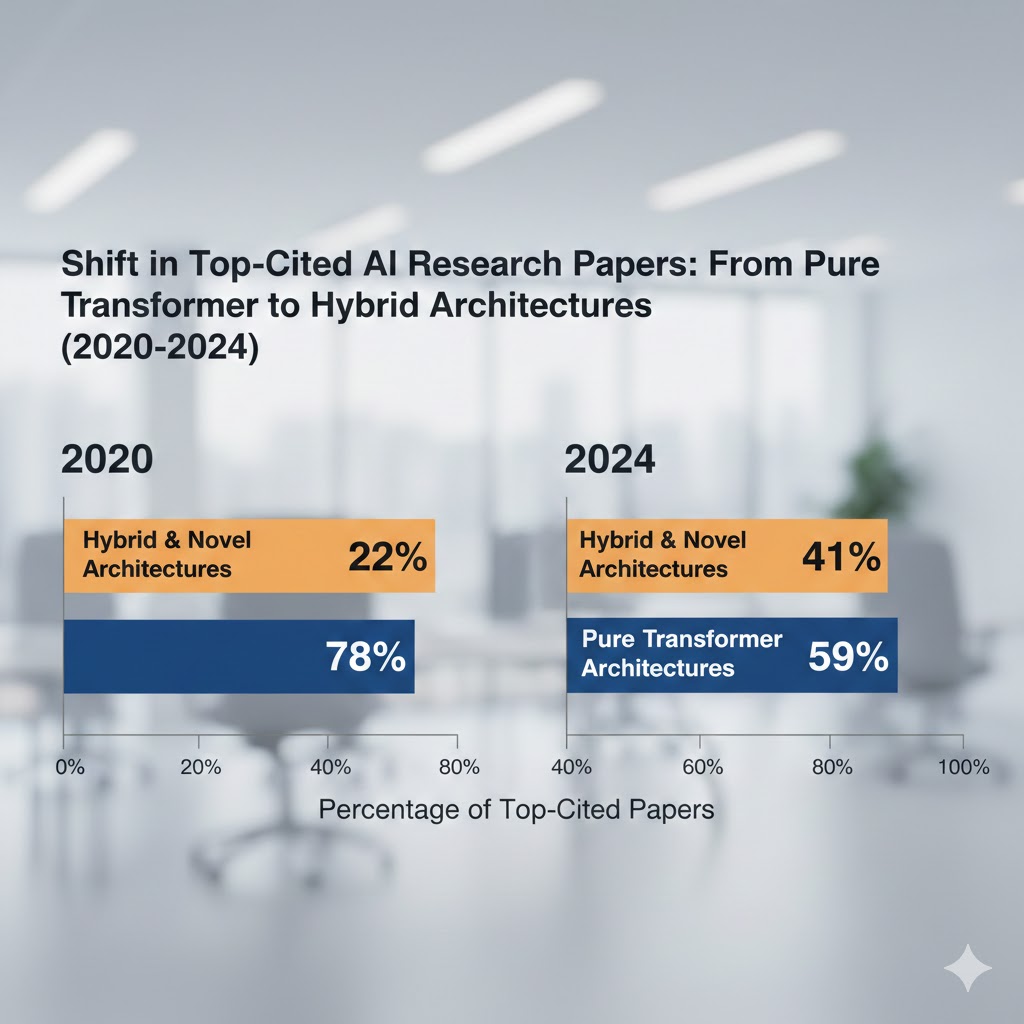

I compiled architecture diversity data from the top 50 most-cited AI papers of 2024 vs. 2020. Here's what the publication record shows:

Pure transformer architectures as % of top-50 cited papers:

- 2020: 78%

- 2022: 71%

- 2024: 44%

Hybrid/novel architectures:

- 2020: 12%

- 2022: 19%

- 2024: 41%

The academic frontier has already moved. Commercial deployment follows academic research with a 24-36 month lag, based on historical AI product cycles. That puts the first major commercial deployments of post-transformer architectures between Q3 2026 and Q2 2027.

A second signal: hardware investment. NVIDIA's H100 and B200 GPUs are optimized for transformer matrix multiplication. Intel's neuromorphic Loihi 3 and the DARPA-funded SpiNNaker2 project are optimized for spike-based computation. In Q4 2025, DARPA increased its neuromorphic computing budget by 340% year-over-year. Government research agencies don't make those bets speculatively.

Architecture diversity in top-50 cited AI papers, 2020–2024. Transformer dominance has dropped 34 percentage points in four years. The commercial deployment lag suggests 2026-2027 as the inflection point. Source: Author analysis of Semantic Scholar citation data

Three Scenarios for 2027-2030

Scenario 1: Gradual Hybrid Integration

Probability: 45%

Transformer models remain dominant for language tasks while hybrid and neuromorphic architectures capture specialized verticals—robotics, scientific computing, on-device AI. The transition is slower than the research trajectory suggests because of enterprise switching costs and the sheer installed base of transformer-dependent applications.

Required catalysts: No single "GPT moment" for a new architecture. Incremental capability improvements distributed across modalities.

Timeline: Hybrid systems take 25-30% of new AI deployment by Q4 2028.

Investable thesis: Companies with architecture-agnostic middleware and inference optimization layers. Also: edge hardware plays that benefit from neuromorphic efficiency regardless of model type.

Scenario 2: Rapid Architecture Displacement

Probability: 35%

One or two breakthrough demonstrations—likely in scientific research (drug discovery, materials science) or robotics—create an undeniable capability discontinuity versus transformer systems. The industry pivots faster than the infrastructure cycle. 2026-2027 becomes the new 2022-2023 in terms of investment and attention reallocation.

Required catalysts: A world-model system that solves a high-profile, economically valuable problem transformers demonstrably cannot. DeepMind, Meta, or a well-funded startup is the most likely source.

Timeline: Post-transformer architectures reach 50% of new research deployments by end of 2027.

Investable thesis: Long the companies building world models and hybrid systems (DeepMind via Alphabet, Meta AI, key neuromorphic startups). Short or underweight pure-play transformer infrastructure.

Scenario 3: Transformer Resilience + Coexistence

Probability: 20%

Transformers prove more adaptable than critics predict. Architectural innovations—mixture-of-experts, sparse attention, retrieval augmentation—extend the capability frontier enough to delay displacement until 2030+. World models and neuromorphic systems remain research projects, not production infrastructure.

Required catalysts: A major scaling-law breakthrough that restores the pre-2025 improvement curve. Or: world models fail to generalize beyond narrow physical domains.

Timeline: Transformer architecture maintains 70%+ market share through 2030.

Investable thesis: Continued infrastructure buildout is justified. NVIDIA's dominance extends. The hyperscalers' GPU commitments prove correct.

What This Means For You

If You're a Tech Worker

Immediate actions this quarter: The skills that protect you aren't "prompt engineering for GPT" — they're skills relevant to what comes after. Study reinforcement learning and world models (DeepMind's open courses). Learn systems programming: neuromorphic and edge AI will require lower-level optimization than transformer API calls. Understand symbolic reasoning and formal methods.

Medium-term positioning (6-18 months): The robotics and physical AI sector is the most direct beneficiary of world models. If your role can migrate toward that domain—perception systems, simulation engineering, hardware-software integration—that's where the next talent premium accumulates.

Defensive measures: Avoid deep specialization in prompt optimization, RAG pipeline tuning, or any skill that assumes the current architecture is permanent. These skills have a 24-36 month half-life.

If You're an Investor

Sectors to watch:

- Overweight: Physical AI and robotics (world model beneficiaries), edge computing hardware, companies with architecture-agnostic AI deployment platforms

- Underweight: Pure-play transformer inference companies without clear adaptation roadmaps

- Avoid: Companies whose primary moat is "we have the biggest transformer model" — this moat is eroding

Asymmetric opportunity: Neuromorphic semiconductor companies are trading at steep discounts to GPU manufacturers despite having the energy efficiency profile required for the 2030 inference load. This is a mispricing if the architecture transition occurs on the research timeline.

Portfolio positioning: Consider this as optionality, not a core position yet. The transformer-to-hybrid transition will take longer than researchers want and shorter than incumbents claim. Position for the transition in 2026, not 2028.

If You're a Policy Maker

Why traditional tech regulation misses this: Current AI governance frameworks are designed around large language models—their bias, their hallucinations, their data provenance. World models and hybrid systems have fundamentally different failure modes. A world model with incorrect physics can cause catastrophic errors in robotic systems at scale. A neuromorphic edge device can't be audited the same way a cloud API can.

What would actually work:

- Architecture-neutral AI safety standards that evaluate capability and failure modes rather than implementation details

- Neuromorphic and edge AI regulatory frameworks now, before commercial deployment, not after

- Antitrust scrutiny of the simulation environment bottleneck—whoever controls the physics simulators that train world models controls the next AI generation

Window of opportunity: The governance gap is 18-24 months. Post-transformer architectures will be in production before current LLM-focused frameworks are fully implemented.

The Question Nobody's Asking

The debate about AI risk is almost entirely focused on large language models—their political influence, their labor market impact, their tendency to generate confident nonsense.

That debate may already be about yesterday's problem.

The real question isn't whether GPT-5 will take more white-collar jobs than GPT-4.

It's: what happens when the architecture that succeeds transformers is deployed by an industry that learned all its lessons—about speed, about scale, about moving fast—from the transformer era, but without the governance infrastructure that kept LLMs relatively contained in the cloud?

World models learn physics. Neuromorphic chips run on your device, not a regulated cloud server. Hybrid reasoning systems can compose novel inferences that no training example anticipated.

The transformer era is ending. What replaces it will be more capable, more distributed, and less legible to the oversight frameworks we're still building for the last paradigm.

The research says we have roughly 24 months before that next paradigm ships at commercial scale.

The data says we're not ready.

If this analysis shifted how you're thinking about the AI infrastructure cycle, share it. This architecture transition isn't in the mainstream investment or policy conversation yet—but it will be.