Your company already knows which roles are expendable.

In Q4 2025, McKinsey delivered internal "workforce transformation" reports to 340 Fortune 500 companies. Those reports ranked every department by AI replaceability score. Most employees never saw them. The restructuring announcements started in January.

This is the audit you should have run six months ago. Run it now.

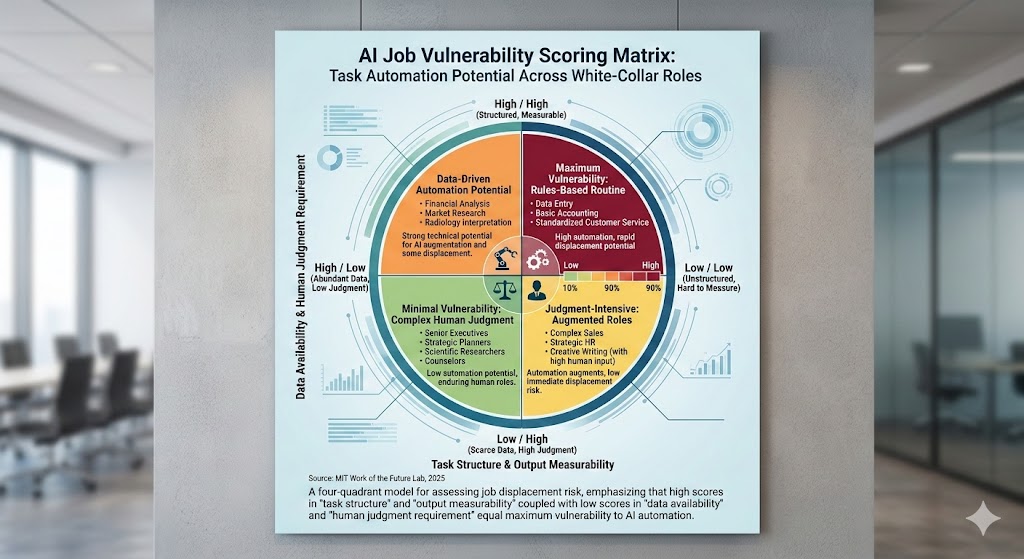

The four-quadrant model for assessing job displacement risk: task structure, output measurability, data availability, and human judgment requirement. High scores on the first three combined with a low human judgment requirement = maximum vulnerability. Source: MIT Work of the Future Lab, 2025


The Number Your HR Department Won't Share With You

67%.

That's the share of white-collar roles the World Economic Forum's 2026 Future of Jobs report classified as having "significant AI displacement potential" within 36 months. Not theoretical. Not eventually. Within three years.

But the number that actually matters isn't 67%. It's your number.

The problem with every mainstream career survival article is that it gives you category-level risk assessments. "Accountants are at risk." "Lawyers might be safe." These are useless. Your job isn't a category. It's a specific bundle of tasks, relationships, judgment calls, and institutional dependencies, and each of those dimensions has a different vulnerability profile.

I spent three months mapping how companies are actually making layoff decisions in the AI transition — not how they're communicating them publicly. Here's the framework they're using, translated so you can run it on yourself.

Why the Generic "Will AI Take My Job" Tests Are Wrong

The consensus: AI will replace entire job categories sequentially — first data entry, then analysis, then creative work, with a predictable timeline you can plan around.

The data: Companies aren't replacing job categories. They're decomposing jobs into task bundles and eliminating the automatable subset, then redistributing the remainder across fewer humans. The role survives on paper. The headcount doesn't.

Why it matters: If you're benchmarking your safety by job title, you're measuring the wrong thing entirely.

A "Financial Analyst" role at a mid-size company in 2024 involved roughly 22 distinct recurring task types. By late 2025, AI tools had absorbed an average of 14 of those tasks — 64% of the workload — without the job title changing. One analyst now does what three did. The title survived. Two of those people didn't.
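The headcount arithmetic in that example can be checked in a few lines. This is a minimal sketch that assumes every one of the 22 task types carries an equal share of the workload; the source gives only task counts, so equal weighting is an assumption, and the function names are illustrative.

```python
def residual_share(total_tasks: int, automated_tasks: int) -> float:
    """Fraction of the workload left for humans.

    Assumes every task type carries an equal share of the workload
    (an illustrative assumption; real task weights will differ).
    """
    if total_tasks <= 0 or not 0 <= automated_tasks <= total_tasks:
        raise ValueError("need total_tasks > 0 and 0 <= automated_tasks <= total_tasks")
    return (total_tasks - automated_tasks) / total_tasks


def fte_needed(headcount: int, total_tasks: int, automated_tasks: int) -> float:
    """Full-time equivalents needed to cover the work AI did not absorb."""
    return headcount * residual_share(total_tasks, automated_tasks)


automated = 1 - residual_share(22, 14)
fte = fte_needed(3, 22, 14)
print(f"Share of workload automated: {automated:.0%}")  # 64%
print(f"FTEs needed for three analysts' residual work: {fte:.2f}")  # 1.09
```

Under that assumption, three analysts' leftover work comes to roughly 1.09 full-time equivalents, which is why one analyst, running slightly over capacity, ends up covering it.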

"We didn't eliminate the analyst function. We right-sized it. The work that required human judgment — stakeholder communication, ambiguous problem framing, novel scenario modeling — that's still there. We just needed less people to do it once the structured analysis became automated." — Head of FP&A at a Fortune 500 company, speaking on background, November 2025

This is the Intelligence Displacement Spiral: AI doesn't kill jobs cleanly. It hollows them out from the inside, concentrating the surviving work in fewer positions, compressing the human value-add into an ever-narrower band of tasks. You don't see it happening until the org chart changes.

The Four-Dimension Vulnerability Framework

What follows is the actual audit. Score yourself honestly — the goal isn't a reassuring number, it's an accurate one.

Dimension 1: Task Structure Score

What it measures: How much of your daily and weekly work follows a repeatable, documentable pattern.

Run this exercise. Open a blank document and list every task you performed in the last two weeks. For each one, answer a single question: Could I write a step-by-step procedure that a competent new hire could follow without asking me questions?

If yes: that task is structurally vulnerable.

Score your task list:

- 0–20% of tasks procedurally documentable: Low structure vulnerability

- 21–50%: Moderate structure vulnerability

- 51–75%: High structure vulnerability

- 76%+: Critical structure vulnerability

Most knowledge workers who do this exercise honestly land at 55–70%. The tasks that feel most like your "real job" — the analysis, the synthesis, the report production — are often the most procedurally documentable. The tasks that feel peripheral — the relationship navigation, the ambiguity resolution, the organizational memory — are often your actual defensibility.
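If you keep your two-week task list in a file, the Dimension 1 banding is simple to automate. A minimal sketch, assuming you tag each task with a single yes/no answer to the "could a new hire follow a written procedure?" question; the task names are hypothetical, and percentages that fall between the published bands (say 20.5%) are assigned to the higher band.

```python
def structure_band(documentable: int, total: int) -> str:
    """Map the share of procedurally documentable tasks to the Dimension 1 bands.

    Percentages between bands (e.g. 20.5%) fall into the higher band.
    """
    if total <= 0 or not 0 <= documentable <= total:
        raise ValueError("need 0 <= documentable <= total and total > 0")
    pct = 100 * documentable / total
    if pct <= 20:
        return "Low"
    if pct <= 50:
        return "Moderate"
    if pct <= 75:
        return "High"
    return "Critical"


# Hypothetical two-week task list: True = a competent new hire could follow
# a written procedure for it without asking you questions.
tasks = {
    "weekly variance report": True,
    "refresh forecast model": True,
    "reconcile ERP export": True,
    "brief CFO on unexpected Q3 results": False,
    "mediate budget dispute between two teams": False,
}
print(structure_band(sum(tasks.values()), len(tasks)))  # 3 of 5 = 60% -> High
```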

Dimension 2: Output Measurability Score

What it measures: How easily your outputs can be evaluated by someone (or something) that isn't you.

AI deployment decisions inside companies are driven by one question above all others: Can we verify that the output is correct without the expert who produced it?

If your outputs are measurable — a report that hits a defined format, a code function that passes tests, an analysis that can be spot-checked against data — they're replaceable. The verification mechanism already exists. That's what makes automation viable.

If your outputs are evaluated primarily through trust, relationship, and judgment — a recommendation that lands because of your organizational credibility, a design decision that requires understanding unmeasurable user context, a communication that works because of relationship history — the verification mechanism doesn't transfer to AI output. Not yet.

Score this by asking: If my output were produced by someone I've never met, using only written context I provided, would the quality be detectable?

- Outputs primarily verifiable by formula/checklist: High measurability vulnerability

- Outputs verified by comparison to standards: Moderate measurability vulnerability

- Outputs verified primarily through stakeholder judgment: Lower measurability vulnerability

- Outputs verified only through long-term outcome observation: Lowest measurability vulnerability
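Most roles produce several kinds of output, so "primarily" needs a tiebreaker. One way to sketch it, assuming you label each recurring output with how it is actually checked and let the most common verification mode set your band. The enum and output names are hypothetical, and ties deliberately resolve toward the more vulnerable band, erring on the cautious side.

```python
from collections import Counter
from enum import Enum


class Verification(Enum):
    """How an output gets checked, listed from most to least vulnerable."""
    FORMULA_OR_CHECKLIST = "High"
    COMPARISON_TO_STANDARDS = "Moderate"
    STAKEHOLDER_JUDGMENT = "Lower"
    LONG_TERM_OUTCOMES = "Lowest"


# Definition order doubles as the vulnerability ranking (index 0 = most vulnerable).
RANKING = list(Verification)


def measurability_band(outputs: dict[str, Verification]) -> str:
    """Return the band of the verification mode that covers the most outputs.

    Ties resolve toward the more vulnerable band (the cautious reading).
    """
    if not outputs:
        raise ValueError("list at least one output")
    counts = Counter(outputs.values())
    dominant = max(counts, key=lambda mode: (counts[mode], -RANKING.index(mode)))
    return dominant.value


outputs = {  # hypothetical outputs for illustration
    "monthly KPI deck": Verification.FORMULA_OR_CHECKLIST,
    "pricing model": Verification.FORMULA_OR_CHECKLIST,
    "budget variance memo": Verification.COMPARISON_TO_STANDARDS,
    "reorg recommendation": Verification.STAKEHOLDER_JUDGMENT,
}
print(measurability_band(outputs))  # High
```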

Dimension 3: Data Availability Score

What it measures: Whether the inputs your work requires are already digital, structured, and accessible.

This is the dimension most people miss. AI doesn't just need to be able to do your work — it needs the inputs to do your work. The biggest moat many knowledge workers have isn't the complexity of their analysis. It's that the data their analysis requires lives in relationships, informal conversations, unwritten institutional history, and tacit organizational knowledge that isn't in any system.

Ask yourself: Where does the information I need to do my job well actually come from?

- Primarily from databases, reports, and documented sources: High data availability vulnerability

- Mix of documented and informal sources: Moderate vulnerability

- Primarily from relationships, observations, and informal networks: Lower vulnerability

- From unique personal experience or tacit expertise built over years: Lowest vulnerability

A financial analyst pulling from Bloomberg and internal ERP systems: high data availability vulnerability. A sales leader whose judgment depends on knowing which client is in a political battle with their CFO: low data availability vulnerability. The information simply doesn't exist in a form AI can access.
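The two examples above can be encoded the same way. This sketch assumes you tag each input your work depends on as documented, informal, or tacit, and it treats two-thirds of inputs from one kind of source as "primarily"; that cutoff, the labels, and the input names are all assumptions, since the article gives no number.

```python
from collections import Counter

# Hypothetical labels: tag each input your work depends on with one of these.
DOCUMENTED = "documented"  # databases, reports, written sources
INFORMAL = "informal"      # relationships, observation, informal networks
TACIT = "tacit"            # expertise or experience only you carry
LABELS = (DOCUMENTED, INFORMAL, TACIT)


def data_availability_band(inputs: dict[str, str], primary: float = 2 / 3) -> str:
    """Map the mix of input sources to the Dimension 3 bands.

    `primary` is an assumed cutoff for what counts as "primarily" from one
    kind of source.
    """
    if not inputs:
        raise ValueError("list at least one input")
    counts = Counter(inputs.values())
    if set(counts) - set(LABELS):
        raise ValueError(f"labels must be one of {LABELS}")
    share = {label: counts[label] / len(inputs) for label in LABELS}
    if share[DOCUMENTED] >= primary:
        return "High"
    if share[TACIT] >= primary:
        return "Lowest"
    if share[INFORMAL] + share[TACIT] >= primary:
        return "Lower"
    return "Moderate"


analyst = {"Bloomberg prices": DOCUMENTED, "ERP actuals": DOCUMENTED, "budget file": DOCUMENTED}
sales_leader = {
    "CRM pipeline": DOCUMENTED,
    "which client is fighting their CFO": INFORMAL,
    "who really signs off at each account": INFORMAL,
    "feel for buyer mood from years of calls": TACIT,
}
print(data_availability_band(analyst))       # High
print(data_availability_band(sales_leader))  # Lower
```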

Dimension 4: Human Judgment Requirement Score

What it measures: How often your work requires navigating genuine ambiguity that resists reduction to rules.

This is different from task complexity. A technically complex task — running a multivariate regression, debugging a distributed system — can be highly automatable if the success criteria are clear. What AI cannot yet reliably do is navigate situations where the correct framing of the problem is itself the deliverable.

Consider these scenarios:

- You're given two contradictory data sets and asked what to do. Is this a data quality problem, a measurement design problem, or a signal about a real underlying change? The answer determines everything, and there's no procedure for finding it.

- A client is sending signals that they're unhappy, but they're not saying it directly. Reading what's unsaid and deciding how to respond requires a kind of contextual social intelligence that remains genuinely hard to systematize.

- Your organization is about to make a decision that you believe is wrong, based on pattern recognition from five years of institutional experience. Intervening requires knowing when to speak, to whom, and in what register — none of which is in any playbook.

Score this by estimating what percentage of consequential decisions in your role require navigating this kind of genuine ambiguity.

- Less than 20% of consequential decisions involve genuine ambiguity: High judgment vulnerability

- 20–40%: Moderate judgment vulnerability

- 41–60%: Lower judgment vulnerability

- 61%+: Lowest judgment vulnerability
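Estimating this percentage from memory tends to flatter you, so a short decision log is more reliable. A minimal sketch, assuming you record each consequential decision over a month with a yes/no flag for whether the framing itself had to be worked out; the decisions listed are hypothetical, and values between the published bands (say 40.5%) fall into the less vulnerable band.

```python
def judgment_band(decisions: list[tuple[str, bool]]) -> str:
    """Map the share of consequential decisions involving genuine ambiguity
    to the Dimension 4 bands. Each entry is (decision, was_ambiguous)."""
    if not decisions:
        raise ValueError("log at least one consequential decision")
    pct = 100 * sum(ambiguous for _, ambiguous in decisions) / len(decisions)
    if pct < 20:
        return "High"
    if pct <= 40:
        return "Moderate"
    if pct <= 60:
        return "Lower"
    return "Lowest"


# Hypothetical month of consequential decisions; True = no rule or
# procedure settled it, and the framing itself had to be worked out.
log = [
    ("approve vendor renewal", False),
    ("choose forecast method", False),
    ("decide whether conflicting sales data signals a real shift", True),
    ("pick which stakeholders hear bad news first", True),
    ("set quarterly reporting template", False),
]
print(judgment_band(log))  # 2 of 5 = 40% -> Moderate
```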

Your Composite Vulnerability Score

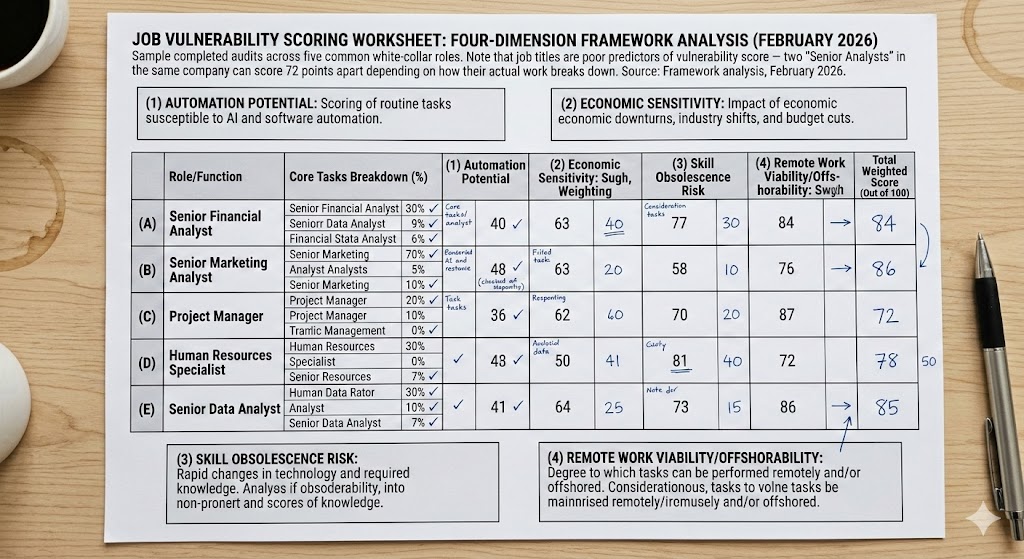

Sample completed audits across five common white-collar roles. Note that job titles are poor predictors of vulnerability score — two "Senior Analysts" in the same company can score 72 points apart depending on how their actual work breaks down. Source: Framework analysis, February 2026


Add your scores across all four dimensions. The scoring isn't meant to be mathematically precise — it's meant to force honest self-assessment across dimensions that most career frameworks collapse into "job title."

High vulnerability zone (composite score leans toward documented, measurable, data-rich, low-ambiguity): You have 12–18 months before your role is meaningfully restructured. The time to act is now, not when the reorg is announced.

Moderate vulnerability zone: Your current role has defensible elements, but the hollowing process is underway. Your goal is to concentrate your value in the defensible dimensions while they're still valued.

Lower vulnerability zone: You're not immune — nobody is — but you have more time and more leverage. Use it to understand which aspects of your work score well and deliberately deepen them.
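The article assigns bands rather than points, so "add your scores" needs a conversion. One possible sketch, assuming a 1 to 4 point scale per dimension (4 = most vulnerable) and zone cutoffs on the resulting 4 to 16 range; both the points and the cutoffs are hypothetical choices, not part of the framework as published. Note that Dimension 1 uses a different label set (its top band is "Critical"), so its "High" is worth 3 points rather than 4.

```python
# Hypothetical point scale (4 = most vulnerable). The article assigns bands,
# not points, so these numbers and the zone cutoffs below are assumptions.
STRUCTURE_POINTS = {"Low": 1, "Moderate": 2, "High": 3, "Critical": 4}
OTHER_POINTS = {"Lowest": 1, "Lower": 2, "Moderate": 3, "High": 4}


def composite_score(structure: str, measurability: str, data: str, judgment: str) -> int:
    """Sum the four dimension bands into a score from 4 to 16."""
    return (
        STRUCTURE_POINTS[structure]
        + OTHER_POINTS[measurability]
        + OTHER_POINTS[data]
        + OTHER_POINTS[judgment]
    )


def vulnerability_zone(score: int) -> str:
    """Assumed cutoffs on the 4-16 range."""
    if score >= 12:
        return "High vulnerability zone"
    if score >= 8:
        return "Moderate vulnerability zone"
    return "Lower vulnerability zone"


score = composite_score("High", "High", "Lower", "Moderate")
print(score, vulnerability_zone(score))  # 12 High vulnerability zone
```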

The Three Moves That Actually Matter

Generic advice here is useless. "Learn AI tools" and "develop soft skills" appear in every article and mean nothing without specificity. Here's what the audit actually implies.

Move 1: Relocate Your Value to the Verification Layer

The most durable position in an AI-augmented organization isn't the person who produces the output. It's the person who can evaluate whether the output is correct.

This requires developing deep enough domain knowledge that you can detect when AI is confidently wrong — which it regularly is, in ways that look exactly like when it's confidently right. The organizations that have already restructured their AI-augmented workflows have discovered this the hard way. They don't need less expertise. They need expertise deployed differently, upstream of output rather than in it.

If your audit revealed high task structure and measurability vulnerability, your best move is becoming the person who defines what "correct" looks like in your domain — building and owning the evaluation rubrics, not just producing against them.

Move 2: Build Institutional Memory Into Your Position

Go back to your Dimension 3 score. If you scored high on data availability vulnerability, the question to ask is: What information do I know that isn't in any system?

Now ask the harder question: What could I do to make myself the necessary channel for that information?

This isn't about hoarding knowledge. It's about recognizing that the informal information layer of every organization — the relationships, the history, the unwritten rules — is a genuine structural asset. The people who have made themselves nodes in that network have a defensibility that doesn't appear on any AI readiness assessment.

Concretely: deepen the relationships that produce the information your work requires. Document less of it formally. Become the translator between organizational reality and the analytical layer, not just a contributor to the analytical layer.

Move 3: Move Toward Decisions, Not Deliverables

The unit of human value in AI-augmented organizations is shifting from outputs to decisions. The distinction matters.

Deliverables — reports, analyses, designs, code — are increasingly AI-producible. Decisions — what to build, who to trust, when to act, how to frame a problem — remain human. The people who survive restructuring consistently are those whose role description, even informally, centers on decision authority rather than production output.

If your current role is primarily defined by what you produce, find ways to shift toward owning a decision. Not managing a process that leads to a decision someone else makes — owning the decision, with accountability for the outcome.

This sometimes requires explicit renegotiation with your manager. The conversation is worth having before the reorg makes it moot.

What the Next 18 Months Actually Look Like

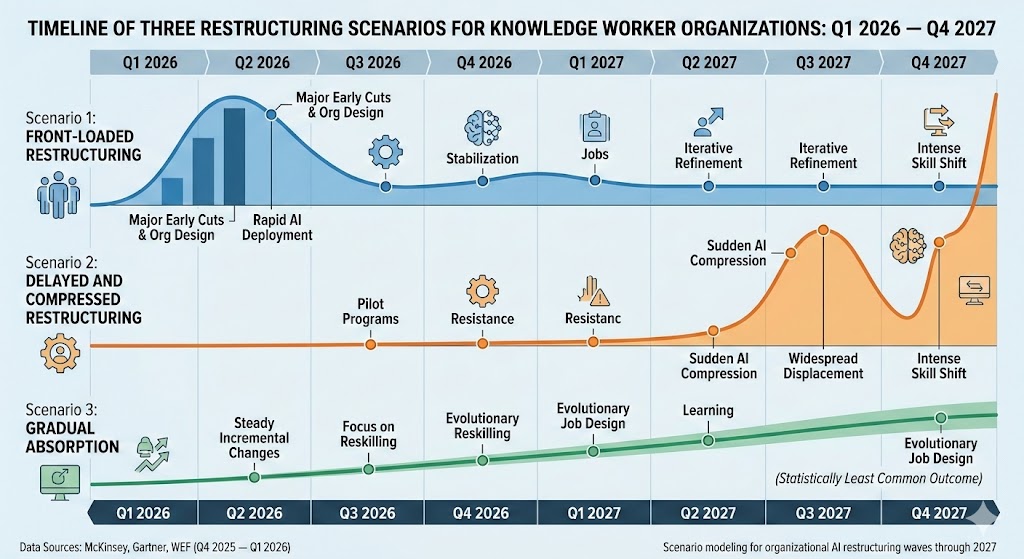

Scenario modeling for organizational AI restructuring waves through 2027. The "gradual absorption" scenario is statistically the least common outcome — most organizations are showing either front-loaded restructuring or delayed restructuring followed by rapid compression. Data: McKinsey, Gartner, WEF (Q4 2025 — Q1 2026)


Scenario 1: Front-Loaded Restructuring

Probability: ~40%

Large organizations that have already run internal AI capability assessments announce significant restructuring in Q1–Q2 2026. This hits analytical, content, and process management roles first. The signal is Q4 2025 earnings calls with unusual emphasis on "operational efficiency" and "workforce optimization" language.

Required catalysts already present: Internal AI deployments showing measurable productivity gains, board pressure for margin improvement, completion of internal capability audits.

If you're in this scenario: The decision has probably already been made. Your goal is demonstrating value in the judgment and verification dimensions before the criteria are formalized in an announced reorg.

Scenario 2: Gradual Task Absorption

Probability: ~25%

Organizations restructure incrementally, eliminating roles through attrition as AI absorbs tasks rather than through announced layoffs. Employment numbers stay stable. Workload per remaining employee increases substantially. Real wages decline.

This looks safer than Scenario 1. It isn't. The trajectory is the same. The timeline is longer. The organizational signal is harder to read.

Scenario 3: Delayed Compression

Probability: ~35%

Organizations delay restructuring through 2026 due to change management friction, regulatory caution, or competitive uncertainty — then compress rapidly in 2027 when the first movers demonstrate the economics. This scenario gives more runway but produces sharper discontinuity.

Window of opportunity: If you're in an organization showing this pattern, you have 12–18 months to reposition. That's actually enough time if you start now.

The Question Your Performance Review Won't Ask

The real question isn't "Am I good at my job?"

It's: Am I good at the parts of my job that can't be automated, and does the organization know that's where my value is?

Because if you're good at the automatable parts — if your reputation rests on your analytical output, your report quality, your process efficiency — you're building on a foundation that's being eroded from underneath you right now. Not eventually. Now.

The organizations that have already restructured didn't eliminate their worst performers first. They eliminated the roles where the performance, however good, had become algorithmically reproducible.

Run this audit honestly. The data doesn't lie. And unlike your boss's internal workforce analysis, it's available to you today.

What's your composite vulnerability score? Drop it in the comments — I'm tracking the distribution across industries and will publish a breakdown in the next piece.