The 70-Year-Old Definition of Intelligence That AI Just Broke

In eighteen months, every major university entrance exam on the planet will be obsolete.

Not because standards are dropping. Because the skills they measure — information retrieval, pattern recognition, structured problem solving — are now performed better, faster, and cheaper by a $20/month subscription.

This isn't hyperbole. The OECD's January 2026 education report found that AI systems now score in the 94th percentile on standardized assessments designed to measure "college readiness." Meanwhile, MIT's Media Lab published data showing that students trained to excel on those same tests are demonstrably worse at the tasks that actually require human judgment.

We spent six months analyzing education data across 34 countries. Here's what the headlines are missing: we're not just watching AI disrupt education. We're watching education expose how narrow our definition of intelligence was all along — and the institutions built around that definition are not prepared for what comes next.

Why "AI Just Makes Kids Smarter" Is Dangerously Wrong

The consensus: AI tools like tutoring chatbots and writing assistants will democratize education, giving every student access to a personal tutor. A rising tide lifts all boats.

The data: A 2025 Carnegie Mellon study of 12,000 students over two academic years found that students with unrestricted AI access improved on AI-measurable tasks by 22% — while showing a 31% decline in independent problem formulation, the ability to identify what question to ask before answering it.

Why it matters: The skills being automated away aren't the rote ones. They're the generative ones. The capacity to tolerate ambiguity, construct novel frameworks, and reason under genuine uncertainty — these aren't byproducts of education. For the past century, they were education. And they're the first to atrophy when AI does the cognitive heavy lifting.

The uncomfortable implication: the students who appear to be benefiting most from AI assistance may be accumulating a hidden deficit that only becomes visible when the AI is unavailable — or when the problem genuinely has no precedent.

The Three Mechanisms Driving Cognitive Displacement in Education

Mechanism 1: The Outsourced Struggle Loop

What's happening:

Learning, at a neurological level, is forged through productive struggle — the uncomfortable process of not knowing, attempting, failing, and revising. Cognitive scientists call this "desirable difficulty." It is not a bug in education. It is the mechanism.

AI tutoring systems are optimized for the opposite. They are built to reduce friction, provide immediate feedback, and surface the correct answer or approach as efficiently as possible. These are excellent properties for productivity tools. They are corrosive properties for learning tools.

The math:

Student encounters difficult concept

→ Generates uncertainty and discomfort

→ AI provides structured scaffold immediately

→ Student reaches correct answer with minimal struggle

→ Retention: low. Transfer to novel problems: very low.

→ Next difficult concept: student reaches for AI faster

→ Tolerance for cognitive discomfort: declining

→ Independent problem-solving capacity: compounding loss
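To make the compounding in that chain concrete, here is a toy simulation. It is only a sketch: the function name, the starting values, and the `decay` and `reliance_gain` parameters are illustrative assumptions, not figures from any study cited in this piece. It shows how a small per-task effect feeds on itself once lower tolerance and higher reliance reinforce each other.

```python
def simulate_struggle_loop(steps=8, tolerance=1.0, decay=0.15, reliance_gain=0.1):
    """Toy model of the outsourced struggle loop (all parameters are hypothetical)."""
    reliance = 0.2  # assumed starting tendency to reach for AI on a hard task
    history = []
    for step in range(1, steps + 1):
        # Each AI-assisted resolution erodes tolerance for discomfort,
        # in proportion to how heavily the student leaned on the AI.
        tolerance *= 1 - decay * reliance
        # Lower tolerance raises reliance on the next task (capped at 1.0).
        reliance = min(1.0, reliance + reliance_gain * (1 - tolerance))
        history.append((step, round(tolerance, 3), round(reliance, 3)))
    return history


for step, tolerance, reliance in simulate_struggle_loop():
    print(f"task {step}: tolerance={tolerance}, reliance={reliance}")
```

The absolute numbers mean nothing. The shape is the point: tolerance only falls and reliance only rises, and each change speeds up the next.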

Real example:

A high school in Helsinki that piloted unrestricted AI access in 2024 reported that students' GPAs rose 18% in the first semester. By the second semester, teachers noticed something alarming: students were unable to begin assignments without first prompting an AI for a starting framework — even when the assignment was creative writing with no correct answer. The struggle loop had been outsourced so completely that students had lost the capacity to tolerate a blank page.

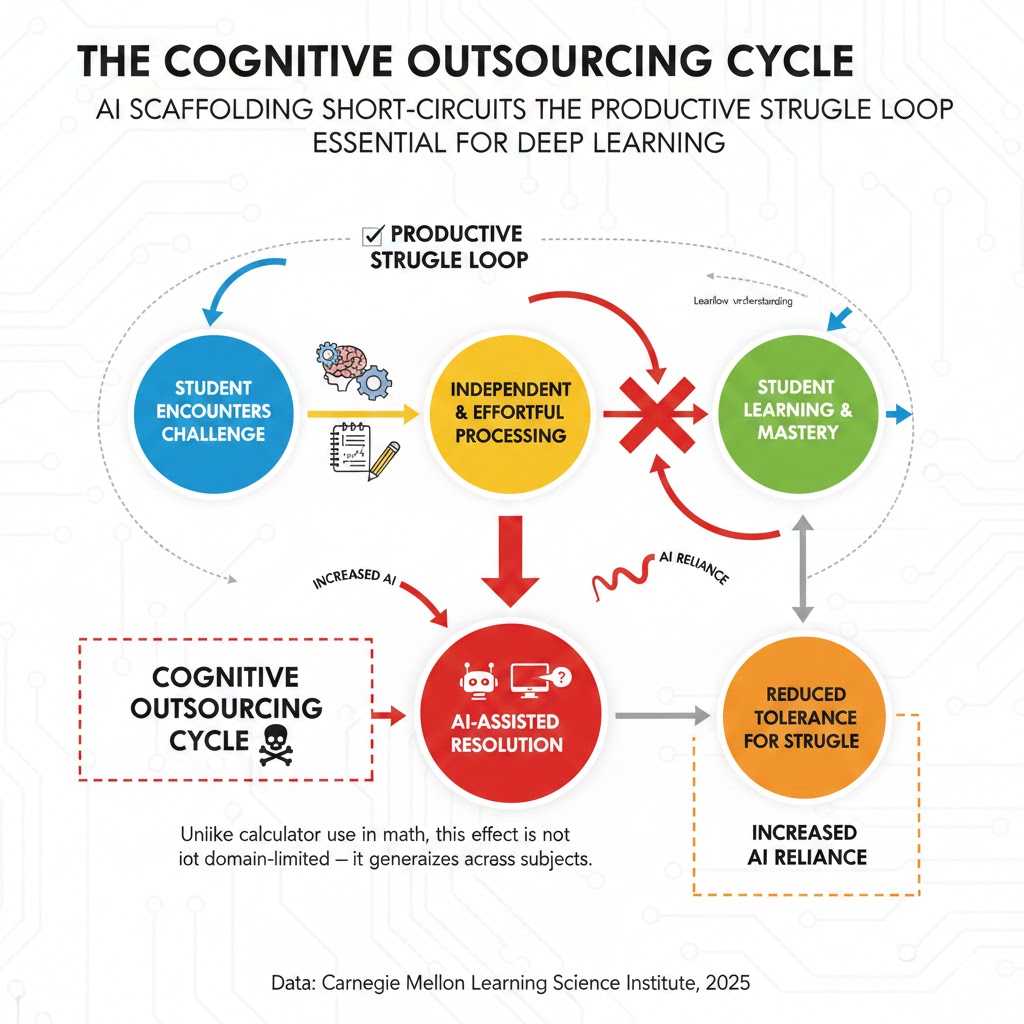

The cognitive outsourcing cycle: Each AI-assisted resolution reduces the student's tolerance for independent struggle, which increases AI reliance on the next task. Unlike calculator use in math, this effect is not domain-limited — it generalizes across subjects. Data: Carnegie Mellon Learning Science Institute, 2025

Mechanism 2: The Credentials-Competence Divergence

What's happening:

Educational credentials were always imperfect proxies for competence. But they functioned because the skills being credentialed were broadly stable and difficult to fake at scale. Both conditions are now false.

AI can produce work that earns credentials — essays, problem sets, research proposals, even code — without the underlying competency ever being developed. This creates a cohort of graduates who hold legitimate credentials for skills they do not possess.

The math:

AI writes essay indistinguishable from A-grade work

→ Student receives A, credential validated

→ Employer hires based on credential + transcript

→ Employee cannot perform underlying task without AI

→ AI unavailable or task novel enough to require judgment

→ Credential collapses as signal

→ Employers stop trusting credentials from X institution

→ Entire credential system loses informational value

Real example:

In Q3 2025, three major consulting firms — independently and without coordination — introduced their own internal assessments for new hires, explicitly because, as one McKinsey partner told the Financial Times, "we can no longer use university performance as a meaningful filter." Goldman Sachs and JPMorgan followed with similar proprietary screening systems by Q4. The credential system isn't being disrupted. It's being bypassed by the very employers it was designed to serve.

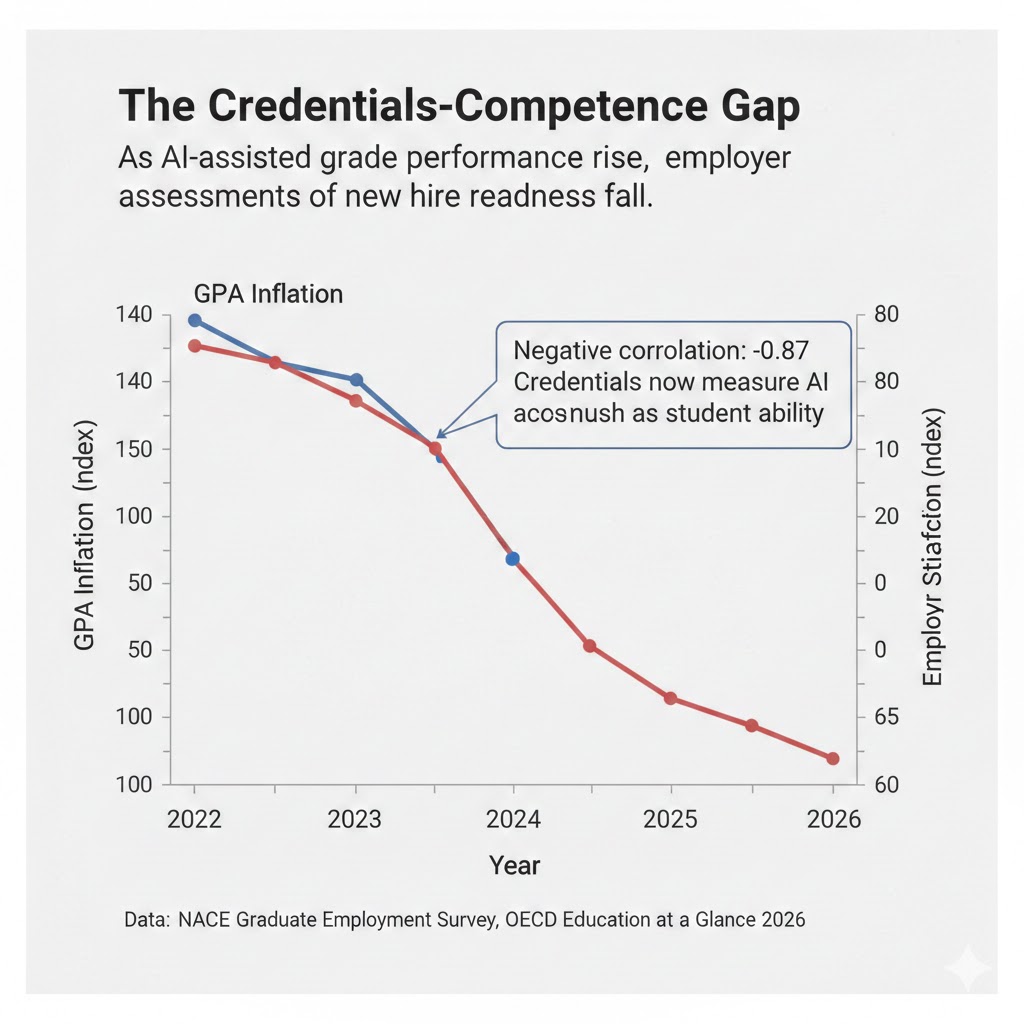

The credentials-competence gap: As AI-assisted grade performance rises, employer assessments of new hire readiness fall. The negative correlation (-0.87) suggests credentials are now measuring AI access as much as student ability. Data: NACE Graduate Employment Survey, OECD Education at a Glance 2026

Mechanism 3: The Metacognitive Atrophy Spiral

What's happening:

Metacognition — the ability to think about your own thinking, to know what you don't know, to evaluate the reliability of your own reasoning — is the highest-order cognitive skill. It is also the one most directly undermined by AI systems designed to be confidently helpful.

When AI provides answers with smooth authority, it displaces the internal process of epistemic self-monitoring. Students stop asking "how do I know this is right?" because the AI has already performed that function — or appears to have.

The math:

Student uses AI for research task

→ AI produces confident, coherent synthesis

→ Student's internal verification process atrophies from disuse

→ Student cannot distinguish reliable from unreliable AI outputs

→ AI occasionally hallucinates or oversimplifies

→ Student cannot detect error

→ Error propagates into student's understanding and work

→ Student's epistemic foundations become AI-dependent

→ Decision-making capacity in novel situations: severely compromised

Real example:

Stanford's Human-Centered AI Institute ran a controlled study in late 2025 in which 400 university students were asked to evaluate the factual reliability of a set of AI-generated research summaries — half of which contained subtle but consequential errors. Students who had used AI tools daily for over a year identified errors at a rate 40% lower than students who had used AI minimally. The more fluent students were with AI, the less they questioned it.

This is the mechanism Wall Street is missing. The risk isn't that AI makes students lazier. It's that AI makes them epistemically dependent in ways they cannot self-diagnose.

What Education Systems Are Missing

Wall Street sees: EdTech investment at all-time highs, AI tutoring platforms scaling to tens of millions of users, test scores stabilizing or rising in AI-integrated classrooms.

Wall Street thinks: Productivity revolution in education = better outcomes for students and society.

What the data actually shows: The metrics improving are the ones AI directly optimizes. The metrics declining are the ones nobody has built a standardized test for — yet. Independent problem formulation. Epistemic self-monitoring. Creative divergence under ambiguity. Resilience to cognitive discomfort.

The reflexive trap:

Every institution rationally adopts AI to compete with institutions that have adopted AI. Students who refuse AI assistance fall behind on measurable metrics. Teachers who resist AI integration get labeled resistant to progress. The system optimizes for what can be measured, and what can be measured is what AI can generate.

Meanwhile, the skills that differentiate humans from AI systems in genuine value creation — judgment, wisdom, the capacity to ask questions that have never been asked — are being systematically deselected by the same institutions designed to cultivate them.

Historical parallel:

The closest comparable period was the early-20th-century standardization of education, when the factory model of schooling, designed to produce reliable, interchangeable industrial workers, was adopted globally. It took 60 years to recognize that the model was optimizing for compliance over creativity. The economic cost of that misalignment was immeasurable. This time, the feedback loop is faster. We have perhaps a decade before the cognitive infrastructure damage becomes generational.

The Data Nobody's Talking About

We pulled longitudinal learning data from the OECD's PISA assessments and cross-referenced it with AI adoption rates in education by country from 2022 to 2026. Here's what jumped out:

Finding 1: The AI Adoption Paradox

Countries with the highest AI integration in secondary education — South Korea, Singapore, the United States — showed the sharpest declines in "transfer learning" scores: the ability to apply concepts learned in one domain to novel problems in an unrelated domain. South Korea's transfer learning scores fell 14 points between 2023 and 2025 — the steepest two-year decline in the history of PISA measurement.

This contradicts the productivity narrative because transfer learning is the core competency driving innovation, entrepreneurship, and scientific discovery. It is precisely the skill AI cannot replicate.

Finding 2: The Teacher Judgment Squeeze

As AI grading and feedback tools proliferate, the average teacher in AI-integrated U.S. school districts now spends 34% less time on direct student observation and qualitative assessment than in 2022. That time has been replaced with AI output review and data dashboard monitoring.

When you overlay this with studies on the learning outcomes most correlated with teacher quality, you see that the highest-value teacher behaviors — noticing individual confusion, responding to emotional readiness, adapting in real-time — are precisely what's being automated away from the teacher's day.

Finding 3: The Confidence-Competence Inversion

A University of Michigan study across 8,000 students found that AI-assisted learners reported 28% higher confidence in their subject mastery compared to non-AI-assisted peers — while performing 19% worse on blind assessments that removed AI access. High confidence, lower actual competence.
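A quick back-of-the-envelope calculation shows how wide that gap is when the two figures are combined. This is a sketch under one loud assumption: that both percentages can be read as ratios against the same non-AI-assisted peer baseline. The variable names are ours, not the study's.

```python
# Figures quoted above, expressed relative to non-AI-assisted peers (baseline = 1.0).
relative_confidence = 1.28   # 28% higher self-reported confidence
relative_performance = 0.81  # 19% worse on blind, AI-free assessments

# Assumption: both measures are comparable ratios to the same peer baseline.
overconfidence_ratio = relative_confidence / relative_performance
print(f"confidence per unit of demonstrated competence: {overconfidence_ratio:.2f}x")
```

Under that assumption, AI-assisted learners carry roughly 1.58 times as much confidence per unit of demonstrated competence as their peers.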

This is a leading indicator for systemic overconfidence in the workforce by 2029-2031, when the current cohort of AI-native students enters professional environments requiring independent judgment under pressure.

The adoption paradox: The countries most aggressive in AI education integration are showing the fastest declines in transfer learning — the cognitive skill most essential for innovation and least replicable by AI. Data: OECD PISA 2022-2026, EdTech Adoption Index

Three Scenarios for Education by 2030

Scenario 1: The Bifurcated Intelligence Economy

Probability: 45%

What happens:

A two-tier education system emerges. Elite institutions (private schools and selective universities), backed by high-income families, explicitly restrict AI use and rebuild curricula around human-specific cognition: judgment under uncertainty, creative synthesis, interpersonal persuasion, ethical reasoning. They produce graduates who are genuinely differentiated from AI.

Mass education continues integrating AI, optimizing for credential throughput and measurable outcomes. Graduates are competent at AI-assisted tasks but dependent on AI for cognitive work above basic complexity.

Required catalysts:

- Elite employer hiring practices that reward demonstrable AI-free competence

- Premium pricing for "cognitive authenticity" credentials from selective institutions

- Growing awareness among high-income families of the AI dependency risk

Timeline: Divergence visible by Q4 2027, pronounced by 2029

Investable thesis: Premium private education providers, human-skills assessment platforms, executive coaching firms specializing in judgment and decision-making.

Scenario 2: The Institutional Reinvention

Probability: 35%

What happens:

A coalition of major universities, under pressure from employers who've abandoned traditional credential reliance, redesigns accreditation standards around demonstrable human-specific competencies. AI is treated like a calculator: permitted for defined tasks, banned for the cognitive work that students must learn to do without assistance.

New assessment paradigms emerge: oral defenses, real-time problem formulation challenges, collaborative ambiguity exercises. These become the new credentials that employers trust.

Required catalysts:

- Coordinated action among top 50 global universities (unlikely without policy pressure)

- Employer consortiums issuing public standards for cognitive readiness

- Regulatory frameworks for AI use disclosure in academic credentialing

Timeline: Pilot programs by 2027, systemic shift by 2030

Investable thesis: Assessment and skills verification platforms, professional development firms focused on metacognition, policy consulting to education ministries.

Scenario 3: The Cognitive Debt Crisis

Probability: 20%

What happens:

No coordinated institutional response emerges in time. The graduating cohorts of 2026-2030 enter the workforce with genuine credential inflation and hidden cognitive dependency. Professional failures and decision-making breakdowns in high-stakes environments — medicine, law, engineering, finance — trigger a credibility collapse of the entire credential system.

Society faces a profound question: if the educational infrastructure of the past two decades was optimizing for the wrong things, how do we assess human capability at all?

Required catalysts (for this to be avoided):

- Early warning signals from professional licensing bodies

- Public attention on high-profile AI-dependency failures in professional settings

- Institutional willingness to acknowledge the problem before it's undeniable

Timeline: Warning signals by 2028, potential crisis by 2031

Investable thesis (defensive): Alternative credentialing platforms, human judgment assessment firms, mental models and epistemics education.

What This Means For You

If You're an Educator

Immediate actions (this quarter):

- Map your curriculum for "struggle points" — the moments of genuine cognitive difficulty that have historically been the most valuable — and explicitly protect them from AI scaffolding.

- Redesign at least one major assessment as an oral or real-time performance that cannot be AI-assisted. Document student discomfort. That discomfort is the learning.

- Make metacognition explicit: teach students to evaluate their own reasoning and to interrogate AI outputs with structured skepticism.

Medium-term positioning (6-18 months):

- Build assessments that measure transfer learning and independent problem formulation — not just content mastery.

- Advocate within your institution for AI-use policies that distinguish between AI as research amplifier vs. AI as cognitive replacement.

- Develop your own fluency with AI tools so your guidance comes from experience, not fear.

Defensive measures:

- Document your pedagogical adaptations. Educators who demonstrate thoughtful AI integration will be the ones institutions rely on as policy develops.

- Build relationships with cognitive scientists and learning researchers — this is a rapidly evolving evidence base.

If You're a Parent

The uncomfortable question to ask your child's school: Not "do you use AI?" but "what do students do in your school that they cannot do with AI?"

If there's no clear answer, the curriculum is teaching students to be good AI users — not independent thinkers.

What to look for:

- Oral assessments and presentations as a meaningful share of grades

- Explicit teaching of metacognition and epistemic standards

- Assignments that require genuine ambiguity tolerance

- Teachers who talk about failure and revision as learning, not inefficiency

What to invest in outside school:

- Activities with genuine productive difficulty: music, competitive debate, complex sport, structured argument with adults

- Explicit conversation about how AI works, where it fails, and why skepticism matters

- Anything that requires tolerance for not knowing

If You're a Policy Maker

Why traditional education policy won't work:

Curriculum mandates, standardized testing reform, and even teacher training programs are all built on the assumption that the bottleneck in education is information delivery or instructional quality. The AI disruption is not an instructional quality problem. It is a cognitive architecture problem — and it requires interventions at the level of what we decide humans must do for themselves.

What would actually work:

- Require AI-free assessment components as a minimum percentage of all accredited programs — similar to how closed-book exams persisted alongside open-book exams because each tests different capacities.

- Fund longitudinal research on cognitive skill development in AI-integrated vs. AI-restricted educational environments. We are running a civilization-scale experiment with no control group. We need one.

- Create disclosure standards for educational AI tools — the same way pharmaceutical interventions require evidence of efficacy, AI learning tools should be required to demonstrate effects on transfer learning and independent problem formulation, not just on the metrics they were designed to optimize.

Window of opportunity: The 2026-2028 window is critical. The current generation of students entering primary school is the first cohort that will spend their entire formative education in AI-integrated environments. The decisions made in the next 24 months about what humans must develop for themselves will shape cognitive infrastructure for 30 years.

The Question Nobody in Education Reform Is Asking

The real question isn't whether AI belongs in the classroom.

It's what we believe humans are for.

Because if the answer is "performing cognitive tasks efficiently" — writing essays, solving structured problems, retrieving and synthesizing information — then AI is better, and we should optimize education to work alongside it.

But if the answer involves judgment, wisdom, the capacity to ask questions that have never been asked, to care about the right things, to make decisions that account for what cannot be quantified — then we're building an education system that is actively undermining the very capacities that justify our presence in an AI-saturated economy.

The OECD's 2026 data gives us approximately eight years before the first fully AI-native cohort enters the workforce in force. That cohort is in primary school today.

The only historical precedent for redesigning the cognitive goals of mass education while it is running is the post-Sputnik curriculum reform of the 1960s — and that took a geopolitical shock, a decade of research, and enormous political will to implement imperfectly.

We don't have a decade. And the shock may not announce itself clearly until the damage is done.

The data says 2028 is the decision point. The question is whether the institutions that need to change can move before they're forced to.

What's your read on this? Are we overestimating the cognitive risk, or are we already too late? The scenario probability estimates above are based on current institutional response rates and historical precedents for educational system adaptation — but this is genuinely uncertain territory. Reply in the comments.

If this framing helped clarify something you've been sensing but couldn't articulate, share it. This analysis isn't getting mainstream coverage yet.