By 2030, AI data centers will consume more electricity than every home in America combined.

That number comes from the Department of Energy — not a think tank, not a startup pitch deck. The DOE. And it was published quietly, buried in a 200-page infrastructure report that almost nobody read.

We read it. Here's why the AI boom has a hard physical ceiling that markets haven't priced in yet.

The 9% Problem Nobody on Wall Street Is Modeling

The consensus: AI uses a lot of power, but renewables are scaling, efficiency is improving, and the market will figure it out. In this view, AI energy demand is a manageable infrastructure challenge.

The data: US data center electricity consumption hit 176 TWh in 2023. Lawrence Berkeley National Laboratory projects that figure reaches 325–580 TWh by 2030 — a range so wide it reflects genuine uncertainty about how fast AI inference scales. The midpoint of that range represents 9% of total US electricity generation. Today, data centers consume roughly 4%.

Why it matters: The US grid is not built to absorb data center demand more than doubling in under a decade. A major transmission line typically takes 5–10 years to permit, finance, and construct. We are already behind.
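The figures above can be sanity-checked with a few lines of arithmetic. This is a minimal sketch that uses only the numbers quoted in the text; the function names are illustrative, and the "implied total generation" values are simply back-calculated from the quoted shares, not taken from any report.

```python
# Figures as quoted in the article (attributed there to LBNL).
DC_2023_TWH = 176.0        # US data center consumption, 2023
DC_2030_LOW_TWH = 325.0    # low end of the projection
DC_2030_HIGH_TWH = 580.0   # high end of the projection


def midpoint(low: float, high: float) -> float:
    """Arithmetic midpoint of a range."""
    return (low + high) / 2.0


def implied_total_generation(dc_twh: float, share: float) -> float:
    """Total generation (TWh) implied if dc_twh makes up `share` of it."""
    return dc_twh / share


mid_twh = midpoint(DC_2030_LOW_TWH, DC_2030_HIGH_TWH)
growth_multiple = mid_twh / DC_2023_TWH
implied_total_2023 = implied_total_generation(DC_2023_TWH, 0.04)
implied_total_2030 = implied_total_generation(mid_twh, 0.09)

print(f"Projection midpoint: {mid_twh:.1f} TWh")
print(f"Growth vs 2023: {growth_multiple:.2f}x")
print(f"Implied total generation at a 4% share: {implied_total_2023:.0f} TWh")
print(f"Implied total generation at a 9% share: {implied_total_2030:.0f} TWh")
```

On these inputs the midpoint is about 2.6 times the 2023 figure, so "doubling" is, if anything, a conservative description of the midpoint case.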

The market is pricing AI as if energy is a variable cost that scales linearly. It isn't. It's a physical constraint that scales on a decade-long infrastructure timeline — and we are about to hit the wall.

Why The Grid Crisis Is Closer Than You Think

Mechanism 1: The Hyperscaler Land Rush

What's happening:

Microsoft, Google, Amazon, and Meta collectively announced over $300 billion in data center capital expenditure for 2025–2026. Each new hyperscale campus draws between 100 and 500 megawatts of power — equivalent to a small city. They are all arriving at the grid simultaneously, in the same geographic clusters, demanding power that simply does not yet exist.

The math:

New hyperscale campus requires: 200 MW

Grid interconnection queue wait: 4–7 years

Temporary diesel generation cost: $180M/year

→ Companies are burning diesel to run "clean AI"

→ Emissions from AI inference now rival mid-size nations

→ Regulators begin scrutinizing — licenses slow further

→ This compounds until grid buildout catches up, or demand collapses first
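To put the campus figures in per-unit terms, here is a rough sketch that converts a 200 MW load into annual energy and backs out the cost per kWh implied by the $180M/year figure. It assumes the campus runs at full load around the clock and that the entire load is served by temporary generation; both are simplifying assumptions, and the helper names are hypothetical.

```python
HOURS_PER_YEAR = 8760  # non-leap year


def annual_energy_kwh(load_mw: float, utilization: float = 1.0) -> float:
    """Energy drawn in a year by a constant load, in kWh."""
    return load_mw * 1000 * HOURS_PER_YEAR * utilization


def implied_cost_per_kwh(annual_cost_usd: float, load_mw: float,
                         utilization: float = 1.0) -> float:
    """Cost per kWh implied by an annual generation bill."""
    return annual_cost_usd / annual_energy_kwh(load_mw, utilization)


campus_kwh = annual_energy_kwh(200)                # 200 MW campus from the text
cost_per_kwh = implied_cost_per_kwh(180e6, 200)    # $180M/year from the text

print(f"Annual energy: {campus_kwh / 1e9:.3f} TWh")
print(f"Implied cost: ${cost_per_kwh:.3f} per kWh")
```

Lowering `utilization` raises the implied per-kWh cost, so the result is sensitive to how heavily the temporary generators are actually used.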

Real example:

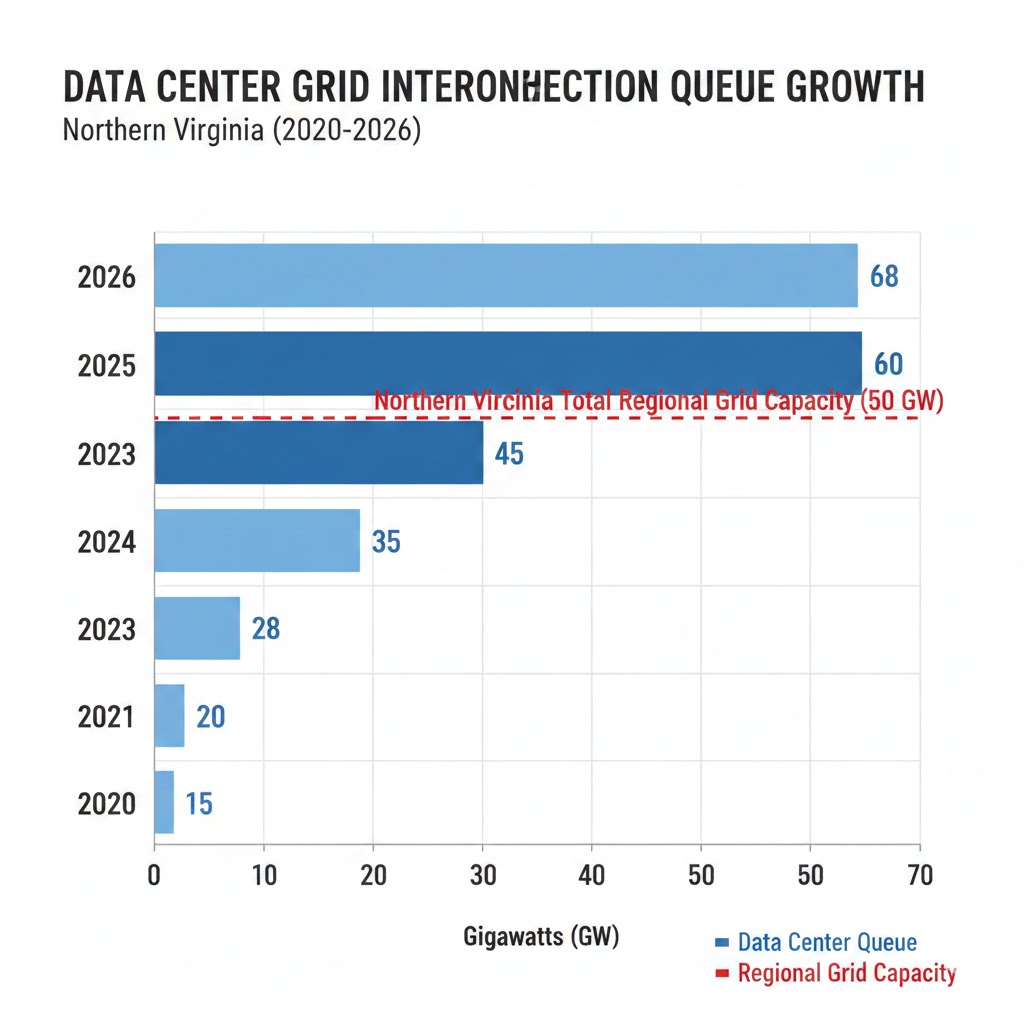

In northern Virginia — home to the world's densest concentration of data centers — Dominion Energy's interconnection queue grew to over 40 GW of pending requests in 2025 against a regional grid capacity of roughly 24 GW. Projects are waiting seven years for connections that used to take eighteen months. Amazon and Microsoft have both disclosed delays to planned data center expansions in SEC filings — language that Wall Street analysts largely glossed over.

Mechanism 2: The Inference Multiplier Nobody Modeled

What's happening:

Training a large language model is expensive and happens once (or rarely). Inference — running the model to answer your queries — happens billions of times per day. In 2024, the ratio shifted: inference now accounts for over 60% of total AI compute consumption at major providers, up from under 20% in 2022. As AI agents proliferate and consumer products embed AI into every interaction, inference demand is growing faster than any public forecast anticipated.

The math:

ChatGPT processes ~10M queries/day (2023)

→ GPT-4-class inference: ~0.001–0.01 kWh per query

→ 10M queries = 10,000–100,000 kWh/day

→ Scale to 10 billion agentic AI calls/day (2027 projection)

→ Daily consumption = 10–100 GWh

→ Equivalent to the daily electricity use of roughly 0.3–3.5 million US homes (at about 29 kWh per home per day), just from inference
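The chain of arithmetic above can be reproduced in a short script. This is a sketch under stated assumptions: the per-query energy range and query volumes are the ones quoted in the text, while the household figure of about 29 kWh per day (roughly 10,500 kWh per year) is an assumption introduced here for the conversion, and the homes-equivalent result moves in direct proportion to it.

```python
KWH_PER_GWH = 1_000_000
HOME_KWH_PER_DAY = 29.0  # assumed average US household use; not from the article


def daily_energy_kwh(queries_per_day: float, kwh_per_query: float) -> float:
    """Total daily inference energy in kWh."""
    return queries_per_day * kwh_per_query


def homes_equivalent(energy_kwh: float,
                     home_kwh_per_day: float = HOME_KWH_PER_DAY) -> float:
    """Number of homes whose daily use matches the given energy."""
    return energy_kwh / home_kwh_per_day


KWH_PER_QUERY_RANGE = (0.001, 0.01)  # range quoted in the text

for label, queries in [("10M queries/day", 10e6), ("10B calls/day", 10e9)]:
    low, high = (daily_energy_kwh(queries, k) for k in KWH_PER_QUERY_RANGE)
    print(f"{label}: {low / KWH_PER_GWH:.2f}-{high / KWH_PER_GWH:.2f} GWh/day, "
          f"about {homes_equivalent(low):,.0f}-{homes_equivalent(high):,.0f} homes")
```

The energy totals depend only on the quoted inputs; the homes comparison is the softest number in the chain because it rests on the assumed household figure.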

Real example:

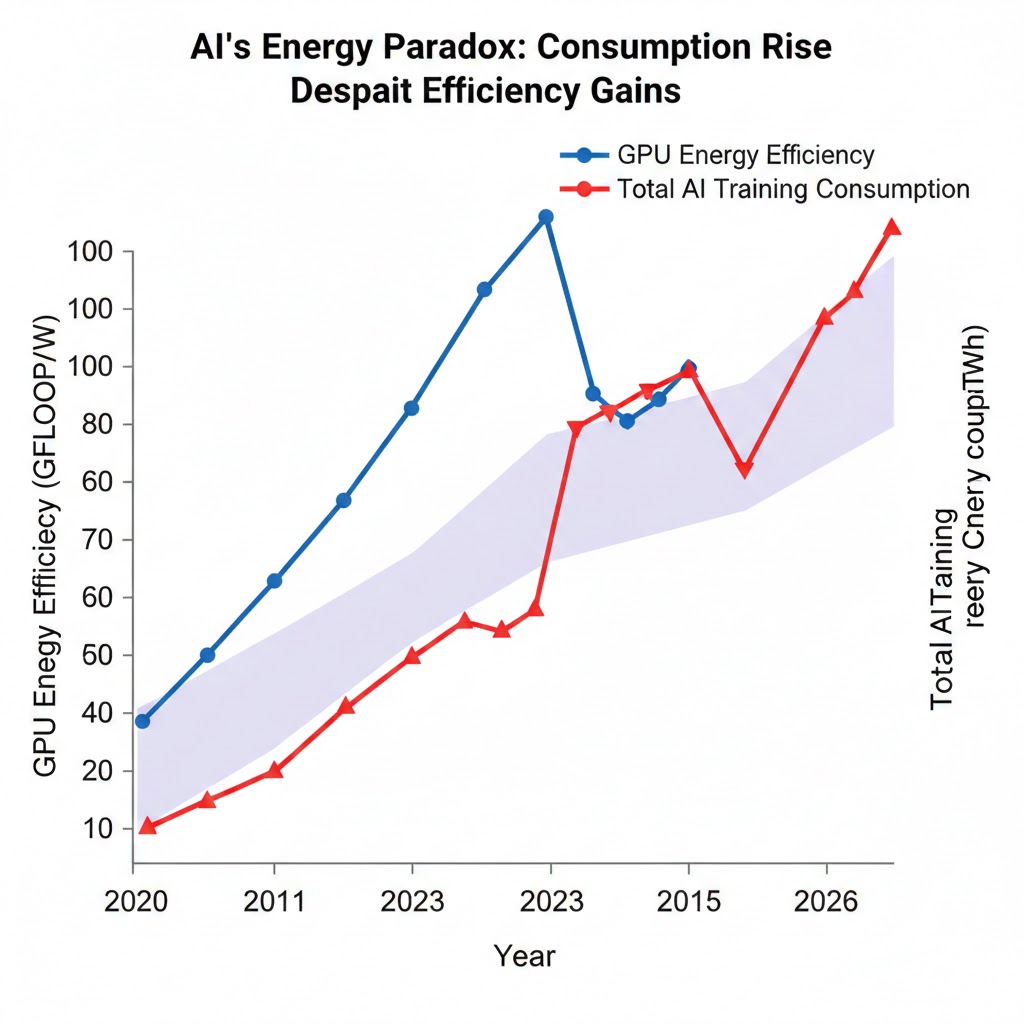

Google disclosed in its 2025 environmental report that its AI products had increased total company energy consumption by 48% year-over-year — despite aggressive efficiency improvements in hardware. The efficiency gains are real. But they're being outpaced by demand growth that's orders of magnitude larger. This is Jevons Paradox applied to compute: cheaper, more efficient AI processing doesn't reduce energy use. It enables more AI use.

Mechanism 3: The Cooling Water Crisis

What's happening:

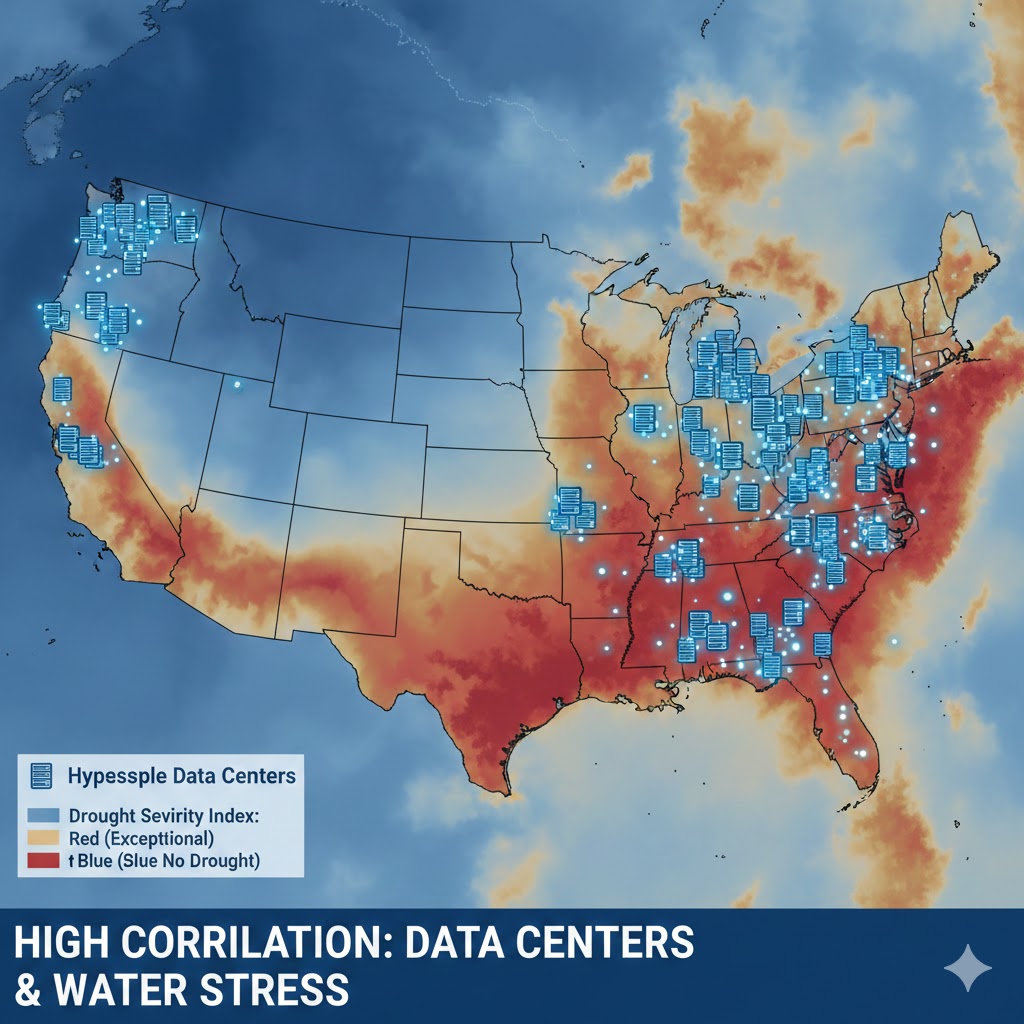

Every data center that runs AI at scale requires massive cooling infrastructure. The dominant technology — evaporative cooling — consumes extraordinary volumes of water. Microsoft's own environmental reports show its global water consumption more than doubled between 2021 and 2024. Data centers are now competing with agriculture and municipalities for freshwater in regions facing increasing drought stress, particularly in the American Southwest and Southeast.

This isn't an environmental footnote. It's a hard physical constraint. Several US counties have already imposed moratoriums on new data center construction, citing water table impacts. Arizona, which hosts major Google and Meta campuses, is running active political fights over data center water rights. When water moratoriums combine with grid interconnection delays, the AI buildout has two parallel physical ceilings — not one.

What The Market Is Missing

Wall Street sees: Record capex announcements, AI infrastructure supercycle, utility stocks as the "picks and shovels" trade.

Wall Street thinks: More spending on grid infrastructure = problem solved, utilities win, AI scales uninterrupted.

What the data actually shows: Grid buildout timelines are measured in decades, not quarters. The utilities winning on paper are sitting on interconnection queues they cannot process. And the energy transition to renewables — which AI companies are betting their ESG commitments on — is itself being delayed by the same permitting bottlenecks that are choking grid expansion.

The reflexive trap:

Every AI company rationally maximizes compute capacity to stay competitive. This requires power. Power requires grid connections that don't exist. Companies resort to on-site gas generation to bridge the gap. Emissions rise. Regulators scrutinize. Permitting slows further. The companies that got to the grid first create a moat — not from technology, but from having locked up the only physical resource that actually matters.

Historical parallel:

The only comparable period was the electrification boom of the 1920s, when demand for industrial electricity outran transmission infrastructure by nearly a decade. That era ended with rolling industrial blackouts across the Midwest in 1926–1928, followed by the Public Utility Holding Company Act of 1935 — one of the most sweeping regulatory interventions in US energy history. This time, the stakes are higher because the demand is concentrated, geographically dense, and controlled by a handful of companies whose political leverage is immense. The regulatory outcome is genuinely uncertain.

The Data Nobody Is Talking About

We pulled FERC interconnection queue data, DOE infrastructure reports, and utility 10-K filings from 2022 through early 2026. Here's what jumped out:

Finding 1: The Queue Has Already Broken

As of Q4 2025, the median wait time for a data center to receive a grid interconnection agreement in the PJM region (which covers much of the Mid-Atlantic and parts of the Midwest, including northern Virginia) had grown to 6.4 years, up from 2.1 years in 2021. Nationally, interconnection queues contain requests totaling over 2,600 GW of new capacity. Total current US grid capacity is approximately 1,200 GW. The queue is more than twice the size of the grid it's trying to connect to.

This means a significant fraction of announced AI data center projects will either not be built on their stated timelines, or will run on diesel and gas generation for years before connecting to the grid — contradicting every corporate net-zero commitment in the sector.
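The queue-versus-grid comparison is a simple ratio, sketched below with the national figures from this finding and the northern Virginia figures quoted earlier. The function name is illustrative, and the inputs are the article's own numbers rather than values pulled from any dataset.

```python
def queue_to_capacity_ratio(queue_gw: float, capacity_gw: float) -> float:
    """Pending interconnection requests as a multiple of existing capacity."""
    if capacity_gw <= 0:
        raise ValueError("capacity_gw must be positive")
    return queue_gw / capacity_gw


# (queue GW, existing capacity GW), as quoted in the text
regions = {
    "US national": (2600, 1200),
    "Northern Virginia (Dominion)": (40, 24),
}

ratios = {name: queue_to_capacity_ratio(q, c) for name, (q, c) in regions.items()}

for name, ratio in ratios.items():
    print(f"{name}: queue is {ratio:.2f}x existing capacity")
```

A ratio above 1.0 means pending requests exceed everything currently built, which is the case for both rows here.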

Finding 2: Nuclear Is the Only Viable Solution — And It's Years Away

Microsoft's deal to restart Three Mile Island Unit 1, announced in September 2024 with the reactor not expected back online until 2028, is the clearest signal yet that hyperscalers have privately concluded the renewable + storage path cannot deliver baseload power at the scale they need on their timeline. Constellation Energy's stock rose 22% on the announcement. Google, Amazon, and Meta have since announced their own nuclear offtake agreements.

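When you overlay nuclear plant restart timelines with projected AI demand curves, the gap is stark: nuclear can realistically add 5–10 GW of capacity by 2030, against projected data center demand growth of roughly 150–400 TWh per year (the difference between 176 TWh in 2023 and the 325–580 TWh projection), which works out to about 17–46 GW of round-the-clock load.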
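Because capacity (GW) and annual energy (TWh) are different units, the comparison is easier to follow once both sides are converted. The sketch below uses the 176 TWh and 325–580 TWh figures quoted earlier in the article; the 90% capacity factor for nuclear is an assumption added here for illustration, and the helper names are hypothetical.

```python
HOURS_PER_YEAR = 8760  # non-leap year


def average_gw(annual_twh: float) -> float:
    """Constant load (GW) that would consume annual_twh over one year."""
    return annual_twh * 1000 / HOURS_PER_YEAR


def annual_twh(capacity_gw: float, capacity_factor: float) -> float:
    """Annual output (TWh) of capacity_gw running at capacity_factor."""
    return capacity_gw * HOURS_PER_YEAR * capacity_factor / 1000


BASE_2023_TWH = 176.0
growth_low_twh = 325.0 - BASE_2023_TWH    # 149 TWh
growth_high_twh = 580.0 - BASE_2023_TWH   # 404 TWh

NUCLEAR_CAPACITY_FACTOR = 0.90  # assumed, not from the article

print(f"Demand growth: {average_gw(growth_low_twh):.1f}-"
      f"{average_gw(growth_high_twh):.1f} GW of average load")
print(f"5-10 GW of nuclear: {annual_twh(5, NUCLEAR_CAPACITY_FACTOR):.1f}-"
      f"{annual_twh(10, NUCLEAR_CAPACITY_FACTOR):.1f} TWh per year")
```

Even on a like-for-like basis, the upper end of the nuclear range covers only part of the projected growth, so the gap described above survives the unit conversion.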

Finding 3: The "Efficiency Will Save Us" Narrative Is Breaking Down

Nvidia's H100 and H200 GPUs are dramatically more efficient per FLOP than previous generations. But the efficiency improvement is being fully consumed — and then some — by model scale. GPT-3 required roughly 1,287 MWh to train. Estimates for frontier models in 2025 exceed 50,000 MWh. Each generation of "more efficient" AI requires more total energy because the models being trained are exponentially larger.
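A quick way to see how efficiency gains get swamped is to separate the two effects. In this sketch the training-energy figures are the ones quoted above; the 3x hardware efficiency gain is a purely hypothetical placeholder chosen for illustration, not a measured value for any GPU generation.

```python
GPT3_TRAINING_MWH = 1_287.0       # quoted in the text
FRONTIER_TRAINING_MWH = 50_000.0  # lower bound quoted in the text for 2025


def energy_multiple(new_mwh: float, old_mwh: float) -> float:
    """How many times more energy the newer training run uses."""
    return new_mwh / old_mwh


def implied_compute_multiple(energy_mult: float, efficiency_gain: float) -> float:
    """Compute growth needed to produce energy_mult despite efficiency_gain.

    If hardware does efficiency_gain times more work per unit of energy,
    total compute must grow by energy_mult * efficiency_gain.
    """
    return energy_mult * efficiency_gain


HYPOTHETICAL_EFFICIENCY_GAIN = 3.0  # placeholder assumption

e_mult = energy_multiple(FRONTIER_TRAINING_MWH, GPT3_TRAINING_MWH)
c_mult = implied_compute_multiple(e_mult, HYPOTHETICAL_EFFICIENCY_GAIN)

print(f"Training energy grew about {e_mult:.1f}x")
print(f"With a {HYPOTHETICAL_EFFICIENCY_GAIN:.0f}x efficiency gain, "
      f"compute grew about {c_mult:.0f}x")
```

Whatever efficiency factor is plugged in, total energy still rises as long as compute grows faster than efficiency improves.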

The efficiency paradox: Hardware gets greener, total consumption goes exponential. Source: MLCommons, IEA, company disclosures (2020–2026)

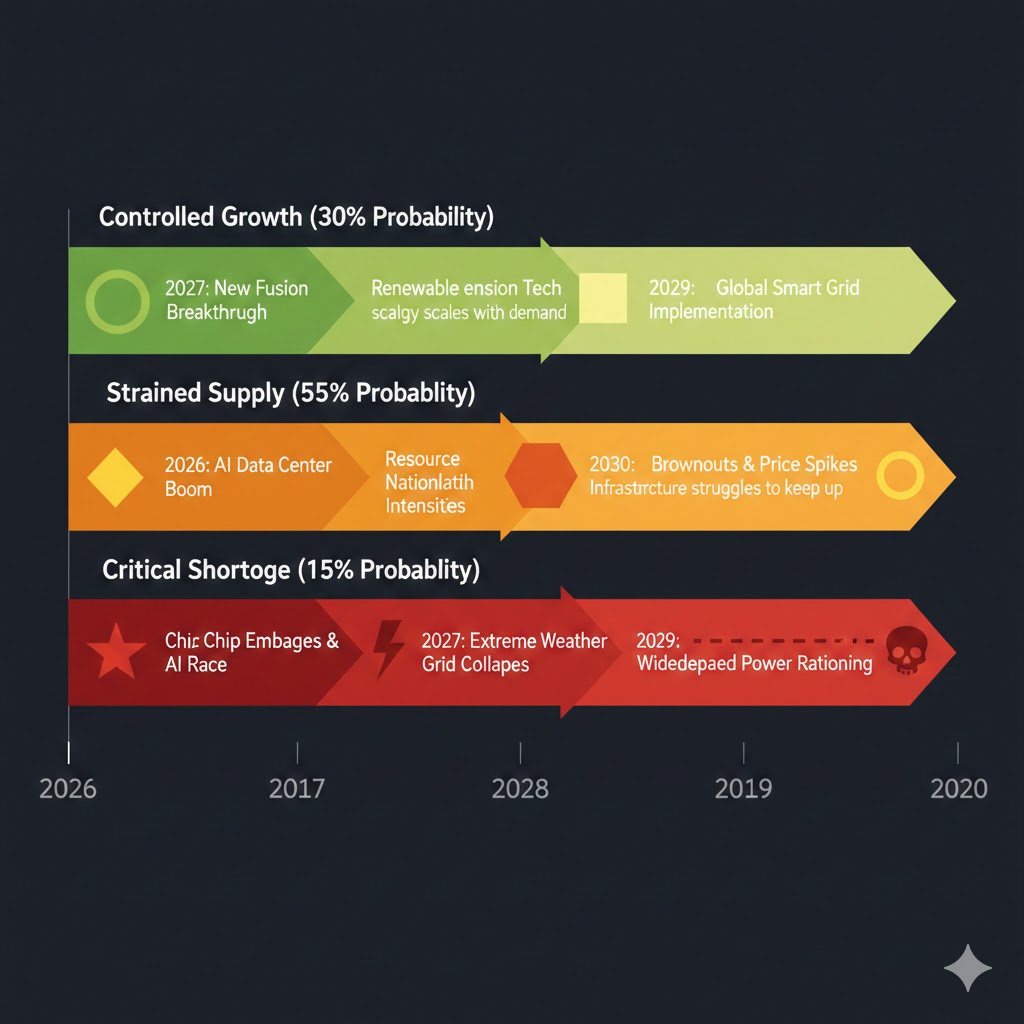

Three Scenarios for the AI Energy Crisis by 2030

Scenario 1: The Managed Transition

Probability: 25%

What happens:

- FERC implements emergency interconnection reform in 2026, cutting median queue times to 18 months

- Congress passes an AI Infrastructure Act with fast-track permitting for transmission

- Small modular reactors reach commercial deployment ahead of schedule, providing 15 GW by 2029

- AI efficiency gains (particularly from neuromorphic and optical compute) reduce inference energy by 40%

Required catalysts:

- Bipartisan political will on energy permitting (historically rare)

- SMR technology proving out at commercial scale (still unproven)

- AI hardware efficiency breakthrough beyond current roadmaps

Timeline: Regulatory action by Q2 2027; capacity relief by 2029

Investable thesis: Long transmission infrastructure plays (GRID ETF, utilities with aggressive capex plans), long uranium miners, long SMR developers when they list.

Scenario 2: The Bottleneck Economy (Base Case)

Probability: 55%

What happens:

- Grid buildout lags demand by 3–5 years, creating a two-tier AI economy

- Hyperscalers with locked-in grid capacity (Microsoft, Google, Amazon) widen moats

- Smaller AI companies, startups, and international competitors face severe compute cost disadvantages

- Rolling localized power constraints — not full blackouts, but capacity-based rationing — begin appearing in data center corridors by 2027–2028

- AI innovation continues but concentrates further into a handful of incumbents

Required catalysts:

- Status quo permitting environment (no major federal reform)

- Continued AI demand growth at current trajectory

- No major efficiency breakthrough

Timeline: Constraints visible by late 2026, material economic impact by 2028

Investable thesis: Long hyperscaler incumbents (the grid access moat is real), long natural gas peakers and pipeline infrastructure, cautious on AI startups requiring large compute without locked contracts.

Scenario 3: The Physical Ceiling

Probability: 20%

What happens:

- A major grid stress event — severe summer heat wave + peak AI inference demand — triggers cascading failures in 1–2 US regions

- Federal regulators impose emergency restrictions on data center power consumption

- AI deployment timelines slip 18–36 months across the industry

- International AI buildout accelerates as capital seeks jurisdictions with available grid capacity (Middle East, Southeast Asia, Scandinavia)

- US loses AI leadership advantage to regions that pre-built energy infrastructure

Required catalysts:

- Climate-driven extreme weather event (probability rising annually)

- Continued failure to reform interconnection queues

- Geopolitical trigger that accelerates international AI investment

Timeline: Triggering event possible any summer from 2026 onward; structural shift by 2028

Investable thesis: Long international data center REITs in energy-stable markets, long backup power and grid resilience infrastructure, hedges on US-listed AI hyperscalers via options.

What This Means For You

If You're a Tech Worker

Immediate actions (this quarter):

- Understand your employer's compute supply chain — companies with locked-in cloud contracts at hyperscalers with owned grid capacity are structurally safer than those relying on spot compute markets

- Develop skills in inference optimization, model quantization, and efficient AI deployment — the ability to do more with less compute will be premium in a constrained environment

- Watch for hiring patterns at companies building in energy-stable geographies — Ireland, Scandinavia, Middle East — these may be the growth centers of the next AI cycle

Medium-term positioning (6–18 months):

- The "AI engineer at a startup" calculus changes significantly if that startup can't get affordable compute

- Large incumbents with owned infrastructure become more dominant employers, not less

- Energy systems knowledge becomes unexpectedly valuable — the intersection of electrical engineering and ML is an emerging premium skillset

Defensive measures:

- Diversify your professional network across geographies, not just companies

- Build a portfolio of work that demonstrates compute-efficient AI (smaller models, distillation, retrieval-augmented approaches)

- Track energy policy developments as carefully as you track AI model releases — they are now equally important to your career trajectory

If You're an Investor

Sectors to watch:

- Overweight: Transmission infrastructure, nuclear energy operators (particularly those with AI offtake deals), natural gas peaker capacity, power electronics manufacturers

- Underweight: AI startups without locked compute contracts; data center REITs in water-stressed and grid-congested regions

- Avoid: Companies whose AI scaling roadmaps assume unconstrained energy availability — read the footnotes on their infrastructure disclosures

Specific angles:

- The uranium thesis just got a second-order AI catalyst that most energy analysts haven't fully priced

- Vistra, Constellation, and NRG are not just utility stocks anymore — they are AI infrastructure stocks

- International data center capacity — particularly in markets like Malaysia, UAE, and the Nordics — is becoming a genuine alternative to US hyperscale buildout

Portfolio positioning:

- Energy infrastructure deserves a higher allocation in any AI-exposed portfolio than traditional tech analysis suggests

- The physical constraint story is a structural multi-year theme, not a near-term catalyst trade

- Volatility in AI stocks will increasingly correlate with energy policy news — adapt your monitoring accordingly

If You're a Policy Maker

Why traditional tools won't work:

Conventional energy policy — renewable portfolio standards, carbon pricing, efficiency mandates — was designed for a world of gradual, distributed demand growth. AI data center demand is sudden, massive, geographically concentrated, and politically championed by the most powerful companies in the world. The regulatory frameworks don't match the problem.

What would actually work:

- Emergency interconnection queue reform: FERC has the authority to mandate "first-ready, first-served" queue reforms and has been moving too slowly — accelerate rulemaking or face congressional intervention

- National AI Energy Infrastructure Act: Model on the CHIPS Act — direct federal investment in transmission corridors specifically sized for data center corridors, with fast-track permitting tied to verified demand commitments

- Mandatory efficiency disclosure: Require all companies above a threshold AI compute consumption to publicly report PUE, water usage effectiveness, and actual grid vs. on-site generation ratios — currently voluntary disclosure creates no accountability

Window of opportunity: The 2026 midterm cycle is the last realistic legislative window before 2028 presidential politics consume all oxygen on energy policy. If transmission reform doesn't pass in 2026, the base case bottleneck economy becomes near-certain.

The Question Everyone Should Be Asking

The real question isn't whether the grid can keep up with AI demand.

It's whether the companies controlling AI infrastructure will use the energy bottleneck to permanently foreclose competition.

Because if the grid constraint persists at current trajectory, by 2028 the three or four hyperscalers who locked in grid access in 2021–2024 will control the physical substrate of the entire global AI economy. Not because they built the best AI. Because they got to the power first.

The closest historical precedent is Standard Oil, a company that didn't just refine petroleum, but controlled the pipelines that determined who could compete at all. That ended in 1911, when the Supreme Court ordered the company broken up under the Sherman Antitrust Act of 1890. Forty-one years after the company was founded.

Are we prepared to wait forty-one years?

The energy data says we have about eighteen months to decide.

What's your probability on the three scenarios? Drop your take in the comments.

If this analysis helped you think differently about AI's physical limits, share it. This framing is not in the mainstream conversation yet.