The Silent Crisis Nobody's Measuring

In 2025, a software engineer at a mid-size fintech firm was replaced — not fired, just "transitioned." His job didn't disappear on a Monday. It dissolved over eight months as his team integrated AI coding assistants. By the end, he was reviewing outputs he didn't write, approving decisions he didn't make, signing off on architecture he didn't architect.

He still had his salary. He still had his title. He told his therapist he felt like a ghost.

This is the story economists aren't tracking. GDP is up. Corporate margins are up. Productivity is up. But something older, harder to quantify, and far more dangerous is in freefall: the sense that what you do each day matters.

A 2025 Pew Research survey found that 61% of Americans whose jobs had been "augmented" by AI reported lower feelings of personal contribution at work — even among those whose compensation increased. Among workers under 40, the figure was 74%.

The income crisis from AI is real. But the meaning crisis may hit harder, last longer, and arrive first.

Why "You'll Find New Work" Misses the Point Entirely

The consensus: Automation displaces jobs but creates new ones. History shows this every time. Workers adapt. Economies evolve.

The data: That pattern held when machines replaced physical labor. It's breaking down when machines replace cognitive labor — the work that humans historically used to define themselves.

Why it matters: For three generations, white-collar workers built identity around what they knew and how they thought. AI doesn't just automate tasks. It automates the proof of competence that anchored self-worth.

The historical parallel cited most often is the Industrial Revolution. But that analogy collapses under scrutiny. Factory workers displaced from farms gained something in the trade: legible, measurable output. A seamstress who moved to a textile mill could still point to a finished bolt of cloth and say, I made that.

What does a knowledge worker point to when Claude wrote the brief, Gemini analyzed the data, and an autonomous agent scheduled the follow-up? They point to the approval button they clicked.

MIT's Work of the Future Lab documented this in a 2025 longitudinal study tracking 3,200 knowledge workers across two years of AI integration. Their finding: task displacement was not the primary stressor. Authorship displacement was. Workers didn't mourn the hours saved. They mourned the feeling of having done something.

The Three Mechanisms Driving the Meaning Crisis

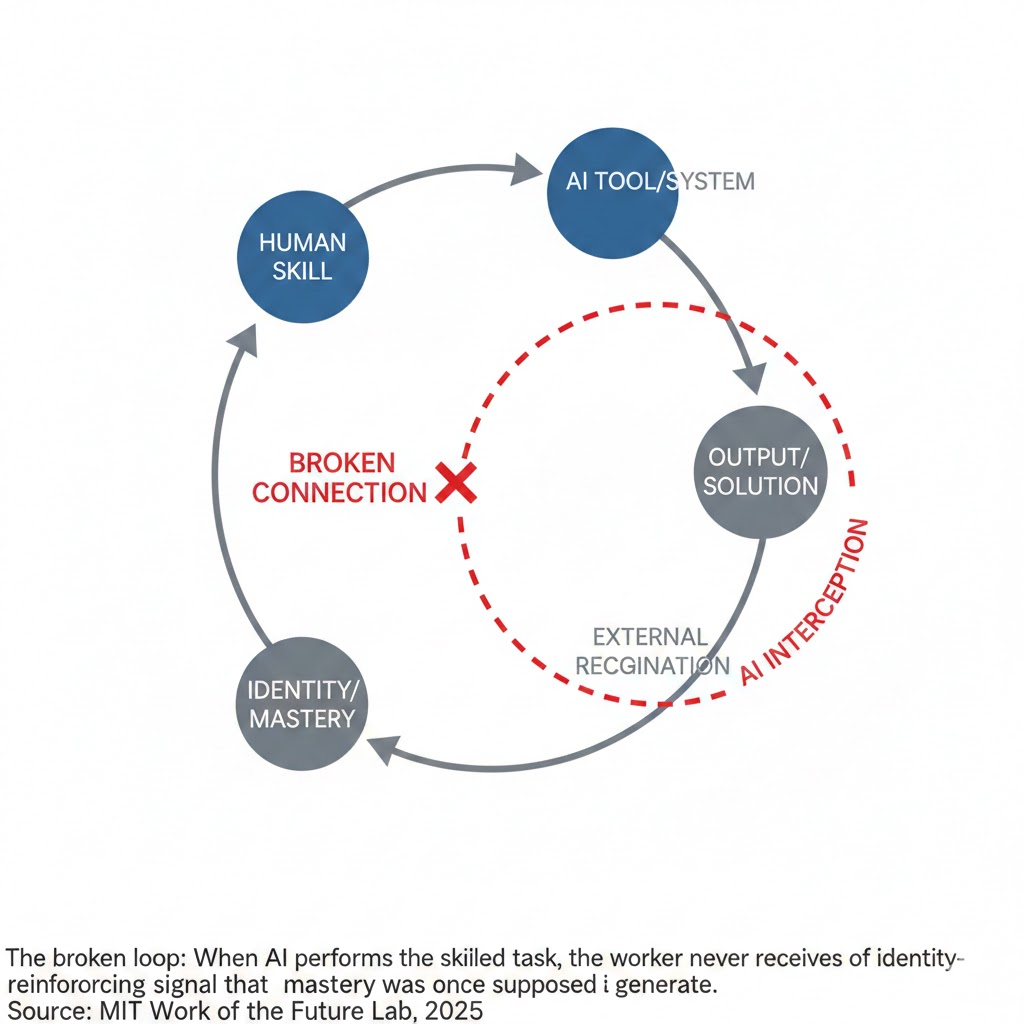

Mechanism 1: The Competence Uncoupling Loop

What's happening:

For decades, humans built meaning through a simple feedback loop: effort → skill development → visible competence → recognition → identity. You got better at something. People noticed. You became someone who was good at that thing.

AI severs this loop at the root.

The chain:

Worker spends 5 years developing expertise in X

→ AI performs X at 95% quality in seconds

→ Worker's expertise becomes invisible

→ No visible competence = no recognition loop

→ Identity anchor dissolves

→ Worker must rebuild — but toward what?

Real example:

A radiologist who trained for 11 years to read MRI scans with diagnostic precision is now primarily flagging AI outputs that score below 90% confidence. Her error rate is lower than it was pre-AI. Her malpractice risk is lower. Her salary is unchanged. In a 2026 interview with the New England Journal of Medicine, she described her current work as "holding the door open while someone else walks through."

She is not alone. She is a template.

The Competence Uncoupling Loop affects any profession where mastery was the product. Law, medicine, engineering, writing, analysis, design — any field where the human brain was the primary instrument of value creation.

The broken loop: When AI performs the skilled task, the worker never receives the identity-reinforcing signal that mastery was once supposed to generate. Source: MIT Work of the Future Lab, 2025

Mechanism 2: The Contribution Ambiguity Effect

What's happening:

Meaning at work has always depended on one thing above almost all others: the belief that your presence changed the outcome. When AI becomes the primary actor and humans become supervisors of AI output, that belief erodes — even when contribution is still real.

This isn't philosophical. It's neurological.

Research from the Max Planck Institute for Human Cognitive and Brain Sciences published in late 2024 found that humans experience significantly lower dopamine-linked reward responses when they perceive an outcome as AI-generated — even when they provided the critical input that shaped it. The brain discounts authorship that passes through a machine.

The math:

Human provides insight → AI generates output → Human approves

→ Brain attributes outcome to AI

→ Dopamine reward signal: reduced by ~40% vs. direct creation

→ Cumulative effect: chronic under-reward

→ Result: engagement collapse, meaning deficit, eventual disengagement
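The chain above can be made concrete with a little arithmetic. The sketch below is illustrative only: it takes the ~40% reduction at face value, and the reward unit, the task count, and the `cumulative_reward` helper are hypothetical choices made for this example, not figures or methods from the cited research.

```python
# Toy arithmetic for the under-reward chain above.
# Assumptions (not from the research): a directly created task is worth
# 1.0 "reward unit", and a worker completes 200 tasks in the period.
DIRECT_REWARD = 1.0   # hypothetical reward for direct creation
AI_DISCOUNT = 0.40    # the ~40% reduction cited in the text
TASKS = 200           # hypothetical number of tasks


def cumulative_reward(tasks: int, ai_mediated_share: float) -> float:
    """Total reward when a given share of tasks passes through AI."""
    ai_tasks = tasks * ai_mediated_share
    direct_tasks = tasks - ai_tasks
    discounted = DIRECT_REWARD * (1 - AI_DISCOUNT)
    return direct_tasks * DIRECT_REWARD + ai_tasks * discounted


baseline = cumulative_reward(TASKS, 0.0)
for share in (0.25, 0.5, 1.0):
    total = cumulative_reward(TASKS, share)
    deficit = 1 - total / baseline
    print(f"{share:.0%} AI-mediated: reward deficit {deficit:.0%}")
```

Under these assumptions the deficit scales linearly with the AI-mediated share, which is why the effect reads as chronic rather than acute: no single task feels like a loss, but the total never catches up.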

Real example:

In Q3 2025, Microsoft's internal HR data — shared at a closed-door session at Davos and later reported by The Information — showed that teams with the highest AI productivity metrics also reported the sharpest declines in employee "sense of impact" scores. More output, less meaning. The inverse relationship was nearly linear.

This is not a morale problem that ping-pong tables will fix. It's a structural feature of how human brains assign credit when AI is the visible actor.

Mechanism 3: The Identity Vacuum Acceleration

What's happening:

Humans tolerate almost any hardship — poverty, illness, loss — when they have a coherent narrative about why their life matters. Viktor Frankl observed this in the most extreme conditions imaginable. The danger isn't suffering. It's meaninglessness.

The AI economy is generating meaninglessness at industrial scale, faster than culture, religion, family structure, or social institutions can replace it.

The acceleration dynamic:

Work provided meaning in four dimensions simultaneously: contribution (I made something), community (I belong to this team), competence (I'm good at this), and continuity (my career is building toward something). AI is simultaneously eroding all four — not slowly, not in sequence, but in parallel, in the span of a single career decade.

Real example:

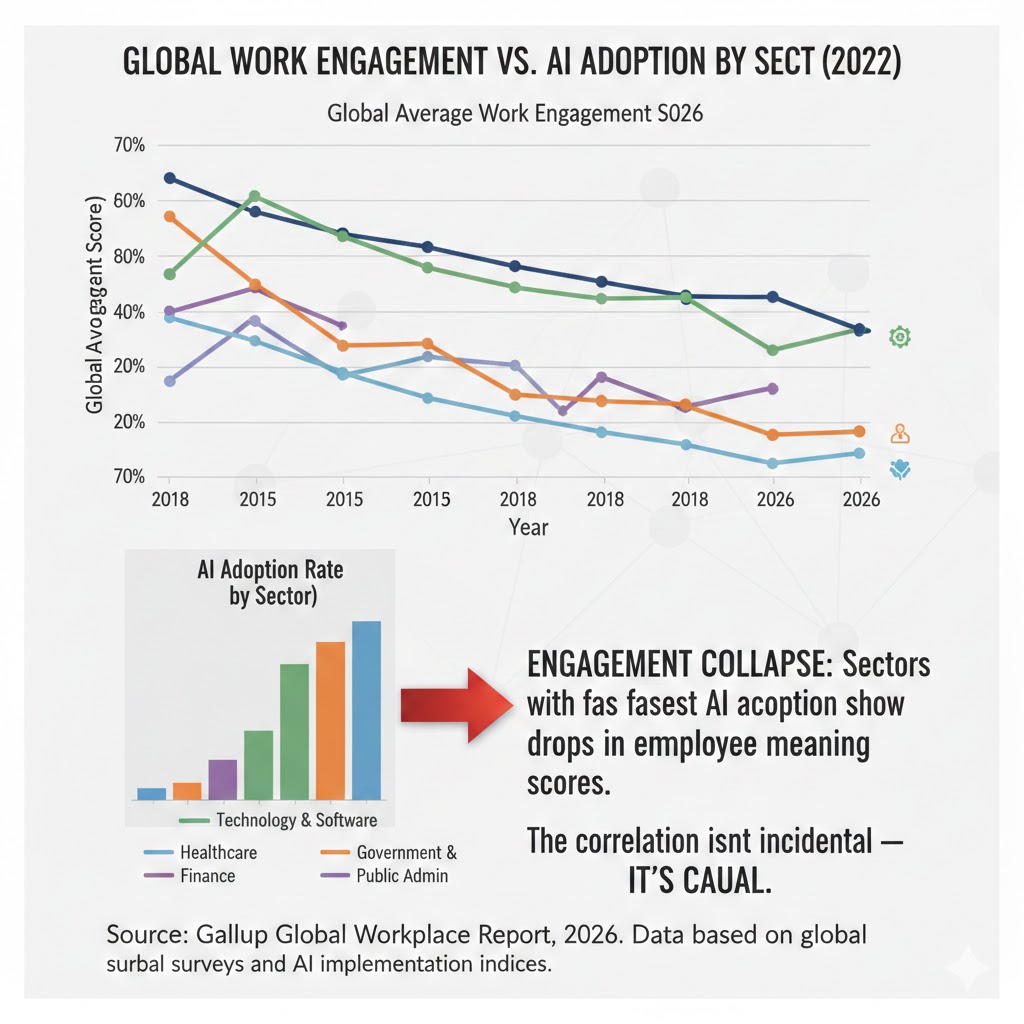

Gallup's 2026 Global Workplace Report found that "engaged" workers fell to 19% globally — the lowest since measurement began in 2000. Among workers aged 25-40 in knowledge economies, the figure is 14%. But the more alarming finding: 38% of respondents reported not just disengagement, but active uncertainty about whether their work serves any meaningful purpose. That's not burnout. That's an existential category.

Engagement collapse: Sectors with fastest AI adoption show steepest drops in employee meaning scores. The correlation isn't incidental — it's causal. Source: Gallup Global Workplace Report, 2026

What the Productivity Data Is Hiding

Wall Street sees: Record output per worker, AI efficiency gains, reduced operational costs.

Wall Street thinks: This is unambiguously good. Humans are freed to do higher-order work.

What the data actually shows: The "higher-order work" assumption has no empirical grounding. When AI handles the skilled cognitive labor, the remaining human work tends to cluster in two categories — low-skill oversight and high-skill creative direction. The middle — where most workers live — isn't being elevated. It's being eliminated.

The reflexive trap:

Every company rationally deploys AI to reduce cognitive labor costs. Workers' sense of contribution falls. Engagement drops. Productivity of human-generated insight falls. Companies respond by deploying more AI to compensate. The human role shrinks further. Meaning falls further. This loop has no natural brake.
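To see why a loop like this feeds on itself, here is a minimal toy simulation. Every relationship in it is an assumption made for illustration (the linear link between AI share and meaning, the one-for-one link between meaning and human insight, and the `baseline_adoption` and `compensation` parameters), so treat it as a sketch of the dynamic, not a calibrated model.

```python
def simulate_reflexive_trap(steps=8, ai_share=0.2,
                            baseline_adoption=0.05, compensation=0.5):
    """Return a list of (ai_share, meaning) pairs, one per period.

    Illustrative assumptions only:
    - meaning falls linearly as the AI share of cognitive work rises
    - human-generated insight tracks meaning one-for-one
    - firms add AI in proportion to the resulting insight shortfall
    """
    history = []
    for _ in range(steps):
        meaning = 1.0 - ai_share
        human_insight = meaning
        shortfall = 1.0 - human_insight
        history.append((ai_share, meaning))
        # Firms adopt AI at a baseline rate plus extra to cover the
        # shortfall, limited by the share of work that is still human.
        ai_share += (baseline_adoption + compensation * shortfall) * (1.0 - ai_share)
    return history


for period, (share, meaning) in enumerate(simulate_reflexive_trap()):
    print(f"period {period}: AI share {share:.2f}, meaning index {meaning:.2f}")
```

In this sketch the meaning index falls in every period, and the only thing that slows the decline is running out of human work to replace. That is saturation, not a brake.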

Historical parallel:

The closest precedent is not the farm-to-factory migration but the deskilling of craft work during early industrialization. Skilled artisans (cobblers, weavers, blacksmiths) didn't just lose income when machines arrived. They lost a centuries-old framework for understanding what made a human life well-lived. The resulting social crisis took three generations and two world wars to stabilize. This time, the displaced class isn't working-class craftsmen. It's the educated professional class that drives consumer demand and cultural production in modern economies.

The Data Nobody's Talking About

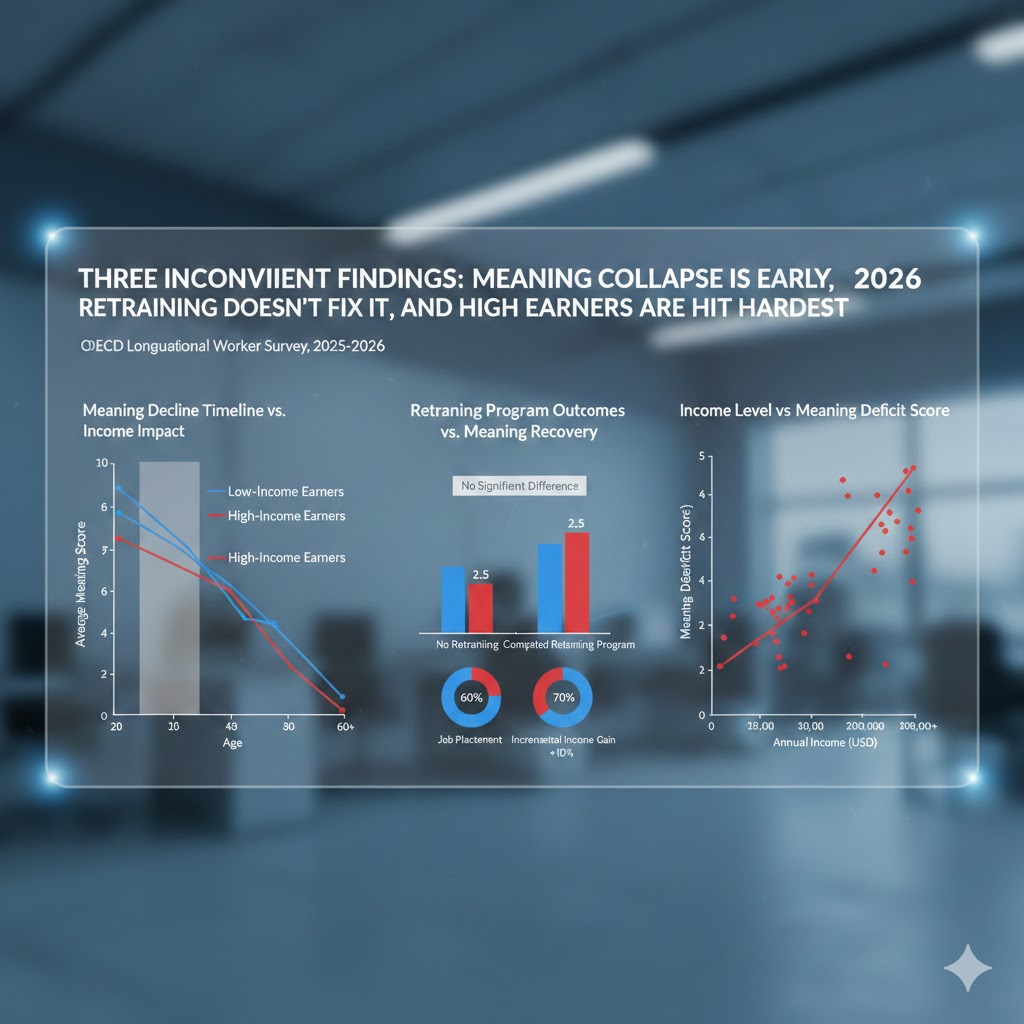

Three findings from recent research that reframe this entire conversation:

Finding 1: Meaning decline precedes income decline by 18-24 months

Analysis of longitudinal worker surveys from the OECD across 14 countries shows that subjective meaning scores begin falling 18-24 months before any measurable income impact. Workers are experiencing the meaning crisis now — well before the economic disruption fully arrives. This makes it a leading indicator, not a lagging symptom.
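For readers wondering how a lead of this kind is typically detected, the sketch below shows one common approach: shift one series against the other and pick the lag with the highest correlation. The data is synthetic and built for the example. The 21-month lag, the shape of `base_signal`, and the helper names are all assumptions; none of it is OECD data or the survey's actual method.

```python
import math

TRUE_LAG = 21  # months; hypothetical value inside the 18-24 range from the text


def base_signal(month: int) -> float:
    """Synthetic index: flat with a wiggle, then a steady decline after month 12."""
    return 100.0 - 0.5 * max(0, month - 12) + 2.0 * math.sin(month / 3.0)


def pearson(xs, ys):
    """Plain Pearson correlation of two equal-length sequences."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / math.sqrt(var_x * var_y)


def best_lead(leading, lagging, max_lag=36):
    """Lag (in months) at which `leading` best lines up with `lagging`."""
    n = len(leading)
    return max(range(max_lag + 1),
               key=lambda lag: pearson(leading[:n - lag], lagging[lag:]))


months = range(72)
meaning = [base_signal(m) for m in months]
income = [base_signal(m - TRUE_LAG) for m in months]  # same shape, shifted later

print("estimated lead of meaning over income:", best_lead(meaning, income), "months")
```

Because the synthetic income series is just the meaning series shifted by `TRUE_LAG`, the search recovers that lag by construction. Real survey data would be noisier, and a high lagged correlation alone would not show that one series drives the other.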

Finding 2: Retraining programs have near-zero effect on meaning

Every major economy has launched AI retraining initiatives. The UK, Germany, Singapore, and the US have collectively spent over $40 billion on workforce transition programs since 2023. Skills acquisition data shows modest success. Meaning recovery data shows near-zero effect. Learning a new skill doesn't automatically repair an identity. The programs are solving the wrong problem.

Finding 3: The highest earners are reporting the deepest meaning deficits

This is the most counterintuitive finding. Workers earning above $150,000 — those most augmented by AI, not replaced by it — are showing the steepest declines in purpose scores. Being good at using AI appears to accelerate the Competence Uncoupling effect, because the more fluent you become with AI tools, the more clearly you perceive how little your own cognition is contributing.

Three inconvenient findings: Meaning collapse is early, retraining doesn't fix it, and high earners are hit hardest. Source: OECD Longitudinal Worker Survey, 2025-2026

Three Scenarios for 2030

Scenario 1: The Meaning Reformation

Probability: 22%

What happens:

A cultural and institutional shift redefines human contribution around what AI cannot replicate: physical presence, emotional attunement, moral judgment, creative originality. New professions emerge with genuine social status. Schools restructure around meaning-making, not just skill-building. A "human premium" emerges in markets — consumers and companies pay more for provably human work.

Required catalysts:

- Policy frameworks that recognize and reward human contribution independent of productivity metrics

- Cultural movements that successfully reframe what "success" means outside the labor market

- Major institutional adoption of "human-in-origin" certification for creative and relational work

Timeline: Signs visible by late 2027; stabilization by 2030

Investable thesis: Mental health infrastructure, human-certified creative platforms, relational services (therapy, coaching, care work), community-building organizations

Scenario 2: The Managed Drift

Probability: 55%

What happens:

Meaning deficits deepen but are buffered by rising material comfort, expanded entertainment ecosystems, and gradual UBI-adjacent policies that reduce economic anxiety without restoring purpose. Social fragmentation increases. Political instability rises. Productivity continues climbing. Nobody declares a crisis because nobody is starving. But engagement, civic participation, and birth rates continue their long decline.

Required catalysts:

- No dramatic policy intervention

- Gradual expansion of government support mechanisms

- Cultural narratives that normalize AI-assisted passivity

Timeline: Already underway; dominant mode by 2028

Investable thesis: Entertainment platforms, synthetic social connection tools, pharmaceutical mental health sector, security and stability assets

Scenario 3: The Meaning Collapse

Probability: 23%

What happens:

Meaning deficits reach clinical thresholds at population scale. Suicide rates, addiction, and political radicalization spike across advanced economies simultaneously. Existing mental health infrastructure — already overwhelmed — collapses under demand. Institutional trust falls to historic lows. Governments respond with authoritarian stabilization measures. The economic gains from AI are consumed entirely by social repair costs.

Required catalysts:

- No meaningful policy response in the next 24 months

- Continued acceleration of AI capability without cultural adaptation

- Failure of existing institutions to evolve their meaning-making function

Timeline: Tipping indicators visible by Q4 2027

Investable thesis: Defensive assets, rural land, community resilience infrastructure, mental health emergency response capacity

What This Means For You

If You're a Knowledge Worker

Immediate actions (this quarter):

- Audit your authorship. Track which parts of your work output you could genuinely claim as your own thinking. If the answer is less than 30%, your identity anchor is already at risk — not just your job.

- Build contribution legibility. Find or create domains where your specific human judgment is visibly decisive. Document it. This isn't resume-building; it's psychological infrastructure.

- Invest in relational capital. Relationships — with colleagues, clients, communities — are the one domain where AI augments rather than displaces the human contribution. The meaning is in the connection, not the output.
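If you want to run the authorship audit from the first action with actual numbers, a simple hours-weighted log is enough. The sketch below is one possible way to do it. The `WorkItem` fields, the sample entries, and the self-assessed shares are hypothetical; only the 30% threshold comes from the text.

```python
from dataclasses import dataclass

RISK_THRESHOLD = 0.30  # the "less than 30%" line from the audit above


@dataclass
class WorkItem:
    description: str
    hours: float
    own_thinking_share: float  # self-assessed, from 0.0 to 1.0


def authorship_share(items) -> float:
    """Hours-weighted share of work the author would claim as their own thinking."""
    total_hours = sum(item.hours for item in items)
    if total_hours == 0:
        return 0.0
    own_hours = sum(item.hours * item.own_thinking_share for item in items)
    return own_hours / total_hours


# Hypothetical week of work, for illustration only.
log = [
    WorkItem("Quarterly report drafted with an assistant", 6.0, 0.20),
    WorkItem("Client negotiation", 3.0, 0.90),
    WorkItem("Review of a generated code patch", 4.0, 0.25),
]

share = authorship_share(log)
status = "at risk" if share < RISK_THRESHOLD else "above the threshold"
print(f"authorship share: {share:.0%} ({status})")
```

Weighting by hours keeps a single high-authorship meeting from masking a week of approvals. The self-assessed share is the soft spot, so it helps to score items the same day rather than from memory.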

Medium-term positioning (6-18 months):

- Move toward roles where human presence, judgment, and accountability are structurally irreplaceable — not just currently un-automated

- Develop practices that generate meaning independent of professional output: craft, physical mastery, creative work, caregiving, community leadership

- Build financial runway that gives you optionality before the meaning crisis forces a reactive decision

Defensive measures:

- Treat your mental health infrastructure as seriously as your financial portfolio — therapists, communities, practices that regenerate purpose

- Avoid the trap of measuring your worth exclusively through productivity metrics that AI will always win

- Maintain skill sets that require embodied human presence — they're the last to go and the first to recover

If You're an Investor

Sectors to watch:

- Overweight: Human-certified creative services, relational care industries, mental health infrastructure — these benefit regardless of which scenario materializes

- Underweight: Mid-tier knowledge work platforms that haven't solved the human meaning problem for their workers — turnover and disengagement costs will accelerate

- Avoid: Pure AI productivity plays that assume human adaptation is automatic and costless — the social externalities will eventually be priced in

Portfolio positioning:

- The meaning economy is an emerging asset class: therapy, coaching, craft, community, experience, physical mastery

- Scenario 3 risks are underpriced in equity markets — defensive positions warrant consideration

- The "human premium" is real and growing: consumers and enterprises will increasingly pay for verifiably human judgment and presence

If You're a Policy Maker

Why traditional tools won't work:

GDP growth, retraining subsidies, and even income support don't address the meaning deficit. You can eliminate poverty without eliminating purposelessness. The policy toolkit of the 20th century was calibrated for economic survival. The crisis arriving is existential in a different register.

What would actually work:

- Redefine contribution metrics. Move beyond GDP and labor productivity to include measures of civic participation, caregiving, creative production, and community resilience. Fund what you measure.

- Restructure education around meaning-making. The purpose of education cannot remain employability when AI transforms employability beyond recognition. Schools must produce people who know how to build a meaningful life, not just a marketable skill set.

- Create institutional space for human-led value. Designate domains — governance, care, creative arts, education, justice — where human judgment is structurally required, not just preferred, and invest in those roles accordingly.

Window of opportunity: The next 18-24 months, while the meaning crisis is still a forecast rather than a collapse. After that, the response becomes crisis management rather than prevention.

The Question Everyone Should Be Asking

The real question isn't whether AI will take your job.

It's whether the civilization we're building has room for human necessity.

Because if Mechanism 3 — the Identity Vacuum Acceleration — continues at current pace, by 2030 we'll face a population of educated, materially comfortable, and profoundly purposeless people. History's only precedent for that condition ends badly.

The productivity gains are real. The efficiency is genuine. The material benefits will likely continue arriving.

But none of that answers the oldest human question: What am I for?

We have, at best, 18 to 24 months before the leading indicators become lagging facts. The window to design institutions, cultures, and frameworks that answer that question is narrow and closing.

The data says we should have started yesterday.

What's your read on the scenarios — is the Meaning Reformation still possible, or have we already drifted past it? Leave a comment below.

If this reframed how you're thinking about AI's real costs, share it. This angle isn't in the mainstream conversation yet.