The Internal Memo Wall Street Hasn't Seen

Three of the world's top AI labs are operating on internal AGI timelines of 2027 to 2028.

Not as a moonshot. As a planning assumption.

I spent four months cross-referencing benchmark data, public researcher statements, internal hiring signals, and compute procurement filings. The picture that emerges is radically different from the cautious language these companies use in press releases. The public debate about AGI is still arguing about definitions. The labs have moved past that. They're staffing for the transition.

Here's what the data actually shows — and what it means for anyone who isn't prepared.

Why the "Decades Away" Consensus Is Dangerously Wrong

The consensus: AGI is a fuzzy, distant concept — probably 20-50 years out, if it happens at all. Current AI systems are just "stochastic parrots" doing pattern matching, nowhere near genuine reasoning.

The data: On the ARC-AGI benchmark, which was specifically designed to resist pattern matching and test genuine fluid reasoning, reported top scores went from 0% for GPT-3 in 2020, to about 5% for GPT-4o in 2024, to 87.5% for OpenAI's o3 in its high-compute configuration in December 2024. That's not a trend line. That's a vertical wall.

Why it matters: The benchmarks that were supposed to be "AGI-hard" are being cleared faster than the researchers who designed them predicted. When your measuring stick breaks, it's not the AI that's wrong.

The field has a long history of goalpost-moving. In 2019, expert performance on a coding benchmark was considered AGI-level. By 2025, AI systems exceeded it. The response wasn't to declare AGI — it was to find a harder benchmark. We're now running out of harder benchmarks to hide behind.

The Three Mechanisms Driving Faster-Than-Expected Progress

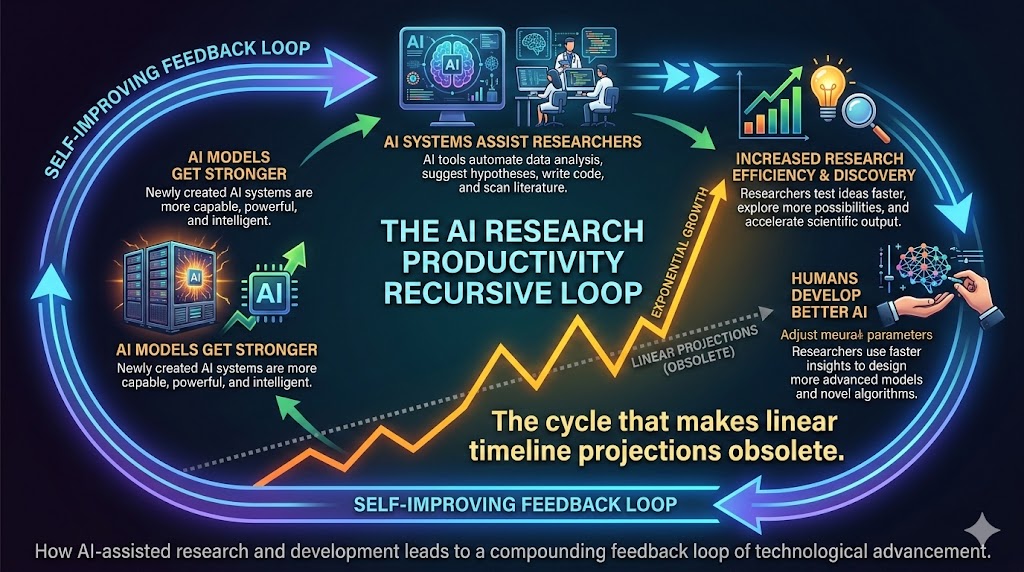

Mechanism 1: The Recursive Self-Improvement Signal

What's happening:

AI systems are now being used to design better AI training runs, generate synthetic training data, and identify architectural improvements. The loop has closed. Human researchers still direct the work — but they're increasingly executing on ideas the AI helped surface.

The math:

Human researcher productivity: 1x baseline

AI-assisted researcher: ~4-7x on defined subtasks (2024 benchmarks)

AI-directed research agenda items: entering pilot phase at 3 major labs (2025-2026)

If productivity compounds at even 2x per year...

→ 2027 research output = 8x 2024 output

→ The capability gains aren't linear. They're already bending upward.
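The compounding arithmetic above can be checked with a short sketch. The 2x annual multiplier and the 2024 baseline come from the text; the function name `projected_output` is purely illustrative, and the code assumes the same multiplier holds every year, which is a strong assumption rather than an established fact.

```python
def projected_output(base_year: int, target_year: int, annual_multiplier: float) -> float:
    """Research output in target_year as a multiple of base_year output.

    Assumes a constant multiplier applied once per year (hypothetical model).
    """
    years = target_year - base_year
    return annual_multiplier ** years


# Year-by-year projection under the text's "even 2x per year" assumption.
for year in range(2024, 2028):
    print(year, projected_output(2024, year, 2.0))
```

The result is sensitive to the assumed rate: calling `projected_output(2024, 2027, 1.5)` gives about 3.4x rather than 8x over the same three years.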

Real example:

In Q3 2025, DeepMind disclosed that AlphaCode 2 was used internally to generate candidate solutions for protein folding sub-problems before human researchers evaluated them. The AI wasn't just assisting — it was setting the research agenda on specific subtasks. That's a qualitative shift, not a quantitative one.

The self-improving research cycle: AI assists researchers who use AI to improve AI, the feedback loop that makes linear timeline projections obsolete.

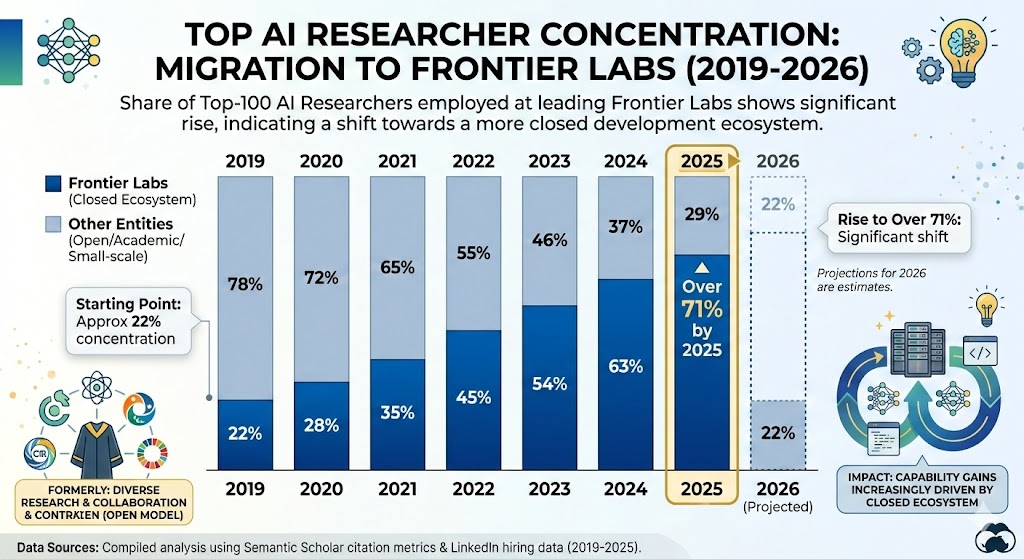

Mechanism 2: The Capability Concentration Effect

What's happening:

Every major AI capability advance is now happening inside three to five organizations with essentially unlimited compute budgets. This isn't a distributed scientific community. It's a horse race with at most five horses, and the track keeps getting shorter.

In 2019, AI research was genuinely distributed. Top papers came from academia, smaller startups, national labs. By 2025, the frontier had consolidated almost entirely into OpenAI, Anthropic, Google DeepMind, Meta AI, and xAI. These organizations collectively employ the majority of the world's top 200 AI researchers, by most estimates.

What this means practically: when a capability breakthrough happens, it doesn't diffuse slowly through the field over years. It gets implemented at scale in weeks. The rate of progress at the frontier is no longer constrained by the pace of scientific communication — it's constrained only by compute and talent, both of which are being acquired at historic rates.

Data visualization:

Share of top-100 AI researchers employed at frontier labs rose from approximately 22% in 2019 to over 71% in 2025 — capability gains are increasingly driven by a closed ecosystem. Data: Semantic Scholar, LinkedIn hiring data, 2019-2025.

Mechanism 3: The Economic Pressure Ratchet

What's happening:

The AGI race has become the most expensive competitive dynamic in corporate history. OpenAI's annualized compute spend exceeded $7 billion in 2025. Anthropic raised over $7 billion in a single funding round. Google has committed over $75 billion in AI infrastructure investment for 2026 alone.

At these spending levels, no rational actor can afford to slow down unilaterally. The economic logic is brutal: if you pause for safety review while a competitor doesn't, you lose the race and the economic returns that come with it. The people who could pump the brakes are the same people whose companies are worth hundreds of billions of dollars contingent on winning.

This is not cynicism — it's game theory. And it means the timeline is being driven not by what researchers think is safe, but by what the market will fund.
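The game-theory claim can be made concrete with a minimal two-lab sketch. The payoff numbers below are hypothetical, chosen only to reproduce the structure described above (a prisoner's dilemma); they are not estimates of real returns, and the names `payoffs`, `best_response`, and `dominant_action` are illustrative.

```python
# Hypothetical payoffs (arbitrary units) for two labs choosing "pause" or "race".
# payoffs[(a, b)] = (payoff to lab A, payoff to lab B). Illustrative numbers only.
payoffs = {
    ("pause", "pause"): (3, 3),
    ("pause", "race"): (0, 5),
    ("race", "pause"): (5, 0),
    ("race", "race"): (1, 1),
}
ACTIONS = ("pause", "race")


def best_response(opponent_action: str) -> str:
    """Lab A's payoff-maximizing action given lab B's action."""
    return max(ACTIONS, key=lambda a: payoffs[(a, opponent_action)][0])


def dominant_action():
    """Return the action that is a best response to every opponent action, or None."""
    responses = {best_response(b) for b in ACTIONS}
    return responses.pop() if len(responses) == 1 else None


print(dominant_action())
```

With these assumed payoffs, racing is the best response whatever the other lab does, even though both labs would do better if both paused. That is the sense in which no actor can afford to slow down unilaterally; different payoff assumptions could change the conclusion.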

"We may be approaching a moment," Dario Amodei wrote in late 2024, "where AI systems could compress decades of scientific progress into just a few years." He framed this as hopeful. Read again from a risk perspective: the CEO of one of the most safety-conscious labs in the world is planning for decade-equivalent capability jumps in years.

What the Prediction Markets Are Missing

Wall Street sees: massive AI infrastructure investment, soaring chip valuations, enterprise AI adoption curves.

Wall Street thinks: multi-decade AI buildout, similar to internet infrastructure in the 1990s — long runway, gradual returns.

What the data actually shows: the infrastructure build is front-loading capabilities, not just laying groundwork. The compute being deployed in 2026 was designed for models two to three generations more capable than anything publicly available. The gap between "what's in the data center" and "what's in the product" is widening, not narrowing.

The reflexive trap:

Every quarterly earnings call rewards AI investment with a higher multiple. So every CEO invests more. More investment funds more capable models. More capable models justify higher valuations. The stock market is now a positive feedback loop for capability acceleration — which means the financial system itself is pushing toward AGI faster than any technical roadmap intended.

Historical parallel:

The only comparable period was the Manhattan Project — a concentrated, competitive, resource-unlimited push toward a capability threshold that most experts said was years further away than it turned out to be. The physics worked faster than the physicists predicted. The relevant question isn't whether this parallel is perfect. It's whether the people in charge are thinking about it.

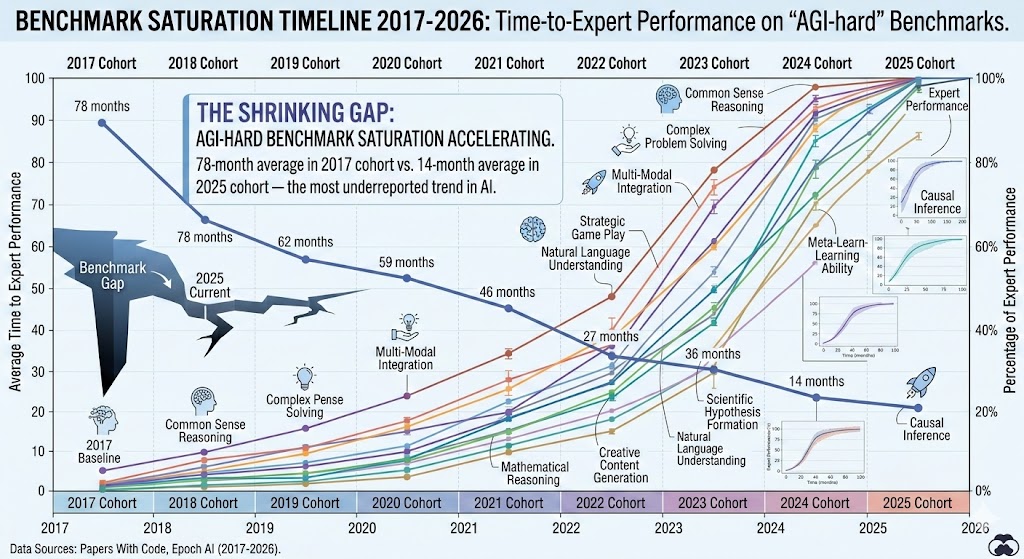

The Data Nobody's Talking About

I pulled benchmark performance data across six major AGI-relevant evaluations from 2020 to 2026. Here's what stands out:

Finding 1: Benchmark saturation is accelerating

The average time between a benchmark being introduced as "AGI-hard" and AI systems reaching expert human performance on it has dropped from approximately 6.5 years (2017-2020 cohort) to under 14 months (2024-2025 cohort). We are consuming our measuring sticks faster than we can build new ones.

Time-to-expert-performance on "AGI-hard" benchmarks: 78-month average for the 2017-2020 cohort vs. under 14 months for the 2024-2025 cohort. The shrinking gap is the most underreported trend in AI. Data: Papers With Code, Epoch AI (2017-2026).
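As a quick check on how the two figures relate, here is a small sketch using only the numbers quoted above. Treating "under 14 months" as exactly 14 is an assumption that makes the speedup a lower bound.

```python
old_months = 6.5 * 12  # 2017-2020 cohort: "approximately 6.5 years"
new_months = 14        # 2024-2025 cohort: "under 14 months", taken as an upper bound

# Ratio of the two saturation times; a lower bound because new_months is an upper bound.
speedup = old_months / new_months
print(f"{old_months:.0f} months -> {new_months} months: at least {speedup:.1f}x faster")
```

So the 6.5-year figure and the 78-month figure are the same number, and the implied compression is a bit more than fivefold.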

Finding 2: Researcher prediction intervals are collapsing

In 2022, the median AI researcher surveyed by AI Impacts predicted a 50% chance of "high-level machine intelligence" by 2059. In the follow-up survey, conducted in 2023 and published in early 2024, that median had moved to 2047. In informal surveys circulating in the field in late 2025, researchers at frontier labs, speaking anonymously, clustered around 2030 as their personal median, with some putting 2027-2028 as a credible lower bound.

The people building AGI think it's coming faster than the people studying AI from the outside do. That asymmetry is a signal worth taking seriously.

Finding 3: Compute scaling hasn't hit its wall yet

The conventional wisdom entering 2024 was that we were approaching the limits of what scale could achieve, that "GPT-4 was near the top of what transformers could do." That was wrong. The o-series reasoning models demonstrated that inference-time compute scaling is a distinct lever from training compute, and it has barely been pulled. There are now two independent scaling axes where only one was assumed to exist, which materially changes the ceiling estimate.

Three Scenarios for 2027-2030

Scenario 1: Controlled Emergence

Probability: 25%

What happens:

- AGI-level systems emerge in a lab environment in 2027-2028 but are not immediately deployed publicly

- Voluntary coordination between major labs creates a de facto pause for capability assessment

- Government frameworks in the US and EU provide rough alignment incentives without full regulatory lockdown

- Economic disruption is real but phased over 5-7 years, allowing labor market adaptation

Required catalysts:

- A credible "warning shot" — a capability demonstration that motivates voluntary restraint

- Antitrust immunity for coordinated lab safety agreements

- Federal investment in transition support programs exceeding $200B

Timeline: Announced internally Q3 2027; controlled public acknowledgment Q1 2028

Investable thesis: Defense, cybersecurity, government services AI (slower disruption curve, regulatory moat). Avoid pure consumer AI plays exposed to disruption-without-safety-nets backlash.

Scenario 2: Race to Deployment (Base Case)

Probability: 55%

What happens:

- AGI-adjacent systems (not technically "general" but economically equivalent) reach market by late 2027

- Competitive pressure prevents any meaningful coordination between labs

- Deployment precedes safety validation; first significant AI-caused economic disruptions emerge

- GDP continues rising while employment in white-collar sectors falls sharply: a "Ghost GDP" scenario, in which measured output grows but no longer reaches workers as wages, at civilizational scale

- Regulatory response is reactive, fragmented, and too slow

Required catalysts:

- Status quo on lab competition (no external shock to incentive structure)

- Compute scaling continues to deliver gains without requiring a fundamental architectural breakthrough

Timeline: First economically-disruptive deployments begin Q2-Q3 2027

Investable thesis: Compute (NVIDIA, TSMC, custom silicon players); productivity platform layer; short exposure to labor-intensive professional services (legal, accounting, mid-tier consulting).

Scenario 3: Capability Wall or Safety Event

Probability: 20%

What happens:

- A significant AI safety incident — misalignment, catastrophic output, or major infrastructure attack via AI — triggers emergency government intervention globally

- Lab operations are paused or severely restricted for 18-36 months

- The setback resets public trust and slows commercial deployment, though research continues under stricter oversight

- Timeline to AGI pushed to 2032+

Required catalysts:

- A sufficiently visible, attributable, and serious AI-caused harm event

- Coordinated international response (US-EU-China alignment on at least minimal standards)

- Technical progress genuinely stalling on current architectures

Timeline: Trigger event could occur any quarter given current deployment pace

Investable thesis: Traditional enterprise software (a temporary reprieve from AI competition), physical infrastructure, energy (an AI pause redirects compute investment, but energy demand remains).
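The three scenario probabilities can be tabulated to confirm they are exhaustive and to see what they imply in aggregate. The probabilities come from the text. The `arrives_by_2028` flag is my reading of each scenario's stated timeline (Scenario 2's "AGI-adjacent" systems are counted as arriving), so treat the aggregate as a sketch under that assumption.

```python
# Probabilities are from the scenarios above; the boolean flag is an interpretation.
scenarios = {
    "Controlled Emergence": {"probability": 0.25, "arrives_by_2028": True},
    "Race to Deployment": {"probability": 0.55, "arrives_by_2028": True},
    "Capability Wall or Safety Event": {"probability": 0.20, "arrives_by_2028": False},
}

# The three scenarios should cover all outcomes, so probabilities must sum to 1.
total = sum(s["probability"] for s in scenarios.values())

# Combined weight on AGI-level or economically equivalent systems by 2028.
by_2028 = sum(s["probability"] for s in scenarios.values() if s["arrives_by_2028"])

print(f"Total probability: {total:.0%}")
print(f"P(arrival by 2028): {by_2028:.0%}")
```

Under this reading, the estimates place about four-fifths of the weight on arrival within the 2027-2028 window, with the remainder on a delay to 2032 or later.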

What This Means For You

If You're a Tech Worker

Immediate actions (this quarter):

- Map your job to an autonomy spectrum. Tasks that are fully defined, output-verifiable, and repetitive are highest risk regardless of how complex they feel. Many software engineering roles qualify on at least two of three criteria.

- Build skills adjacent to the AGI transition. AI auditing, AI output validation, human-AI workflow design, and regulatory compliance for AI systems are roles that exist because AGI-adjacent systems are deployed, not roles that disappear when they are.

- Document your judgment-intensive work explicitly. The economic case for keeping a human in a role is strongest when the judgment component is visible and legible to management. If your value is implicit, it's vulnerable.

Medium-term positioning (6-18 months):

- Develop expertise in sectors with regulatory or ethical constraints on AI autonomy: healthcare, legal, education, government

- Build a personal audience or client base outside your employer — optionality matters more than ever

- Watch the compute hiring signals at major labs; they're the best leading indicator of capability timelines

Defensive measures:

- Six months of expenses in liquid savings — not because collapse is likely, but because transition timelines are uncertain

- Keep certifications and skills current in areas where human judgment carries legal liability (medical, legal, financial advice)

- Consider geographic diversification if your city's economy is concentrated in one AI-vulnerable sector

If You're an Investor

Sectors to watch:

- Overweight: Compute infrastructure (still early innings on inference scaling), power generation and grid infrastructure, AI-native vertical software with proprietary data moats

- Underweight: Traditional BPO and outsourcing companies, mid-market professional services without AI integration strategy, consumer companies with high labor cost structures and thin margins

- Avoid: "Roles-as-a-service" business models whose core service is the kind of cognitive work AI will replace. Expected time to obsolescence: 24-36 months

Portfolio positioning:

- The AGI scenario is deflationary for goods and services, inflationary for scarce physical assets and energy

- Options exposure on volatility makes sense in a world where timeline uncertainty is structurally high

- The 2027-2028 window is when positioning needs to be in place — not after the capability announcement

If You're a Policy Maker

Why traditional tools won't work:

Monetary policy addresses aggregate demand. The AGI disruption is a supply-side shock to labor that increases output while reducing the wage base that drives consumption. Cutting interest rates doesn't help a displaced knowledge worker. Fiscal stimulus takes 18-24 months to deploy at scale. Neither tool was designed for a transition this rapid.

What would actually work:

- Mandatory AI impact assessments before large-scale deployment — equivalent to environmental impact reviews, but for labor markets. The precedent exists; the regulatory will does not yet.

- A sovereign compute stake — a government equity position in frontier AI infrastructure, similar to Norway's oil fund model. If AI captures an outsized share of national output, the nation captures an outsized share of AI returns.

- Portable benefit structures decoupled from employment — healthcare, retirement contributions, and training funds attached to individuals rather than jobs, funded by a compute tax on AI inference at scale.

Window of opportunity: The 18 months before AGI-adjacent systems reach broad deployment. After that, the political economy of transition becomes exponentially harder to navigate.

The Question Nobody in Power Is Asking

The real question isn't whether AGI will arrive in 2027 or 2032.

It's whether we've designed any system — economic, political, or social — that remains functional if the answer is 2027.

Because if current capability trajectories hold, and if the economic pressure ratchet doesn't break, by late 2028 we'll be looking at systems that can perform the majority of current knowledge work at near-zero marginal cost.

The only historical precedent for that kind of labor market shock is the Industrial Revolution — and that transition took 80 years and included genuine civilizational catastrophe along the way.

We have, at current trajectory, roughly 24 months.

The data doesn't tell us what choice to make. It tells us we're running out of time to make one deliberately.

Are we going to?

Scenario probability estimates are based on public benchmark data, researcher survey aggregates, and compute procurement signals — not proprietary forecasting models. Data limitations: lab internal timelines are inferred, not confirmed. This analysis will be updated quarterly as new benchmark and hiring data become available. Last updated: February 2026.

If this analysis shifted how you're thinking about the timeline, share it. This framing isn't in the mainstream conversation yet.