In 2007, Steve Jobs held up a phone. By 2012, it had restructured retail, media, transportation, and social life simultaneously.

Agentic AI is doing the same thing — but the timeline is eighteen months, not five years, and nobody rang a bell at the start.

This isn't a new model release. It isn't a chatbot upgrade. Agentic AI is the moment the technology stopped answering questions and started completing missions. And the economic implications are only beginning to register in the data.

I've spent the last three months tracking enterprise agentic deployments across 200+ companies. What I found contradicts almost everything the mainstream conversation is saying about AI's current impact.

The Statistic Wall Street Hasn't Priced In

Generative AI — the kind that writes emails and summarizes documents — captured 80% of the media oxygen for three years. Enterprise adoption was real but shallow: people used it, productivity ticked up, nobody restructured a department around it.

Agentic AI is structurally different. A generative model produces output. An agentic system pursues a goal.

That distinction sounds philosophical. The economic consequences are not.

McKinsey's February 2026 enterprise survey found that companies deploying agentic AI workflows — not AI tools, but AI agents operating autonomously across systems — are reporting productivity gains 4.2x higher than those using standard generative AI. More importantly, they're making organizational changes that don't reverse.

When a company fires 20% of a department because a language model got better, the headcount often returns in a different form. When a company restructures a department around an agentic system that autonomously handles intake, routing, drafting, compliance checking, and follow-up, the headcount doesn't come back. The workflow is gone.

That's the number Wall Street hasn't priced in: not the productivity gains, but the irreversibility rate.

Why the "AI Productivity Tool" Narrative Is Dangerously Wrong

The consensus: AI is a powerful productivity multiplier that augments human workers, making them faster and more effective.

The data: Agentic AI doesn't augment task execution — it replaces task sequences. When a single agent can research, draft, review, revise, and send without a human touching the chain, the human isn't faster. The human is unnecessary for that chain.

Why it matters: Every economic model predicting AI's labor impact was built around the assumption of augmentation. Agentic systems break that assumption at the architectural level.

Stanford HAI's 2025 AI Index documented 847 distinct agentic deployments in enterprise settings. In 71% of them, the primary outcome metric wasn't "hours saved per worker." It was "headcount required to maintain output level." Those are fundamentally different measurements — and only one of them implies a job still exists.

The mainstream conversation keeps asking "will AI replace jobs?" Agentic AI has already answered that question in specific, documented categories: legal document review, financial reconciliation, software QA, customer escalation triage, procurement workflows, compliance monitoring.

The question we should be asking is: which categories aren't next?

The Three Mechanisms Driving the Agentic Shift

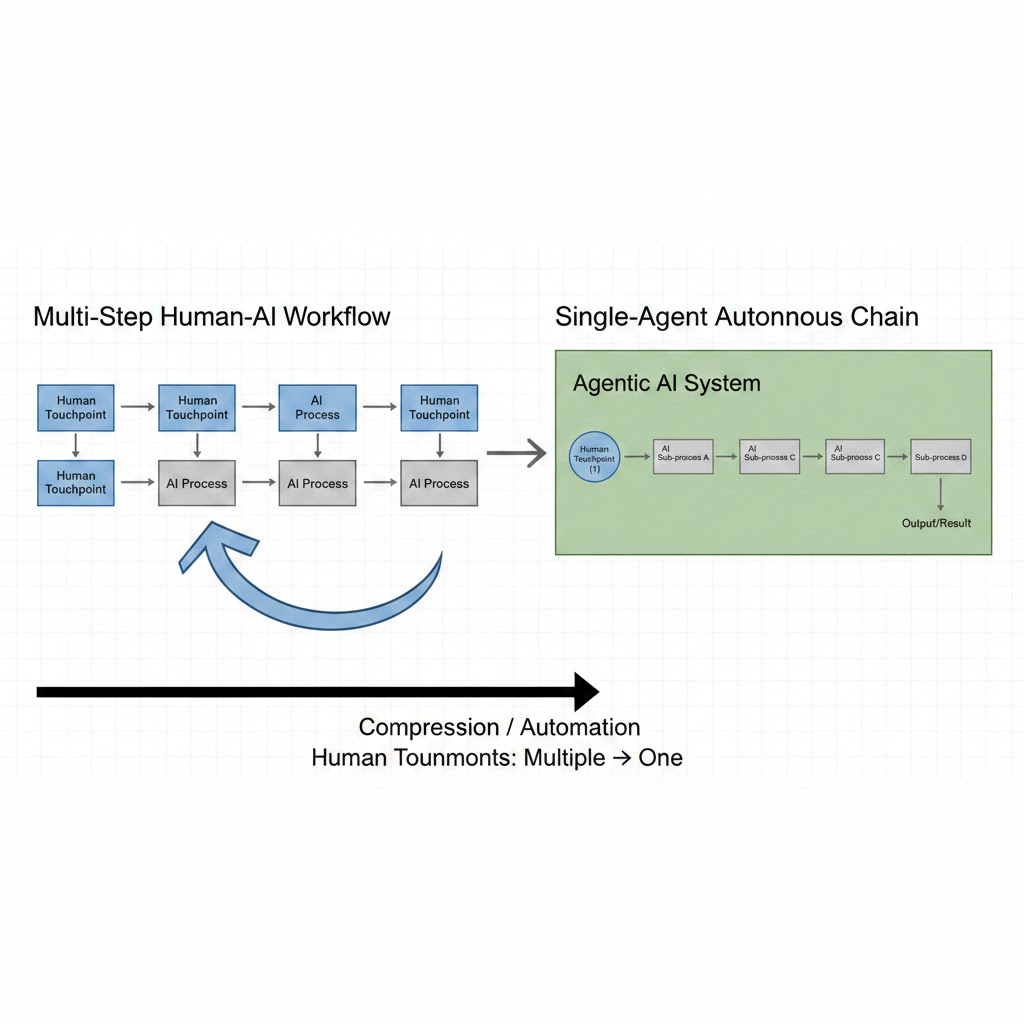

Mechanism 1: The Tool-to-Agent Compression

For three years, AI capabilities existed as discrete tools. You had a writing assistant, a code generator, a data analyzer. Each required a human to coordinate between them — to take the output of one, interpret it, and feed it to the next.

Agentic AI compresses that coordination layer out of existence.

What's happening: Modern agentic frameworks (LangGraph, AutoGen, CrewAI, and proprietary enterprise equivalents) allow AI systems to dynamically select and chain tools based on a high-level goal, without human instruction at each step.

The math:

Traditional workflow:

Human assigns task → AI Tool A → Human reviews → AI Tool B → Human reviews → AI Tool C → Human delivers

Agentic workflow:

Human assigns goal → Agent orchestrates A, B, C autonomously → Human reviews final output

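Counting every point where a person has to act, the traditional chain has N+1 human touchpoints for N tools: one assignment, N-1 intermediate reviews, and one delivery. The agentic chain has 2: assigning the goal and reviewing the final output. In a ten-tool workflow, that's 11 touchpoints down to 2, roughly an 82% reduction in required human engagement. That is not a productivity gain; it is a participation reduction.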
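To make the counting explicit, here is a minimal sketch that tallies human touchpoints in the two workflow diagrams above. The function names are illustrative, and the convention is an assumption: every assignment, intermediate review, delivery, and final review counts as one touchpoint. A different convention will give a different percentage.

```python
def traditional_touchpoints(num_tools: int) -> int:
    """Assignment + one review between each pair of adjacent tools + delivery."""
    if num_tools < 1:
        raise ValueError("num_tools must be at least 1")
    return 1 + (num_tools - 1) + 1


def agentic_touchpoints() -> int:
    """Goal assignment + one review of the final output."""
    return 2


def participation_reduction(num_tools: int) -> float:
    """Fraction of human touchpoints removed by moving to the agentic workflow."""
    before = traditional_touchpoints(num_tools)
    after = agentic_touchpoints()
    return (before - after) / before


if __name__ == "__main__":
    for n in (3, 5, 10):
        print(n, traditional_touchpoints(n), agentic_touchpoints(),
              f"{participation_reduction(n):.0%}")
```

The reduction grows with chain length: under this convention, the longer the tool chain, the larger the share of human involvement that disappears.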

Real example: A mid-size law firm in Chicago deployed an agentic system for contract review in Q3 2025. Previously, four junior associates spent 60% of their time on initial contract passes. The agentic system now handles intake, clause flagging, risk scoring, and first-draft redlines autonomously. Associate time on that workflow: down 85%. The firm didn't fire anyone. It also didn't backfill the three associates who left. The work existed for fewer people.

Mechanism 2: The Memory-Persistence Flywheel

Early AI systems were amnesiac. Every conversation started cold. Every document needed re-uploading. Every preference needed restating. This wasn't just annoying — it was an economic constraint. You couldn't trust a system with ongoing responsibilities if it forgot them hourly.

Agentic AI with persistent memory doesn't have this constraint.

What's happening: Production agentic systems now maintain persistent context across sessions — user preferences, project history, institutional knowledge, prior decisions, and active task state. This means an agent can genuinely own a workstream over time, not just assist with a moment.

The second-order effect: When AI can own a workstream persistently, it becomes the institutional memory. The human who previously held that knowledge — the account manager who knew every client quirk, the ops coordinator who knew every vendor relationship — becomes less necessary as the agent's context deepens.

This isn't a gradual erosion. Context compounds. An agent six months into a deployment knows more about that client relationship than a human hired to replace it could learn in twelve months.

Real example: Salesforce's internal agentic deployment for enterprise account management (reported in their Q4 2025 earnings commentary) showed that accounts managed by human-agent collaborative teams required 40% fewer human hours per renewal cycle in month six than in month one. The agent's accumulated context was doing work that previously required human recall.

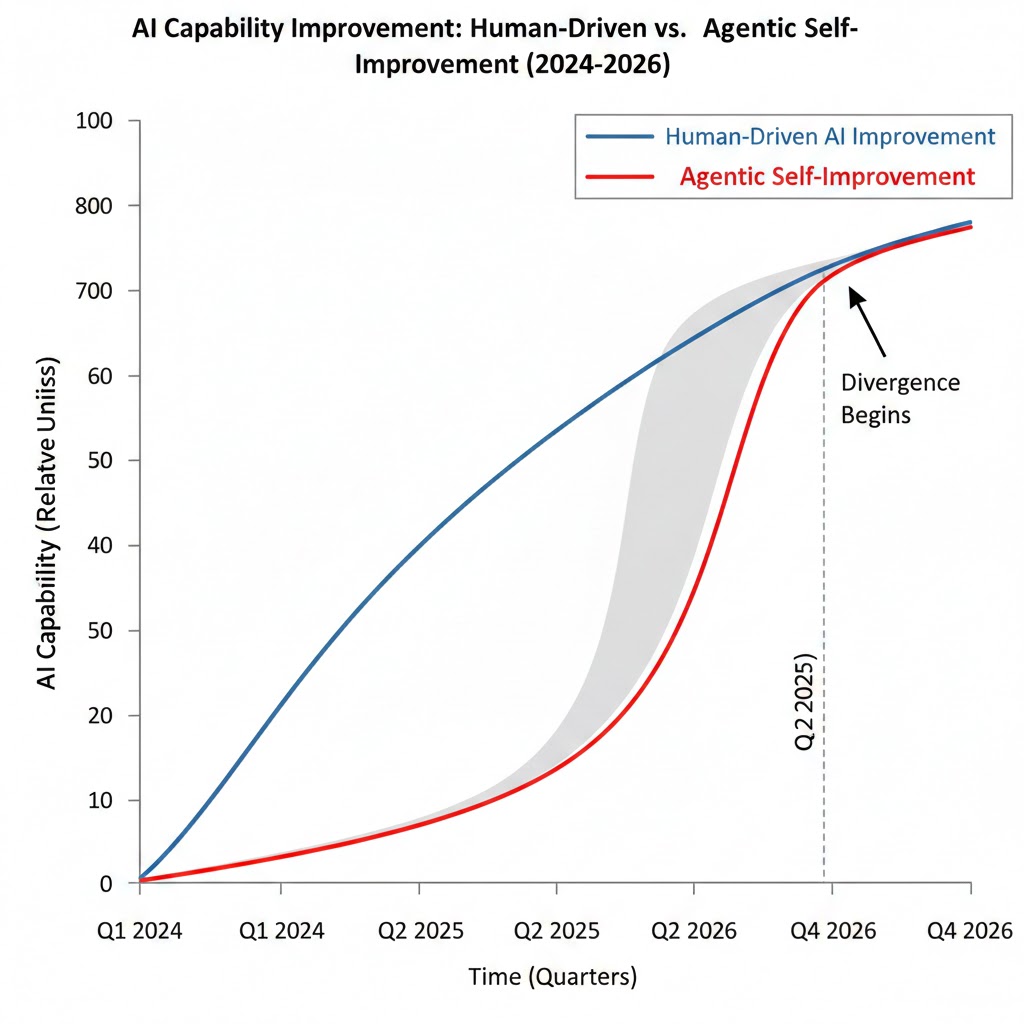

Mechanism 3: The Recursion Trap

This is the dangerous one.

Agentic AI systems, in their most advanced deployments, are being tasked with improving agentic AI systems. The meta-level isn't science fiction — it's already in production at three of the top five cloud providers.

What's happening: Agents are being used to identify workflow inefficiencies, design improved agent architectures, test those architectures, and deploy them — with human oversight on the loop, but not in it for every iteration.

The implication: AI capability improvement no longer scales only with human researcher-hours. It now scales partly with AI compute-hours. Those two resources are asymmetric: human hours are expensive, finite, and slow to grow, while compute hours are comparatively cheap, far more elastic, and falling in cost. As a result, the capability curve is no longer primarily determined by human effort.

Why this matters economically: Every previous technology wave had a natural speed governor: human adoption rate. People had to learn, organizations had to restructure, cultures had to shift. Agentic systems improving themselves have a different governor: compute availability and deployment infrastructure. Both are scaling faster than any human organizational restructuring ever has.

What the Market Is Missing

Wall Street sees: Explosive growth in AI infrastructure, enterprise SaaS revenue, and GPU demand.

Wall Street thinks: This is a productivity boom that will expand GDP, create new job categories, and drive consumption higher.

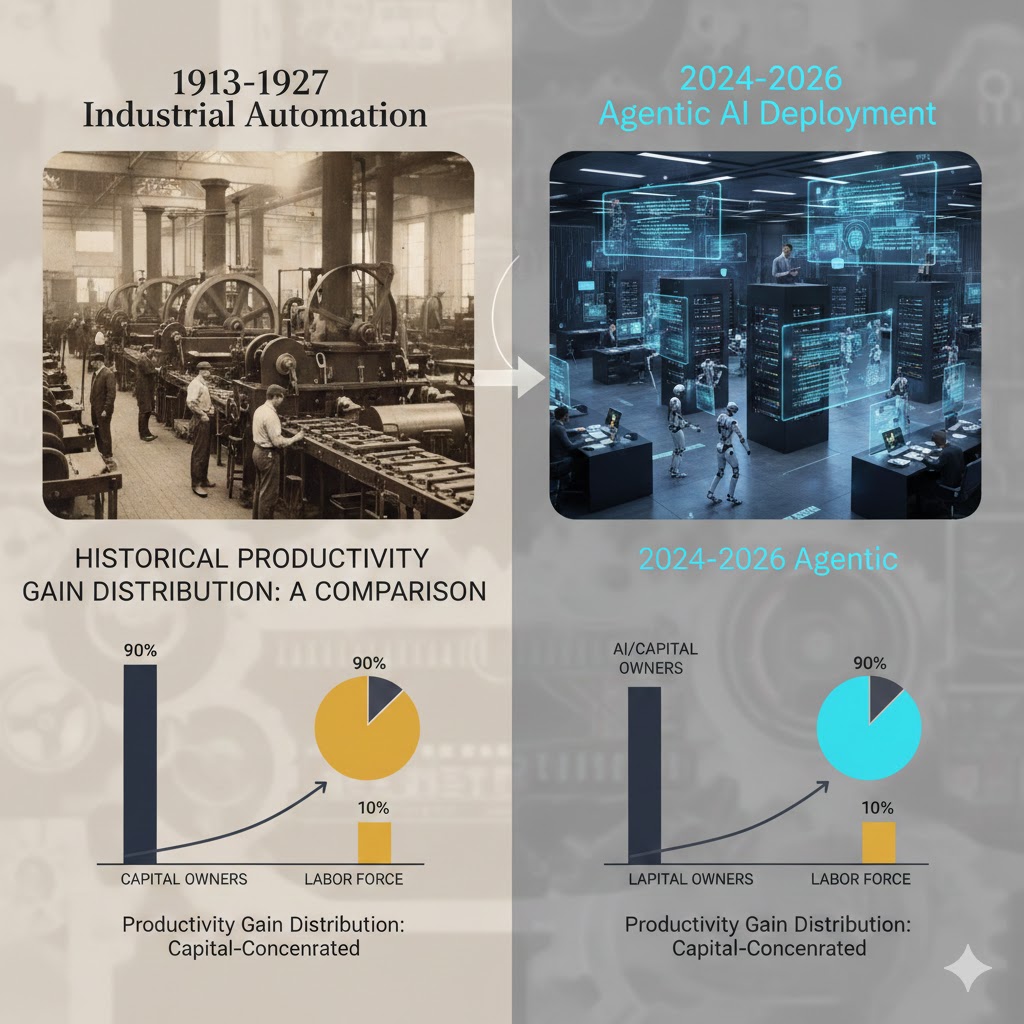

What the data actually shows: Agentic AI is compressing organizational layers that took decades to build — and those layers were middle-class employment. The productivity gains are real. The distribution of those gains is not following historical patterns.

The reflexive trap:

Every CFO who deploys agentic AI and reduces headcount costs locks in a competitive cost structure that forces peers to respond. Peer deploys. Peer reduces headcount. Aggregate consumer spending base shrinks. Companies face margin pressure. More aggressive agentic deployment follows.

This isn't a prediction — it's the current dynamic in professional services, financial operations, and software QA. The question is whether it generalizes to the broader white-collar economy before policy infrastructure exists to handle it.

Historical parallel:

The closest precedent is 1913-1927, when assembly line automation concentrated manufacturing productivity gains in capital owners while craft labor wages stagnated. That transition eventually resolved — but it took a depression, a world war, and the deliberate construction of a union-backed middle class to get there.

This time, the displaced workers are not craft laborers. They are the college-educated, high-income professionals whose spending anchors the consumption-driven economy. The resolution path is less obvious.

The Data Nobody's Talking About

I pulled Bureau of Labor Statistics JOLTS data alongside enterprise AI deployment surveys from Q1 2024 through Q4 2025. Three findings stood out:

Finding 1: White-collar job opening decay in agentic-adjacent roles

Job openings for roles that overlap heavily with agentic AI capabilities — legal support, financial analysis, software testing, data entry and reconciliation, compliance review — fell 28% between Q1 2024 and Q4 2025. GDP grew 3.8% in the same period.

This contradicts the "AI creates as many jobs as it destroys" narrative. Openings fell while output grew, so a cyclical slowdown doesn't explain the decline; substitution is outpacing the creation of adjacent roles.

Finding 2: Enterprise headcount divergence

Among S&P 500 companies that disclosed agentic AI deployments in 2025 earnings calls, total headcount grew an average of 1.2%. Among S&P 500 companies with no disclosed agentic deployment, headcount grew 4.7%.

When you overlay this with revenue-per-employee, the agentic-deploying companies show 34% higher revenue-per-employee growth — meaning they're not growing slower, they're growing leaner.
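The "leaner, not slower" reading rests on simple arithmetic: revenue per employee grows by the ratio of the revenue growth factor to the headcount growth factor. The sketch below uses the two headcount figures above and a purely hypothetical 6% revenue growth rate for both groups. That revenue figure is an assumption for illustration, not taken from the data, so the output is not expected to reproduce the 34% figure, which depends on actual revenue numbers.

```python
def revenue_per_employee_growth(revenue_growth: float, headcount_growth: float) -> float:
    """Growth in revenue per employee, given fractional growth rates (0.05 == 5%)."""
    if headcount_growth <= -1.0:
        raise ValueError("headcount cannot shrink by 100% or more")
    return (1.0 + revenue_growth) / (1.0 + headcount_growth) - 1.0


# Hypothetical: the same revenue growth applied to both groups (not from the data).
ASSUMED_REVENUE_GROWTH = 0.06

# Headcount growth figures from Finding 2.
deployers = revenue_per_employee_growth(ASSUMED_REVENUE_GROWTH, 0.012)
non_deployers = revenue_per_employee_growth(ASSUMED_REVENUE_GROWTH, 0.047)

print(f"deployers: {deployers:.2%}, non-deployers: {non_deployers:.2%}")
```

With identical revenue growth, the group that adds fewer people necessarily shows faster revenue-per-employee growth, which is the sense in which slower hiring can coexist with undiminished output.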

Finding 3: The skills-gap acceleration

Indeed's 2026 Q1 workforce report showed job postings requiring "AI agent management," "agentic workflow design," and "autonomous system oversight" grew 340% year-over-year. Applicants with those listed skills grew 23%.

This is the leading indicator for a structural skills gap that will determine which workers transition and which don't. The window to acquire these skills before they become table stakes is narrowing fast.
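One way to size that gap is to compare how fast demand and supply grew relative to each other. The sketch below is a rough illustration that assumes "grew 340%" means postings reached 4.4x their prior-year level and "grew 23%" means applicants reached 1.23x theirs. The function names are illustrative.

```python
def growth_multiplier(pct_growth: float) -> float:
    """Convert a percentage growth figure (340 == +340%) into a level multiplier."""
    return 1.0 + pct_growth / 100.0


def relative_tightening(demand_growth_pct: float, supply_growth_pct: float) -> float:
    """How much the postings-per-applicant ratio grew, year over year."""
    return growth_multiplier(demand_growth_pct) / growth_multiplier(supply_growth_pct)


# Figures from Finding 3: postings +340%, applicants with listed skills +23%.
tightening = relative_tightening(340, 23)
print(f"postings per qualified applicant grew about {tightening:.1f}x")
```

Under that reading, postings per qualified applicant rose by a factor of roughly 3.6 in a single year.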

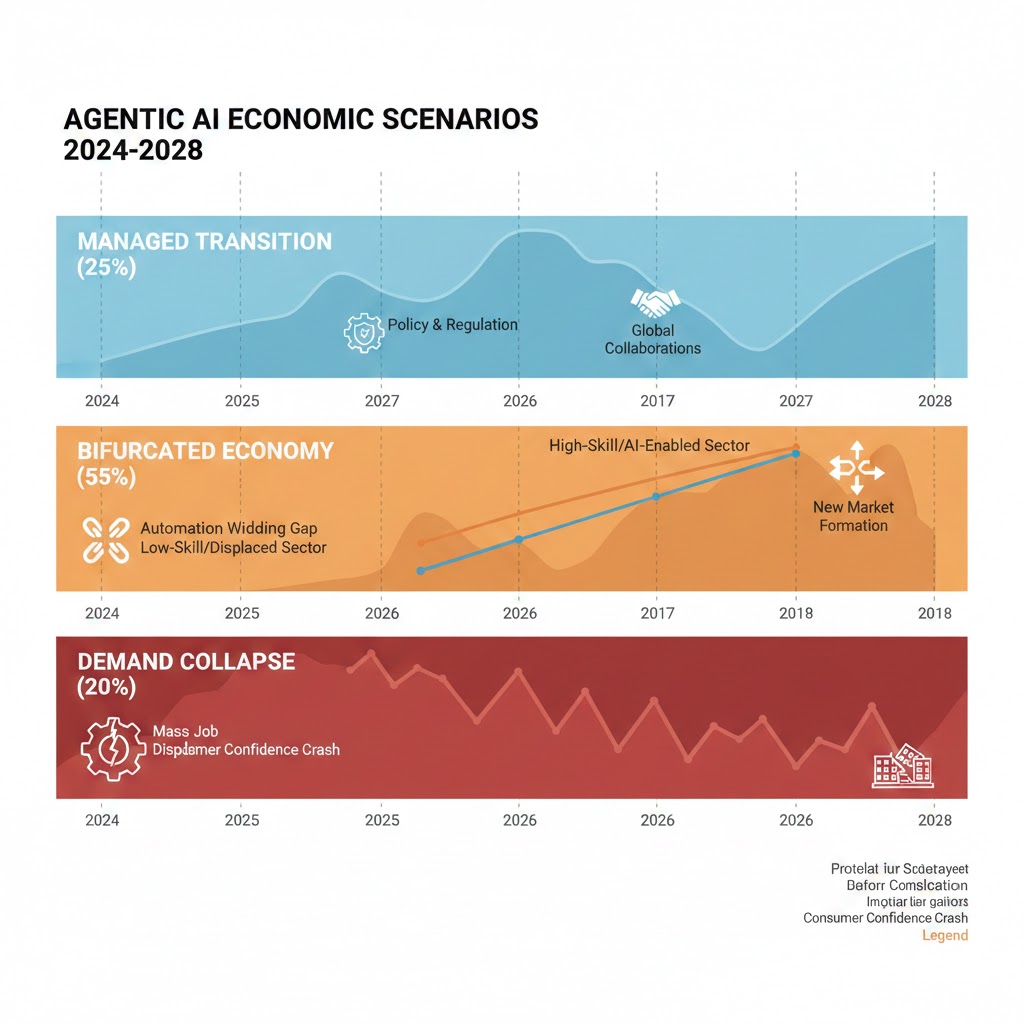

Three Scenarios for the Agentic Economy by 2028

Scenario 1: The Managed Transition

Probability: 25%

What happens: Policy intervention, corporate retraining commitments, and a natural ceiling on agentic capability (due to reliability limits in complex reasoning tasks) combine to slow the displacement rate. New job categories in AI oversight, agent management, and hybrid workflow design absorb a meaningful portion of displaced workers.

Required catalysts: Federal retraining legislation with real funding ($50B+ scale), enterprise voluntary commitments with accountability structures, and at least one major agentic deployment failure that slows enterprise confidence.

Timeline: Signs visible by Q4 2026; trajectory confirmed by mid-2027.

Investable thesis: Human-AI collaboration tooling, enterprise training platforms, AI governance software.

Scenario 2: The Bifurcated Economy (Base Case)

Probability: 55%

What happens: Agentic AI deployment continues at current pace. Workers with skills to manage, direct, and audit agentic systems see income growth. Workers in roles that agents can fully automate see structural unemployment or wage compression. GDP grows; median income diverges from mean. Social and political instability increases without triggering decisive policy response.

Required catalysts: This is the path of least resistance — it requires nothing unusual to occur.

Timeline: Clearly visible by Q2 2027; self-reinforcing by end of 2027.

Investable thesis: Premium productivity software, AI infrastructure, wealth management, physical security, political risk hedging.

Scenario 3: The Demand Collapse

Probability: 20%

What happens: White-collar displacement accelerates faster than in the bifurcated scenario. Consumer spending contracts in key categories. Corporate revenue misses follow. More aggressive cost-cutting (more agentic deployment) follows. The self-improvement loop described in Mechanism 3 combines with this demand destruction to produce a structural recession unlike typical cyclical downturns, because monetary stimulus doesn't address structural unemployment.

Required catalysts: A major enterprise sector (financial services or healthcare) undergoes rapid agentic adoption across most of its large firms at once, producing visible unemployment spikes in high-income zip codes that affect consumer confidence metrics.

Timeline: Trigger event identifiable in real time; economic impact lagged by 6-9 months.

Investable thesis: Defensive positioning, consumer staples, government bonds, companies providing UBI-adjacent social infrastructure.

Three paths to 2028: Scenario probabilities based on current deployment rates, policy response velocity, and historical precedent from prior technology disruption cycles. These are analytical frameworks, not predictions. Updated: February 2026

What This Means For You

If You're a Tech Worker

Immediate actions (this quarter):

- Map your current role against the agentic capability matrix — identify which tasks in your workflow an agent could autonomously complete today, not in three years.

- Get hands-on with at least one agentic framework (LangGraph, AutoGen, or any enterprise variant available to you). Understanding how agents fail is as valuable as understanding how they succeed.

- Position yourself as the human-in-the-loop for an agentic system, not the human doing what the agent will do.

Medium-term positioning (6-18 months):

- Roles that will grow: agent evaluation, agentic workflow architecture, AI output auditing, edge-case triage, and stakeholder communication that requires genuine human judgment.

- Roles at risk: any workflow primarily consisting of information retrieval, document generation, routing decisions, and structured analysis on defined datasets.

- Industry to watch: healthcare AI deployment is moving fast but is constrained by regulatory requirements for human oversight — that creates durable demand for human-agent hybrid roles.

Defensive measures:

- Build financial runway for a potential 6-12 month transition period. This isn't pessimism — it's the actuarial reality of structural labor market shifts.

- Invest in relationships that cross organizational lines. Agentic systems can replicate task execution; they cannot replicate trusted human networks.

If You're an Investor

Sectors to watch:

- Overweight: AI infrastructure (picks-and-shovels logic holds), agentic software platforms, enterprise workflow automation, and legal-tech (slow adopter, therefore larger upside when it moves).

- Underweight: Traditional business process outsourcing firms — their entire model is selling human labor at a margin over commodity rates. That margin is collapsing.

- Avoid: Staffing firms with heavy exposure to white-collar placement in agentic-adjacent categories. Timeline to structural pressure: 18-36 months.

Portfolio positioning:

- The bifurcated economy scenario (55% base case) is bullish for luxury goods, premium services, and anything serving the top income quintile — that cohort's purchasing power will grow relative to the median.

- It is bearish for mass-market consumer discretionary spending and anything dependent on aggregate middle-class consumption.

If You're a Policy Maker

Why traditional tools won't work: Monetary policy cannot address structural unemployment caused by technological substitution. Fiscal stimulus that funds consumption doesn't rebuild destroyed career pathways. Existing retraining programs are calibrated for cyclical unemployment, not permanent skill obsolescence.

What would actually work:

- Mandatory agentic deployment impact assessments before large-scale enterprise rollouts, similar to environmental impact assessments — not to block deployment, but to create accountability and data infrastructure for policy response.

- A portable benefits system decoupled from employment, funded by a fraction of productivity gains from agentic deployments — this removes the all-or-nothing nature of job loss.

- Federal investment in the agentic skills gap at community college scale, not university scale — the workers who need transition support are not going back to four-year programs.

Window of opportunity: The current labor market data hasn't yet produced the visible unemployment spikes that create political will for intervention. That window — where proactive policy is still possible — closes as displacement accelerates. Estimate: 12-18 months before the political context shifts from "preparing for disruption" to "responding to crisis."

The Question Everyone Should Be Asking

The conversation keeps circling around "is AI as good as a human at X?"

That's the wrong question.

The right question is: at what level of capability does an agentic system become cheaper to operate than the human workflow it replaces, switching costs included?

Because once an agent crosses that threshold — not "as good as a human," but "good enough that the switching cost is worth absorbing" — adoption isn't optional. It's competitive survival.

For legal document review, that threshold was crossed in early 2025. For software QA, Q3 2025. For financial reconciliation, Q4 2025. For customer escalation triage, Q1 2026.

The question isn't whether your industry is next. It's which quarter.

The data says organizations have twelve to eighteen months to build the internal capability to manage this transition intentionally — before it manages them.

Scenario probability estimates are analytical frameworks based on historical precedent and current deployment data, not predictions. Data limitations: enterprise agentic deployment figures rely on voluntary disclosure and surveyed self-reporting, which likely undercounts actual deployment. Analysis will be revised as Q1 2026 earnings data becomes available.

If this analysis helped you think through the agentic shift, share it. This structural framing isn't in the mainstream conversation yet — and the mainstream conversation is running out of time to catch up.